SEO Risks: A Business Owner’s Guide to Protecting Search Visibility

Search engine optimisation involves calculated decisions at every turn. Some carry real risk; others are simply misunderstood. The businesses that protect their search visibility long-term are not the ones that avoid all risk; they are the ones that understand which risks are worth taking, which ones carry hidden consequences, and which ones look safe on the surface but can quietly erode years of ranking authority.

This guide is written for marketing managers and business owners in the UK and Ireland who want a clear, practical framework for managing SEO risk. It covers the main categories of risk, the tactics most likely to trigger penalties or traffic drops, and the steps you can take to audit your own exposure before problems surface.

What Are SEO Risks and Why Do They Matter for UK Businesses?

SEO risk is not just about Google penalties, though penalties are the most visible outcome. In practice, SEO risk encompasses any action or inaction that could result in reduced organic visibility, traffic loss, or damage to your site’s long-term authority.

For businesses operating in the UK and Ireland, the stakes are particularly concrete. Organic search is often the primary acquisition channel for SMEs without large paid media budgets. A significant ranking drop, a failed site migration, or a manual action from Google can take months to recover from, and the revenue impact during that window is real.

There are three distinct categories of SEO risk that every business should understand:

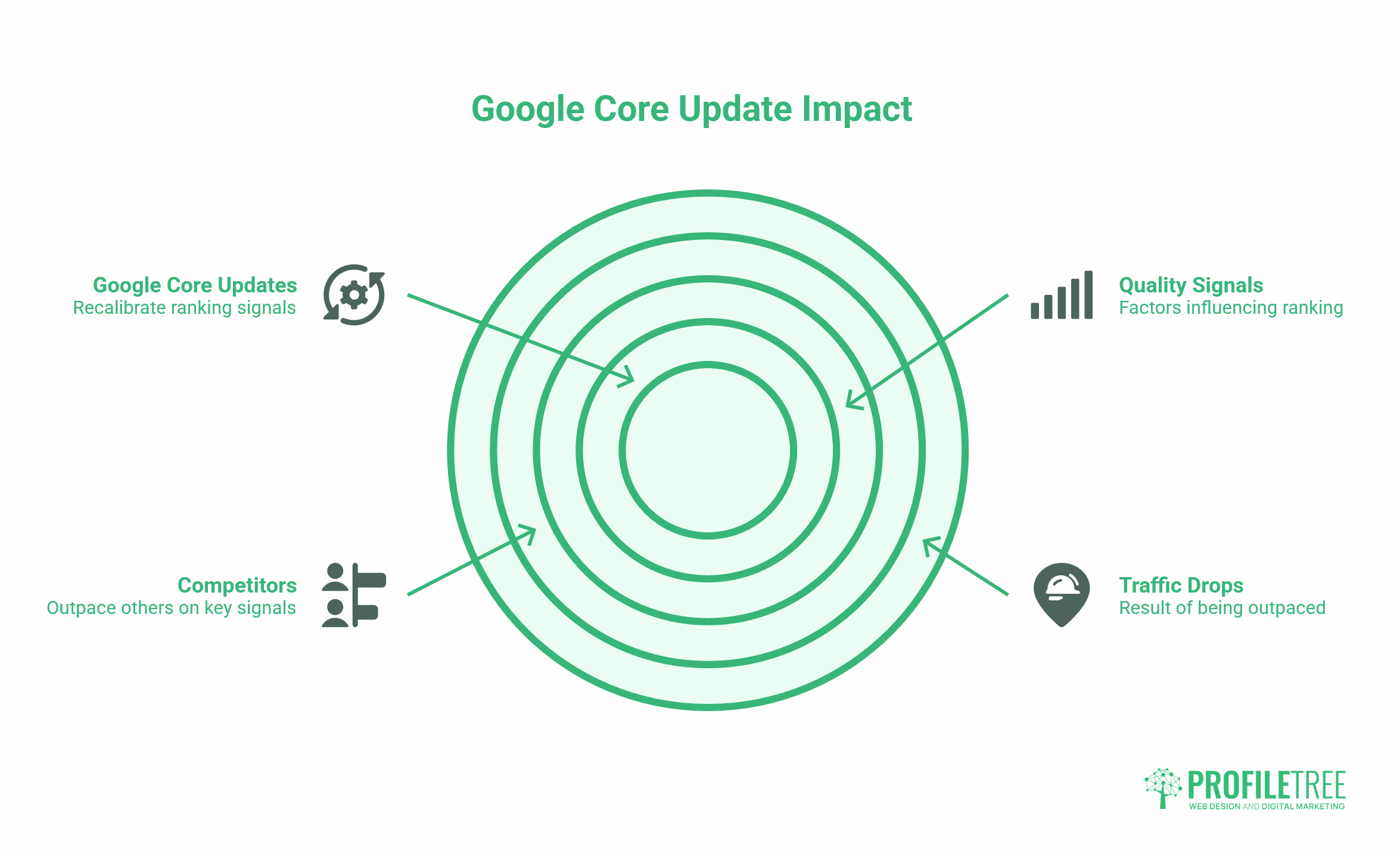

Algorithmic risk covers the exposure created by Google’s core updates. These updates do not target individual sites; they recalibrate how Google weights different quality signals across the entire index. Sites that rely too heavily on thin content, use excessive on-page keyword repetition, or include low-authority links often see significant drops following core updates. The December 2025 and February 2026 core updates specifically targeted lightly edited AI content, self-promotional structures, and sites without clear topical authority.

Technical risk covers the structural and infrastructure decisions that can disrupt how search engines crawl and index your content. Site migrations, CMS changes, redirect chains, and improper use of noindex tags are all common sources of technical risk. A single misconfigured robots.txt file can inadvertently block Googlebot from crawling your entire site.
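To show how little it takes, the sketch below uses Python's standard-library `urllib.robotparser` to test a handful of key paths against two robots.txt files. The paths and both files are hypothetical examples. The standard-library parser implements the original robots.txt rules, not every Google extension (it ignores `*` wildcards inside paths, for instance), so treat it as a quick sanity check rather than a replacement for Search Console's robots.txt report.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt: str, paths: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the paths that these robots.txt rules block for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]


# Hypothetical robots.txt where a stray "Disallow: /" blocks the whole site.
MISCONFIGURED = """\
User-agent: *
Disallow: /
"""

# Hypothetical intended version: only internal search results are blocked.
INTENDED = """\
User-agent: *
Disallow: /search/
"""

# Hypothetical pages the business needs crawled.
KEY_PAGES = ["/", "/services/seo/", "/blog/seo-risks/"]

print(blocked_paths(MISCONFIGURED, KEY_PAGES))  # every key page is blocked
print(blocked_paths(INTENDED, KEY_PAGES))       # no key page is blocked
```

Running a check like this against the live robots.txt after every deployment catches the "one stray line" failure before Googlebot does.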

Commercial and vendor risk is the category most often overlooked by business owners. Hiring the wrong SEO provider, relying on tactics that deliver short-term gains at the cost of long-term stability, or simply doing nothing while competitors build authority are all forms of commercial risk. The cost of inaction is real: every month a competitor publishes high-quality content and earns links, the gap you need to close grows wider.

Ciaran Connolly, founder of Belfast digital agency ProfileTree, puts it plainly: “Most businesses I speak to think SEO risk means getting penalised. In reality, the bigger risk for most UK SMEs is simply not building the kind of sustained authority that makes organic traffic predictable, and then wondering why they’re invisible to their ideal customers.”

The Main Types of SEO Risks: A Framework for Assessment

Understanding the main types of SEO risks helps you prioritise where to focus your audit efforts. The risks below are not equally likely or equally severe, but each one has caused measurable damage to real sites.

Technical and Infrastructure Risks

Site migrations and URL changes are among the most common sources of catastrophic traffic loss. When a business rebrands, moves to a new CMS, or restructures its URL hierarchy without a thorough redirect strategy, the link equity built up over years can evaporate. Google has stated that 301 redirects do not lose PageRank, but that only holds when they are implemented correctly. Chains of multiple redirects slow crawling and add a point of failure at each hop, and missing redirects create 404 errors that discard the page's accumulated authority entirely.
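Chains tend to creep in when a redirect map is extended over several rebrands. A small script like the following, assuming the map is held as a Python dictionary of old path to new path (a hypothetical format), points every old URL straight at its final destination and stops on loops. The 10-hop default mirrors the limit Google's crawling documentation gives for Googlebot, but a well-maintained map should need only one hop.

```python
def flatten_redirects(redirects: dict[str, str], max_hops: int = 10) -> dict[str, str]:
    """Map every source path directly to its final destination.

    Raises ValueError if a redirect loops or the chain exceeds max_hops.
    """
    flattened = {}
    for source, target in redirects.items():
        seen = {source}
        hops = 1
        # Keep following while the current target is itself redirected.
        while target in redirects:
            if target in seen or hops >= max_hops:
                raise ValueError(f"redirect loop or overlong chain starting at {source}")
            seen.add(target)
            target = redirects[target]
            hops += 1
        flattened[source] = target
    return flattened


# Hypothetical map built up over two rebrands: /old-services takes three hops.
redirect_map = {
    "/old-services": "/services",
    "/services": "/what-we-do",
    "/what-we-do": "/services/seo/",
}
print(flatten_redirects(redirect_map))
# {'/old-services': '/services/seo/', '/services': '/services/seo/', '/what-we-do': '/services/seo/'}
```

The flattened map can then replace the chained rules in your server or CMS configuration, so each old URL resolves in a single hop.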

Before any migration, every URL on the current site should be mapped to its destination. The redirect implementation should be tested in a staging environment before it goes live. Post-launch, Google Search Console should be monitored daily for crawl errors, indexing gaps, and ranking changes.
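The mapping step can be checked mechanically before launch. This sketch assumes three hypothetical inputs: a crawl of the current site's URLs, the proposed redirect map, and the list of URLs that will exist on the new site. It reports old URLs that would simply disappear and redirects that point at pages which will not exist.

```python
def audit_redirect_map(
    old_urls: set[str], redirect_map: dict[str, str], new_urls: set[str]
) -> tuple[list[str], list[tuple[str, str]]]:
    """Return (unmapped, broken) for a proposed migration.

    unmapped: old URLs that neither survive on the new site nor redirect anywhere.
    broken:   redirects whose destination will not exist on the new site.
    """
    unmapped = sorted(u for u in old_urls if u not in redirect_map and u not in new_urls)
    broken = sorted((src, dst) for src, dst in redirect_map.items() if dst not in new_urls)
    return unmapped, broken


# Hypothetical inventories for a small site moving to a new CMS.
old_site = {"/", "/about-us", "/services/web-design", "/blog/seo-tips"}
new_site = {"/", "/about", "/services/website-design"}
proposed = {
    "/about-us": "/about",
    "/services/web-design": "/services/web-design-belfast",  # destination will not exist
}
unmapped, broken = audit_redirect_map(old_site, proposed, new_site)
print(unmapped)  # ['/blog/seo-tips']
print(broken)    # [('/services/web-design', '/services/web-design-belfast')]
```

Both lists should be empty before the migration goes live; anything left in either one becomes a 404 on launch day.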

Improper use of noindex tags is a subtler but surprisingly common problem. Noindex directives are useful when applied to genuinely low-value pages such as thin category pages, internal search results, and staging environments. Applied incorrectly, they can accidentally exclude key service pages or blog content from the index. If you use a WordPress plugin to manage this, check that no broad rule is applying noindex to content you intend to rank.
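One way to catch accidental noindex rules is to test the pages you expect to rank. The sketch below, built on Python's standard-library `html.parser`, looks for `noindex` (or `none`, which Google treats as `noindex, nofollow`) in robots meta tags and in the `X-Robots-Tag` response header. Fetching the HTML and headers is left out, and the check does not handle header values scoped to a named crawler, such as `googlebot: noindex`.

```python
from html.parser import HTMLParser

BLOCKING_DIRECTIVES = {"noindex", "none"}  # "none" is shorthand for noindex, nofollow


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: value or "" for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            content = attributes.get("content", "")
            self.directives.extend(d.strip().lower() for d in content.split(","))


def is_noindexed(html: str, headers: dict[str, str]) -> bool:
    """True if the page's meta tags or X-Robots-Tag header would keep it out of the index."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    # Header names are case-insensitive, so match X-Robots-Tag in any casing.
    header_value = next((v for k, v in headers.items() if k.lower() == "x-robots-tag"), "")
    header_directives = [d.strip().lower() for d in header_value.split(",")]
    return bool(BLOCKING_DIRECTIVES & set(parser.directives + header_directives))


print(is_noindexed('<meta name="robots" content="noindex, follow">', {}))   # True
print(is_noindexed("<p>Our SEO services</p>", {"X-Robots-Tag": "noindex"}))  # True
print(is_noindexed('<meta name="robots" content="index, follow">', {}))      # False
```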

Crawl budget mismanagement disproportionately affects larger sites. If Googlebot is wasting its crawl allowance on low-value pages, parameter URLs, session IDs, or duplicate content, it may not reach your most important pages as frequently as it should. A well-configured XML sitemap, a clean robots.txt, and logical internal linking all help direct crawl budget towards priority content.
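As a rough illustration of keeping parameter URLs out of the signals you control, the sketch below builds a minimal sitemap in the sitemaps.org format from a list of URLs and skips any URL that carries a query string. The URL list is hypothetical; in practice it would come from your CMS, and many CMS platforms and SEO plugins generate sitemaps for you.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls: list[str]) -> str:
    """Return a minimal XML sitemap, leaving out URLs with query strings."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in sorted(set(urls)):
        # Tracking parameters, filters and session IDs passed in the query
        # string create near-duplicate URLs, so they are not listed.
        if urlsplit(url).query:
            continue
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")


print(build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/services/seo/",
    "https://www.example.com/services/seo/?sessionid=abc123",
]))
```

Listing only clean, canonical URLs in the sitemap gives Googlebot an unambiguous list of the pages you want crawled first.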

Content and Algorithmic Risks

Over-reliance on AI-generated content has become one of the most significant content risks in 2025 and 2026. Since March 2024, Google has folded its helpful content system into its core ranking systems, and those systems can use site-wide signals, meaning that a high proportion of thin or unhelpful AI content can suppress the rankings of even your strongest pages. This does not mean AI tools have no place in content production; they can accelerate research and drafting, but content published without genuine expertise, editorial judgement, and real-world examples will not sustain rankings.

Keyword cannibalisation happens when multiple pages on your site compete for the same search intent. Instead of one strong page ranking, you have two or three weaker ones splitting signals. This is a common consequence of rapid content scaling without a clear topical map. The fix is usually consolidation: redirecting weaker variants into a single authoritative page, then building that page out properly.
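Cannibalisation usually shows up in query-level data. The sketch below assumes rows of (query, page, impressions), the shape you get from the Search Console API when you group results by both query and page; the example rows and the 20% share threshold are hypothetical. It flags any query where two or more pages each take a meaningful share of impressions, which makes those pages candidates for consolidation.

```python
from collections import defaultdict


def find_cannibalisation(
    rows: list[tuple[str, str, int]], min_share: float = 0.2
) -> dict[str, list[str]]:
    """Flag queries where two or more pages each earn at least min_share of impressions."""
    by_query: dict[str, dict[str, int]] = defaultdict(dict)
    for query, page, impressions in rows:
        by_query[query][page] = by_query[query].get(page, 0) + impressions
    flagged = {}
    for query, pages in by_query.items():
        total = sum(pages.values())
        if total == 0:
            continue
        competing = sorted(p for p, n in pages.items() if n / total >= min_share)
        if len(competing) >= 2:
            flagged[query] = competing
    return flagged


# Hypothetical (query, page, impressions) rows.
rows = [
    ("seo audit belfast", "/services/seo-audit/", 420),
    ("seo audit belfast", "/blog/what-is-an-seo-audit/", 310),
    ("seo audit belfast", "/about/", 15),
    ("web design belfast", "/services/web-design/", 900),
    ("web design belfast", "/blog/web-design-trends/", 40),
]
print(find_cannibalisation(rows))
# {'seo audit belfast': ['/blog/what-is-an-seo-audit/', '/services/seo-audit/']}
```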

Ignoring algorithm updates is a risk in itself. Google publishes guidance on major core updates through Search Central, and the SEO industry documents which sites gain and lose after each update. A site that does not monitor its Search Console performance after major updates cannot identify whether it has been affected, let alone respond. Set up automated alerts for significant traffic drops and review Search Console after every named core update.
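An automated alert can be as simple as comparing recent clicks against a baseline. The sketch below assumes a list of daily click totals (for example, exported from Search Console's Performance report); the 7-day window, 28-day baseline and 30% threshold are illustrative choices, not recommended values. Sending the alert by email or chat is left out.

```python
from statistics import mean


def traffic_drop_alert(daily_clicks: list[int], threshold: float = 0.3) -> bool:
    """True if the last 7 days averaged more than `threshold` below the previous 28 days."""
    if len(daily_clicks) < 35:
        raise ValueError("need at least 35 days of click data")
    recent = mean(daily_clicks[-7:])
    baseline = mean(daily_clicks[-35:-7])
    if baseline == 0:
        return False  # nothing to drop from
    return (baseline - recent) / baseline > threshold


# Hypothetical data: a steady 100 clicks a day, then a week at 60.
history = [100] * 28 + [60] * 7
print(traffic_drop_alert(history))  # True: a 40% drop against the baseline
```

Comparing whole weeks rather than single days keeps normal weekday and weekend swings from triggering false alarms.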

Black Hat and Link-Building Risks

Black hat SEO tactics are techniques designed to manipulate rankings rather than genuinely earn them. The most common include buying backlinks, participating in link exchange schemes, keyword stuffing, cloaking, and doorway pages. These tactics may produce short-term ranking gains, but they carry serious long-term consequences.

Buying backlinks is the most widely discussed black hat risk, and for good reason. Google’s spam policies are explicit: paid links intended to manipulate PageRank are a violation. When detected, whether algorithmically or through manual review, the result can be a significant ranking penalty or a manual action requiring a formal reconsideration request to resolve.

Toxic backlink profiles can also accumulate passively. If your site has been the target of negative SEO, where a competitor or bad actor builds spammy links pointing at your domain, you may find low-quality links appearing in your profile without having purchased them. Regular backlink audits using tools such as Google Search Console’s Links report, combined with the disavow tool for genuinely harmful links, are the standard response. As Ciaran Connolly notes, “The disavow tool should be used cautiously. Disavowing links indiscriminately can do more harm than good; only clearly toxic links from irrelevant, spammy sources warrant disavowal.”
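If a manual review does confirm links worth disavowing, Google's disavow tool accepts a plain text file with one URL or `domain:` entry per line and `#` for comments. The sketch below only formats a list you have already vetted by hand. The domain, URL and date are hypothetical, and deciding what belongs on the list remains the cautious judgement call described above.

```python
from datetime import date


def build_disavow_file(domains: set[str], urls: set[str], reviewed_on: date) -> str:
    """Format manually vetted domains and URLs as a disavow file."""
    lines = [f"# Links reviewed manually on {reviewed_on.isoformat()}"]
    # "domain:" entries cover every link from that domain; bare URLs cover one page.
    lines += [f"domain:{d}" for d in sorted({d.strip().lower() for d in domains})]
    lines += sorted(urls)
    return "\n".join(lines) + "\n"


print(build_disavow_file(
    domains={"Spammy-Directory.example"},
    urls={"https://blog.example.net/paid-post/"},
    reviewed_on=date(2026, 1, 15),
))
```

Keeping the review date in a comment makes it easier to see, at the next audit, which entries were added when.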

Aggressive anchor text patterns, where a high proportion of inbound links use exact-match commercial anchor text, can also trigger algorithmic scrutiny. Natural link profiles include a mix of branded, URL, and descriptive anchors. A site where 70% of inbound links say “best SEO agency Belfast” is not building a natural profile.
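To see where your own profile sits, the anchors in a backlink export can be bucketed into the categories above. In this sketch the brand terms, the commercial phrase and the sample anchors are all hypothetical. The classification is deliberately crude (simple lower-case matching), so treat the shares as a prompt for manual review rather than a verdict.

```python
from collections import Counter


def anchor_profile(
    anchors: list[str], brand_terms: tuple[str, ...], money_phrases: set[str]
) -> dict[str, float]:
    """Share of anchors that are branded, naked URLs, exact-match commercial, or other."""
    counts = Counter()
    for anchor in anchors:
        text = anchor.strip().lower()
        if text.startswith(("http://", "https://", "www.")):
            counts["url"] += 1
        elif any(term in text for term in brand_terms):
            counts["branded"] += 1
        elif text in money_phrases:
            counts["exact_match"] += 1
        else:
            counts["other"] += 1
    total = len(anchors)
    if total == 0:
        return {}
    return {k: counts[k] / total for k in ("branded", "url", "exact_match", "other")}


# Hypothetical brand term and commercial phrase; both must be lower case.
profile = anchor_profile(
    ["Acme Digital", "https://acme.example", "best SEO agency Belfast",
     "best SEO agency Belfast", "this guide"],
    brand_terms=("acme",),
    money_phrases={"best seo agency belfast"},
)
print(profile)  # {'branded': 0.2, 'url': 0.2, 'exact_match': 0.4, 'other': 0.2}
```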

Commercial and Vendor Risks

The risk of hiring a low-cost SEO provider deserves direct treatment. The SEO industry has no formal qualifications required for entry, which means the quality range between providers is enormous. A provider who charges very little and promises rapid results is typically using one of three approaches: automating low-quality link building, churning out thin AI content at scale, or doing very little at all and waiting for the contract to expire.

The consequences of poor SEO work are not always immediate. Legacy toxic links can accumulate over months. Thin content pages may rank briefly before being caught in the next core update. By the time the damage is visible, the provider is often long gone, leaving the business to pay a reputable agency to clean up the mess.

When evaluating any SEO provider, ask for specific examples of organic growth they have delivered for comparable businesses, check their own organic presence, and request clarity on exactly what link-building activities they plan to undertake on your behalf.

The risk of inaction is the one most often absent from competitor content on this topic, but it is arguably the most significant commercial risk for UK SMEs. Every month that passes without a structured content programme, without consistent technical maintenance, and without a link-building strategy is a month in which competitors are compounding their authority. Organic search does not stand still. The gap between an active, well-maintained SEO programme and a neglected one widens consistently over time.

The SEO Risk Matrix: Calculating What’s Worth Taking

Not all SEO risks are equal, and not all risks should be avoided. The goal is to distinguish between calculated risks (actions that carry some exposure but offer meaningful strategic upside) and reckless risks (where the potential downside far outweighs any plausible gain).

| Tactic | Risk Level | Potential Upside | Verdict |
|---|---|---|---|
| Aggressive PR and digital outreach for links | Medium | High-authority links from relevant publications | Worth taking |
| Publishing long-form original research | Low | Earned editorial links and topical authority | Worth taking |
| Consolidating cannibalising pages | Low-Medium | Concentrated ranking signals | Worth taking |
| Buying backlinks from link farms | Very High | Short-term ranking bump | Reckless |
| Mass AI content without editorial review | High | Short-term content volume | Reckless |
| Ignoring core update monitoring | High | None | Avoidable inaction |
| Site migration without full redirect map | Very High | None | Avoidable inaction |
| Targeting high-competition keywords too early | Medium | Long-term positioning | Calculated; start with long-tail |
| Fixing technical crawl errors | Very Low | Improved indexing and ranking | Always worth doing |

The most defensible SEO programme is one that builds assets (strong content, clean technical foundations, and genuine editorial links) while treating high-risk shortcuts as the false economy they are. Over any meaningful time horizon, businesses that take the risks marked “Worth taking” and avoid those marked “Reckless” consistently outperform those that do the reverse.

How to Conduct an SEO Risk Audit

A quarterly SEO risk audit does not require specialist tools for every check. Start with what Google provides directly through Search Console, then build from there.

Step One: Check for Manual Actions and Security Issues

Log in to Google Search Console and open the Security & Manual Actions section. A manual action means Google has identified a specific guideline violation on your site. Manual actions do not resolve themselves; you need to fix the underlying issue and then submit a reconsideration request. Check this section first, every time.

Step Two: Review Crawl Coverage and Index Health

In Search Console, open the Pages report under Indexing. Identify any pages that are excluded from the index and review the reason. Pages excluded due to noindex tags, canonical tags, or crawl errors may require attention. Pages excluded as duplicates should be checked to confirm that the correct canonical is specified.
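For pages flagged as duplicates, you can confirm which canonical each page actually declares. This sketch, again using Python's standard-library `html.parser`, finds `<link rel="canonical">` tags, resolves relative hrefs against the page URL and compares the result with the canonical you intended. The URLs are hypothetical, and the check does not cover canonicals sent in HTTP `Link` headers.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalParser(HTMLParser):
    """Collect href values from <link rel="canonical"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = {name: value or "" for name, value in attrs}
        if tag == "link" and "canonical" in attributes.get("rel", "").lower().split():
            self.hrefs.append(attributes.get("href", ""))


def check_canonical(page_url: str, html: str, expected: str) -> str:
    """Return "ok" or a short description of the canonical problem."""
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    if len(parser.hrefs) != 1:
        return f"expected one canonical tag, found {len(parser.hrefs)}"
    declared = urljoin(page_url, parser.hrefs[0])  # resolve relative hrefs
    if declared != expected:
        return f"canonical points to {declared}, expected {expected}"
    return "ok"


page = "https://www.example.com/services/seo/?ref=nav"
print(check_canonical(page, '<link rel="canonical" href="/services/seo/">',
                      "https://www.example.com/services/seo/"))  # ok
```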

Step Three: Audit Your Backlink Profile

Review your inbound links via Search Console’s Links report. Look for patterns that suggest unnatural acquisition: a sudden spike in links from irrelevant sites, excessive exact-match anchor text, or links from domains that are clearly spammy or have been penalised. For a more complete picture, third-party tools can cross-reference your profile against known spam databases.
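A sudden spike is easier to spot with a simple rule. The sketch below assumes weekly counts of newly discovered linking domains, which many third-party backlink tools can export. The counts, the four-week trailing median and the threefold trigger are illustrative assumptions. A flagged week is where to start a manual review, not proof of an attack.

```python
from statistics import median


def link_spikes(new_domains: list[int], factor: float = 3.0, window: int = 4) -> list[int]:
    """Indices of weeks where new linking domains exceed `factor` x the trailing median."""
    spikes = []
    for week in range(window, len(new_domains)):
        baseline = median(new_domains[week - window:week])
        # max(..., 1) stops a zero baseline from flagging a week with one or two new links.
        if new_domains[week] > factor * max(baseline, 1):
            spikes.append(week)
    return spikes


# Hypothetical weekly counts of newly discovered linking domains.
weekly = [5, 6, 4, 7, 5, 48, 6]
print(link_spikes(weekly))  # [5]
```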

Step Four: Check Core Web Vitals

Search Console’s Core Web Vitals report shows how your pages perform against Google’s page experience signals: Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift. Pages marked as “Poor” should be prioritised for technical improvement. For UK businesses with GDPR-compliant cookie consent implementations, be aware that consent management platforms can add meaningful page load latency if not configured correctly, a specific technical SEO risk with a regulatory dimension.
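Google publishes fixed thresholds for each metric, assessed at the 75th percentile of real-user page loads: LCP is good at 2.5 seconds or less and poor above 4 seconds, INP is good at 200 milliseconds or less and poor above 500 milliseconds, and CLS is good at 0.1 or less and poor above 0.25. The sketch below encodes those thresholds and rates a page by its worst metric, which is how Search Console groups URLs. The sample values are hypothetical.

```python
# Google's published thresholds: (good upper bound, needs-improvement upper bound).
THRESHOLDS = {
    "LCP": (2500, 4000),  # milliseconds
    "INP": (200, 500),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}
STATUSES = ["Good", "Needs improvement", "Poor"]


def rate(metric: str, p75: float) -> str:
    """Rate one metric's 75th-percentile value."""
    good, needs_improvement = THRESHOLDS[metric]
    if p75 <= good:
        return "Good"
    if p75 <= needs_improvement:
        return "Needs improvement"
    return "Poor"


def page_status(p75_values: dict[str, float]) -> str:
    """A page is rated by its worst metric."""
    return max((rate(m, v) for m, v in p75_values.items()), key=STATUSES.index)


# Hypothetical page where a consent banner pushes content down as it loads.
print(page_status({"LCP": 2300, "INP": 180, "CLS": 0.31}))  # Poor
print(page_status({"LCP": 3100, "INP": 180, "CLS": 0.05}))  # Needs improvement
```

The first example shows why a badly configured consent banner matters: fast loading and responsiveness do not rescue a page whose layout shifts too much.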

Step Five: Review Content Quality at Scale

For sites with large content archives, use a crawl tool (Screaming Frog, for example, has a free tier for up to 500 URLs) to identify thin pages, duplicate title tags, missing meta descriptions, and orphaned content. Pages under 300 words that are not intentionally brief (contact pages, for instance) should either be expanded or consolidated.
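Once a crawl is exported, the thin-page, duplicate-title and meta description checks can be scripted. The field names below (`url`, `title`, `meta_description`, `word_count`) are hypothetical and would need mapping from your crawler's export columns; the 300-word threshold and the exempt contact page follow the guidance above. Orphan detection needs internal link data and is not covered here.

```python
from collections import Counter


def content_issues(
    pages: list[dict], min_words: int = 300, exempt: tuple[str, ...] = ("/contact/",)
) -> dict[str, list[str]]:
    """Map each URL with problems to the list of issues found."""
    title_counts = Counter(p["title"].strip().lower() for p in pages)
    issues = {}
    for p in pages:
        found = []
        if p["word_count"] < min_words and p["url"] not in exempt:
            found.append("thin")
        if title_counts[p["title"].strip().lower()] > 1:
            found.append("duplicate title")
        if not p["meta_description"].strip():
            found.append("missing meta description")
        if found:
            issues[p["url"]] = found
    return issues


# Hypothetical rows from a crawl export.
crawl = [
    {"url": "/contact/", "title": "Contact Us", "meta_description": "Get in touch", "word_count": 80},
    {"url": "/blog/seo-tips/", "title": "SEO Tips", "meta_description": "", "word_count": 150},
    {"url": "/blog/seo-tips-2/", "title": "SEO Tips", "meta_description": "More tips", "word_count": 1200},
]
for url, problems in content_issues(crawl).items():
    print(url, problems)
```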

At ProfileTree, our SEO services cover all five of these audit stages as part of an ongoing programme rather than a one-off exercise. Technical issues accumulate quickly enough between audits that quarterly reviews are the minimum effective cadence for any active site.

Protecting Your Site During Algorithm Updates

Google’s core updates do not penalise individual tactics in isolation; they recalibrate the weight given to different quality signals across the entire ranking system. Sites that see significant traffic drops after a core update have usually been outpaced by competitors on the signals that update emphasised, rather than having done something specifically wrong.

The practical implication is that recovery from a core update cannot be achieved by a single fix. It requires a sustained programme of content improvement, authority building, and technical maintenance. Google’s own guidance is consistent on this point: the best way to recover from a core update impact is to make your site genuinely better.

Monitoring your performance through Search Console immediately after a named update is announced gives you a baseline. Comparing your traffic and ranking position data in the weeks before and after the update date helps identify which pages and query clusters were affected. From there, the audit process described in the previous section provides a structured way to identify what needs to improve.
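The before-and-after comparison can be done per page from daily click data. This sketch assumes a hypothetical `{page: {date: clicks}}` structure (for example, built from Search Console API results grouped by page and date) and compares equal windows either side of the announced update date. Core update rollouts often take around two weeks or longer, so the 14-day window is only a starting point; the sample data and date are invented.

```python
from datetime import date, timedelta


def pages_hit_by_update(
    daily_clicks: dict[str, dict[date, int]],
    update_day: date,
    window: int = 14,
    min_drop: float = 0.25,
) -> dict[str, float]:
    """Pages whose clicks fell by at least min_drop, comparing `window` days either side."""
    before_days = [update_day - timedelta(days=d) for d in range(1, window + 1)]
    after_days = [update_day + timedelta(days=d) for d in range(1, window + 1)]
    affected = {}
    for page, series in daily_clicks.items():
        before = sum(series.get(d, 0) for d in before_days)
        after = sum(series.get(d, 0) for d in after_days)
        if before and (before - after) / before >= min_drop:
            affected[page] = round((after - before) / before, 2)
    # Largest drops first.
    return dict(sorted(affected.items(), key=lambda item: item[1]))


# Hypothetical data: one page halves after the update, another holds steady.
update = date(2026, 3, 1)  # placeholder date, not a real update
clicks = {}
for page, before, after in [("/services/seo/", 10, 5), ("/contact/", 4, 4)]:
    series = {update - timedelta(days=d): before for d in range(1, 15)}
    series.update({update + timedelta(days=d): after for d in range(1, 15)})
    clicks[page] = series
print(pages_hit_by_update(clicks, update))  # {'/services/seo/': -0.5}
```

Grouping the flagged pages by topic or template then points to which query clusters the update affected.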

For businesses managing SEO internally, the SEO compliance and algorithm guidance published on Google Search Central is worth bookmarking and reviewing after major updates. Ranking volatility in UK search results does not always mirror what is reported for the US, which is another reason to monitor your own data rather than rely solely on industry-wide reporting.

Conclusion

SEO risk is not a reason to avoid SEO; it is a reason to approach it with a structured, evidence-based programme rather than ad hoc tactics or outsourced shortcuts. The businesses that rank consistently are those that invest in strong content, clean technical foundations, and genuine editorial authority, and that monitor their performance closely enough to catch problems before they compound.

If you are concerned about the SEO risks your site is currently exposed to, or if you are planning a site change, migration, redesign, or CMS switch that carries significant technical risk, ProfileTree’s SEO team works with businesses across Northern Ireland, Ireland, and the UK to plan and execute these changes safely. Get in touch to discuss what a structured SEO risk audit would look like for your site.

FAQs

What are the most common SEO risks for UK businesses?

The most common are site migrations without full redirect mapping, thin AI content that fails Google’s Helpful Content standards, and poor-quality backlink profiles inherited from previous providers. UK businesses also face a specific risk from GDPR-compliant cookie consent platforms, which can add page load latency and affect Core Web Vitals scores.

Can SEO damage my website?

Yes. Poor technical SEO, misconfigured redirects, and accidental noindex tags can quickly suppress rankings. Black-hat tactics, such as buying backlinks, can trigger a manual action from Google, requiring a formal reconsideration process before rankings recover. Both are correctable, but recovery typically takes three to six months.

Is hiring a cheap SEO agency a risk?

Yes, and it is one of the more significant commercial risks a business can take. Providers charging very little typically rely on low-quality automated link building or AI content that does not meet Google’s standards. The cost of cleaning up the resulting problems usually far exceeds the original savings. Ask any prospective provider for documented examples of organic growth, and explicitly ask what link-building methods they use.

What is negative SEO, and should I be concerned?

Negative SEO involves a third party building spammy links to your domain to damage your rankings. Google has become better at ignoring these patterns, and suppressing a well-established site is genuinely difficult. For most UK businesses, monitoring your backlink profile in Search Console is sufficient. If you see a sudden spike in low-quality inbound links, the disavow tool is the appropriate response.