The Role of Leadership in Driving AI Initiatives: From Vision to Scalable Success

Most organisations have launched at least one AI initiative in the past two years. Boards have approved budgets, vendors have been onboarded, and pilot projects have been celebrated in internal newsletters. Yet according to recent industry benchmarks, fewer than 15% of UK enterprises have managed to move those AI initiatives from proof of concept into full-scale production. The gap between ambition and delivery is not a technology problem. It is a leadership problem.

At ProfileTree, a Belfast-based digital agency working with businesses across Northern Ireland, Ireland, and the UK, we have supported organisations at every stage of this journey. What separates the companies that scale AI initiatives from those that stall is rarely the sophistication of their tools. It is the quality of their leadership, the clarity of their vision, and the discipline of their execution. This article sets out the strategic and operational frameworks that leaders need to plan, manage, and sustain AI initiatives that actually deliver.

Why AI Initiatives Fail Without Strong Leadership

Before an organisation can build a plan for successful AI initiatives, it needs to understand the most common reasons they fail. The patterns are remarkably consistent across sectors and company sizes, and most of them come back to decisions made, or avoided, at leadership level.

The Technology-First Trap

The most frequent error leaders make is delegating AI initiatives entirely to the IT department. When AI is treated as a technical project rather than a strategic one, it tends to solve technical problems whilst missing the business problems that actually matter. A chatbot that reduces ticket volume by 8% looks impressive in a pilot. It looks far less impressive when the underlying customer service process has not been redesigned around it.

Leaders who treat AI initiatives as a line-item expense rather than a strategic pillar consistently underinvest in the people and process changes required for scale. Technology accounts for roughly 10% of what makes an AI transformation successful. The remaining 90% is people and process.

The Shadow AI Problem

In the absence of clear leadership guidance, employees across most organisations are already using generative AI tools on personal devices, often without oversight, data governance, or ethical guardrails. This shadow AI represents a significant data sovereignty and security risk that only leadership intervention can address.

The solution is not prohibition. It is a structured “safe sandbox” approach: providing teams with sanctioned tools, clear usage policies, and ethical boundaries. Organisations that address this proactively find it becomes an accelerant for their broader AI initiatives. According to the UK government’s AI Opportunities Action Plan, published in January 2025, harnessing AI across the economy requires organisations to build the internal governance capacity to use it responsibly, not simply to restrict access.

The Cultural Immune Response

Middle management often perceives AI initiatives as a threat to their relevance. Without a deliberate intervention to reframe AI as augmentation rather than replacement, organisations experience a natural cultural rejection of new technology. People slow-walk implementations, find reasons that pilots cannot be extended, and quietly resist change.

Effective AI leadership requires visible, consistent messaging from the top that positions AI initiatives as tools that elevate human capability, not reduce headcount. Companies that manage this reframing successfully see adoption rates that are typically three to four times higher than those that do not.

Building a Strategic Vision for AI Initiatives

A strategic vision for AI initiatives is not a technology roadmap. It is a business outcomes plan that happens to use AI as its primary delivery mechanism. Leaders who start with the business question rather than the AI solution consistently achieve better results, and are far less likely to find themselves trapped in costly pilots that never reach scale.

Outcome-First Planning

The single most useful shift a leader can make is from asking “What can AI do?” to asking “What does our business need to win?” This outcome-first approach shapes the digital strategy that underpins any successful AI transformation. In the UK market, where productivity growth has remained stubbornly flat, AI initiatives that target specific bottlenecks deliver the clearest measurable returns.

A practical example: a leader at a professional services firm should not aim to “implement a large language model.” They should aim to “reduce the time spent on initial document review by 55%.” The first framing puts technology at the centre. The second puts the business outcome at the centre and makes success measurable from the outset.

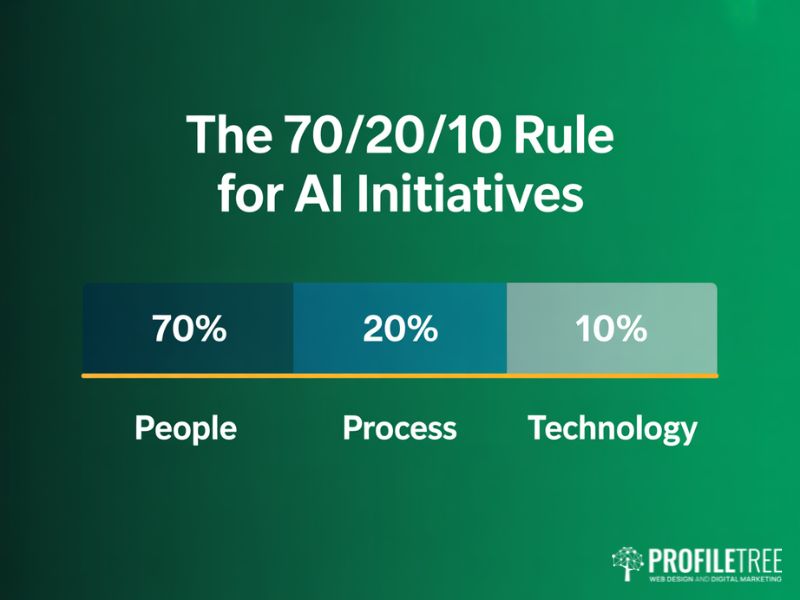

The 70/20/10 Model for AI Transformation

The organisations that scale AI initiatives most successfully follow a specific resource allocation model that their leaders enforce consistently:

70% People: Upskilling, change management, and cultural alignment. This is where the majority of leadership attention and investment must go.

20% Process: Re-engineering workflows to accommodate machine-speed data and AI-generated outputs.

10% Technology: Choosing the right models and infrastructure. This is where most organisations overspend and over-focus.

If your leadership team is spending the majority of its time on vendor selection and only a fraction on how your people will work differently, your AI initiatives are predisposed to fail regardless of the technology you choose.
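For leaders who want to sanity-check their own spending against the 70/20/10 split, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the function names, the 5-point tolerance, and the example figures are all assumptions made for the sake of the example.

```python
# Illustrative sketch of the 70/20/10 split described above.
# Function names, tolerance, and figures are hypothetical.
ALLOCATION = {"people": 0.70, "process": 0.20, "technology": 0.10}


def allocate_budget(total: float) -> dict[str, float]:
    """Split a total transformation budget according to the 70/20/10 model."""
    return {category: round(total * share, 2) for category, share in ALLOCATION.items()}


def flag_overspend(actual_spend: dict[str, float], tolerance: float = 0.05) -> list[str]:
    """Return categories whose share of actual spend exceeds the model by more than `tolerance`."""
    total = sum(actual_spend.values())
    if total == 0:
        return []
    return [
        category
        for category, share in ALLOCATION.items()
        if actual_spend.get(category, 0.0) / total > share + tolerance
    ]


if __name__ == "__main__":
    print(allocate_budget(200_000))
    # A team that has put half its spend into technology is flagged.
    print(flag_overspend({"people": 60_000, "process": 40_000, "technology": 100_000}))
```

In the second call, technology takes 50% of spend against a 10% guideline, so it is the only category flagged.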

Defining Good and Bad Leadership in an AI Context

Good and bad leadership qualities become more consequential in an AI context because the pace of change amplifies both. Good AI leadership is characterised by decisiveness grounded in data, transparency about what AI can and cannot do, and a genuine commitment to continuous learning. Bad AI leadership is characterised by delegating without accountability, chasing AI trends without clear business rationale, and treating AI governance as a compliance formality rather than a strategic tool.

ProfileTree’s Digital Strategist, Stephen McClelland, notes: “To champion AI initiatives, a leader must balance technical awareness with a deep understanding of how change affects their teams. The leaders who get this right tend to be the ones who spend as much time in conversations with their frontline staff as they do with their AI vendors.”

Setting Measurable Goals for AI Projects

Vague ambitions produce vague results. Every AI initiative should be accompanied by specific, time-bound performance metrics that connect directly to commercial outcomes. Whether the target is a reduction in processing time, an improvement in customer satisfaction scores, or an increase in revenue per employee, the metric must be agreed before the project begins, not after.

Leaders should also establish clear stage gates: criteria that a pilot must meet before it is funded for scale. This prevents the common pattern of pilots that drift from month to month without ever reaching a clear decision point.
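A stage gate can be as simple as a list of agreed criteria and a rule for turning results into a decision. The sketch below shows one possible shape, assuming hypothetical criterion names and an assumed rule that a pilot meeting every criterion is scaled, one meeting at least half is redesigned, and anything less is stopped. Each organisation would set its own thresholds.

```python
from dataclasses import dataclass


@dataclass
class GateCriterion:
    """One success criterion agreed before the pilot starts (names are hypothetical)."""
    name: str
    target: float
    actual: float
    higher_is_better: bool = True

    def passed(self) -> bool:
        if self.higher_is_better:
            return self.actual >= self.target
        return self.actual <= self.target


def stage_gate_decision(criteria: list[GateCriterion]) -> str:
    """Return 'scale', 'redesign', or 'stop' for a pilot."""
    if not criteria:
        raise ValueError("At least one criterion must be agreed before the pilot begins")
    passed = sum(c.passed() for c in criteria)
    if passed == len(criteria):
        return "scale"
    # Assumption: meeting at least half the criteria justifies a redesign rather than a stop.
    if passed * 2 >= len(criteria):
        return "redesign"
    return "stop"


pilot = [
    GateCriterion("processing_time_minutes", target=12, actual=10, higher_is_better=False),
    GateCriterion("customer_satisfaction", target=4.2, actual=4.4),
    GateCriterion("weekly_active_users", target=50, actual=35),
]
print(stage_gate_decision(pilot))  # two of three criteria met, so "redesign"
```

The value of writing the rule down, in code or otherwise, is that the decision date arrives with the decision logic already agreed.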

Governance, Ethics, and Responsible AI

Governance is the element of AI initiatives that receives the least attention until something goes wrong. Leaders who treat it as an enabler rather than a constraint tend to move faster and with greater confidence than those who treat it as a bureaucratic obligation.

The UK Regulatory Context

Unlike the prescriptive EU AI Act, the UK government has taken a pro-innovation approach to AI regulation that places the primary responsibility for governance on individual organisations. The UK AI Regulation White Paper established principles rather than mandatory rules, which means UK-based businesses have more flexibility but also more accountability for the outcomes of their AI initiatives.

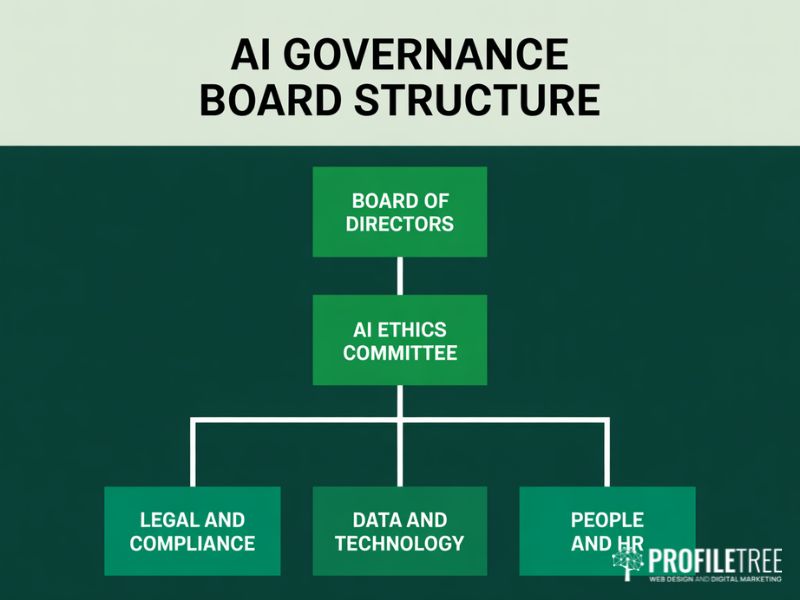

This approach puts internal governance at the centre of risk management. A well-structured AI ethics committee is not just a reputational shield. It is the mechanism through which an organisation maintains the trust of its customers, employees, and regulators as it scales its AI programmes.

Structuring Your AI Governance Board

An effective AI governance board for a mid-market UK organisation typically includes representation from legal and compliance, data and technology, HR and people management, and at least one senior business leader who can connect governance decisions to commercial priorities. The chair of the governance board should report directly to the company's board of directors.

The governance board's primary responsibilities are to define what constitutes acceptable use of AI within the organisation, to assess the risk profile of proposed AI initiatives before they are funded, and to conduct regular audits of deployed AI systems for bias, accuracy, and alignment with stated objectives.

Data Privacy and Bias Mitigation

AI initiatives are only as reliable as the data that underpins them. Leaders must ensure that data privacy protections are built into the design of every AI system from the outset, not bolted on after the fact. This means following principles of data minimisation, obtaining appropriate consent for data use, and maintaining clear records of how AI systems are trained and updated.

Bias in AI systems is an ongoing challenge that requires active management rather than a one-time fix. Routine audits of AI outputs, diverse training data, and multidisciplinary review teams are the practical mechanisms through which leaders can ensure their AI initiatives produce fair and consistent outcomes across different user groups.

Embedding AI Initiatives Across Your Organisation

Moving from a handful of AI initiatives to an AI-enabled organisation requires a deliberate approach to integration, upskilling, and cultural change. This is the phase where most transformation programmes either gain real momentum or quietly stall, and it is where the quality of day-to-day leadership matters most.

Integrating AI into Existing Workflows

AI must be adapted to fit within established processes before those processes are redesigned around AI. The practical starting point is workflow mapping: identifying the tasks within existing processes where AI can most clearly enhance decision-making, reduce processing time, or flag anomalies that would otherwise be missed.

In customer service, implementing AI chatbot tools to handle initial query triage allows human team members to focus on complex, high-value interactions. In finance, AI-driven anomaly detection reduces the time spent on manual reconciliation. In marketing and content, AI tools integrated into ProfileTree’s own content workflows have reduced the time required for initial research and structuring whilst maintaining the human editorial oversight that ensures quality and brand consistency.

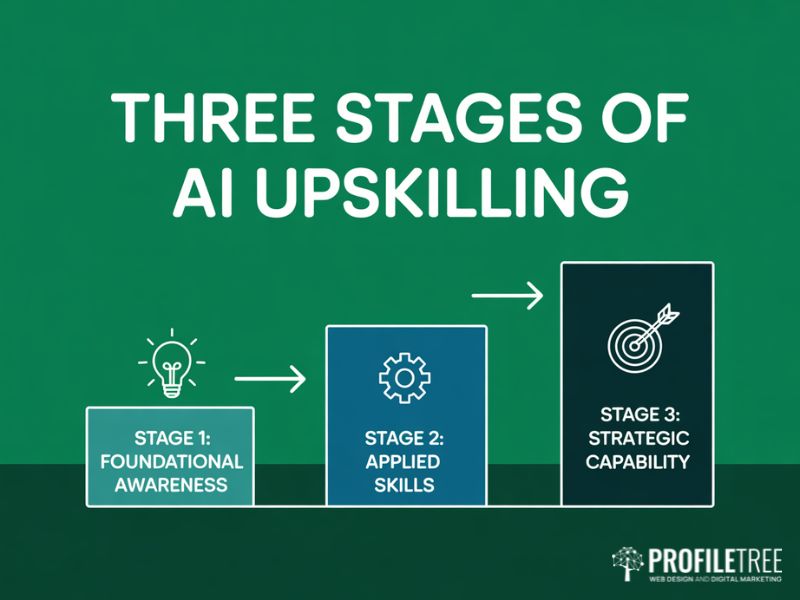

Upskilling and Training Programmes

The single most important investment a leader can make in their AI initiatives is in the people who will use, manage, and interpret AI outputs. This does not mean turning every employee into a data scientist. It means ensuring that every team member has sufficient AI literacy to work confidently alongside intelligent systems.

An effective upskilling programme for AI initiatives typically moves through three stages. The first builds foundational awareness: what AI can and cannot do, how it makes decisions, and where it tends to fail. The second develops applied skills: how to use specific AI tools within each person’s role. The third builds strategic capability: how to identify new opportunities for AI initiatives within a team’s domain and how to assess their viability.

ProfileTree delivers AI training for SMEs through Future Business Academy, and our experience consistently shows that organisations that invest in structured AI literacy programmes before deploying new AI tools achieve significantly higher adoption rates and return on investment than those that roll out tools and expect teams to self-educate.

Balancing Automation and Human Talent

The goal of AI initiatives is not to replace human judgement. It is to free human talent from the work that machines do better so that people can focus on the work that only humans can do well: building relationships, exercising creative judgement, navigating ambiguity, and making decisions that require ethical reasoning.

Leaders who communicate this framing clearly and consistently find that their teams become active contributors to new AI initiatives rather than passive recipients of change. As Stephen McClelland puts it: “AI should be seen not as a substitute for human expertise but as a complement that brings out the best in what our teams can do.”

Measuring ROI and Sustaining AI Momentum

Sustaining investment in AI initiatives over the medium and long term requires a clear, credible approach to measuring and communicating their value. Leaders who cannot demonstrate return on investment find that AI programmes are the first casualty when budgets come under pressure, regardless of the genuine progress being made.

Assessing the ROI of AI Investments

The most reliable approach to AI ROI measurement starts before the project begins. Define the baseline: what does the current process cost in time, money, and quality? Then define the target: what measurable improvement will the AI initiative deliver, and over what timeframe? Collect performance data from early pilots and use it to build the business case for scale.
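The baseline-and-target approach reduces to two small calculations: how much of the baseline has been removed, and what the saving returns on the money invested. The sketch below is illustrative only; the function names and all figures are hypothetical, and the 55% target simply echoes the document review example used earlier in this article.

```python
def reduction_achieved(baseline: float, actual: float) -> float:
    """Fraction by which the pilot has reduced the baseline (e.g. hours per task)."""
    if baseline <= 0:
        raise ValueError("Baseline must be positive")
    return (baseline - actual) / baseline


def roi_percent(annual_saving: float, investment: float) -> float:
    """Simple first-year ROI: net gain as a percentage of the amount invested."""
    if investment <= 0:
        raise ValueError("Investment must be positive")
    return (annual_saving - investment) / investment * 100


# Hypothetical pilot: document review drops from 40 hours to 16 hours per week.
reduction = reduction_achieved(baseline=40, actual=16)
target = 0.55  # the agreed target, set before the project began

print(f"Reduction achieved: {reduction:.0%}, target met: {reduction >= target}")
print(f"First-year ROI: {roi_percent(annual_saving=50_000, investment=40_000):.1f}%")
```

Both numbers depend entirely on the baseline being recorded before the pilot starts, which is why that step cannot be deferred.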

Not every AI initiative will generate direct revenue impact. Some of the most valuable AI applications reduce risk, improve compliance, or increase the quality of outputs in ways that are harder to quantify but no less real. Leaders should develop a blended value framework that captures efficiency gains, risk reduction, quality improvements, and strategic positioning alongside direct commercial returns.
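One way to make a blended value framework concrete is a weighted score across the dimensions listed above. The sketch below assumes each dimension is rated from 0 to 10 by the leadership team; the weights, the rating scale, and the dimension keys are hypothetical and would need to be agreed by each organisation.

```python
# Hypothetical weights for a blended value score; they must sum to 1.
WEIGHTS = {
    "efficiency": 0.3,
    "risk_reduction": 0.2,
    "quality": 0.2,
    "strategic_positioning": 0.1,
    "commercial_return": 0.2,
}


def blended_value_score(ratings: dict[str, float]) -> float:
    """Combine 0-10 ratings for each value dimension into a single weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[dim] * ratings[dim] for dim in WEIGHTS), 2)


# A compliance-focused initiative: weak on direct revenue, strong elsewhere.
score = blended_value_score({
    "efficiency": 8,
    "risk_reduction": 6,
    "quality": 7,
    "strategic_positioning": 5,
    "commercial_return": 3,
})
print(score)
```

A score like this is not a substitute for judgement, but it allows initiatives with little direct revenue impact to be compared on the same footing as those with an obvious commercial return.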

Leveraging Predictive Analytics for Better Decisions

One of the most powerful applications of AI initiatives for business leaders is predictive analytics: the use of historical data to model future outcomes and inform strategic decisions. Whether the application is forecasting customer demand, identifying early signals of employee disengagement, or modelling the financial impact of different strategic options, predictive analytics transforms raw data into actionable foresight.

The key principle, as Stephen McClelland has noted, is that “harnessing predictive analytics positions organisations to preempt customer needs and tailor their services proactively, rather than reacting to market changes.” This requires investment not only in the AI tools themselves but in the data infrastructure and data quality standards that make reliable predictions possible.
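At its simplest, predictive analytics means fitting a pattern to historical data and projecting it forward. The sketch below fits a straight-line trend to six months of demand figures using ordinary least squares and forecasts the seventh month. The data are invented for illustration, and a linear trend is an assumption chosen for brevity; real forecasting would normally account for seasonality and use far more data.

```python
def fit_linear_trend(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for a straight line; returns (slope, intercept)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("Need at least two paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(x: float, slope: float, intercept: float) -> float:
    """Project the fitted trend to a new point."""
    return slope * x + intercept


# Hypothetical monthly demand (units sold) for months 1 to 6.
months = [1, 2, 3, 4, 5, 6]
demand = [100, 110, 120, 130, 140, 150]

slope, intercept = fit_linear_trend(months, demand)
print(f"Forecast for month 7: {forecast(7, slope, intercept):.0f} units")
```

Even a model this simple makes the point about data quality: a single mis-recorded month would shift the slope and every forecast that follows from it.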

Continuous Learning and Adaptability

AI initiatives are not one-time projects. The most successful organisations treat AI capability as a continuously evolving asset that requires ongoing investment, review, and refinement. This means building feedback loops into every deployed AI system, reviewing performance data regularly against original objectives, and being willing to retire or redesign initiatives that are not delivering.

The AI landscape is moving quickly, and the tools available to businesses today are meaningfully more capable than those available 18 months ago. Leaders who build a culture of continuous experimentation are better positioned to identify and act on new AI opportunities before their competitors do.

The UK Perspective on AI Leadership

Research from the Alan Turing Institute and surveys including the Deloitte Global Human Capital Trends report consistently show that leaders who actively engage with AI strategy, rather than delegating it, achieve significantly better outcomes. The UK’s position as a leading AI research hub, combined with the pro-innovation regulatory environment, gives British businesses a genuine structural advantage, but only if their leaders take an active, informed role in driving their AI initiatives forward.

For mid-market UK businesses in particular, the opportunity is significant. Larger enterprises often move slowly due to the complexity of their legacy systems and governance structures. Organisations with 50 to 500 employees have the agility to pilot, learn, and scale AI initiatives at a pace that their larger competitors struggle to match.

The First 90 Days of AI Leadership

If you are beginning or restarting your AI initiatives, the first 90 days are the most important. The actions taken in this period establish the culture, governance, and momentum that will determine whether your AI programme delivers lasting value or becomes another stalled project.

Days 1 to 30: Conduct an honest audit of existing AI initiatives, including any shadow AI activity. Define your outcome-first objectives. Identify two or three high-value, lower-complexity use cases that can demonstrate value quickly.

Days 31 to 60: Establish your AI governance structure. Begin the first stage of your upskilling programme. Launch a structured pilot with clear success criteria and a defined decision date for scale.

Days 61 to 90: Evaluate pilot results against your baseline. Communicate outcomes transparently to the organisation. Make a clear decision: scale, redesign, or stop. Use the learning to inform the next wave of AI initiatives.

ProfileTree works with businesses across Northern Ireland, Ireland, and the UK to develop and implement digital and AI strategy. If your organisation is ready to move its AI initiatives from aspiration to delivery, our team is available to support you at every stage of that journey.

FAQs

What makes good and bad leadership qualities in an AI context?

Good AI leaders are transparent about what AI can and cannot do, invest in upskilling their teams, and set clear governance frameworks before problems arise. Bad AI leadership typically means delegating AI strategy entirely to IT, chasing trends without clear business rationale, and treating governance as a box-tick exercise.

How do you measure the ROI of AI initiatives?

Establish a baseline before the project begins, set a measurable target, and collect performance data from the pilot phase. Account for efficiency gains, risk reduction, and quality improvements, not just direct revenue. Use stage gates to ensure investment decisions are based on evidence.

What is the biggest barrier to scaling AI initiatives?

Culture, not technology. Middle management resistance is the most consistent reason AI programmes stall. Organisations that invest in change management and AI literacy achieve higher adoption rates than those that focus primarily on tool selection.

How should a UK business approach AI governance?

The UK’s pro-innovation stance means responsibility sits with the organisation. Establish an AI ethics committee with board-level visibility, define acceptable use policies, and audit deployed systems regularly. Internal governance is your primary risk management tool.

What role does data quality play in AI initiatives?

AI systems produce outputs that are only as reliable as the data used to train and test them. Data governance frameworks, privacy compliance, and clean, well-maintained training datasets must be in place before AI initiatives are deployed at scale.