AI Ethics and Responsible Deployment: Guiding UK and Irish SMEs

AI ethics and responsible deployment are no longer conversations reserved for boardrooms at IBM or Microsoft. For small and medium-sized businesses across Northern Ireland, Ireland, and the UK, they are now practical obligations with real commercial consequences. Get it right, and you build trust with customers faster, sidestep regulatory penalties, and position your organisation ahead of competitors still treating ethics as an afterthought. Get it wrong, and the reputational and legal exposure can outweigh any efficiency gain the AI tool ever delivered.

This guide is not a philosophy lecture. It is a step-by-step governance framework for business owners and managers who are already using AI tools, or planning to, and need a working structure around them. We will cover the regulatory picture facing UK and Irish businesses, the five practical steps of responsible deployment, how to vet AI vendors, what your staff need to understand, and how to communicate your AI use transparently to customers.

Beyond Theory: Why AI Ethics and Responsible Deployment Is Now a Business Priority

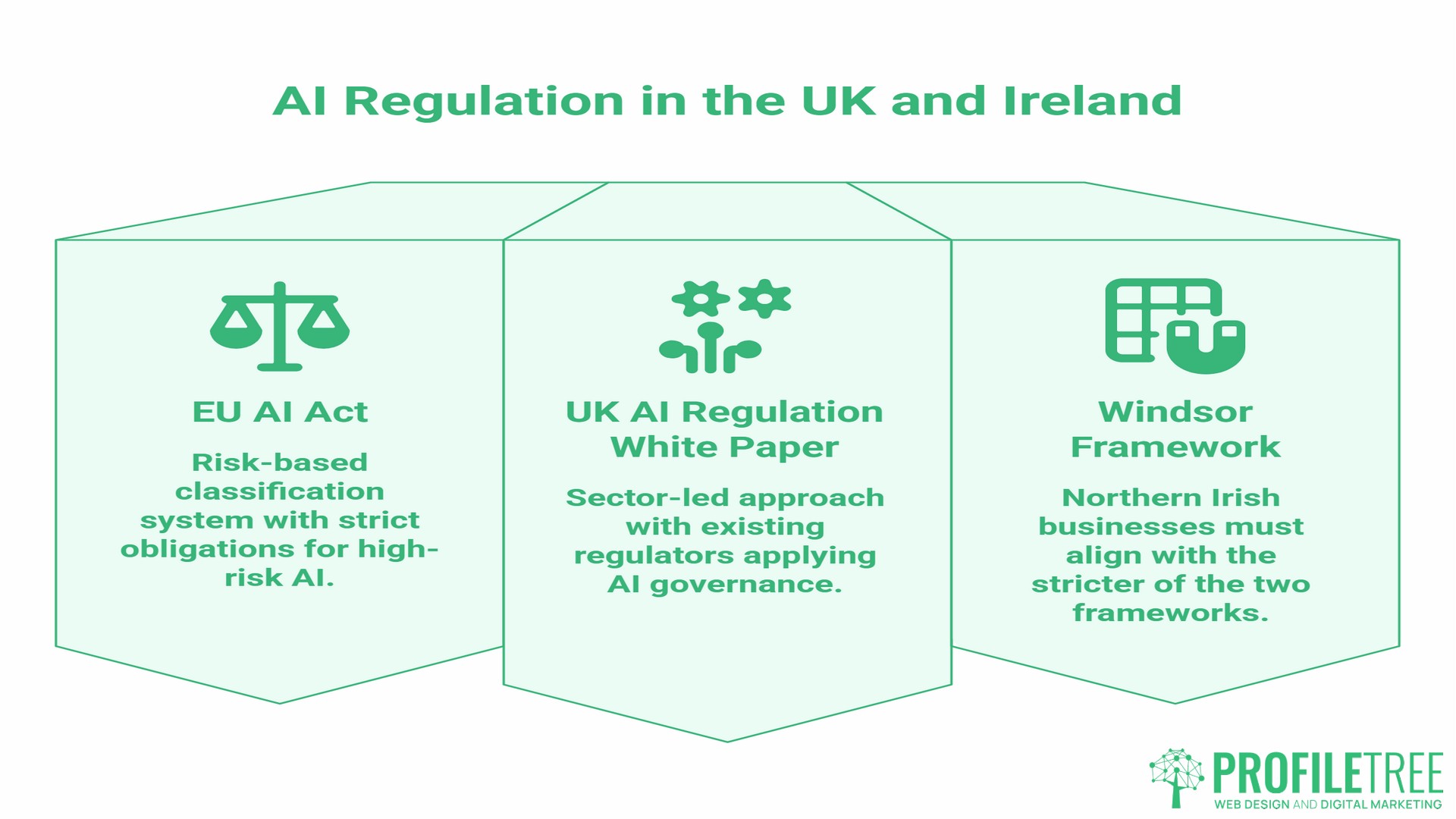

The shift from “we should probably think about this” to “we need to act on this now” happened quickly. The EU AI Act entered into force in August 2024 and has been rolling out in phases ever since, with the most significant obligations for high-risk AI systems applying from August 2026. The UK government, through the Department for Science, Innovation and Technology (DSIT), has taken a deliberately different, sector-led approach, favouring flexibility over a single binding framework.

For most SMEs, neither the EU AI Act nor the UK’s sector-led guidance reads like something written with a ten-person team in mind. That is the gap this article addresses.

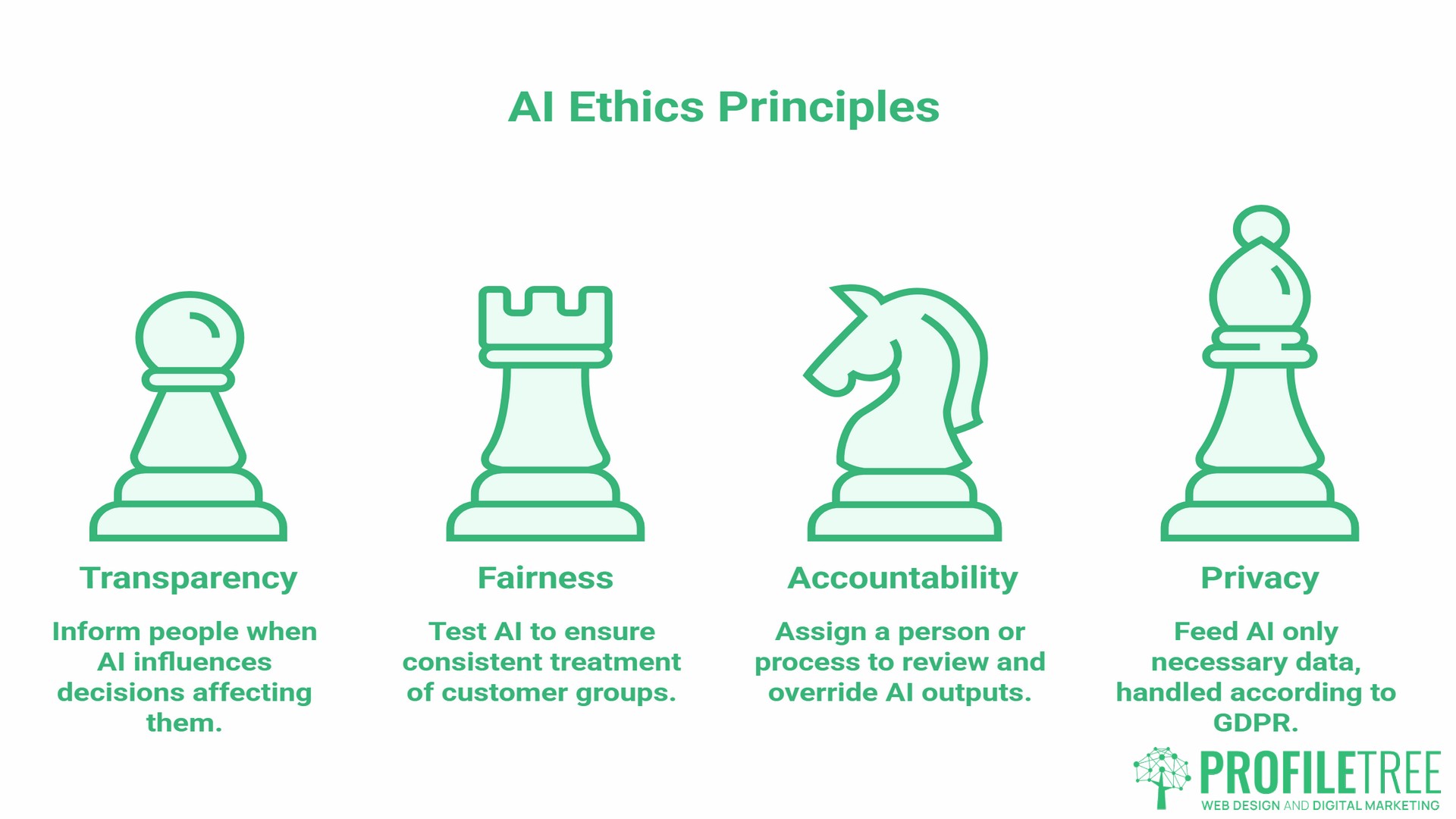

AI ethics and responsible deployment, at their core, come down to four principles: transparency, fairness, accountability, and privacy. These are not abstract values. Each one translates directly into something you either do or fail to do when you deploy an AI tool in your business.

Transparency means telling people when AI is influencing a decision that affects them. Fairness means testing whether your AI treats different groups of customers or applicants consistently. Accountability means having a named person or process that reviews AI outputs and can override them. Privacy means feeding the AI only the data it actually needs, in line with your GDPR obligations.

Understanding where your own AI use sits across those four dimensions is the starting point for everything that follows.

“Ethical AI deployment is not about limiting what technology can do. It is about making sure your business can stand behind every decision a system makes on your behalf,” says Ciaran Connolly, founder of ProfileTree.

The UK and Irish Regulatory Picture: Navigating the Divergence

This is where AI ethics and responsible deployment become more complex for Northern Irish and Irish businesses than for their counterparts in London or Edinburgh. Understanding the divergence clearly is one of the most valuable things this guide can offer.

The EU AI Act (applies to Irish businesses and UK firms trading with EU customers)

The EU AI Act uses a risk-based classification system. AI applications are categorised as unacceptable risk (banned outright), high risk (strict obligations), limited risk (transparency requirements), and minimal risk (largely unregulated). High-risk categories include AI used in recruitment, credit scoring, educational assessment, and certain customer-facing decision systems. If your business operates in Ireland, or if your UK business serves EU customers and uses AI that makes or influences decisions affecting them, the Act’s obligations apply to you.

The phased implementation timeline is as follows:

- 1 August 2024: The AI Act enters into force

- 2 February 2025: Prohibited AI practices banned; AI literacy obligations apply

- 2 August 2025: GPAI model rules and governance obligations apply

- 2 August 2026: High-risk AI system requirements fully in force

- 2 August 2027: High-risk AI embedded in regulated products
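For an AI Custodian who wants to track these dates in a script or spreadsheet export, the timeline can be sketched as a small lookup. This is an illustration only: the dates and labels are copied from the timeline above, while the function names `milestones_reached` and `next_milestone` are hypothetical.

```python
from datetime import date

# Milestones copied from the phased timeline in this guide.
MILESTONES = [
    (date(2024, 8, 1), "AI Act enters into force"),
    (date(2025, 2, 2), "Prohibited AI practices banned; AI literacy obligations apply"),
    (date(2025, 8, 2), "GPAI model rules and governance obligations apply"),
    (date(2026, 8, 2), "High-risk AI system requirements fully in force"),
    (date(2027, 8, 2), "High-risk AI embedded in regulated products"),
]


def milestones_reached(on: date) -> list:
    """Return the labels of every milestone dated on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def next_milestone(on: date):
    """Return the first (date, label) pair still ahead of `on`, or None."""
    upcoming = [m for m in MILESTONES if m[0] > on]
    return upcoming[0] if upcoming else None
```

Calling `next_milestone(date.today())` gives the next deadline to plan for; it returns `None` once every phase in the list has passed.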

The UK AI Regulation White Paper (applies to UK-based businesses)

The UK approach is sector-led rather than legislation-led. Rather than a single AI Act, the UK government has asked existing regulators (the ICO, FCA, CMA, and others) to apply AI governance through their existing frameworks. The five cross-sector principles are safety, transparency, fairness, accountability, and contestability. There is no single compliance body to register with; obligations depend on your sector.

The Windsor Framework dimension for Northern Irish businesses

Northern Irish businesses face additional complexity. Under the Windsor Framework, Northern Ireland maintains alignment with certain EU single market rules for goods. While AI services are not goods in the traditional sense, the practical reality for many Northern Irish firms is that they serve both UK and EU customers and should plan their AI governance around the stricter of the two frameworks rather than defaulting to the UK’s lighter-touch approach.

A straightforward decision rule: if your business collects data from, or makes automated decisions affecting, anyone in the EU or Republic of Ireland, build your governance framework around EU AI Act obligations. The UK framework will not exceed these requirements, so a single EU-aligned policy covers both.
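The decision rule is simple enough to write down as a single function, which can be useful if you record it alongside your AI register. This is a minimal sketch of the rule as stated in this guide, not legal advice; the function and parameter names are illustrative.

```python
def governance_baseline(collects_eu_data: bool, decisions_affect_eu: bool) -> str:
    """Pick the framework to build the governance policy around.

    "EU" here includes the Republic of Ireland, as in the rule above.
    """
    if collects_eu_data or decisions_affect_eu:
        # Either form of EU exposure is enough to adopt the stricter baseline.
        return "EU AI Act"
    return "UK cross-sector principles"
```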

For a detailed breakdown of the legal obligations triggered by your specific AI use cases, our guide to ethical AI and legal requirements for UK businesses maps the regulatory picture by business function.

The Five-Step Framework for Responsible AI Deployment

This is the core of the article. Each step is designed to be actionable for a business without a dedicated legal team or data science department.

Step 1: Appoint an AI Custodian

Someone in your organisation needs to own AI governance. This does not need to be a new hire or a formal “Chief AI Ethics Officer.” In most SMEs with 10 to 100 employees, the AI Custodian role sits with an operations manager, a senior marketer, or a director who already touches technology decisions.

The AI Custodian’s responsibilities are narrow but specific. They maintain a register of all AI tools the business uses, confirm each tool has been assessed against the criteria in Step 3, oversee the review cadence in Step 5, and serve as the named point of contact if a customer questions an AI-influenced decision.

A two-hour monthly review meeting is sufficient for most SMEs at the early stages of AI adoption. Document who holds the role, what they are responsible for, and review the appointment annually as AI use in the business changes.
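The register itself does not need special software. As a rough sketch, assuming you keep it in a short script rather than a spreadsheet, each tool could be one record. The field names and the two example tools below are hypothetical, chosen only to mirror the responsibilities described above.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AIToolRecord:
    name: str
    purpose: str
    high_consequence: bool                 # does it materially affect individuals?
    assessed: bool = False                 # has it passed the Step 3 assessment?
    last_reviewed: Optional[date] = None   # most recent review under Step 5


def unassessed(register):
    """Return the names of tools that have not yet been assessed."""
    return [tool.name for tool in register if not tool.assessed]


# Example register with two made-up tools.
register = [
    AIToolRecord("support-chatbot", "answers FAQs", high_consequence=False, assessed=True),
    AIToolRecord("cv-screener", "ranks job applications", high_consequence=True),
]
```

Running `unassessed(register)` at each monthly meeting gives the Custodian a short list of tools that still need attention.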

Step 2: Write an AI Acceptable Use Policy

An AI Acceptable Use Policy does not need to be a legal document. A one-page internal statement that answers four questions is enough to start: which AI tools are approved for use by staff, what data can and cannot be fed into those tools, which decisions must always involve human review before they are acted on, and how the business handles an AI output that seems incorrect or unfair.

The policy sets the boundary between sanctioned AI use and staff going off-script with personal tools that may handle customer data without authorisation. It also creates the paper trail that regulators and insurers increasingly want to see.

For businesses using AI specifically in marketing, content creation, or customer communications, the considerations around personalisation, consent, and disclosure warrant their own treatment. Our guide to the ethics of AI in marketing covers that territory in depth.

Step 3: Assess and Vet Every AI Tool Before Deployment

Most SMEs are not building AI systems. They are buying or subscribing to them. This changes the nature of the ethical obligation. You are not responsible for how the model was trained, but you are responsible for what you do with its outputs and what data you feed into it.

Before deploying any AI tool, your AI Custodian should work through six assessment areas.

- On data handling: where is your data stored, is it used to train the vendor’s model, and can you opt out?

- On bias and fairness: has the vendor published bias testing results, and what populations were the model tested on?

- On explainability: can the system explain why it produced a particular output?

- On data residency: does the vendor store data within the UK or EU, and does this comply with your GDPR obligations?

- On incident response: what happens if the system produces a harmful or discriminatory output, and who do you contact?

- On sub-processors: does the vendor share data with third parties, and who are they?

If a vendor cannot answer these questions clearly, that is itself a risk signal. The inability to explain data handling or bias testing does not mean the tool is unethical, but it does mean you are taking on unknown risk.
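One way to make that risk signal visible is to record the vendor's answers against the six areas and list the gaps. The sketch below assumes answers are stored as free text in a dictionary; the area keys and the function name are illustrative, not a standard.

```python
# The six assessment areas from this step, as dictionary keys.
ASSESSMENT_AREAS = (
    "data_handling",
    "bias_and_fairness",
    "explainability",
    "data_residency",
    "incident_response",
    "sub_processors",
)


def unanswered_areas(answers: dict) -> list:
    """Return the areas where the vendor gave no answer or a blank one."""
    return [
        area
        for area in ASSESSMENT_AREAS
        if not (answers.get(area) or "").strip()
    ]
```

An empty result does not mean the tool is safe to deploy. It only means the vendor has answered every question, and the answers still need to be read and judged by your AI Custodian.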

AI systems used in recruitment, performance assessment, credit decisions, or any automated process that materially affects individuals are, by definition, higher risk under the EU AI Act and require more rigorous assessment. For a deeper examination of how algorithmic bias enters these systems and how to test for it, our article on mitigating bias in AI algorithms covers the technical and operational details.

Step 4: Build in Human-in-the-Loop Checkpoints

Automation is valuable precisely because it removes human bottlenecks. But for any AI-influenced decision that has a material effect on a person, a human review step is not optional under either the EU AI Act or the UK regulatory framework. It is also common-sense risk management.

Identify the decisions in your business that AI influences currently or will influence, then classify them by consequence. A chatbot answering FAQs carries low consequences if it gets something wrong. An AI tool that screens job applications, flags credit risk, or recommends pricing to individual customers carries high consequences.

For high-consequence decisions, build a mandatory review step. This does not mean a human reviews every AI output. It means a human is available to review any output flagged as uncertain, contested by the affected person, or falling outside expected parameters. Our guide to human-AI collaboration for SMEs covers how to design these checkpoints practically without creating bottlenecks that negate the efficiency gains AI was meant to deliver.
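The review trigger described above can be expressed as a short routing check. This is a sketch under the assumption that your tool or workflow can attach these flags to each output; the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AIOutput:
    high_consequence: bool                # e.g. job screening, credit risk, individual pricing
    flagged_uncertain: bool = False       # the tool or a staff member marked it as uncertain
    contested: bool = False               # the affected person has challenged it
    outside_expected_range: bool = False  # falls outside expected parameters


def needs_human_review(output: AIOutput) -> bool:
    """Route an output to a human only when the guide's triggers are met."""
    if not output.high_consequence:
        return False
    return (
        output.flagged_uncertain
        or output.contested
        or output.outside_expected_range
    )
```

Under this sketch, a routine high-consequence output passes through without review, which keeps the checkpoint from becoming a bottleneck. A business that prefers to review every contested output, whatever the consequence level, could move the `contested` check ahead of the first `if`.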

Step 5: Disclose AI Use to Customers and Set a Review Cadence

Transparency with customers about AI use is moving from a reputational nicety to a regulatory requirement. The EU AI Act requires specific disclosure for AI systems that interact with people or influence decisions affecting them. The UK’s ICO has published guidance on transparency in automated decision-making under UK GDPR.

Practically, this means three things for most SMEs. First, update your privacy policy to name the AI tools you use, describe what data they process, and explain how automated decisions can be reviewed or contested. Second, add a brief disclosure wherever customers interact with AI directly: a chatbot that does not identify itself as automated is not just poor practice; it is a potential GDPR breach. Third, make sure customers know their rights if an automated decision affects them, including the right to request human review for consequential decisions.

The second element of Step 5 is internal: set a quarterly review cadence. AI systems drift. A tool that performed well when you deployed it may produce different outputs six months later as the vendor updates the underlying model. Your AI Custodian should review outputs from high-consequence tools quarterly, check for any unexpected findings, and document the results. An annual external review of your entire AI register is good practice once your AI use matures.
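If the review dates live in a script or calendar export, the quarterly cadence can be computed rather than remembered. The sketch below treats a quarter as three calendar months from the last review, which is an assumption; the function names are illustrative.

```python
import calendar
from datetime import date


def next_quarterly_review(last_review: date) -> date:
    """Return the date three calendar months after `last_review`."""
    month_index = last_review.month - 1 + 3
    year = last_review.year + month_index // 12
    month = month_index % 12 + 1
    # Clamp the day for shorter months (30 November becomes 28 or 29 February).
    day = min(last_review.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def is_overdue(last_review: date, today: date) -> bool:
    """True when the next quarterly review date has already passed."""
    return today > next_quarterly_review(last_review)
```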

AI Vendor Due Diligence: A Practical Checklist

The five-step governance framework covers your internal obligations. Vendor procurement is where AI ethics and responsible deployment most frequently break down in practice, because the majority of AI-related incidents in SMEs originate with tools adopted quickly without adequate assessment.

Before signing up for any AI tool that will handle customer data or influence decisions, work through the following questions:

- On data and privacy: do the vendor’s terms of service give them the right to train their model on your data? Is data stored within the UK or EEA? What is the vendor’s process for a data subject access request? Has the vendor undergone a recent third-party security audit?

- On model behaviour: what is the model’s known failure rate for your intended use case? Does the vendor provide documentation on how the model handles edge cases? Can outputs be overridden or corrected without affecting the model’s behaviour?

- On contractual protections: does the contract include data processing terms compliant with UK GDPR and, where relevant, EU GDPR? Is there a breach notification clause, and what is the notification window? What liability does the vendor accept if the model produces a harmful output?

- On vendor stability: is the vendor financially stable enough that the tool will still exist in 12 months? What happens to your data if the vendor is acquired or ceases trading?

For data privacy obligations specific to AI deployment, our guide on balancing AI innovation with user data rights, along with our overview of data rights in AI, provides the regulatory detail.

Staff Training and the Human-in-the-Loop Mandate

Your governance framework is only as strong as the people operating within it. Staff who do not understand the limits of AI tools, the types of outputs that require human verification, or the data-handling rules for third-party platforms create exposure that no policy document can close.

AI ethics and responsible deployment training for non-technical staff does not need to be a full-day course. For most SMEs, a focused two-hour session covering four areas is sufficient as a starting point: what AI tools the business uses and what they do, what data can and cannot be shared with external AI tools, which outputs require human review before being acted on, and how to flag a concern if an AI output seems incorrect or unfair.

This training should be repeated annually and updated when a new AI tool is introduced. It should be documented to demonstrate to regulators or clients that staff competency has been addressed.

ProfileTree’s digital training programmes include AI literacy sessions designed for SME teams across Northern Ireland, Ireland, and the UK, covering responsible use, prompt discipline, and governance basics. For businesses building longer-term AI capability, our AI competency framework guide outlines the skills progression from basic digital literacy through to advanced AI implementation. The “How to Train Your Staff on AI Tools” guide covers the practical session structure for different roles across your organisation.

Measuring the Return on Responsible Deployment

Investing in AI ethics and responsible deployment is often framed as a cost or a constraint. The commercial case runs in the opposite direction.

- Legal risk reduction. Breaching the EU AI Act’s obligations for high-risk AI systems carries fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher. Violations of outright prohibited AI practices attract fines of up to €35 million or 7% of worldwide annual turnover. For SMEs, fines are capped at whichever of those two thresholds is the lower amount, but even a proportionate fine at that level can be material for a small business. A documented governance framework is not a guarantee against enforcement, but it is the strongest mitigating factor regulators consider.

- Customer trust. Customers are more willing to share data and engage with AI-powered services from businesses that disclose their AI use clearly and demonstrate human oversight. Transparency is not just a regulatory requirement; it is a conversion factor.

- Procurement advantage. Larger businesses increasingly include AI governance questions in supplier due diligence. Having a documented AI Acceptable Use Policy and a named AI Custodian may become a procurement prerequisite for enterprise supplier relationships within the next two years.

- Insurance. Cyber insurers are beginning to include AI-related incidents in policy scope. Documented governance frameworks reduce premium risk and may affect coverage terms.
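To make the fine ceilings in the legal risk point concrete, the arithmetic can be worked through for a given turnover. This is a sketch of the upper limits as described in this guide, not a prediction of what a regulator would impose; the function name and the example turnover figures are hypothetical.

```python
def max_fine_eur(turnover_eur: int, prohibited_practice: bool, is_sme: bool) -> float:
    """Return the ceiling on a fine, using the figures quoted in this guide."""
    if prohibited_practice:
        fixed_cap, percent = 35_000_000, 7
    else:
        fixed_cap, percent = 15_000_000, 3
    turnover_cap = turnover_eur * percent / 100
    # SMEs face the lower of the two thresholds; other businesses the higher.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


# An SME with EUR 2 million turnover breaching high-risk obligations:
sme_ceiling = max_fine_eur(2_000_000, prohibited_practice=False, is_sme=True)    # 60000.0
# A large firm with EUR 1 billion turnover, same breach:
large_ceiling = max_fine_eur(1_000_000_000, prohibited_practice=False, is_sme=False)  # 30000000.0
```

For the SME in this example, 3% of turnover (€60,000) is lower than €15 million, so €60,000 is the ceiling. That is far below the headline figure but still material for a business of that size.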

For SMEs evaluating the wider commercial case for AI adoption, our cost-benefit analysis of AI implementation for SMEs and our overview of how SMEs are successfully implementing AI solutions provide the business context.

Future-Proofing Your AI Ethics and Responsible Deployment Strategy

The regulatory environment around AI ethics and responsible deployment will continue to tighten over the next three to five years. The EU AI Act’s high-risk provisions come fully into force in August 2026, with a further wave of obligations applying in August 2027. The UK government has signalled it will introduce primary legislation if voluntary, sector-led compliance proves insufficient. Consumer awareness of AI use is growing, and with it, the reputational cost of being caught without a credible governance framework.

The businesses that will manage this transition most comfortably are not those that adopt AI most aggressively. They are those that build a governance structure early, document their decisions, train their staff, and treat transparency with customers as a competitive advantage rather than a compliance burden.

The five steps in this guide are a starting point, not a final answer. Appoint your AI Custodian and write your Acceptable Use Policy first. Then review your vendor contracts, set a quarterly review date, and build from there. The governance structure you put in place today will be the foundation for the more complex decisions you will face as AI becomes more capable and more consequential in your operations.

ProfileTree’s AI implementation and training services support businesses across Northern Ireland, Ireland, and the UK at every stage of that journey, from initial AI readiness assessments through to staff training and governance framework development. If you are building your AI ethics and responsible deployment strategy and want a partner who understands the specific regulatory context facing UK and Irish businesses, get in touch with our team.

Frequently Asked Questions

What is the difference between AI ethics and AI governance?

AI ethics is the set of values and principles that should guide the development and use of AI: fairness, transparency, accountability, and privacy. AI governance is the operational structure that puts those principles into practice, covering the policies, roles, review processes, and documentation that make ethics real rather than aspirational. Ethics is the “why.” Governance is the “how.” Committing to AI ethics and responsible deployment means building both the values and the structure that delivers on them. An SME that has thought carefully about ethics but has no governance structure in place has not yet addressed its actual exposure.

Do UK companies have to follow the EU AI Act?

Yes, in many cases. The EU AI Act applies extraterritorially: if your AI system’s output is used within the EU, or if the people affected by it are in the EU, the Act applies regardless of where your business is based. UK businesses selling to EU customers, employing EU-based staff, or using AI in any cross-border commercial context should assess their obligations under the Act. Northern Irish businesses, given their operational ties to the Republic of Ireland and the EU single market, should treat EU AI Act compliance as a baseline rather than optional.

Does my small business need an AI Ethics Board?

Not a formal board. What you need is an AI Custodian: a named person who owns AI governance within your organisation, maintains the AI register, oversees vendor assessments, and manages the review cadence. For most SMEs, this is a role added to an existing position rather than a new hire. A two-hour monthly review is sufficient to start. As AI use grows and becomes more consequential, the governance structure should grow with it.

What are the four principles of AI ethics?

Transparency means people are informed when AI is influencing a decision that affects them. Fairness means the AI system treats different groups of people consistently and does not produce discriminatory outputs. Accountability means a person or process is responsible for AI outputs and can intervene when something goes wrong. Privacy means only the data necessary for the AI’s function is collected and processed, in line with applicable data protection law. These four principles appear, in some form, in the major frameworks for AI ethics and responsible deployment, including the EU AI Act, the UK AI White Paper, and the NIST AI Risk Management Framework.

What is a human-in-the-loop system?

A human-in-the-loop system is any AI deployment where a person reviews, approves, or can override the AI’s output before it has a material effect on someone. It does not mean a human reviews every output; it means a human is always available to intervene, and for high-consequence decisions, that review is mandatory rather than optional. The principle is that consequential decisions affecting employment, credit, health, legal standing, or significant financial outcomes should never be fully delegated to an automated system without human accountability for the result.

How do I tell my customers I am using AI?

Start with your privacy policy. Add a clear section naming the AI tools you use, what data they process, and how automated decisions can be reviewed or challenged. For any customer-facing AI interaction, add a brief disclosure at the point of contact: “This response has been generated by an AI system. If you would like to speak with a member of our team, contact us here.” For consequential decisions, include explicit information about the customer’s right to request human review. Keep the language plain and specific. Vague references to “technology” or “automated systems” do not satisfy transparency requirements under UK or EU law.

How do I vet an AI vendor before signing up?

Focus on five areas: data storage and residency, training data rights (do the vendor’s terms of service allow it to train on your data?), bias testing documentation, breach notification terms, and contractual liability for harmful outputs. Ask these questions directly before signing any agreement. If the vendor cannot or will not answer clearly, treat that as a risk signal worth escalating before committing.