Voice-Activated AI: From Smart Speakers to Agentic Tools


Voice-activated AI has crossed a threshold. The simple ask-and-answer assistants that set timers and played playlists have given way to agentic systems capable of booking meetings, updating CRMs, and handling multi-step customer service calls without human intervention.

For businesses across the UK and Ireland, this shift creates real opportunities and genuine risks. The right platform, deployed correctly within UK data protection rules and EU AI Act requirements, can cut overhead and improve customer experience. The wrong one, or a poorly implemented one, can expose businesses to compliance failures and eroded customer trust.

This guide covers what voice AI agents actually are in 2026, how the leading platforms compare, what the UK and Irish regulatory picture looks like, and how to build a practical implementation plan for your team.

The 2026 Shift: From Voice Assistants to Agentic Voice AI

The distinction between a voice assistant and a voice agent is not a marketing nuance; it is a functional one. Understanding it is the first step to deploying the technology well.

What Changed and Why It Matters

Traditional voice assistants (think early Siri or Alexa) operated on a retrieval model. A user asked a question; the system fetched a pre-determined answer or triggered a pre-built skill. The interaction was a single turn, and the assistant had no ability to reason across multiple steps or take meaningful actions in external systems.

Agentic voice AI is different in structure. These systems combine speech-to-text (STT) transcription with a large language model capable of multi-step reasoning, then connect that reasoning engine to APIs that can actually do things: update a calendar, pull a CRM record, raise a support ticket, or complete an order.

The flow runs from user audio to STT, through natural language understanding (NLU) and agentic reasoning, to an API call, and then back to a text-to-speech (TTS) response. On competitive platforms in 2026, the whole cycle takes roughly 300 to 900 milliseconds.
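The four-stage loop can be sketched as follows. This is a minimal illustration, not any vendor's implementation: `speech_to_text`, `reason`, `call_api` and `text_to_speech` are hypothetical stubs standing in for real STT, language model, business API and TTS services. The point is to show where each stage sits and how per-stage latency can be measured against the 900 ms upper bound.

```python
import time
from typing import Callable

# Hypothetical stage stubs: in a real deployment each would call an STT
# service, a language model with tool access, a business API and a TTS engine.
def speech_to_text(audio: bytes) -> str:
    return "what is the status of order 1042"

def reason(transcript: str) -> dict:
    # Decide which action to take; a real agent would use an LLM here.
    return {"action": "order_status", "order_id": "1042"}

def call_api(plan: dict) -> str:
    return f"Order {plan['order_id']} was dispatched yesterday."

def text_to_speech(text: str) -> bytes:
    return text.encode("utf-8")  # placeholder for synthesised audio

def run_turn(audio: bytes) -> tuple[bytes, dict[str, float]]:
    """Run one conversational turn and record per-stage latency in ms."""
    timings: dict[str, float] = {}

    def timed(name: str, fn: Callable, arg):
        start = time.perf_counter()
        result = fn(arg)
        timings[name] = (time.perf_counter() - start) * 1000
        return result

    transcript = timed("stt", speech_to_text, audio)
    plan = timed("reasoning", reason, transcript)
    reply = timed("api", call_api, plan)
    speech = timed("tts", text_to_speech, reply)
    return speech, timings

speech, timings = run_turn(b"\x00" * 320)  # placeholder audio frame
total_ms = sum(timings.values())
print(f"total {total_ms:.1f} ms, within 900 ms budget: {total_ms <= 900}")
```

With real services plugged in, the per-stage timings show where a slow turn is losing time, which is usually more actionable than a single end-to-end figure.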

For SMEs, this means a voice agent can field an inbound call from a client, check their account status, log a query, and schedule a callback without a human being involved at any point. That is a materially different proposition from “Hey Alexa, what time is it?”
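The "take real actions" step usually comes down to the model proposing tool calls and the application executing only the tools it has been allowed. The sketch below assumes a plan has already been produced by a language model; the in-memory `ACCOUNTS`, `TICKETS` and `CALLBACKS` stores and the three tool functions are hypothetical stand-ins for a CRM and a scheduler.

```python
from datetime import date, timedelta

# Hypothetical in-memory stand-ins for a CRM and a callback scheduler.
ACCOUNTS = {"C-118": {"status": "active", "balance_due": 0}}
TICKETS: list[dict] = []
CALLBACKS: list[dict] = []

def check_account(customer_id: str) -> dict:
    return ACCOUNTS.get(customer_id, {"status": "unknown"})

def log_query(customer_id: str, summary: str) -> int:
    TICKETS.append({"customer": customer_id, "summary": summary})
    return len(TICKETS)  # ticket number

def schedule_callback(customer_id: str, days_ahead: int) -> str:
    when = date.today() + timedelta(days=days_ahead)
    CALLBACKS.append({"customer": customer_id, "date": when})
    return when.isoformat()

# The only tools the agent is allowed to call, keyed by name.
TOOLS = {
    "check_account": check_account,
    "log_query": log_query,
    "schedule_callback": schedule_callback,
}

def execute_plan(plan: list[dict]) -> list:
    """Run each step of a plan, rejecting any tool not on the allow-list."""
    results = []
    for step in plan:
        tool = TOOLS.get(step["tool"])
        if tool is None:
            raise ValueError(f"tool not permitted: {step['tool']}")
        results.append(tool(**step["args"]))
    return results

# A plan of the kind a language model might produce for the call described above.
plan = [
    {"tool": "check_account", "args": {"customer_id": "C-118"}},
    {"tool": "log_query", "args": {"customer_id": "C-118", "summary": "Invoice query"}},
    {"tool": "schedule_callback", "args": {"customer_id": "C-118", "days_ahead": 1}},
]
results = execute_plan(plan)
```

Keeping an explicit allow-list means a misheard or badly reasoned request cannot trigger an action the business never intended to expose to the voice channel.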

How Consumers Interact With Voice AI

Consumer behaviour has adapted alongside the technology. Voice searches now skew heavily towards transactional and complex queries. Research by the Marketing Science Institute found that voice shopping users report high satisfaction rates and a strong tendency to repeat purchases with the same retailer. People use voice interfaces for tasks they previously considered too involved for a hands-free channel: checking order status, making returns, and comparing product options.

This behavioural shift has direct implications for how businesses structure their customer journeys. A growing share of inbound enquiries now arrives via voice, and users expect the same quality of response they would get from a well-trained human agent. Businesses that meet that bar earn loyalty; those that route voice callers to a clunky IVR menu lose them.

For a wider view of how AI is reshaping customer interactions, SME AI implementation case studies show how companies have already moved from experimentation to operational deployment.

Accent and Dialect Accuracy in the UK and Ireland

One issue that US-produced content consistently ignores is how these systems handle regional accents. Hiberno-English, Belfast dialect, Glaswegian, Geordie, and Scouse all sit well outside the American English phoneme distributions that most commercial STT models are trained on. Accuracy rates for strong regional accents can drop by 15 to 25 percentage points compared to standard received pronunciation.
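One common way to quantify this gap is word error rate (WER): the number of substituted, deleted and inserted words needed to turn the system's transcript into a human reference transcript, divided by the number of reference words (accuracy is often reported as roughly 1 minus WER). A minimal sketch, assuming you have reference transcripts grouped by caller accent; the sample sentences below are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference,
    computed with a word-level Levenshtein (edit distance) table."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    # dist[i][j] = edits to turn the first i reference words into the first j hypothesis words
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i
    for j in range(len(hyp) + 1):
        dist[0][j] = j
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(
                dist[i - 1][j] + 1,         # deletion
                dist[i][j - 1] + 1,         # insertion
                dist[i - 1][j - 1] + cost,  # substitution or match
            )
    return dist[len(ref)][len(hyp)] / max(len(ref), 1)

# Hypothetical test transcripts grouped by caller accent: (reference, STT output).
samples = {
    "received_pronunciation": [("i want to book an appointment", "i want to book an appointment")],
    "belfast": [("i want to book an appointment", "i want to look at an appointment")],
}

def mean_wer(pairs):
    return sum(word_error_rate(r, h) for r, h in pairs) / len(pairs)

by_accent = {accent: mean_wer(pairs) for accent, pairs in samples.items()}
```

Here the Belfast sample scores a WER of 2/6 (one substitution, one insertion) against 0 for the received pronunciation sample. A real evaluation needs many recorded calls per accent group before the comparison means anything.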

OpenAI’s Whisper v3 model, which underpins several commercial platforms, can be fine-tuned on dialect-specific audio to improve accuracy for particular accent profiles. Retell AI and ElevenLabs both support accent-specific configuration. For any UK or Irish business deploying voice AI in a customer-facing role, accent accuracy is not optional; it is a prerequisite for adoption.

Comparing the Top Voice AI Platforms: Benchmarks for UK Businesses

The market for agentic voice AI has consolidated around a handful of platforms, each with a different strength profile. The table below compares the most commercially relevant options on the metrics that matter for UK and Irish deployments.

All prices and figures in this guide are indicative UK examples and correct at the time of writing; use them as a benchmark rather than fixed quotations.

| Platform | Avg. Latency (ms) | Approx. Cost | Interruption Handling | UK/IE Accent Accuracy | Best Use Case |
|---|---|---|---|---|---|
| Retell AI | 400–600 | From ~£0.05/min | Yes | Good (with tuning) | High-volume call automation |
| ElevenLabs + Vapi | 500–800 | From ~£0.08/min | Yes | Very good | Natural, human-like interaction |
| Lindy.ai | 600–900 | Subscription from ~£29/mo | Partial | Moderate | Executive task management |
| OpenAI Realtime API | 300–500 | Usage-based, from ~£0.03/min | Yes | Good | Custom development builds |
| Google Gemini Live | 400–700 | Included in Workspace tiers | Yes | Good | Productivity and meeting tools |
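Per-minute pricing is easiest to compare once it is turned into a monthly figure for your own call volume. The sketch below uses the indicative "from" rates in the table for the three per-minute platforms; the call volume and average call length are hypothetical, and the estimate covers platform minutes only, not development, telephony or integration costs.

```python
# Indicative per-minute rates from the table above (GBP); these are
# assumptions for illustration, not vendor quotations.
PER_MINUTE_GBP = {
    "Retell AI": 0.05,
    "ElevenLabs + Vapi": 0.08,
    "OpenAI Realtime API": 0.03,
}

def monthly_cost(calls_per_day: int, avg_minutes: float, rate: float, days: int = 30) -> float:
    """Estimated monthly platform cost for a per-minute pricing model."""
    return calls_per_day * avg_minutes * days * rate

# Example: 200 calls a day averaging 3 minutes each (18,000 minutes a month).
estimates = {
    name: round(monthly_cost(200, 3.0, rate), 2)
    for name, rate in PER_MINUTE_GBP.items()
}
for name, cost in sorted(estimates.items(), key=lambda kv: kv[1]):
    print(f"{name}: ~£{cost:,.2f}/month")
```

At that volume the spread runs from about £540 to £1,440 a month, which is why the cheapest per-minute option is not automatically the right one if voice quality or accent accuracy matters more for your callers.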

Retell AI: Built for Call Volume

Retell AI is purpose-built for businesses that handle large numbers of inbound or outbound calls. Its infrastructure is optimised for low latency and concurrent session handling, making it a practical option for contact centres and e-commerce operations running thousands of calls per day. The platform supports webhook integrations with most major CRMs and has a no-code workflow builder that reduces the development overhead for smaller teams.
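Webhook integrations generally work by the platform posting an event (for example, "call ended") to an endpoint you control, which then writes to the CRM. The sketch below is generic, not Retell's actual API: the payload field names, the shared secret and the HMAC-SHA256 signing scheme are all assumptions, so check the vendor's webhook documentation for the real format.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret; in practice load this from a secrets store.
WEBHOOK_SECRET = b"replace-with-your-secret"

def verify_signature(body: bytes, signature_hex: str, secret: bytes = WEBHOOK_SECRET) -> bool:
    """Check an HMAC-SHA256 signature over the raw request body."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)  # constant-time comparison

def to_crm_note(body: bytes) -> dict:
    """Map a hypothetical 'call ended' payload onto a CRM activity record."""
    event = json.loads(body)
    return {
        "contact_phone": event["caller_number"],
        "type": "voice_ai_call",
        "summary": event.get("summary", ""),
        "duration_seconds": event["duration_seconds"],
    }

def handle_webhook(body: bytes, signature_hex: str) -> dict:
    if not verify_signature(body, signature_hex):
        raise PermissionError("invalid webhook signature")
    return to_crm_note(body)

# Usage: simulate a signed delivery.
payload = json.dumps({
    "caller_number": "+447700900123",
    "summary": "Asked about delivery times",
    "duration_seconds": 142,
}).encode()
signature = hmac.new(WEBHOOK_SECRET, payload, hashlib.sha256).hexdigest()
note = handle_webhook(payload, signature)
```

Verifying the signature before touching the CRM stops anyone who discovers the endpoint URL from injecting fake call records.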

The accent accuracy gap for non-US English can be partially addressed through phoneme configuration, but this requires technical setup time. Businesses planning a UK or Irish deployment should budget for a dialect tuning phase before going live with customers.

ElevenLabs and Vapi: When Voice Quality Is Non-Negotiable

ElevenLabs produces some of the most natural-sounding synthetic voices available, and its integration with the Vapi telephony layer creates a stack that is genuinely difficult to distinguish from a human agent in controlled conditions. For sectors where tone and empathy matter, such as healthcare, financial services, or premium hospitality, this combination is worth the higher per-minute cost.

The pairing also performs well across UK and Irish accents, partly because ElevenLabs allows custom voice cloning from audio samples, meaning businesses can create a voice that reflects their region and brand personality rather than defaulting to a transatlantic neutral.

Lindy.ai: Designed for Knowledge Workers

Lindy.ai takes a different approach, targeting executive task management rather than customer-facing calls. It connects to email, calendar, and project management tools, allowing users to delegate scheduling, research, and summarisation tasks via voice. The latency is higher than that of purpose-built telephony platforms, but for internal productivity applications, this is acceptable.

For businesses already using AI prompts for business workflows, Lindy fits naturally into the stack as a voice-first interface layer on top of existing tools.

Compliance and Ethics: GDPR, the EU AI Act, and UK Data Sovereignty

This is the section most US-produced voice AI content skips entirely. For businesses operating in Northern Ireland, the Republic of Ireland, or anywhere across the UK, data compliance is not a footnote; it shapes which platforms you can legally use and how you must configure them.

The UK and EU Regulatory Split

Post-Brexit, the UK operates its own version of GDPR under the UK Data Protection Act 2018 and the UK GDPR. The UK’s approach to AI regulation has been described as “pro-innovation,” meaning the government has deliberately avoided a prescriptive framework in favour of sector-specific guidance from existing regulators such as the ICO, FCA, and CMA.

The EU AI Act takes a different approach. It entered into force in August 2024 and applies in stages, with most obligations taking effect from August 2026 and some high-risk provisions following in 2027. It classifies AI systems by risk level and imposes conformity assessments, transparency obligations, and, for high-risk applications, human oversight requirements. Crucially, the EU AI Act applies to any business placing an AI system on the EU market or deploying one that affects EU residents, regardless of where the business is incorporated.

For Northern Ireland businesses, this creates a dual compliance challenge. Under the Windsor Framework, NI maintains alignment with certain EU single market rules. Whether this extends to the EU AI Act in full is a live legal question, and businesses in NI should take specific advice rather than assuming either the UK or EU framework applies in isolation. The Brexit impact on digital marketing is one lens through which this divergence has already played out for UK operators.

Recording Calls and Legitimate Interest Under GDPR

Deploying a voice AI agent that records or transcribes calls raises specific GDPR obligations. Recording a call without notifying the other party is, in most circumstances, unlawful. Under UK GDPR, the lawful basis most commonly cited for call recording is “legitimate interest,” but this requires a balancing test demonstrating that the business’s interest in recording the call outweighs the individual’s right to privacy.

In practice, this means every voice AI deployment that involves call recording must include a clear notification to callers at the start of the interaction. Consent is preferable where it can be obtained without creating friction that undermines the user experience. Data retention periods for voice recordings must be defined and enforced; storing transcripts indefinitely is not compliant.
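Enforcing a retention policy usually means a scheduled job that finds recordings and transcripts past their retention period and deletes them. A minimal sketch of the selection step, assuming each stored item has a creation timestamp and a kind; the 30-day and 90-day periods are hypothetical placeholders for whatever your documented policy says.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class Recording:
    call_id: str
    created_at: datetime
    kind: str  # "audio" or "transcript"

# Hypothetical retention periods; set these from your own documented policy.
RETENTION = {"audio": timedelta(days=30), "transcript": timedelta(days=90)}

def split_by_retention(records, now):
    """Return (keep, purge) lists according to the retention period for each kind."""
    keep, purge = [], []
    for rec in records:
        limit = RETENTION[rec.kind]
        (purge if now - rec.created_at > limit else keep).append(rec)
    return keep, purge

now = datetime(2026, 3, 1, tzinfo=timezone.utc)
records = [
    Recording("a1", now - timedelta(days=45), "audio"),
    Recording("a2", now - timedelta(days=10), "audio"),
    Recording("t1", now - timedelta(days=45), "transcript"),
    Recording("t2", now - timedelta(days=120), "transcript"),
]
keep, purge = split_by_retention(records, now)
```

The job would then delete everything in `purge` from storage (including any copies held by the platform) and log what was removed, so the deletion itself can be evidenced if the ICO or a data subject asks.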

On-Premise Versus Cloud Deployment

For businesses handling sensitive data, including patient information, financial records, or legally privileged communications, the question of where voice AI processes and stores data is critical. Cloud-based platforms process audio on remote servers, which raises questions about data transfer, storage location, and third-party access. Most major providers now offer UK or EU data residency options, but this must be verified contractually, not assumed.

On-premise or private cloud deployment, running the STT and LLM components within your own infrastructure, eliminates the data transfer risk but requires significantly more technical resources to set up and maintain. For most SMEs, a cloud platform with verified UK/EU data residency and a signed Data Processing Agreement is the practical middle ground.

Broader questions about data handling and the ethics of digital tools are covered in the guide to ethics in digital marketing, which addresses the accountability frameworks businesses need to put in place, regardless of the technology they are using.

Voice AI in Rural UK and Ireland: The Connectivity Challenge

Almost every benchmark published by voice AI providers assumes a fibre connection with 100Mbps or more. That assumption does not hold across significant parts of the UK and Ireland, and ignoring it leads to poor deployment decisions.

Minimum Requirements and Real-World Performance

Low-latency voice AI requires a stable upload and download speed of at least 5Mbps. Below that threshold, the STT pipeline introduces delays that become audible to the caller and undermine the experience. On a 4G connection with typical rural signal variation, the latency can spike from 600ms to over 2,000ms during peak usage periods, which is functionally unusable for customer-facing applications.

The rural broadband gap remains a genuine issue across Northern Ireland, the west of Ireland, the Scottish Highlands, and large parts of Wales and the English countryside. While the UK government’s Project Gigabit programme is extending full-fibre coverage, full rural penetration is years away. Businesses in these areas need to assess their actual connection quality before committing to a cloud-dependent voice AI stack.
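A simple way to assess a site before committing is to run a batch of test calls, record the end-to-end response time of each turn, and check both bandwidth and the slow tail of those response times. The sketch below uses the 5Mbps minimum from this section; the 900 ms ceiling on the 95th-percentile response time is an assumption taken from the top of the latency range quoted earlier, and the sample measurements are hypothetical.

```python
from statistics import quantiles

MIN_BANDWIDTH_MBPS = 5.0       # minimum from the guidance above
MAX_P95_RESPONSE_MS = 900.0    # hypothetical ceiling; tune for your platform

def p95(samples: list[float]) -> float:
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return quantiles(samples, n=20)[-1]

def assess_connection(upload_mbps: float, download_mbps: float, response_ms: list[float]):
    """Return (ready, individual check results) for one site."""
    checks = {
        "upload": upload_mbps >= MIN_BANDWIDTH_MBPS,
        "download": download_mbps >= MIN_BANDWIDTH_MBPS,
        "latency_p95": p95(response_ms) <= MAX_P95_RESPONSE_MS,
    }
    return all(checks.values()), checks

# Hypothetical measurements: a fibre office and a rural site with 4G spikes.
ok_fibre, _ = assess_connection(40.0, 80.0, [600.0] * 20)
ok_rural, checks_rural = assess_connection(3.5, 12.0, [600.0] * 15 + [2100.0] * 5)
```

Looking at the 95th percentile rather than the average matters here: a connection that is usually fine but spikes to two seconds on one call in five will still feel broken to those callers.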

Edge Computing as a Partial Solution

Edge computing moves part of the AI processing closer to the device rather than routing all audio to a remote server. For voice applications, on-device STT models can transcribe speech locally and send text rather than audio to the cloud, reducing both latency and bandwidth requirements substantially.
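A rough calculation shows why sending text instead of audio saves so much bandwidth. The figures below are illustrative assumptions: uncompressed 16 kHz, 16-bit mono PCM audio (a common input format for speech models), about 150 spoken words per minute, and roughly 6 bytes of text per word including the space.

```python
# Illustrative assumptions, not measurements.
SAMPLE_RATE_HZ = 16_000
BITS_PER_SAMPLE = 16
WORDS_PER_MINUTE = 150
BYTES_PER_WORD = 6

audio_bps = SAMPLE_RATE_HZ * BITS_PER_SAMPLE            # bits per second of raw audio
text_bps = WORDS_PER_MINUTE / 60 * BYTES_PER_WORD * 8   # bits per second of transcript

print(f"audio: {audio_bps / 1000:.0f} kbps, text: {text_bps:.0f} bps, "
      f"ratio ~{audio_bps / text_bps:,.0f}x")
# prints: audio: 256 kbps, text: 120 bps, ratio ~2,133x
```

Compressed audio codecs narrow the gap considerably, but a transcript stream remains orders of magnitude smaller than audio, which is why local transcription helps most on weak or congested connections.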

Whisper v3, running locally on a modern laptop or server, can transcribe in near-real time, so only text needs to cross the network and even a 2Mbps connection is sufficient. This approach sacrifices some of the agentic capability available through fully cloud-connected platforms, but it is a viable option for businesses in areas where connectivity is inconsistent. Vendors, including Vapi, offer hybrid deployment models that use local STT with cloud-based reasoning, which represents a reasonable compromise for most rural use cases.

Northern Ireland and the Island of Ireland Context

Northern Ireland presents an additional consideration: cross-border operations with the Republic of Ireland are common across sectors, including tourism, agriculture, logistics, and professional services. Voice AI deployed in a cross-border context must handle both Hiberno-English and Northern Irish dialect patterns, operate within two separate regulatory frameworks, and potentially process data under both UK GDPR and EU GDPR, depending on where customers are located.

With tourism being one of the major economic drivers for Northern Ireland, it is worth noting that visitors from across the island and beyond increasingly expect voice-enabled services. Connolly Cove’s guide to top cities in Northern Ireland illustrates the range of visitor touchpoints where voice AI can meaningfully improve the customer experience, from hotel check-in to visitor information.

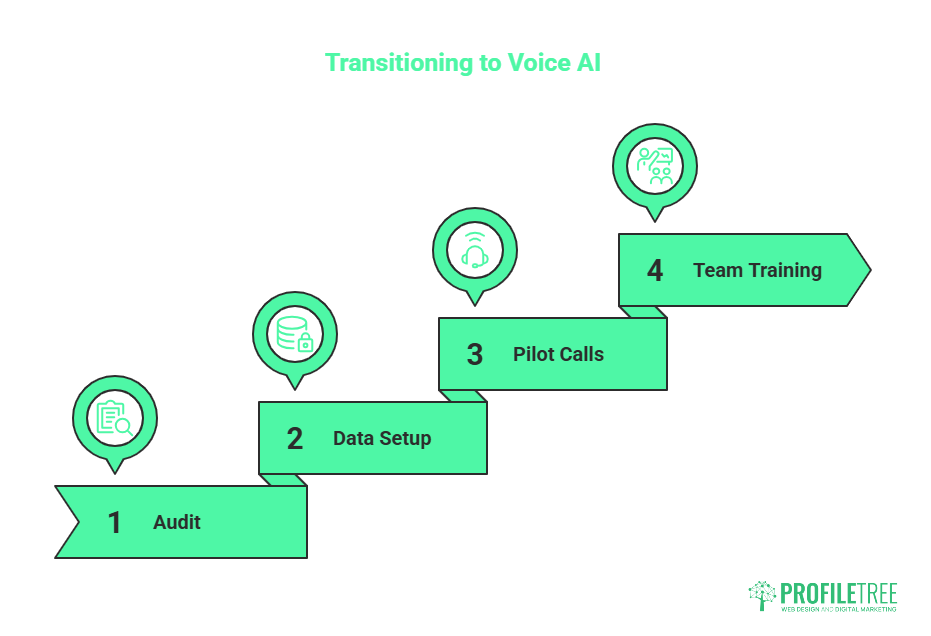

Implementation Checklist: Transitioning Your Team to Voice AI

The platforms are mature enough to deploy. The regulatory picture is clear enough to navigate. What typically holds businesses back is the implementation process: understanding what to build, in what order, with what safeguards. The following framework draws on real-world deployment patterns rather than vendor promises.

Phase One: Audit Before You Build

Start with your inbound call or interaction data. Identify the ten most common reasons people contact your business by phone or chat. Of those ten, how many involve a task that follows a predictable pattern? Appointment booking, order status checks, FAQ responses, and basic account queries are all strong candidates for voice AI automation. Sales conversations, complaint resolution, and technically complex support calls are not, at least not without a human-in-the-loop handoff protocol.

This audit also surfaces the accent and dialect profile of your customer base. If 60% of your callers are from Belfast and 30% are from Dublin, that is the accent matrix your STT model needs to handle reliably before you go live.
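Both parts of the audit can come from the same call log. A minimal sketch, assuming each logged contact has a reason code and a caller region; the log rows and the set of automatable reasons below are hypothetical examples based on the categories named in this phase.

```python
from collections import Counter

# Hypothetical call log rows: (contact reason, caller region).
call_log = [
    ("order status", "Belfast"), ("order status", "Dublin"),
    ("book appointment", "Belfast"), ("complaint", "Belfast"),
    ("order status", "Belfast"), ("book appointment", "Dublin"),
    ("technical fault", "Glasgow"), ("faq: opening hours", "Belfast"),
    ("order status", "Belfast"), ("book appointment", "Belfast"),
]

# Reasons that follow a predictable pattern and suit automation.
AUTOMATABLE = {"order status", "book appointment", "faq: opening hours"}

reasons = Counter(reason for reason, _ in call_log)
regions = Counter(region for _, region in call_log)
total = len(call_log)

top_reasons = reasons.most_common(10)  # the "ten most common reasons"
automatable_share = sum(n for r, n in reasons.items() if r in AUTOMATABLE) / total
accent_mix = {region: n / total for region, n in regions.most_common()}
```

In this toy log, 80% of contacts are automatable candidates and the accent mix is 70% Belfast, 20% Dublin and 10% Glasgow, which tells you which dialects to prioritise in testing.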

Businesses that have already invested in AI adoption for SMEs typically find that the audit phase surfaces far more opportunities than they expected, alongside a clearer picture of where human oversight is non-negotiable.

Phase Two: Data and Compliance Setup

Before writing a single line of configuration, resolve the compliance questions. Confirm your chosen platform’s data residency. Sign a Data Processing Agreement. Write the call notification script that informs callers they are speaking with an AI system. Define your data retention policy for transcripts and recordings. If you are operating in or serving customers in the Republic of Ireland, document your EU AI Act assessment for the system’s risk classification.

This step is tedious, and it feels like it is slowing things down, but if you skip it, you will spend significantly more time unwinding compliance failures after launch.
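One way to stop the compliance steps from being skipped is to treat them as a go-live gate that must be clear before any live calls are routed to the agent. A minimal sketch, assuming the five items listed in this phase; the field names are hypothetical, and ticking a box is no substitute for the underlying legal work.

```python
from dataclasses import dataclass, fields

@dataclass
class ComplianceChecklist:
    data_residency_confirmed: bool = False
    dpa_signed: bool = False
    ai_disclosure_script_approved: bool = False
    retention_policy_defined: bool = False
    eu_ai_act_assessment_documented: bool = False  # needed if serving ROI customers

    def outstanding(self, serves_eu_customers: bool) -> list[str]:
        """Names of checks that still block go-live."""
        missing = []
        for f in fields(self):
            if f.name == "eu_ai_act_assessment_documented" and not serves_eu_customers:
                continue  # only required when serving customers in the Republic of Ireland
            if not getattr(self, f.name):
                missing.append(f.name)
        return missing

checklist = ComplianceChecklist(data_residency_confirmed=True, dpa_signed=True)
blockers = checklist.outstanding(serves_eu_customers=True)
print("Ready to launch" if not blockers else f"Blocked by: {', '.join(blockers)}")
```

Wiring a check like this into the deployment process makes the gate visible to everyone on the project rather than living in one person's inbox.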

Phase Three: Pilot With Real Calls

Run a parallel pilot where the voice AI handles a subset of inbound calls alongside your human team. Set a clear success threshold (for example, the share of calls resolved without human escalation) and define what “successful” means for your specific use cases. Give the pilot at least four weeks to generate statistically meaningful data across different times of day, call types, and caller profiles.
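Breaking the pilot results down by segment shows where the agent falls short, rather than hiding weak spots inside one overall average. A minimal sketch, assuming each pilot call is logged with a segment label and whether it was resolved without escalation; the nine calls and the 70% threshold are hypothetical, and a real pilot needs far more calls per segment.

```python
from collections import defaultdict

# Hypothetical pilot outcomes: (segment, resolved_without_escalation).
pilot = [
    ("weekday_am", True), ("weekday_am", True), ("weekday_am", False),
    ("weekday_pm", True), ("weekday_pm", True), ("weekday_pm", True),
    ("weekend", True), ("weekend", False), ("weekend", False),
]
THRESHOLD = 0.7  # hypothetical target: 70% resolved without a human

def resolution_rates(outcomes):
    """Share of calls resolved without escalation, per segment."""
    totals, resolved = defaultdict(int), defaultdict(int)
    for segment, ok in outcomes:
        totals[segment] += 1
        resolved[segment] += ok  # True counts as 1, False as 0
    return {s: resolved[s] / totals[s] for s in totals}

rates = resolution_rates(pilot)
below_target = sorted(s for s, r in rates.items() if r < THRESHOLD)
```

The same grouping works for caller region or call type, which links the pilot results back to the accent matrix from the audit phase.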

Use the pilot data to refine the phoneme configuration, adjust the interruption handling settings, and identify the escalation triggers that should always transfer to a human agent. A well-run pilot saves months of post-launch remediation.
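Escalation triggers are easiest to review and adjust when they live in one explicit rule set. The sketch below is an illustration under stated assumptions: the phrases, intent labels, 0.6 transcription-confidence floor and two-failed-turns limit are all hypothetical values to be replaced with those found in your own pilot transcripts.

```python
import re

# Hypothetical triggers; build the real list from pilot transcripts.
ALWAYS_ESCALATE_PHRASES = [
    r"\bcomplain(t|ts|ing)?\b",
    r"\b(speak|talk) to (someone|a (human|person))\b",
    r"\bsolicitor\b",
]
ALWAYS_ESCALATE_INTENTS = {"complaint", "bereavement", "vulnerable_customer"}
MIN_STT_CONFIDENCE = 0.6

def should_escalate(transcript: str, intent: str, stt_confidence: float, failed_turns: int) -> bool:
    """Return True if the call should be transferred to a human agent."""
    text = transcript.lower()
    if intent in ALWAYS_ESCALATE_INTENTS:
        return True
    if any(re.search(p, text) for p in ALWAYS_ESCALATE_PHRASES):
        return True
    if stt_confidence < MIN_STT_CONFIDENCE:  # likely misheard: strong accent or poor line
        return True
    return failed_turns >= 2  # the agent has already failed to resolve the request twice
```

Treating low transcription confidence as a trigger in its own right gives callers with strong regional accents a route to a person instead of a loop of misunderstood answers.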

Phase Four: Team Training and Change Management

The people most affected by voice AI deployment are usually the ones handling calls today. Framing the technology as replacing them is both inaccurate and counterproductive; the reality is that it removes repetitive, low-value interactions and frees staff for complex, relationship-driven work. That message needs to come from leadership and be backed by a clear picture of what the team’s role looks like after deployment.

ProfileTree offers digital skills training that helps teams understand and work alongside AI tools rather than being displaced by them. Change management is consistently underestimated in technology implementations, and voice AI is no exception.

Ciaran Connolly, founder of ProfileTree, puts it plainly: “The businesses that get the most from voice AI are the ones that treat it as a team capability upgrade rather than a headcount reduction exercise. The technology works best when your people understand it and trust it.”

Voice AI and SEO: What Voice Search Means for Your Website

Voice AI deployments are not isolated from your broader digital presence. Voice search queries, whether through a smart speaker, a phone assistant, or an embedded business chatbot, draw on structured data, local business listings, and FAQ content on your website to generate responses.

Implementing Speakable Schema (Schema.org/Speakable) marks specific sections of your content as suitable for voice extraction, increasing the likelihood of your pages being cited in AI Overview results and voice search responses. Structured FAQs with direct, concise answers, ideally in the 40 to 60 word range, are the most commonly cited format in both Google AI Overviews and Bing’s AI search summaries. For businesses investing in AI for business continuity, ensuring the voice and search layers of your digital presence are aligned is a natural next step.
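Both pieces of markup are usually published as JSON-LD in a `<script type="application/ld+json">` tag. The sketch below generates a WebPage with a Schema.org `speakable` property (a `SpeakableSpecification` using CSS selectors) and an `FAQPage`, and flags answers outside the 40 to 60 word range suggested above. The URL, page name and CSS selectors are hypothetical; validate the output with a structured data testing tool before publishing.

```python
import json

def speakable_page(url: str, name: str, css_selectors: list[str]) -> dict:
    """WebPage JSON-LD marking the given CSS-selected sections as speakable."""
    return {
        "@context": "https://schema.org",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {"@type": "SpeakableSpecification", "cssSelector": css_selectors},
    }

def faq_page(pairs: list[tuple[str, str]], min_words: int = 40, max_words: int = 60):
    """FAQPage JSON-LD plus a list of questions whose answers fall outside the word range."""
    entities, out_of_range = [], []
    for question, answer in pairs:
        words = len(answer.split())
        if not min_words <= words <= max_words:
            out_of_range.append((question, words))
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    return {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": entities}, out_of_range

page = speakable_page("https://www.example.com/voice-ai", "Voice AI guide", [".summary", ".faq-answer"])
faq, warnings = faq_page([(
    "Is it legal to record voice AI calls in the UK?",
    "Only with notification. Callers must be told at the start of the call.",
)])
print(json.dumps(page, indent=2))
print(warnings)  # the sample answer is only 13 words, so it is flagged
```

Generating the markup from the same source as the visible FAQ text keeps the two in sync when answers are edited.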

Conclusion

Voice-activated AI has moved well past the novelty stage. For UK and Irish businesses, the practical question is no longer whether to deploy it but how to do so with the right platform, within the correct legal framework, and with a team that understands what the technology can and cannot do.

ProfileTree works with SMEs across Northern Ireland, Ireland, and the UK to plan and implement AI tools that deliver measurable results. Get in touch with our team and explore AI implementation to find out where voice AI fits in your business.

FAQs

Can AI voice agents understand thick UK or Irish accents?

Yes, but accuracy varies by platform and configuration. Models such as OpenAI Whisper v3 can be fine-tuned on dialect-specific audio, improving accuracy for accent profiles including Belfast, Hiberno-English, Glaswegian, and Geordie.

What is the difference between a voice assistant and an agentic voice AI?

A voice assistant retrieves information or triggers pre-built responses to single-turn queries. An agentic voice AI uses a large language model to reason across multiple steps and connects to external systems via APIs to take real actions, such as booking appointments, updating records, and placing orders.

Is it legal to record voice AI calls in the UK?

Recording calls without notification is generally unlawful under UK GDPR. Businesses must inform callers at the start of the interaction that the call is being recorded and that it is being handled by an AI system. The most commonly used lawful basis is “legitimate interest,” but this requires a documented balancing test. Data retention periods for recordings and transcripts must also be defined and enforced.

What is the best free voice-activated AI for business use?

OpenAI’s Realtime API is usage-based rather than free, but low-volume testing costs little, and Google Gemini Live is available within standard Google Workspace accounts. Both are reasonable for low-volume testing. For production use, neither entry-level setup provides the call volume, SLA guarantees, or data residency options that most business deployments require.

How much does it cost to implement a voice AI agent?

Platform costs typically range from £0.03 to £0.10 per minute of call time, depending on the provider and configuration. Development and integration costs vary widely: a simple FAQ-handling agent can be configured in days; a fully integrated system connected to CRM, billing, and scheduling platforms requires weeks of development work.