Optimise Video Captions and In-Video Text for Platform Algorithms

Video platforms use captions and on-screen text as primary signals for content categorisation and distribution. When YouTube, Instagram, TikTok, or LinkedIn processes uploaded videos, algorithms extract text from captions, descriptions, and visible on-screen elements to determine relevance, searchability, and suggested placement. Business owners who ignore this technical reality miss opportunities for organic reach.

UK businesses investing in video marketing face intense competition for attention. A product demonstration video with poor caption optimisation might reach 500 people, whilst a competitor’s properly optimised content reaches 50,000. The difference comes down to understanding how platforms read, interpret, and rank video text elements.

ProfileTree’s video marketing services help SMBs across Northern Ireland, Ireland, and the UK create content that performs technically as well as creatively. Through strategic caption writing and on-screen text placement, businesses increase discoverability without additional advertising spend.

Why Platform Algorithms Read Video Captions

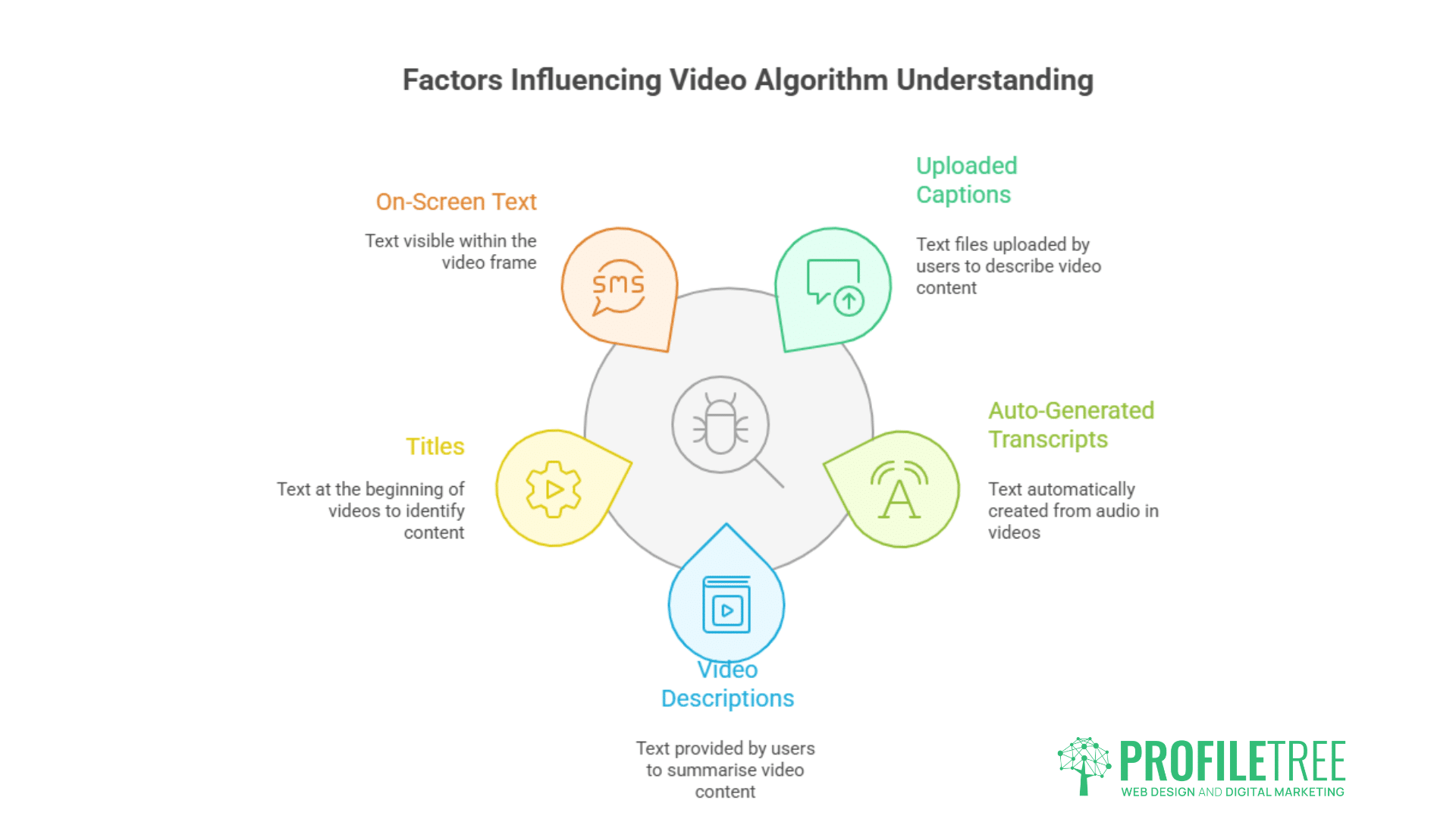

Video platforms analyse multiple text signals to understand content: uploaded captions, auto-generated transcripts, video descriptions, titles, and visible on-screen text. These textual elements provide machine-readable data that algorithms use for categorisation, search indexing, and content recommendation.

YouTube processes uploaded caption files through natural language processing to identify topics, entities, and context. When someone searches “how to fix WordPress errors”, YouTube matches that query against caption text from millions of videos. Accurate, keyword-rich captions increase the likelihood of appearing in relevant searches.

Instagram and TikTok analyse both captions and on-screen text through optical character recognition. A cooking video with recipe ingredients visible on screen provides additional context signals that help algorithms categorise content and show it to food-interested audiences.

LinkedIn prioritises professional content partly based on industry-specific terminology in captions. Business services firms discussing “B2B lead generation” or “enterprise software” benefit from algorithm recognition of these professional terms.

Optimise Video Captions: Types and Impact

Different caption formats provide varying levels of algorithmic value.

SRT Files

SubRip format remains the most widely supported caption standard.

SRT files contain timestamped text that platforms read sequentially. YouTube extracts complete transcripts from SRT uploads, indexing every word for search. The format’s simplicity means fewer technical errors during processing, though it lacks styling options for emphasising key terms.

Business owners should write SRT captions with natural language that includes relevant keywords without forced repetition. A Belfast retailer discussing “sustainable fashion Northern Ireland” should mention those terms when contextually appropriate rather than stuffing every caption line with keywords.
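To make the format concrete, here is a minimal Python sketch of how timestamped SRT text can be read into structured cues. The sample captions and the `Cue`, `to_seconds`, and `parse_srt` names are illustrative assumptions, not part of any platform's tooling, and real files may need extra handling (byte-order marks, Windows line endings).

```python
import re
from dataclasses import dataclass


@dataclass
class Cue:
    index: int
    start: float  # seconds from the start of the video
    end: float    # seconds from the start of the video
    text: str


_TIME = re.compile(r"(\d+):(\d{2}):(\d{2})[,.](\d{3})")


def to_seconds(stamp: str) -> float:
    """Convert an SRT timestamp such as 00:00:04,500 to seconds."""
    match = _TIME.fullmatch(stamp.strip())
    if match is None:
        raise ValueError(f"Unrecognised timestamp: {stamp!r}")
    hours, minutes, seconds, millis = (int(part) for part in match.groups())
    return hours * 3600 + minutes * 60 + seconds + millis / 1000


def parse_srt(srt_text: str) -> list[Cue]:
    """Split SRT text into cues; cue blocks are separated by blank lines."""
    cues = []
    for block in re.split(r"\n\s*\n", srt_text.strip()):
        lines = block.strip().splitlines()
        if len(lines) < 3:
            continue  # skip malformed blocks rather than guessing
        start, end = lines[1].split("-->")
        text = " ".join(lines[2:])
        cues.append(Cue(int(lines[0]), to_seconds(start), to_seconds(end), text))
    return cues


# Hypothetical sample captions for a Belfast retailer.
SAMPLE_SRT = """1
00:00:00,000 --> 00:00:04,500
Welcome to our guide to sustainable fashion in Northern Ireland.

2
00:00:04,500 --> 00:00:09,000
Today we look at three Belfast retailers leading the way.
"""

cues = parse_srt(SAMPLE_SRT)
print(len(cues), cues[0].start, cues[0].end)  # prints: 2 0.0 4.5
```

Each cue is a sequence number, a start and end time, and one or more lines of text, which is why the format is easy to process and hard to get wrong.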

VTT Files

WebVTT format offers styling and positioning controls.

VTT files support text formatting like bold and italics, allowing creators to emphasise important terms that algorithms might weight more heavily. The format also enables caption positioning, keeping text away from important visual elements whilst remaining readable.

Platforms may treat VTT formatting as a metadata signal, with bold text hinting at topic importance. However, YouTube and other platforms have not publicly confirmed any weighting difference between formatted and plain caption text, so any ranking benefit from formatting is unconfirmed.
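For businesses that already have SRT files, the conversion to WebVTT is mostly mechanical. The sketch below, in Python, adds the required `WEBVTT` header and swaps the comma before the milliseconds for a full stop. The `srt_to_vtt` name and the sample caption are assumptions for illustration; styling and positioning would still need to be added by hand.

```python
import re


def srt_to_vtt(srt_text: str) -> str:
    """Convert SRT caption text to basic WebVTT.

    WebVTT files start with a "WEBVTT" header line and use a full stop,
    not a comma, before the milliseconds in each timestamp.
    """
    body = re.sub(r"(\d{2}:\d{2}:\d{2}),(\d{3})", r"\1.\2", srt_text.strip())
    return "WEBVTT\n\n" + body + "\n"


# Hypothetical single-cue SRT input.
SAMPLE = """1
00:00:00,000 --> 00:00:04,000
Responsive layouts keep your site usable on mobile.
"""

print(srt_to_vtt(SAMPLE))
```

The SRT sequence numbers can stay in place, since WebVTT accepts an optional identifier line before each cue's timing line.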

Auto-Generated Captions

Platform-created transcripts provide baseline accessibility but limited optimisation control.

YouTube’s automatic speech recognition creates captions from audio with approximately 80% accuracy for clear English speech. Irish and Northern Irish accents, technical terminology, and brand names frequently produce errors that hurt searchability.

A Dublin software company discussing “SAAS platforms” might have auto-captions transcribe this as “sass platforms” or “sales platforms”, causing the video to miss relevant searches. Uploading corrected caption files fixes these errors and improves algorithmic understanding.

Strategic Caption Writing for Algorithms

Effective captions balance human readability with machine processing requirements.

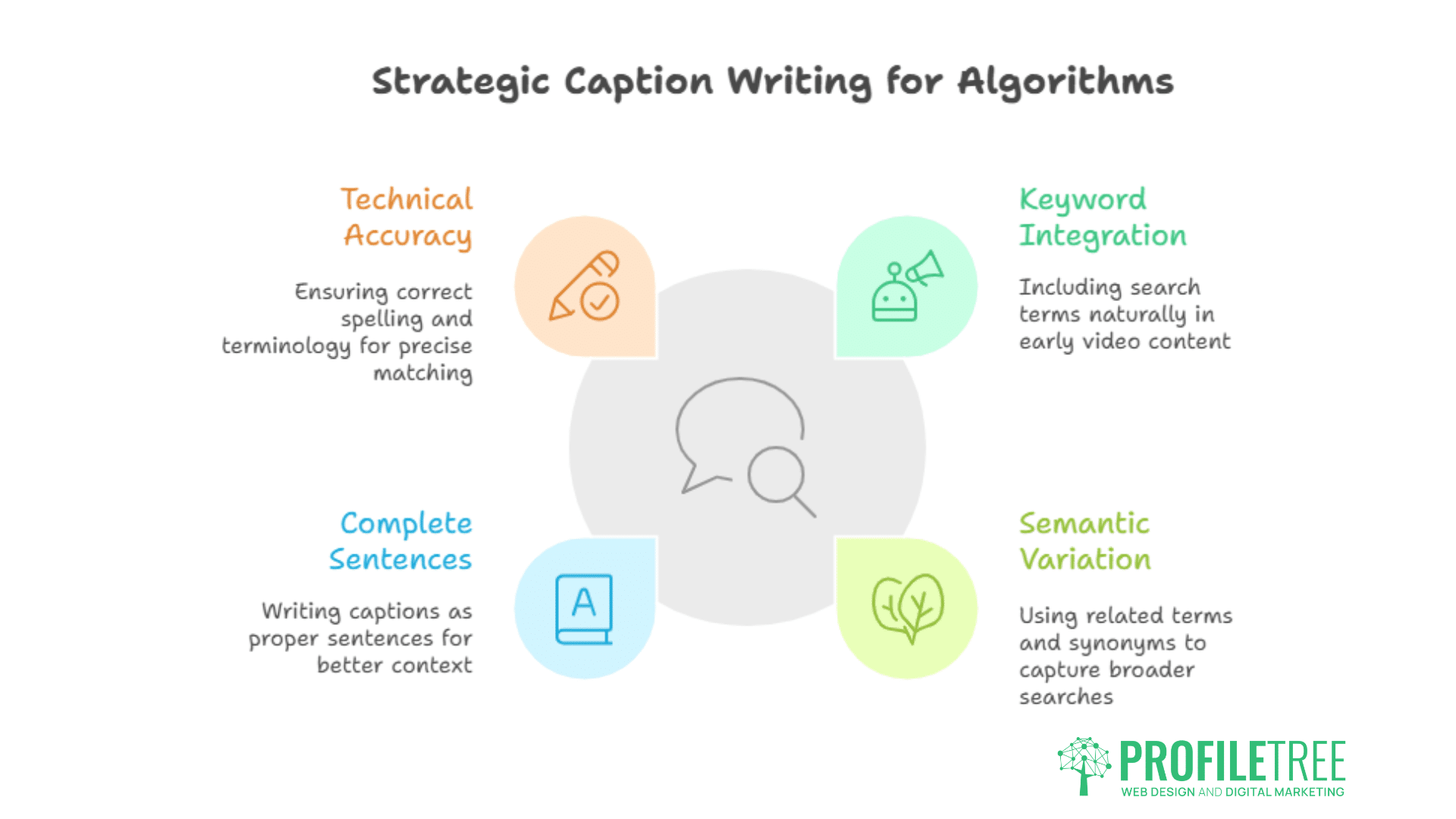

Keyword Integration

Include search terms naturally within the first 30 seconds of video content.

Algorithms place higher weight on words appearing early in captions. Business owners should mention primary topics and keywords within opening statements rather than waiting until mid-video. A marketing agency discussing “digital strategy for SMEs” benefits from stating this phrase early rather than circling the topic for two minutes.

Repeat core terms 3-5 times throughout longer videos without awkward forcing. Natural conversation that returns to main points provides multiple algorithmic signals whilst remaining viewer-friendly. A 10-minute video about web design might mention “responsive layouts”, “mobile-friendly design”, and “user experience” at different points, creating varied keyword signals.
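One way to check a draft against these guidelines is to count keyword mentions and note whether each term appears in the opening 30 seconds. The Python sketch below assumes captions are already available as (start time, text) pairs. The `keyword_report` function, the sample cues, and the 30-second default are illustrative choices drawn from the guidance above, not a platform rule.

```python
import re


def keyword_report(cues, keywords, early_window=30.0):
    """Count each keyword across all cues and note whether it appears early.

    cues: iterable of (start_seconds, text) pairs.
    Returns {keyword: {"count": int, "early": bool}}.
    """
    report = {}
    for keyword in keywords:
        # Whole-phrase, case-insensitive match.
        pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
        count = 0
        early = False
        for start, text in cues:
            hits = len(pattern.findall(text))
            count += hits
            if hits and start < early_window:
                early = True
        report[keyword] = {"count": count, "early": early}
    return report


# Hypothetical captions for a marketing agency video.
SAMPLE_CUES = [
    (0.0, "This guide covers digital strategy for SMEs."),
    (45.0, "Responsive layouts support any digital strategy."),
    (120.0, "Good user experience keeps your digital strategy on track."),
]

for term, stats in keyword_report(SAMPLE_CUES, ["digital strategy", "user experience"]).items():
    print(term, stats)
```

In this sample, "digital strategy" is mentioned three times including once in the opening window, whilst "user experience" appears only at the two-minute mark. Phrases split across two cues are not matched by this simple check.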

Semantic Variation

Use related terms and synonyms to capture broader search queries.

Platform algorithms understand semantic relationships between terms. Videos about “search engine optimisation” should also mention “SEO”, “Google rankings”, “organic search”, and “website visibility”. This variety helps content appear in multiple related searches without keyword stuffing.

Local businesses benefit from geographic variation. A Belfast company should mention “Northern Ireland”, “UK”, “Belfast”, and specific areas like “Titanic Quarter” or “Cathedral Quarter” where relevant. This geographic layering helps videos appear in both broad and specific location-based searches.

Complete Sentences

Write captions as proper sentences rather than fragmented phrases.

Algorithms built on natural language processing favour full sentences over keyword lists. Captions like “web design tips small business cost effective” provide weaker signals than “These web design tips help small businesses create cost-effective websites.” Full sentences with proper grammar give algorithms better context for understanding content.

Sentence structure also affects accessibility. Screen readers and automated translation tools work better with grammatically correct captions, expanding potential audiences whilst maintaining algorithmic favour.

Technical Accuracy

Correct spelling and terminology matter more than casual writing suggests.

Misspelt keywords prevent algorithmic matching. A video about “content managment systems” (management spelt incorrectly) misses searches for “content management systems”. Brand names and product terminology require particular attention since algorithms match exact spellings for proper nouns.

Industry-specific terms need consistency. Videos discussing WordPress should not alternate between “wordpress”, “WordPress”, and “Word Press”. Standardised capitalisation helps algorithms recognise entities correctly.
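A quick consistency check can surface these variants before a caption file is uploaded. The Python sketch below counts every spelling that matches a canonical term once case and internal spaces are ignored. The `find_variants` helper and the sample text are hypothetical, and the approach is deliberately simple: it will not catch misspellings such as a dropped letter.

```python
import re
from collections import Counter


def find_variants(text: str, canonical: str) -> Counter:
    """Count spellings that match `canonical` when case and spaces are ignored."""
    # Allow an optional whitespace character between each letter of the term.
    pattern = re.compile(
        r"\b" + r"\s?".join(re.escape(ch) for ch in canonical) + r"\b",
        re.IGNORECASE,
    )
    return Counter(pattern.findall(text))


# Hypothetical caption text with inconsistent capitalisation and spacing.
SAMPLE_TEXT = "We build on wordpress. WordPress themes vary. Word Press plugins help."

variants = find_variants(SAMPLE_TEXT, "WordPress")
inconsistent = {spelling: n for spelling, n in variants.items() if spelling != "WordPress"}
print(inconsistent)  # prints: {'wordpress': 1, 'Word Press': 1}
```

Any spelling reported in `inconsistent` is a candidate for replacement with the standard form.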

On-Screen Text Optimisation

Visible text in video frames provides additional algorithmic signals through OCR processing.

Text Placement and Duration

Position on-screen text clearly and keep it visible long enough for algorithmic capture.

Platform OCR systems scan video frames for text extraction. Text appearing for less than 2 seconds may not register during processing. Business owners should display key terms and phrases for a minimum of 3-second durations to increase recognition reliability.

Text clarity affects extraction accuracy. Small fonts, low contrast, or stylised typography reduce OCR success rates. Sans-serif fonts in high contrast colours (black on white, white on dark backgrounds) provide the best results. A product video with tiny script fonts for pricing information might have that data ignored by algorithms.

Title Cards and Lower Thirds

Strategic text graphics create strong topical signals.

Opening title cards should contain primary keywords and topics. A video titled “Email Marketing Strategies for UK Retailers” benefits from displaying this text on screen during the introduction. The combination of spoken words (in captions), visible text (via OCR), and video title creates multiple reinforcing signals.

Lower thirds identifying speakers should include relevant credentials. “Jane Smith, Digital Marketing Director” provides more algorithmic value than just “Jane Smith”. Professional titles help algorithms categorise content as business or educational rather than entertainment.

Animated Text

Moving text must remain readable during motion for OCR capture.

Fast animation reduces text recognition accuracy. Keywords that fly across the screen in one second provide weaker signals than text that animates in, holds for several seconds, then animates out. Motion blur from rapid animation further degrades OCR capability.

Hold animated text static for 3-5 seconds at full size before transitioning. This window allows platform systems to capture and process text content without motion interference.

Platform-Specific Optimisation

Different platforms process video text through distinct systems and priorities.

YouTube

Google-owned YouTube provides the most sophisticated caption indexing.

YouTube treats captions as searchable text equivalent to webpage content. Upload SRT or VTT files for maximum control over indexed text. The platform’s search algorithm matches caption content against user queries, making thorough, accurate captions important for discoverability.

Ciaran Connolly, Director of ProfileTree, notes: “YouTube captions work like on-page SEO for websites. Business owners who write strategic captions see measurable increases in organic video views. The platform actively uses this text data for ranking and recommendations.”

Chapter markers with descriptive text improve both user experience and algorithmic understanding. Videos divided into clearly labelled sections help YouTube identify specific topics within longer content, potentially showing different segments for different search queries.
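Chapter markers are typically added as a list of timestamps and titles in the video description. The Python sketch below formats such a list from (start in seconds, title) pairs. The function names and sample chapters are illustrative, and the check that the first chapter starts at 0:00 reflects our understanding of YouTube's requirements, so confirm the current rules in YouTube's help documentation before relying on it.

```python
def format_timestamp(total_seconds: int) -> str:
    """Format seconds as M:SS, or H:MM:SS for videos over an hour."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{seconds:02d}"
    return f"{minutes}:{seconds:02d}"


def format_chapters(chapters) -> str:
    """Build description lines from (start_seconds, title) pairs."""
    ordered = sorted(chapters)
    # Assumption: the chapter list should begin at the start of the video.
    if not ordered or ordered[0][0] != 0:
        raise ValueError("The first chapter should start at 0 seconds.")
    return "\n".join(f"{format_timestamp(start)} {title}" for start, title in ordered)


# Hypothetical chapters for a lead generation video.
SAMPLE_CHAPTERS = [
    (0, "Introduction"),
    (75, "Lead generation tactics"),
    (3725, "Summary"),
]

print(format_chapters(SAMPLE_CHAPTERS))
# prints:
# 0:00 Introduction
# 1:15 Lead generation tactics
# 1:02:05 Summary
```

Descriptive titles matter more than the formatting itself, since the titles are the text that describes each segment.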

Thumbnail text should match the early caption content. Viewers clicking a thumbnail promising “5 Lead Generation Tactics” expect those words to appear in opening captions. Consistency between thumbnail, title, description, and captions strengthens topical signals.

Instagram and Facebook

Meta platforms prioritise engagement signals alongside caption text.

Instagram processes both caption text and on-screen text for content categorisation. Reels showing recipe steps benefit from clear on-screen text listing ingredients. The combination of spoken instructions, visible text, and caption hashtags helps algorithms show content to food-interested audiences.

Facebook auto-generates captions but allows uploaded SRT files. Businesses should upload corrected captions since auto-generated versions often misidentify company names, products, and industry terms. Accurate captions help content appear in Facebook’s video search and recommendation systems.

Both platforms scan for text in the first 3 seconds of video to categorise content quickly. Opening frames should contain clear topic indicators through either spoken captions or on-screen text.

TikTok

TikTok’s algorithm weighs on-screen text heavily for content categorisation.

The platform processes visible text through OCR more aggressively than other platforms. Business owners should use TikTok’s built-in text tools rather than pre-rendered text since native text features integrate better with platform systems.

TikTok auto-captions work reasonably well but miss industry terminology and brand names. Edit auto-generated captions before posting to correct errors. The platform’s search function indexes caption text, making accuracy important for discoverability.

Hashtag text appears in captions and provides explicit topical signals. Use 3-5 relevant hashtags that describe video content accurately, rather than chasing trending but unrelated tags. A Belfast cafe posting food content benefits from #BelfastFood and #NorthernIrelandCafe more than generic #FoodTok if seeking local customers.

LinkedIn

Professional context matters more than pure keyword frequency.

LinkedIn’s algorithm favours business and professional terminology in captions. Videos discussing “enterprise solutions”, “B2B sales”, or “professional development” receive better distribution to relevant audiences than content using casual language.

Upload caption files rather than relying on auto-generation for business content. Technical discussions containing industry acronyms and company names need manual caption accuracy. A video about “GDPR compliance for UK businesses” should have “GDPR” spelt out as “General Data Protection Regulation” at least once for complete algorithmic understanding.

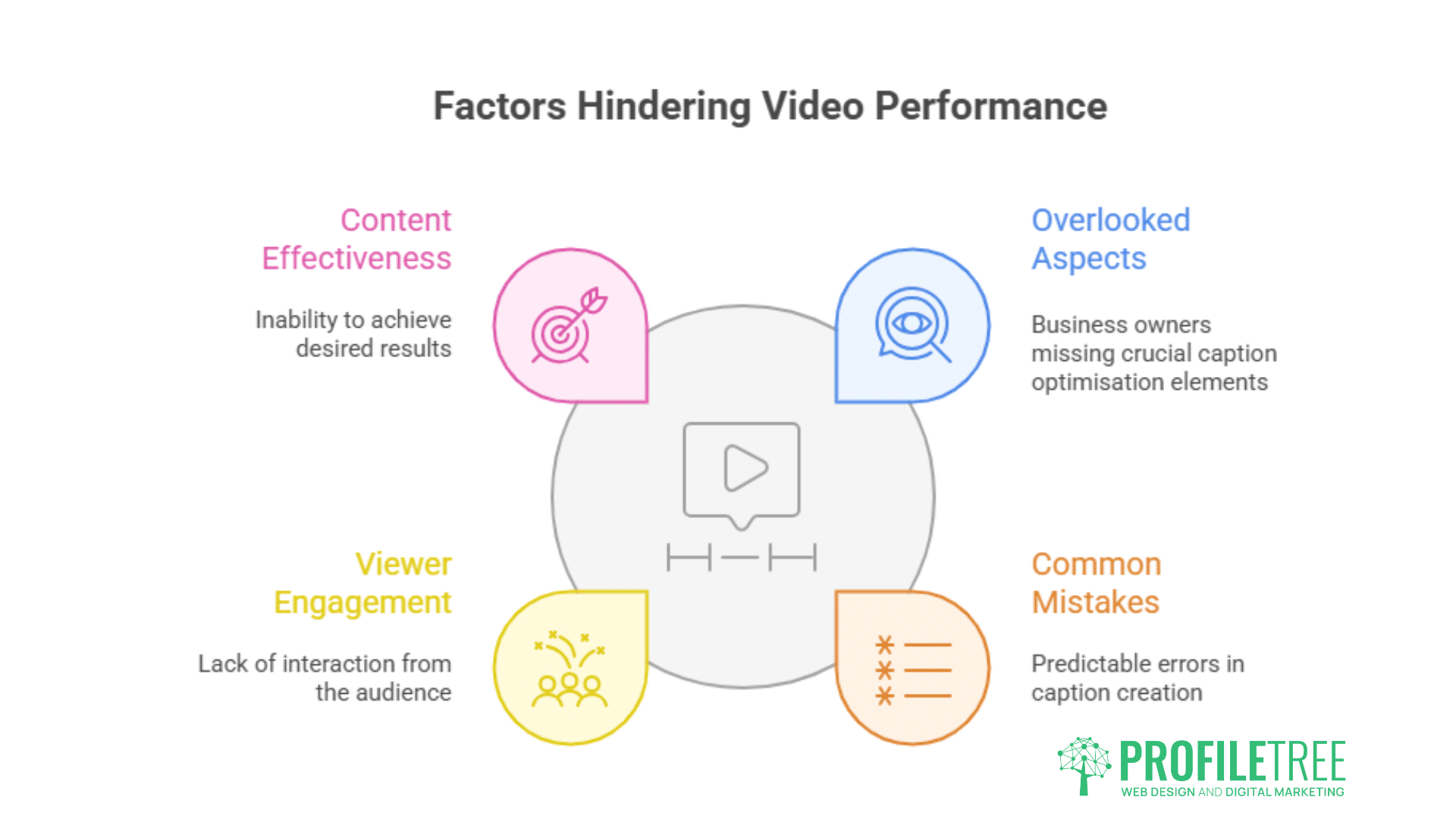

Common Caption Optimisation Mistakes

Business owners frequently make predictable errors that reduce video performance.

Relying Solely on Auto-Generated Captions

Platform-created transcripts contain systematic errors that hurt discoverability.

Auto-captions struggle with accents, proper nouns, and technical terminology. Irish and Northern Irish speakers frequently see their content mis-transcribed. Company names like “ProfileTree” might appear as “profile tree” or “profile three”, preventing brand name searches from finding content.

Download platform auto-captions, correct errors, and re-upload. This workflow takes 10-15 minutes for typical 5-minute videos but significantly improves both accessibility and algorithmic performance. Services like Rev.com provide professional caption editing for businesses without internal resources.
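Recurring errors such as a mis-heard brand name can be corrected in bulk before the manual review. The Python sketch below applies a small glossary of known mis-transcriptions to caption text. The `CORRECTIONS` glossary and `apply_corrections` function are hypothetical examples, and a human read-through is still needed because a glossary only fixes errors that have been seen before.

```python
import re

# Hypothetical glossary: mis-transcription -> intended term.
CORRECTIONS = {
    "profile tree": "ProfileTree",
    "profile three": "ProfileTree",
    "sass platforms": "SaaS platforms",
}


def apply_corrections(text: str, corrections: dict[str, str]) -> str:
    """Replace known mis-transcriptions, matching whole words case-insensitively."""
    for wrong, right in corrections.items():
        pattern = re.compile(r"\b" + re.escape(wrong) + r"\b", re.IGNORECASE)
        # A function replacement avoids backslash handling in the new text.
        text = pattern.sub(lambda _match, right=right: right, text)
    return text


print(apply_corrections("Profile tree reviews sass platforms.", CORRECTIONS))
# prints: ProfileTree reviews SaaS platforms.
```

Keep glossary entries specific. A broad entry such as "sales platforms" would also rewrite captions where the speaker genuinely said those words.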

Keyword Stuffing

Forced, unnatural keyword repetition hurts rather than helps.

Platform algorithms detect and penalise obvious keyword stuffing. Captions reading “best web design, best web design services, best web design companies” provide a poor user experience and weak topical signals. Algorithms favour natural language over keyword density.

Write captions that sound natural when read aloud. If the caption text sounds awkward or robotic in conversation, rewrite for naturalness whilst maintaining keyword presence.

Ignoring Caption Timing

Poorly timed captions reduce engagement and algorithmic favour.

Captions appearing before or after corresponding audio confuse viewers and signal technical problems to platforms. Captions should sync within 0.5 seconds of spoken words. Caption software like Subtitle Edit allows frame-accurate timing adjustments.

Caption display duration affects readability. Text should remain on screen long enough for comfortable reading, typically 3-7 seconds per sentence, depending on length. Captions that flash by too quickly hurt accessibility and reduce the viewer retention metrics that algorithms track.
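Display durations are easy to audit once captions are in a structured form. The Python sketch below flags cues that fall outside a chosen range, using the 3-7 second guideline above as default thresholds. The `flag_cue_durations` function and the sample cues are illustrative, and the thresholds are a starting point rather than a platform requirement, since a one-word caption can reasonably be shorter.

```python
def flag_cue_durations(cues, min_seconds=3.0, max_seconds=7.0):
    """Return (cue number, duration, text) for cues outside the display range.

    cues: iterable of (start_seconds, end_seconds, text) tuples.
    """
    flagged = []
    for number, (start, end, text) in enumerate(cues, start=1):
        duration = end - start
        if duration < min_seconds or duration > max_seconds:
            flagged.append((number, round(duration, 3), text))
    return flagged


# Hypothetical cues: one too brief, one comfortable, one too long.
SAMPLE_CUES = [
    (0.0, 1.2, "Welcome."),
    (1.2, 5.7, "Today we cover email marketing for UK retailers."),
    (5.7, 14.0, "First, segment your subscriber list by purchase history."),
]

for number, duration, text in flag_cue_durations(SAMPLE_CUES):
    print(f"Cue {number}: {duration}s - {text}")
```

In this sample, cues 1 and 3 are flagged for review, whilst cue 2 sits within the range at 4.5 seconds.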

Neglecting On-Screen Text Contrast

Low-visibility text fails both human viewers and OCR systems.

Light grey text on white backgrounds or dark blue on black provides insufficient contrast for reliable OCR processing. Test on-screen text visibility by converting video frames to greyscale. If text disappears in greyscale, it likely provides weak algorithmic signals.

Add drop shadows or background boxes behind on-screen text to separate it from background video elements. This simple technique improves both human readability and machine processing accuracy.
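Contrast can also be estimated numerically before rendering. The Python sketch below swaps the greyscale test for a different technique: the contrast ratio defined in the Web Content Accessibility Guidelines (WCAG 2), calculated from the text and background colours. Using this ratio as a proxy for OCR reliability is our assumption, the function names are illustrative, and platforms do not publish the thresholds their systems use.

```python
def relative_luminance(rgb):
    """Relative luminance of an sRGB colour given as (R, G, B) in 0-255."""
    def channel(value):
        c = value / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (channel(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG 2 contrast ratio, from 1 (identical colours) up to 21 (black on white)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


WHITE = (255, 255, 255)
BLACK = (0, 0, 0)
LIGHT_GREY = (200, 200, 200)  # hypothetical low-contrast text colour

print(round(contrast_ratio(WHITE, BLACK), 1))  # prints: 21.0
print(contrast_ratio(LIGHT_GREY, WHITE) < 4.5)  # prints: True
```

WCAG recommends a ratio of at least 4.5 for normal-sized text, so the light grey on white pairing falls well short. The calculation only covers flat colours, so text over moving video still needs the drop shadow or background box described above.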

Measuring Caption Performance

Analytics reveal whether caption optimisation produces measurable results.

Search Traffic Analysis

Track which search terms drive video views.

YouTube Studio shows search queries that led to video views under “Traffic Source: YouTube Search”. Compare these queries against caption keywords to verify whether optimised terms generate actual traffic. Videos ranking for intended keywords validate the caption strategy, whilst ranking for unrelated terms suggests misalignment between content and captions.

TikTok analytics show search terms under “Video Views” for videos receiving search traffic. Monitor whether business-relevant searches drive views or if traffic comes primarily from the For You page recommendations.

Engagement Rates

Better captions improve viewer retention metrics.

Accurate captions increase watch time since viewers find content that matches their search intent. YouTube’s audience retention graphs show whether viewers watch or abandon videos quickly. Videos with optimised captions typically show higher retention in the first 30 seconds as viewers confirm content relevance.

Compare engagement rates between videos with carefully optimised captions versus those using unedited auto-captions. A/B testing across similar content reveals caption impact on performance metrics.

Caption Click-Through Rates

Some platforms show caption previews in search results.

YouTube displays caption excerpts in search results for some queries. Compelling, keyword-rich caption text can improve click-through rates from search results pages. Monitor impressions versus views to track whether appearing in search converts to actual views.

Tools for Caption Creation and Optimisation

Specialised software improves caption quality and efficiency.

Subtitle Edit

Free, open-source caption editor for Windows.

Subtitle Edit provides frame-accurate timing, automatic caption generation through speech recognition, and batch processing capabilities. The software exports to all major caption formats, including SRT, VTT, and platform-specific variants. Business owners can create professional captions without subscription costs.

Rev

Professional human transcription and caption service.

Rev offers 99% accuracy through human transcribers, handling accents and technical terminology that automated systems miss. The service costs approximately £1 per minute of video but guarantees accuracy for business-critical content. Turnaround typically takes 12-24 hours.

Descript

Video editing through transcript editing.

Descript generates transcripts automatically, then allows video editing by cutting transcript text. This workflow creates inherently accurate captions since video and transcript modifications stay synchronised. The platform suits businesses producing regular video content who need efficient caption management.

YouTube Studio

Built-in caption editor with auto-generation.

YouTube Studio allows direct caption editing within the platform. Business owners can download auto-generated captions, edit them in the built-in text editor, and republish without external software. The interface provides timing adjustments and supports multiple language captions for international audiences.

Caption Strategy for Different Video Types

Content format affects the optimal caption approach.

Product Demonstrations

Include product names, features, and use cases in captions.

Product videos should mention specific model numbers, features, and problem-solving capabilities in captions. Someone searching “wordpress security plugin 2025” needs those exact terms in video captions to find relevant demonstrations. Generic captions saying “great security features” provide weaker signals.

On-screen text should highlight key specifications and pricing when discussed. Display text like “256GB Storage” or “£299” reinforces spoken information through additional algorithmic signals.

Educational Content

Structure captions around learning objectives and key concepts.

Tutorial videos benefit from captions that mirror how people search for learning. Someone wanting to “learn email marketing automation” searches using those terms. Captions should repeat core concepts and include related terminology like “drip campaigns”, “behavioural triggers”, and “subscriber segmentation”.

On-screen text works well for step-by-step processes. Number steps clearly and include them in captions: “Step 1: Configure SMTP settings” provides structured information that algorithms can parse and index.

Company Culture Videos

Balance personality with a searchable business context.

Behind-the-scenes content should still include the company name, industry, and location in captions. A Belfast digital agency showing office life should mention “digital marketing”, “Belfast”, and “creative agency” within opening captions to maintain business relevance in algorithmic categorisation.

On-screen text identifying team members can include job titles that signal company focus. “Sarah – SEO Specialist” provides more searchable context than “Sarah – Team Member”.

Customer Testimonials

Include problems solved and measurable results in captions.

Testimonial captions should capture specific challenges and outcomes. Generic praise like “they were great to work with” provides minimal search value. Captions including “increased website traffic 150%” or “reduced cart abandonment by 40%” help videos appear in results-focused searches.

On-screen text displaying company names and results reinforces credibility whilst providing algorithmic signals. Show “Retail Company X: 300% ROI in 6 Months” to combine social proof with searchable metrics.

ProfileTree’s Video Marketing Approach

We integrate caption optimisation within comprehensive video strategies.

ProfileTree’s video marketing services cover production, editing, and distribution optimisation for SMBs across Northern Ireland, Ireland, and the UK. Our approach treats captions as technical SEO elements requiring the same attention as on-page website optimisation.

We script videos with caption optimisation in mind, ensuring speakers naturally mention key search terms and topics. This front-end planning creates better algorithmic signals than trying to retroactively optimise poorly planned content. Belfast businesses working with us see improved video discoverability through strategic caption planning integrated into production workflows.

Our video production includes professional caption creation with platform-specific formatting. YouTube content receives SRT files with strategic keyword placement, whilst TikTok content uses platform-native text tools for maximum OCR compatibility. This tailored approach recognises that different platforms require different optimisation tactics.

We monitor video performance across platforms, tracking which caption strategies generate search traffic and engagement. This data-driven approach allows continuous refinement. Business owners receive reports showing caption optimisation impact on view counts, search rankings, and audience retention.

ProfileTree’s digital training programmes teach internal teams caption optimisation skills for ongoing video content. Rather than creating dependency, we build capability whilst providing expert guidance for complex implementations.

Future Caption Optimisation Trends

Platform algorithm capabilities continue advancing.

Automatic speech recognition accuracy improves annually. Current systems achieve approximately 80% accuracy for clear speech, whilst newer models approach 90-95%. This progression means auto-generated captions become more reliable, though human review remains important for business content where accuracy matters.

Multilingual caption support expands to all major platforms. YouTube, TikTok, and Instagram increasingly support automatic translation of uploaded captions. Business owners serving international markets should create English captions that translate cleanly, avoiding idioms and cultural references that translation systems mishandle.

Visual content understanding through computer vision reduces reliance on text signals. Platforms increasingly recognise objects, scenes, and actions directly from video frames. This trend means that whilst caption optimisation remains important, it becomes one component within broader algorithmic understanding rather than the primary signal.

Real-time caption generation during live streaming improves. Facebook, YouTube, and LinkedIn provide automatic captions for live videos, though with variable accuracy. Business owners hosting webinars or product launches should still prepare caption-optimised scripts to maximise live content searchability.

Conclusion

Video captions and on-screen text provide algorithms with machine-readable signals that determine content distribution and searchability. Business owners who write strategic captions, correct auto-generated transcripts, and design readable on-screen text gain measurable advantages in organic video reach.

Platform-specific optimisation matters since YouTube, Instagram, TikTok, and LinkedIn process video text differently. Upload corrected caption files, use clear on-screen text with high contrast, and integrate relevant keywords naturally throughout content. These technical steps increase discoverability without requiring advertising spend.

ProfileTree helps SMBs across Northern Ireland, Ireland, and the UK implement caption optimisation within broader video marketing strategies. Whether you need production support, caption creation services, or training to build internal capabilities, we provide practical guidance that increases video performance through technical optimisation and creative excellence.