Data Governance for AI: Best Practices for SMEs

Data governance for AI is no longer a concern reserved for large enterprises with dedicated data teams. For small and medium-sized businesses across Northern Ireland, Ireland, and the UK, the decision to adopt AI tools brings an immediate and practical challenge: how do you manage the data that feeds those systems responsibly, legally, and effectively? Data governance for AI covers the policies, processes, and accountability structures that determine how data is collected, stored, used, and monitored within AI-driven workflows. Without it, even well-intentioned AI deployments can produce unreliable results, expose businesses to regulatory risk, and quietly erode customer trust.

At ProfileTree, a Belfast-based digital agency, we work with SMEs across manufacturing, professional services, hospitality, and retail who are beginning to integrate AI into their operations. The questions we hear most frequently are not about which AI tool to use; they are about whether the underlying data is clean enough, compliant enough, and governed well enough to produce outputs that can actually be trusted. Data governance for AI answers those questions with a structured, practical approach rather than a vague policy document.

This guide is written for business owners and marketing managers who need to understand data governance for AI without a background in data science. We cover the core framework, the UK regulatory context, the practical steps to implement governance inside a small or medium business, and the metrics that tell you whether your approach is working.

What Is Data Governance for AI

Data governance for AI refers to the set of rules, roles, and processes that control how data is handled across the lifecycle of any AI system. That lifecycle begins before a model is deployed, starting with where data comes from, how it is labelled, and whether it is accurate and representative. It continues through training and into live operation, extending to the ongoing monitoring needed to catch errors, drift, or bias over time. Building a sound AI strategy for your SME depends entirely on having this foundation in place before deployment begins.

Standard data governance was designed around structured, tabular data used for reporting. AI systems, particularly the generative tools now accessible to SMEs through platforms like Microsoft Copilot and similar services, consume a far broader range of data types: documents, emails, chat logs, images, and voice recordings. AI chatbots for customer service are among the most common first deployments for SMEs, and the quality of the data they draw from determines the quality of every response they produce.

Why Traditional Data Governance Falls Short

The core problem is that traditional governance was built for deterministic systems. Run a sales report today and tomorrow, and the numbers should match. AI systems are probabilistic; they produce best estimates rather than fixed outputs. Governance must therefore shift from auditing results to auditing the reliability, freshness, and representativeness of the data going in.

Three specific gaps emerge when businesses apply older frameworks to AI:

- Unstructured data is ungoverned. PDFs, transcripts, and emails are the primary fuel for generative AI in most businesses, yet most governance policies were written for spreadsheets and databases.

- Data decay is faster. A customer behaviour dataset from two years ago may produce misleading outputs today. Governance for AI requires near-real-time monitoring of data health.

- Outputs are opaque. Tracing a hallucinated AI output back to a specific training document requires deliberate lineage tracking that most businesses have not yet put in place.

Key Definitions for SME Decision-Makers

Before building out data governance for AI, these are the terms you will encounter most often:

| Term | What It Means for Your Business |
|---|---|
| Data Lineage | The documented trail from a raw data source to the AI output it influences. |
| Data Quality | Accuracy, completeness, consistency, and relevance of data used in AI systems. |
| Data Provenance | The verified origin of a dataset, confirming it was collected lawfully. |
| Model Drift | The gradual degradation of AI accuracy as training data becomes outdated. |
| PII (Personally Identifiable Information) | Any data that could identify an individual; must be strictly controlled in AI workflows. |
| RAG (Retrieval-Augmented Generation) | An AI technique where the model pulls live data from your documents before generating a response. Governance of the source data is critical. |

Building Your AI Governance Framework

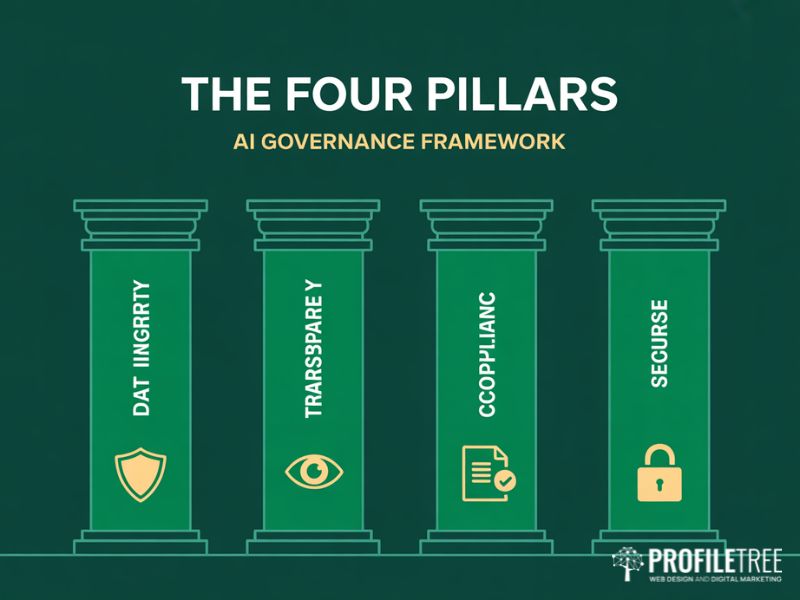

A workable framework for data governance for AI does not need to be a lengthy policy document. For most SMEs, a clear accountability structure combined with documented standards for data handling will provide the foundation needed to deploy AI tools responsibly. The four pillars below form the backbone of an effective approach, and together they provide the coverage that UK regulators, including the Information Commissioner’s Office (ICO), expect from organisations using AI to process personal data.

Pillar One: Data Integrity and Bias Mitigation

Integrity in the context of data governance for AI means more than accuracy; it means the data is representative of the real population it is meant to reflect. If your AI is trained on historical hiring decisions, sales performance records, or customer service logs, it will learn and reproduce whatever patterns exist in that history, including any biases. This is especially relevant for businesses using AI marketing and automation tools, where biased training data can skew targeting and campaign optimisation in ways that are difficult to detect without deliberate auditing.

The practical response is to conduct pre-deployment audits on training datasets. Before any dataset touches a model, check it for demographic imbalances, missing segments, and outdated entries. Tools such as Fairlearn (open source) provide basic bias detection for smaller datasets without requiring a data science team. In the UK, the fairness principle of the UK GDPR, which the Data Protection Act 2018 supplements, applies directly to automated decision-making, making this a legal obligation as much as a quality concern.
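For a team with someone comfortable running a short script, the imbalance check described above might look like the following. This is a minimal sketch using only the Python standard library, not Fairlearn and not a prescribed method. The function name, the `age_band` field, the expected shares, and the sample records are all hypothetical. The 15% tolerance borrows the Bias Audit Score target used later in this guide and treats it as an absolute gap, which is an assumption.

```python
from collections import Counter

def representation_gaps(records, field, expected_shares, tolerance=0.15):
    """Return segments whose actual share of the dataset falls short of
    the expected share by more than `tolerance` (absolute difference)."""
    counts = Counter(r.get(field) for r in records)
    total = sum(counts.values())
    gaps = {}
    for segment, expected in expected_shares.items():
        actual = counts.get(segment, 0) / total if total else 0.0
        if expected - actual > tolerance:
            gaps[segment] = round(actual, 3)
    return gaps

# Hypothetical training records: 70 younger, 25 mid-range, 5 older customers.
records = (
    [{"age_band": "18-34"}] * 70
    + [{"age_band": "35-54"}] * 25
    + [{"age_band": "55+"}] * 5
)

# Hypothetical shares of the real customer base the data should reflect.
expected_shares = {"18-34": 0.35, "35-54": 0.35, "55+": 0.30}

gaps = representation_gaps(records, "age_band", expected_shares)
print(gaps)  # segments that need more data before training
```

A check like this only surfaces missing or thin segments. It does not prove a dataset is free of bias, so it works best as a trigger for human review rather than a pass or fail gate.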

Pillar Two: Algorithmic Transparency and Data Lineage

Transparency in data governance for AI means having documented lineage: the path from a specific data source, through any processing steps, into the AI system that produced the output. A strong digital strategy for your business should include lineage documentation as a foundational element of any AI deployment, not an afterthought added when something goes wrong.

For SMEs using Retrieval-Augmented Generation (RAG) systems, this is particularly important. If your AI assistant has access to sensitive internal documents, every document in that store should carry a source label, a sensitivity classification, and an expiry date. When a document is updated or removed, the AI’s knowledge base should reflect that change automatically. Without this, outdated PDFs become invisible liabilities inside your AI outputs.
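The labelling requirement above can be illustrated with a small sketch. It assumes each document record carries the three labels named in the text and that a filter runs before anything is handed to the AI assistant. The class name, field names, sensitivity values, and sample documents are hypothetical, and a real RAG platform will have its own metadata features that should be used instead where available.

```python
from dataclasses import dataclass
from datetime import date

@dataclass(frozen=True)
class GovernedDocument:
    doc_id: str
    source: str        # where the document came from
    sensitivity: str   # e.g. "public", "internal", "personal", "sensitive"
    expires_on: date   # date after which the document must be reviewed or removed

def retrievable(docs, today, allowed):
    """Keep only unexpired documents whose classification is allowed."""
    return [d for d in docs if d.expires_on >= today and d.sensitivity in allowed]

# Hypothetical document store.
docs = [
    GovernedDocument("pricing-2023", "sales drive", "internal", date(2024, 1, 31)),
    GovernedDocument("pricing-2025", "sales drive", "internal", date(2026, 1, 31)),
    GovernedDocument("hr-grievances", "HR system", "sensitive", date(2026, 6, 30)),
]

usable = retrievable(docs, today=date(2025, 6, 1), allowed={"public", "internal"})
print([d.doc_id for d in usable])
```

In this example the expired price list and the sensitive HR file are both excluded, so only the current price list can influence an answer.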

Ciaran Connolly, founder of ProfileTree, explains the practical reality: “An effective data governance framework empowers SMEs to harness their data’s full potential, which positions them to compete more effectively in today’s data-driven market. But that only works when the business knows exactly what data the AI is drawing from and can trace any output back to its source.”

Pillar Three: Regulatory Compliance in the UK Context

Data governance for AI in the UK sits at the intersection of the UK GDPR (the GDPR as retained in UK law), the Data Protection Act 2018, and the ICO’s guidance on AI and data protection. Unlike the EU AI Act, which takes a prescriptive, tiered approach to AI risk, the UK has so far taken a pro-innovation stance that places responsibility on existing regulators. For SMEs new to this area, the ICO’s published guidance on AI and data protection is the primary reference document and is updated regularly.

The ICO requires organisations to demonstrate lawful basis for processing personal data in AI systems, explain automated decisions when they significantly affect individuals, conduct Data Protection Impact Assessments for high-risk applications, and implement technical measures to minimise data used in training.

| Requirement | UK (ICO / DPA 2018) | EU AI Act |
|---|---|---|
| Basis for AI data processing | Lawful basis under GDPR required | Risk-tier classification required |
| Automated decision transparency | Right to explanation for significant decisions | Explainability mandated for high-risk AI |
| Impact assessment | DPIA for high-risk processing | Conformity assessment for high-risk systems |
| Human oversight | Required for solely automated decisions | Mandatory for all high-risk AI categories |
| Data minimisation | Must use minimum data necessary | Technical measures mandated |

Pillar Four: Security for AI Data Pipelines

Standard cybersecurity applies to AI systems, but data governance for AI introduces additional considerations beyond firewall rules and access controls. The most significant is the security of the vector database or document store used in RAG-based systems. Businesses that invest in secure website hosting and management already have a security foundation they can extend to their AI infrastructure, but the specific controls for AI pipelines need to be configured deliberately rather than inherited by default.

Practical security measures include role-based access controls on AI data sources, encryption for both stored and transmitted data, audit logging of what the AI has accessed and when, and regular review of any AI-connected interfaces. Many third-party AI platforms provide these controls as standard, but they need to be actively configured.
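To make two of these measures concrete, the sketch below combines a role-based access check with an audit log entry for every request. It is illustrative only: the role names, classifications, and log fields are hypothetical, and most third-party AI platforms expose equivalent settings that should be configured rather than rebuilt by hand.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the data classifications they may query.
ROLE_PERMISSIONS = {
    "support_agent": {"public", "internal"},
    "hr_manager": {"public", "internal", "personal"},
}

audit_log = []  # in practice this would be an append-only store

def request_access(user, role, source_name, classification):
    """Check the role against the classification and record the attempt."""
    allowed = classification in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "source": source_name,
        "classification": classification,
        "allowed": allowed,
    })
    return allowed

print(request_access("aoife", "support_agent", "product FAQs", "internal"))
print(request_access("aoife", "support_agent", "staff records", "personal"))
print(len(audit_log), "access attempts logged")
```

Denied requests are logged as well as granted ones, because a pattern of refused attempts is often the first sign that a tool has been connected to the wrong data source.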

Legal Compliance, GDPR, and AI Ethics for UK SMEs

Legal compliance is woven into every pillar of data governance for AI rather than sitting as a separate track. For UK businesses, the core obligations flow through the ICO, and the UK government’s AI White Paper signals that existing laws will be applied to AI rather than waiting for specific AI legislation. The businesses most exposed to risk are those treating compliance as a tick-box exercise rather than a continuous operational discipline.

Privacy by Design in AI Deployments

Privacy by design means building data protection into the architecture of an AI system from the outset. For SMEs, this means three practical commitments: collecting only the data the AI genuinely needs, anonymising personal data wherever the task permits, and deleting data when it is no longer needed. This principle applies directly to businesses using content marketing strategies that incorporate AI tools, where customer interaction data must be governed with the same rigour as any other personal data.

When deploying a customer service chatbot, the AI does not need to retain full conversation transcripts indefinitely. A governance policy should specify retention periods, deletion triggers, and what happens to data if a customer exercises their right to erasure under GDPR.
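A retention rule of this kind can be expressed in a few lines. The sketch below assumes a 90-day retention period and a simple list of transcript records. Both the period and the field names (`id`, `customer_id`, `created`) are hypothetical and would need to match your own policy and chatbot platform.

```python
from datetime import date, timedelta

RETENTION_DAYS = 90  # hypothetical retention period; set this from your policy

def transcripts_to_delete(transcripts, today, erasure_requests=frozenset()):
    """Return IDs of transcripts past retention or covered by an erasure request."""
    cutoff = today - timedelta(days=RETENTION_DAYS)
    return [
        t["id"]
        for t in transcripts
        if t["created"] < cutoff or t["customer_id"] in erasure_requests
    ]

# Hypothetical transcript records.
transcripts = [
    {"id": "t1", "customer_id": "c1", "created": date(2025, 1, 10)},
    {"id": "t2", "customer_id": "c2", "created": date(2025, 5, 20)},
    {"id": "t3", "customer_id": "c3", "created": date(2025, 5, 25)},
]

due = transcripts_to_delete(transcripts, today=date(2025, 6, 1), erasure_requests={"c2"})
print(due)
```

Here `t1` is removed because it has passed the retention period and `t2` because the customer asked for erasure, while `t3` is kept. Deletion itself, and verifying that it happened, still has to be carried out in whichever system stores the transcripts.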

AI Ethics and Responsible Deployment

One area where ethics and commercial practice intersect clearly is AI-generated content. Businesses using AI to support their SEO and search visibility need governance policies that ensure AI-produced content is accurate, attributed correctly, and does not introduce misleading claims that could harm both readers and search performance.

Before deploying any AI system that touches customer data, ask: Can we explain what this AI does and why it produces the outputs it does? Have we checked the training data for imbalances? Is there a human review process for high-stakes outputs? Who is accountable when the AI gets something wrong? These questions require a documented process and an accountable owner, not a philosophy degree.

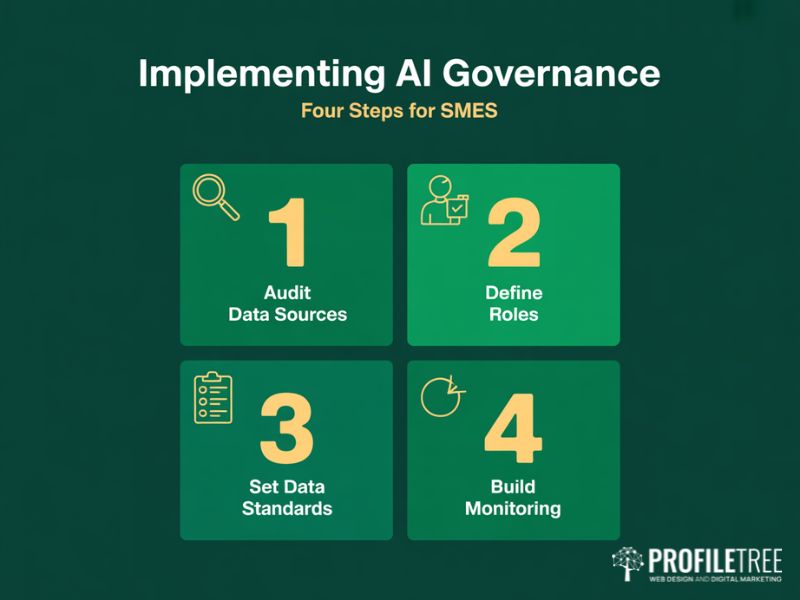

Implementing Data Governance for AI in Your SME

Understanding the theory of data governance for AI is the easier half of the challenge. The harder half is building it into the daily operations of a business with limited resources and no dedicated data team. The steps below are designed to be actionable for a business owner or marketing manager.

Step One: Audit Your Current Data Sources

Start by mapping every data source that currently feeds any AI system your business uses. For businesses running AI-assisted social media marketing, this audit frequently reveals that scheduling and content tools have been granted access to customer data that was never intended to feed an AI model.

- List every AI tool currently in use across the business.

- For each tool, document what data it accesses, processes, or retains.

- Classify each data source: public, internal, personal, or sensitive personal.

- Identify any personal data being processed and confirm the lawful basis under GDPR.

- Flag any data sources that are outdated, unverified, or uncontrolled.
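The checklist above can be kept in a spreadsheet, but the same logic can also be sketched as a small script. The example below is one possible shape, not a required format: the record fields, the flag wording, the one-year review window, and the sample tools are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

PERSONAL_CLASSES = {"personal", "sensitive personal"}

@dataclass
class DataSourceRecord:
    tool: str
    data_source: str
    classification: str            # public, internal, personal, or sensitive personal
    last_reviewed: date
    lawful_basis: Optional[str] = None
    verified: bool = True

def audit_flags(record, today, max_age_days=365):
    """List the governance issues that need follow-up for one data source."""
    flags = []
    if record.classification in PERSONAL_CLASSES and not record.lawful_basis:
        flags.append("no lawful basis recorded")
    if not record.verified:
        flags.append("unverified source")
    if (today - record.last_reviewed).days > max_age_days:
        flags.append("review overdue")
    return flags

# Hypothetical inventory built from the first four checklist items.
inventory = [
    DataSourceRecord("Chatbot", "FAQ pages", "public", date(2025, 3, 1)),
    DataSourceRecord("Scheduling tool", "CRM contacts", "personal", date(2025, 1, 15)),
    DataSourceRecord("Email assistant", "Shared inbox export", "personal",
                     date(2023, 11, 1), lawful_basis="consent", verified=False),
]

for record in inventory:
    print(record.tool, audit_flags(record, today=date(2025, 6, 1)))
```

An empty list of flags means the source passed these three checks only. It does not replace the judgement needed to decide whether the lawful basis recorded is actually the right one.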

Step Two: Define Roles and Responsibilities

Data governance for AI requires named accountability, not just shared awareness. In a small business, the same person may wear multiple governance hats, but each role should still be explicitly assigned. The three core roles are the Data Owner (a senior stakeholder responsible for data quality and compliance), the Data Steward (managing day-to-day data handling), and the AI System Owner (responsible for a specific tool’s configuration, access controls, and performance monitoring). Documenting these roles removes ambiguity when something goes wrong and provides the ICO with evidence of organisational accountability.

Step Three: Establish Data Standards

Businesses working with a web development partner to integrate AI tools into their site infrastructure should establish these standards before technical build begins. Three core standards cover the majority of risk exposure: a data quality standard specifying accuracy and freshness requirements, a retention and deletion policy specifying how long data is kept and how deletion is verified, and a labelling standard where every document accessible to an AI tool carries a sensitivity classification.

Step Four: Build Monitoring Into the Process

Data governance for AI is an ongoing discipline. The most common failure mode is a business that completes an initial governance exercise and then lets it drift as the AI landscape changes. Minimum monitoring activities include a quarterly review of all AI tools and their data access permissions, a six-monthly audit of data quality in primary sources, and an immediate review whenever a new AI tool is adopted.

Measuring and Improving AI Data Quality

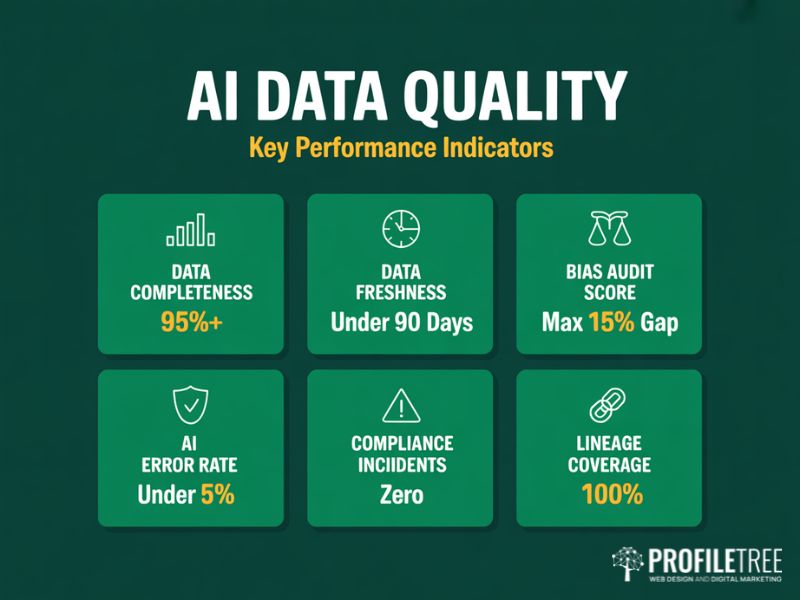

Data governance for AI produces measurable outcomes. The metrics below are designed to be practical for a business without a dedicated analytics team.

| KPI | What It Measures | Target |
|---|---|---|
| Data Completeness Rate | % of required fields populated across AI training data | Above 95% |
| Data Freshness | Average age of documents in AI retrieval stores | Under 90 days |
| Bias Audit Score | Demographic balance across key variables in training data | No segment underrepresented by more than 15% |
| AI Error Rate | % of AI outputs flagged for correction by human reviewers | Under 5% |
| Compliance Incidents | Data handling incidents related to AI tools per quarter | Zero |
| Lineage Coverage | % of AI outputs traceable to a documented source | 100% for high-stakes decisions |
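Three of these KPIs reduce to simple arithmetic, shown in the sketch below. The function names, field names, and sample figures are hypothetical, and the calculation assumes that a blank or missing value counts as an unpopulated field.

```python
from datetime import date

def completeness_rate(rows, required_fields):
    """Percentage of required fields that are populated across all rows."""
    total = len(rows) * len(required_fields)
    filled = sum(
        1 for row in rows for field in required_fields
        if row.get(field) not in (None, "")
    )
    return 100 * filled / total if total else 0.0

def average_age_days(doc_dates, today):
    """Average age in days of the documents in a retrieval store."""
    return sum((today - d).days for d in doc_dates) / len(doc_dates)

def error_rate(flagged_outputs, total_outputs):
    """Percentage of AI outputs flagged for correction by reviewers."""
    return 100 * flagged_outputs / total_outputs

# Hypothetical sample data.
rows = [
    {"name": "A", "email": "a@example.com"},
    {"name": "B", "email": ""},
]
doc_dates = [date(2024, 1, 1), date(2024, 3, 1)]

print(completeness_rate(rows, ["name", "email"]))       # compare with the 95% target
print(average_age_days(doc_dates, date(2024, 3, 31)))   # compare with the 90-day target
print(error_rate(4, 100))                                # compare with the 5% target
```

With this sample data, completeness is 75%, which misses the target, while average document age (60 days) and error rate (4%) fall within it.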

Continuous Improvement Through Data Observability

Data observability means monitoring the health of your data in real time rather than through periodic manual audits. ProfileTree’s approach with clients across Northern Ireland and the wider UK involves integrating governance checkpoints into existing project reviews rather than creating separate governance processes. Building data governance for AI into existing business rhythms makes compliance sustainable rather than burdensome.

Training Your Team on Data Governance

The strongest technical framework for data governance for AI will underperform if the people using AI tools daily do not understand their responsibilities. ProfileTree’s digital training programmes for business teams include practical AI governance modules designed for non-technical staff, covering responsible use and the basics of data handling compliance.

In our AI training for business programmes, delivered to SMEs across manufacturing, retail, and professional services, the most common finding is not technical ignorance but a lack of shared understanding about which data is appropriate to use with which tool, and what counts as a data governance risk versus a normal operational decision.

Building Data Governance for AI as a Competitive Advantage

Data governance for AI is not a compliance burden to be minimised. For SMEs that get it right, it is a genuine competitive differentiator. Businesses that can demonstrate they handle data responsibly, that their AI outputs are reliable and traceable, and that they have the organisational accountability to back those claims are better positioned to win the trust of customers, partners, and regulators alike.

The businesses we work with at ProfileTree, across web design and development, digital marketing, AI training, and digital strategy, consistently find that governance work done at the start of an AI deployment saves significant remediation cost later. A chatbot trained on uncontrolled data, or a recommendation engine fed with outdated records, will produce outputs that undermine confidence in the technology across the organisation.

Data governance for AI will continue to evolve as both the technology and the regulatory landscape develop. A governance framework built on clear principles, documented roles, and regular review cycles will adapt to that change far more effectively than one built around a static policy document. If you are beginning your AI governance journey or looking to formalise an approach that has grown organically, the ProfileTree team offers practical support across AI-powered video marketing, AI chatbot deployment, and AI marketing and automation strategy for SMEs across Northern Ireland, Ireland, and the UK. Contact us to discuss how data governance for AI fits into your wider digital transformation goals.

FAQs

How can SMEs ensure data quality when implementing AI systems?

Set clear data quality standards covering accuracy, completeness, and relevance before any dataset informs an AI system, and run regular audits to maintain them.

What are the essential components of an AI data governance strategy?

A framework covering roles and standards, a data quality management process, a GDPR-aligned compliance protocol, and staff training on responsible AI use.

How should SMEs handle data privacy and comply with GDPR when deploying AI?

Build data protection in from the outset, use only the data the AI genuinely needs, anonymise where possible, and conduct a DPIA for any high-risk AI application.

What role does data provenance play in AI governance?

It establishes the verified origin of a dataset, confirming it was collected lawfully and is appropriate for its intended use, which is essential for both accuracy and regulatory accountability.

How do SMEs establish accountability in AI decision-making?

Assign named roles for AI governance, document decision-making workflows, and implement a feedback mechanism so errors in AI outputs can be reported and resolved.

What is the difference between data governance for traditional systems and for AI?

Traditional governance was built for structured, deterministic data. Data governance for AI must account for unstructured inputs, probabilistic outputs, faster data decay, and the need to trace outputs through complex model architectures.