AI Regulations Worldwide: Analysing Global Legislative Frameworks

AI regulations are no longer a future concern for businesses and policymakers. They are active, enforceable, and increasingly consequential for any organisation that builds, deploys, or relies on artificial intelligence systems. From Belfast to Brussels, from London to Seoul, governments are moving from voluntary ethics guidelines to hard legislative requirements, with significant financial penalties for organisations that fall short. Understanding how AI regulations differ across regions is not just useful knowledge for legal teams; it is a strategic priority for any business operating in a digital economy.

At ProfileTree, Belfast’s digital agency working with businesses across the UK and Ireland, we see first-hand how AI regulations shape the decisions companies make around web design, digital marketing, AI training, and content strategy. This guide sets out the major regulatory frameworks, compares their approaches, and translates the compliance requirements into practical terms for organisations of all sizes.

Understanding AI Regulations: Why They Matter Now

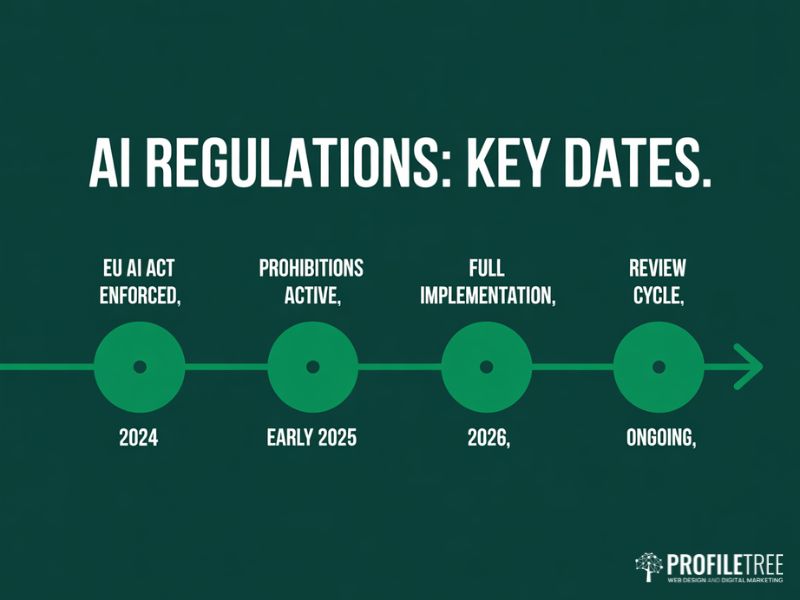

Artificial intelligence governance has entered a new phase. For much of the past decade, AI regulations existed primarily as principles and recommendations rather than binding law. That changed when the EU AI Act entered into force in August 2024, with its obligations phasing in over the following years, and the trend has accelerated since. Today, AI regulations govern everything from how recruitment tools assess candidates to how financial institutions use predictive models and how businesses deploy AI marketing and automation tools.

The core rationale behind AI regulations is consistent across jurisdictions: AI systems can produce outcomes that affect fundamental rights, and those outcomes need to be accountable, transparent, and proportionate. Where AI regulations differ is in how they balance that accountability with the need to support innovation and economic growth.

What Drives the Push for AI Regulations?

Several converging forces have made AI regulations a legislative priority worldwide. Algorithmic bias in hiring and credit decisions has been documented across multiple industries. Facial recognition systems have produced incorrect identifications with serious consequences. Generative AI has raised urgent questions about misinformation, intellectual property, and consent. These real-world harms have made the case for AI regulations that go beyond self-regulation.

At the same time, the commercial stakes are enormous. AI regulations that are too restrictive can push development to less regulated markets. AI regulations that are too permissive can expose citizens to harm and erode public trust in AI-enabled services. Getting that balance right is the central challenge facing legislators everywhere.

The EU AI Act: The Benchmark for Global AI Regulations

The European Union’s AI Act is the most comprehensive piece of AI legislation in the world, and it is setting the standard against which other AI regulations are increasingly measured. Approved by the European Parliament in March 2024, it entered into force in August 2024, with its obligations taking effect in stages. It applies not just to EU-based organisations but to any company whose AI systems are used within the EU. This extraterritorial reach makes it directly relevant to UK, US, and globally operating businesses. The OECD AI Principles provide a useful international reference point that influenced much of the Act’s structure.

The Risk-Tier Framework

The central mechanism of the EU AI Act is a four-tier risk classification. Understanding where your AI systems sit within this framework is the starting point for compliance.

| Risk Level | Example Applications | Regulatory Requirement |
|---|---|---|
| Unacceptable Risk | Social scoring, real-time biometric surveillance in public spaces | Prohibited, subject only to narrow law-enforcement exceptions for real-time biometric identification. |
| High Risk | Recruitment tools, credit scoring, critical infrastructure, law enforcement | Mandatory conformity assessments, human oversight, registration in EU database |
| Limited Risk | Chatbots, deepfakes, emotion recognition software | Transparency obligations: must disclose AI interaction to end users |
| Minimal Risk | Spam filters, AI-enabled video games, basic recommendation engines | No specific obligations. Voluntary codes of conduct encouraged. |

For UK and Irish businesses supplying products or services into EU markets, the High Risk category is where most compliance effort is required. AI regulations at this tier demand full technical documentation, bias testing, audit trails, and in many cases a designated EU-based representative. Building a clear digital strategy that accounts for these requirements from the outset is far more efficient than retrofitting compliance after deployment.
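As a rough illustration of how the table above could be encoded for an internal register, the following Python sketch maps a few of the example applications to their tier and obligations. The names `RiskTier`, `EXAMPLE_TIERS`, `OBLIGATIONS`, and `obligations_for` are hypothetical, and a keyword lookup is only a starting point: a real classification depends on legal review of the Act itself.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Example applications copied from the risk-tier table; illustrative only.
EXAMPLE_TIERS = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "recruitment tool": RiskTier.HIGH,
    "credit scoring": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}

# Obligations summarised from the table's "Regulatory Requirement" column.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["do not deploy"],
    RiskTier.HIGH: [
        "conformity assessment",
        "human oversight",
        "EU database registration",
    ],
    RiskTier.LIMITED: ["disclose AI interaction to end users"],
    RiskTier.MINIMAL: [],
}


def obligations_for(application: str) -> list:
    """Return the obligations for a known example application."""
    tier = EXAMPLE_TIERS.get(application.strip().lower())
    if tier is None:
        # Unknown systems need a manual assessment, not a default tier.
        raise KeyError(f"no tier recorded for {application!r}")
    return OBLIGATIONS[tier]
```

Raising an error for unlisted applications is a deliberate choice in this sketch: silently defaulting to the minimal tier would hide exactly the systems that most need review.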

Prohibited AI Practices Under EU Regulations

A significant development within EU AI regulations is the explicit prohibition of certain practices, effective from February 2025. These include systems that categorise individuals based on sensitive characteristics such as political beliefs, religious views, or sexual orientation, tools that build facial recognition databases through untargeted scraping, and AI used to detect emotions in professional or educational settings. For businesses that were using AI in any of these ways, immediate compliance action is required.

Fines and Enforcement

EU AI regulations carry penalties that match or exceed the GDPR in scale. Violations involving prohibited systems can result in fines of up to 35 million euros or 7% of global annual turnover, whichever is higher. High-risk system non-compliance carries fines of up to 15 million euros or 3% of global annual turnover. Providing incorrect information to regulators can result in fines of up to 7.5 million euros or 1% of global annual turnover. These figures make compliance with AI regulations a board-level financial risk, not a legal department afterthought.
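To make the "whichever is higher" arithmetic concrete, here is a minimal Python sketch of the fine ceilings for the two main tiers. It assumes the higher-of-two rule applies to both tiers, which is the general rule in the Act (the Act caps fines for SMEs at the lower of the two figures instead). The names `FINE_TIERS` and `max_fine_eur` are hypothetical, and the result is a statutory ceiling, not a prediction of any actual penalty.

```python
# (fixed ceiling in euros, percentage of global annual turnover)
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_non_compliance": (15_000_000, 3),
}


def max_fine_eur(violation: str, global_turnover_eur: int) -> int:
    """Return the maximum possible fine: the higher of the two ceilings."""
    fixed_ceiling, percent = FINE_TIERS[violation]
    # Integer arithmetic avoids floating-point rounding on large sums.
    turnover_ceiling = global_turnover_eur * percent // 100
    return max(fixed_ceiling, turnover_ceiling)
```

For a company with 1 billion euros in global turnover, 7% is 70 million euros, so the turnover-based ceiling applies. For a company with 100 million euros in turnover, 7% is only 7 million euros, so the fixed 35 million euro ceiling applies.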

US and UK AI Frameworks: Flexibility Versus Rigidity

Outside the EU, the two most significant AI regulatory landscapes for UK-based businesses are those of the United States and the United Kingdom itself. Both have chosen more flexible, sector-led approaches, though the detail of how that flexibility operates differs considerably.

United States: Decentralised AI Regulations

The US federal approach to AI regulations has remained deliberately light-touch at the national level, with the policy intent of preserving the country’s competitive position in AI development. The foundational documents remain the NIST AI Risk Management Framework, which provides a structured approach to identifying and managing AI risks without mandating specific compliance steps, and the Blueprint for an AI Bill of Rights, which outlines principles for protecting individuals but carries no legal force.

Where US AI regulations have become more consequential is at state level. California, Colorado, Texas, and a growing number of other states have enacted or are advancing their own AI regulations, focusing particularly on automated decision-making in employment, housing, and consumer finance. This has direct implications for digital businesses: search engine optimisation strategies that rely on AI-generated content or automated targeting must now account for state-specific disclosure requirements in several US markets.

The change of federal administration in 2025 has shifted the direction of US AI regulations further toward deregulation at the federal level, though state-level activity has, if anything, increased as a result. Businesses exporting AI-enabled services into the US need to monitor state-level AI regulations closely, particularly California’s, which often set precedents adopted elsewhere.

United Kingdom: The Pro-Innovation Model

The UK’s approach to AI regulations is framed around a principles-based model administered by existing sectoral regulators rather than a single overarching AI law. The Information Commissioner’s Office (ICO) governs data-related AI use, the Financial Conduct Authority (FCA) covers AI in financial services, and the Care Quality Commission (CQC) addresses AI in healthcare contexts. Investing in AI literacy training for staff is increasingly recommended by UK regulators as a foundational governance measure, and one of the most practical steps any business can take right now.

The AI Safety Institute, established in 2023 and renamed the AI Security Institute in 2025, represents the UK’s investment in frontier AI research and safety evaluation. It has focused primarily on testing advanced AI models rather than setting compliance requirements for business use. The UK government has signalled, though, that light-touch AI regulations will not remain indefinitely, and mandatory requirements are expected to follow, particularly for high-risk applications.

As Ciaran Connolly, founder of ProfileTree, notes: “For UK businesses, the current window is an opportunity to build AI governance practices voluntarily before regulation makes them mandatory. The businesses investing in responsible AI now will face a much smoother compliance journey when binding UK AI regulations arrive.”

Asia and Emerging Markets: Divergent Approaches to AI Regulations

Beyond Europe and the anglophone world, AI regulations are developing rapidly across Asia and parts of the Global South, each reflecting distinct economic priorities and political structures.

China: Targeted and Sovereign AI Regulations

China has adopted a model of targeted AI regulations focused on specific high-impact applications rather than a single horizontal law. The Interim Measures for the Management of Generative AI Services, in force since August 2023, require providers of generative AI services available to the public within China to register their models, submit them for security assessments, and ensure outputs do not violate Chinese law or social norms. AI chatbot systems deployed within China must comply with these content restrictions in addition to standard transparency requirements, and failure to register carries significant penalties.

China’s AI regulations are also closely integrated with its broader data sovereignty framework, which restricts cross-border data flows and gives the government significant oversight over AI systems that handle data about Chinese citizens. For international businesses operating in China, these AI regulations create substantial compliance requirements that frequently conflict with obligations under EU or US frameworks.

India: Emerging AI Regulations with an Inclusion Focus

India’s approach to AI regulations is still developing, led by NITI Aayog’s responsible AI principles framework. The government has emphasised using AI as a tool for economic development and social inclusion, particularly in healthcare, agriculture, and education. Formal AI regulations with binding requirements have been slower to materialise, but the Digital Personal Data Protection Act of 2023 creates a foundation for data-related AI regulation that is expected to be built upon significantly in the coming years.

India’s market scale means that AI regulations here will matter significantly for global technology companies. The combination of an English-language legal tradition and a rapidly growing digital economy makes India’s regulatory direction one to watch closely.

Brazil and the Global South

Brazil’s AI Bill 2338, currently progressing through the legislature, draws heavily from the EU AI Act’s risk-based structure while incorporating provisions specifically relevant to Brazil’s social and economic context, including heightened protections for vulnerable populations and requirements for AI systems to be comprehensible to citizens with lower digital literacy. If passed in its current form, Brazil will have the most comprehensive AI regulations in Latin America.

Across Africa, national AI strategies have been produced by Egypt, Rwanda, South Africa, and others, though binding AI regulations remain at an early stage. The African Union’s AI policy framework provides a reference document that national governments are increasingly drawing on as they develop domestic AI regulations.

AI Ethics, Data Protection, and Cross-Border Compliance

Across every jurisdiction, AI regulations share a set of common ethical concerns, even where the specific legal requirements differ. Understanding these shared principles helps businesses build AI governance frameworks that can satisfy multiple regulatory environments simultaneously.

Algorithmic Bias and Fairness

All major AI regulatory frameworks identify algorithmic bias as a primary concern. AI systems trained on historical data tend to reproduce patterns of discrimination embedded in that data. AI regulations address this through requirements for bias testing before deployment, ongoing monitoring for discriminatory outcomes, and in some cases diversity requirements for the teams responsible for developing AI systems. For businesses using AI in recruitment, customer service, or credit assessment, bias testing is not optional under current AI regulations; it is a compliance requirement.
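One simple form a pre-deployment bias check can take is a comparison of selection rates across groups. The Python sketch below computes a disparate impact ratio: the lowest group selection rate divided by the highest. This metric is an assumption chosen for illustration, not something the regulations discussed here prescribe. The function names are hypothetical, and the widely cited 0.8 ("four-fifths") threshold comes from US employment guidance rather than from the EU AI Act.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Compute the share of positive outcomes per group.

    `outcomes` is an iterable of (group_label, was_selected) pairs.
    """
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratio(outcomes):
    """Return the lowest selection rate divided by the highest."""
    rates = selection_rates(outcomes)
    if not rates:
        raise ValueError("no outcomes supplied")
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any positive outcomes")
    return min(rates.values()) / highest
```

A ratio close to 1.0 means groups are selected at similar rates. A low ratio is a prompt for further investigation rather than proof of discrimination, since a single metric cannot capture every form of unfairness.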

Transparency and Explainability

AI regulations across the EU, UK, and US all emphasise the right of individuals to understand decisions made about them by AI systems. This principle of explainability requires that AI systems operating in high-stakes contexts can produce human-understandable explanations for their outputs. It has direct implications for content marketing practices that rely on AI-generated personalisation, where organisations must be able to explain how content was selected and presented to a given user.

GDPR and Data Protection in AI Systems

For UK and EU businesses, AI regulations do not exist in isolation from data protection law. The GDPR’s principles of data minimisation, purpose limitation, and privacy by design apply directly to AI systems that process personal data. AI regulations under the EU AI Act layer additional requirements on top for high-risk systems. For detailed GDPR compliance guidance, it is worth reviewing how these frameworks interact before deploying any new AI system.

The concept of Privacy by Design is particularly relevant for businesses commissioning bespoke AI tools or AI-powered websites. Building data protection into the architecture from the outset is far less costly than retrofitting compliance after deployment.

Cross-Border Compliance: The Interoperability Challenge

For businesses operating across multiple jurisdictions, the core challenge of AI regulations is interoperability: building one AI governance structure that satisfies multiple sets of requirements. The practical approach is to take the most stringent requirements as the baseline and then add any jurisdiction-specific obligations on top. For most internationally operating businesses, this means using the EU AI Act as the foundation, since satisfying its requirements will generally satisfy less demanding regimes as well.

Key steps in a cross-border AI compliance framework include: classifying all AI systems in use by their risk level under the EU framework; documenting data sources, training methods, and intended use cases for each system; establishing human oversight processes for high-risk applications; and conducting regular bias and accuracy audits. These steps serve compliance across jurisdictions and build the kind of internal AI governance capability that regulators across all markets are increasingly expecting to see.
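The four steps above can be sketched as a single inventory record with a gap check. This is a minimal Python illustration rather than a compliance tool: the `AISystemRecord` fields, the `compliance_gaps` function, and the 365-day audit interval are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AISystemRecord:
    name: str
    eu_risk_tier: Optional[str] = None               # step 1: classification
    data_sources: List[str] = field(default_factory=list)  # step 2: documentation
    training_method: str = ""
    intended_use: str = ""
    human_oversight_owner: Optional[str] = None      # step 3: oversight
    last_audit: Optional[date] = None                # step 4: audits


def compliance_gaps(record: AISystemRecord, today: date,
                    max_audit_age_days: int = 365) -> List[str]:
    """List which of the four steps are still outstanding for a system."""
    gaps = []
    if record.eu_risk_tier is None:
        gaps.append("risk tier not classified")
    if not (record.data_sources and record.training_method and record.intended_use):
        gaps.append("documentation incomplete")
    if record.eu_risk_tier == "high" and record.human_oversight_owner is None:
        gaps.append("no human oversight owner")
    audit_overdue = (
        record.last_audit is None
        or (today - record.last_audit).days > max_audit_age_days
    )
    if audit_overdue:
        gaps.append("bias and accuracy audit overdue")
    return gaps
```

Keeping one record per system gives a single place to show regulators, clients, or auditors what has been done for each step and what remains open.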

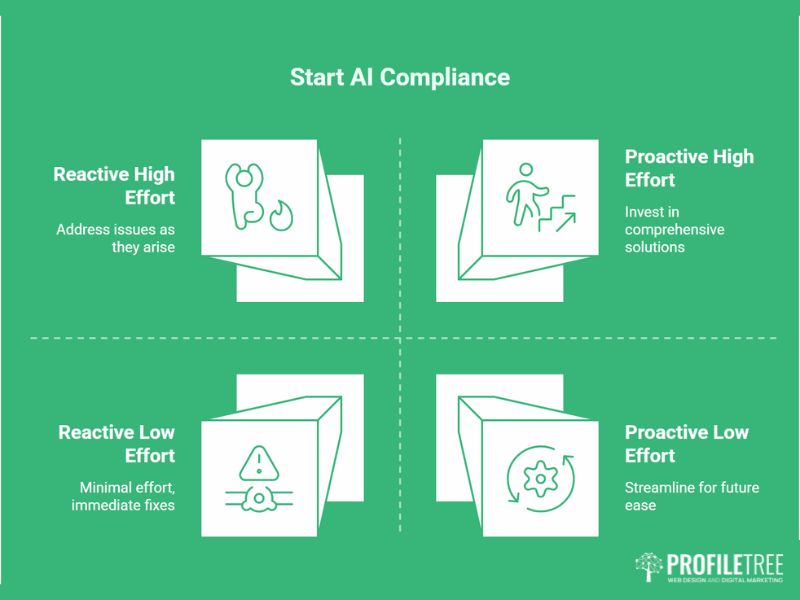

What AI Regulations Mean for Your Business in Practice

Understanding the landscape of AI regulations is one thing; knowing what to do about them is another. For businesses in the UK and Ireland, the practical implications depend significantly on the sectors you operate in, the markets you serve, and how deeply AI is embedded in your products and services.

Questions to Ask About Your Current AI Use

The starting point for any business is an audit of how AI is currently being used. This means mapping every tool, platform, and process that involves automated decision-making or AI-generated outputs, then assessing each against the relevant regulatory requirements. Key questions include: Does this AI system make or influence decisions about individuals? Does it process sensitive personal data? Does it operate in a sector classified as high-risk under EU AI regulations? Is it being used within the EU market?
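The four audit questions can be turned into a simple triage rule so that the riskiest tools are reviewed first. The Python sketch below is one possible way to do that. The `AuditAnswers` fields mirror the questions, but the priority labels and thresholds in `review_priority` are assumptions for illustration, not a regulatory test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditAnswers:
    influences_decisions_about_individuals: bool
    processes_sensitive_personal_data: bool
    operates_in_high_risk_sector: bool
    used_in_eu_market: bool


def review_priority(answers: AuditAnswers) -> str:
    """Rank a tool for compliance review based on the four audit questions."""
    # A high-risk sector combined with EU use points to the Act's
    # heaviest obligations, so it goes to the front of the queue.
    if answers.operates_in_high_risk_sector and answers.used_in_eu_market:
        return "urgent"
    yes_count = sum([
        answers.influences_decisions_about_individuals,
        answers.processes_sensitive_personal_data,
        answers.operates_in_high_risk_sector,
        answers.used_in_eu_market,
    ])
    # Any single "yes" still warrants a documented review.
    return "review" if yes_count >= 1 else "monitor"
```

For example, a recruitment screening tool used for roles in Ireland would answer yes to the sector and market questions and come out as "urgent", while an internal spell-checker answering no to all four would be left at "monitor".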

The SME Compliance Reality

Small and medium-sized businesses face a genuine challenge with AI regulations: the compliance requirements are written primarily with large technology companies in mind, but the law applies regardless of company size. For SMEs, the most practical approach is to focus compliance effort on the AI applications that carry the highest risk and to document that effort clearly. Regulators across all jurisdictions have indicated that they will treat evidenced good-faith compliance effort more favourably than silence.

ProfileTree’s AI business training programmes for businesses across Northern Ireland, Ireland, and the UK specifically address the regulatory context of AI adoption, helping teams understand not just how to use AI tools effectively but how to do so within a defensible governance framework. This is increasingly a competitive differentiator: clients and partners are beginning to ask suppliers about their AI governance practices in the same way they ask about data protection policies.

Building for Future AI Regulations

Given the pace at which AI regulations are developing, building for future requirements is as important as satisfying current ones. The direction of travel across every major jurisdiction is towards more requirements, not fewer, particularly for AI systems that affect individuals’ rights and opportunities. Businesses that build robust internal AI governance now will face lower compliance costs as AI regulations tighten, and will be better positioned to win clients and contracts where governance is becoming a selection criterion.

The specific capabilities worth developing now include documented AI use policies covering what tools staff can use and for what purposes, supplier due diligence processes for AI tools purchased from third parties, and human oversight protocols for AI-assisted decisions. This applies across all digital channels, including social media marketing campaigns that use AI for targeting or content generation, where transparency obligations are already active under several regulatory frameworks.

How ProfileTree Supports Responsible AI Adoption

ProfileTree is a Belfast-based digital agency that has been helping businesses across Northern Ireland, Ireland, and the UK adopt digital technologies since 2011. Our AI-powered website design services are built with governance in mind from the outset, ensuring that the AI tools integrated into client websites meet transparency and accountability requirements under current and forthcoming AI regulations.

Our AI transformation services include AI strategy development, AI business training through Future Business Academy, AI marketing automation, and content strategy that accounts for the growing role of AI in search and discovery. Across all of these, an understanding of AI regulations and responsible AI governance is embedded in how we work.

For businesses at the start of their AI journey, we recommend beginning with a clear inventory of existing AI use, an honest assessment of risk exposure under current AI regulations, and a structured training programme that gives staff the knowledge they need to use AI tools within appropriate governance frameworks.

Keeping Pace with AI Regulations

AI regulations are one of the fastest-moving areas of business compliance. The EU AI Act’s implementation schedule runs to 2027, with most obligations applying from August 2026, and changes to US state-level AI regulations, UK regulatory guidance, and emerging frameworks in Asia and Latin America will continue to reshape the landscape throughout the decade. Staying current requires active monitoring, not a one-time review.

For businesses serious about responsible AI adoption, the investment in understanding AI regulations is straightforward to justify. The alternative is exposure to penalties, reputational damage, and the operational disruption of retrofitting compliance into systems already in production. Building governance from the start is consistently less costly than building it under regulatory pressure.

If your business is at any stage of AI adoption and would like to understand your compliance position, ProfileTree’s team can help. From digital strategy consultation through to the technical implementation of AI-powered websites and marketing systems, we work with businesses across Northern Ireland, Ireland, and the UK to make AI adoption effective, responsible, and commercially sustainable.

FAQs

Which countries have the strictest AI regulations?

The EU has the most comprehensive binding AI regulations globally, through the EU AI Act. China applies strict targeted AI regulations for generative AI and algorithm recommendation systems. The UK is currently lighter in approach but is moving towards formal requirements.

Do AI regulations apply to small businesses?

Yes. AI regulations apply based on what a system does and who it affects, not company size. A small business using AI in recruitment or credit decisions faces the same requirements as a large enterprise.

What is the difference between the EU AI Act and GDPR?

The GDPR governs personal data processing. The EU AI Act governs AI systems based on their risk level. Both apply simultaneously for EU operations, and compliance with one does not cover the other.

How do AI regulations affect digital marketing?

AI tools used for targeting, personalisation, or automated content generation carry transparency obligations under current AI regulations. Businesses should review their AI-driven marketing strategy against relevant requirements and be able to explain to users how AI influences what they see.

What should UK businesses prioritise for AI compliance?

Three priorities: understand the EU AI Act if you operate in EU markets, check your sector regulator’s AI guidance, and build internal documentation covering AI systems in use, their purposes, and the oversight processes in place.

Are there international standards for AI regulations?

Yes. The OECD AI Principles and the ISO/IEC 42001 standard for AI management systems both provide frameworks that cross national borders. ISO/IEC 42001 certification is gaining traction as a way to demonstrate structured AI governance to clients and regulators alike.