Compliance with the EU AI Act: Your 10-Step Operational Guide

Compliance with the EU AI Act is no longer a future concern for businesses operating in or selling into the European Union. The world’s first comprehensive horizontal AI regulation entered into force in August 2024, and its requirements are rolling out in phases that will affect most organisations by mid-2026. For UK-based businesses, the challenge is doubled: the Act has extraterritorial reach, and the Brussels Effect, the tendency of EU rules to become the working standard for anyone selling into the bloc, means the Act applies to your systems if their output is used in the EU, regardless of where your company is registered.

At ProfileTree, a Belfast-based digital agency specialising in web design, SEO, AI training, and digital marketing strategy, we have been helping clients across Northern Ireland, Ireland, and the UK understand what these obligations mean in practice. This guide cuts through the legal language to give you a clear, operational path to compliance with the EU AI Act.

Unlike GDPR, which focuses primarily on data handling, the EU AI Act demands that businesses assess, document, and govern the AI systems they build and use from the ground up. The fines for non-compliance reach up to 35 million euros or 7% of global annual turnover, whichever is higher, making this one of the highest-stakes regulatory frameworks in technology history. Whether you are a software provider, a marketing team using AI-powered tools, or a manufacturer integrating automation, achieving compliance with the EU AI Act requires a structured approach.

Understanding the EU AI Act and Who It Affects

Before any organisation can work towards compliance with the EU AI Act, it must understand the Act’s scope and the roles it defines. The legislation applies to providers, deployers, importers, and distributors of AI systems. Your obligations differ substantially depending on which seat you occupy. ProfileTree’s AI training for businesses includes a dedicated module that helps SME teams understand exactly which role they hold under the Act and what that means operationally.

The Legal Framework

The EU AI Act establishes a tiered, risk-based framework that governs how AI systems must be developed and operated. It was formally adopted in May 2024 and entered into force in August 2024. Prohibitions on unacceptable-risk AI systems apply from February 2025. Obligations for general purpose AI models and the governance infrastructure apply from August 2025, and requirements for high-risk AI systems apply from August 2026.

Organisations must know their obligations under this legal framework to avoid substantial financial penalties arising from non-compliance. A well-structured digital strategy that accounts for AI governance from the outset is far easier to maintain than one that tries to retrofit compliance after the fact.

Defining Your Role Under the Act

The Act distinguishes between four key actors, and your obligations shift materially depending on your position:

| Role | Definition | Key Obligations |
|---|---|---|
| Provider | Develops or places an AI system on the market under its own name | Full technical documentation, conformity assessment, CE marking, registration |
| Deployer | Uses an AI system in a professional context | Human oversight, transparency to users, incident reporting, DPIA where required |
| Importer | Places a third-country provider’s AI system onto the EU market | Verify provider compliance, maintain records, cooperate with surveillance |
| Distributor | Makes an AI system available in the EU market | Check CE marking and documentation before distribution |

A critical point for UK firms: if your company takes an off-the-shelf AI model and fine-tunes it on proprietary data for a specific professional purpose, you may transition from Deployer to Provider under the Act, inheriting the full suite of compliance obligations.
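As a first pass, the role definitions and the fine-tuning caveat above can be turned into a short screening function. This is a minimal sketch, not a legal test: the function name `candidate_roles` and its yes/no parameters are our own illustrative assumptions, and any "possible provider" result should go to legal review rather than be treated as a conclusion.

```python
def candidate_roles(
    *,
    markets_under_own_name: bool = False,
    uses_professionally: bool = False,
    imports_third_country_system: bool = False,
    makes_available_in_eu: bool = False,
    fine_tunes_for_specific_purpose: bool = False,
) -> set:
    """Return the roles an organisation may hold for one AI system.

    Hypothetical screening helper: the questions paraphrase the role
    table in this guide and are not the Act's legal definitions.
    """
    roles = set()
    if markets_under_own_name:
        roles.add("provider")
    if uses_professionally:
        roles.add("deployer")
    if imports_third_country_system:
        roles.add("importer")
    if makes_available_in_eu:
        roles.add("distributor")
    # Fine-tuning an off-the-shelf model may shift a deployer towards provider status.
    if fine_tunes_for_specific_purpose and "provider" not in roles:
        roles.add("provider (possible, needs legal review)")
    return roles


# A marketing team that uses a vendor model and fine-tunes it on its own data
roles = candidate_roles(uses_professionally=True, fine_tunes_for_specific_purpose=True)
```

An organisation can hold more than one role for the same system, which is why the function returns a set rather than a single label.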

Step 1 and Step 2: AI Inventory and Risk Classification

The first practical step towards compliance with the EU AI Act is knowing exactly what AI systems your organisation uses or provides. You cannot classify what you have not inventoried. Once you have a complete picture, risk classification determines which obligations apply to each system.

Building Your AI Inventory

Your inventory must go further than a simple list of software tools. For each AI system, document the following:

- The intended purpose: the Act regulates purpose, not just technology

- Customer-facing tools such as AI chatbots must be documented with their intended use case and disclosure mechanisms clearly defined

- The data lineage: where training or operational data originates and whether it meets quality standards

- AI marketing and automation platforms must be assessed for whether their outputs affect individuals within the EU

- The deployment model: on-premise, third-party API, or standalone product
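One way to keep the inventory consistent is to hold each system as a structured record and flag whatever has not been filled in yet. The sketch below is an assumption about how that could look: the class `AISystemRecord`, its field names, and the helper `missing_fields` are illustrative and do not come from the Act.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class AISystemRecord:
    """One inventory entry; field names are illustrative, not prescribed by the Act."""
    name: str
    intended_purpose: str = ""
    data_lineage: str = ""
    deployment_model: str = ""       # e.g. "on-premise", "third-party API", "standalone product"
    disclosure_mechanism: str = ""   # how users are told they are dealing with AI
    reaches_eu_users: Optional[bool] = None  # None means "not yet assessed"


def missing_fields(record: AISystemRecord) -> list:
    """List the fields that are still empty or unassessed."""
    return [f.name for f in fields(record) if getattr(record, f.name) in ("", None)]


chatbot = AISystemRecord(
    name="Website chatbot",
    intended_purpose="Answer customer support questions",
    deployment_model="third-party API",
)
gaps = missing_fields(chatbot)
```

Here `gaps` lists the data lineage, disclosure mechanism, and EU reach as outstanding, which gives the inventory owner a concrete to-do list per system.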

The Four Risk Tiers

Compliance with the EU AI Act is structured around four risk categories. Understanding which tier your AI system falls into determines the entire scope of your obligations.

| Risk Tier | Examples | Requirement |
|---|---|---|
| Unacceptable Risk | Social scoring, subliminal manipulation, real-time remote biometric identification in public spaces for law enforcement (subject to narrow exceptions) | Prohibited outright from February 2025 |
| High Risk | AI in recruitment, credit scoring, educational assessment, critical infrastructure, law enforcement | Full conformity assessment, technical documentation, human oversight |
| Limited Risk | Chatbots, AI-generated content, deepfakes | Transparency obligations: users must be informed they are interacting with AI |
| Minimal Risk | Spam filters, AI-powered playlists, basic recommendation engines | No mandatory obligations; voluntary codes of practice encouraged |

For most SMEs and digital agencies, the systems in use will fall into the Limited or Minimal Risk categories. However, if you provide AI tools to clients for use in recruitment, financial decisions, or customer-facing scoring, those systems may cross into High Risk territory and require full compliance with the EU AI Act procedures.
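For a first triage pass over the inventory, the examples in the table above can be expressed as a lookup. This is a rough sketch under stated assumptions: the `RiskTier` enum, the `TIER_BY_USE_CASE` mapping, and the `triage` function are our own, the keywords only mirror the examples in this guide, and a real classification depends on the system’s intended purpose as assessed against the Act itself.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative mapping built from the examples in this guide, not an exhaustive list.
TIER_BY_USE_CASE = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "subliminal manipulation": RiskTier.UNACCEPTABLE,
    "recruitment": RiskTier.HIGH,
    "credit scoring": RiskTier.HIGH,
    "educational assessment": RiskTier.HIGH,
    "critical infrastructure": RiskTier.HIGH,
    "law enforcement": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "ai-generated content": RiskTier.LIMITED,
    "deepfake": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "playlist": RiskTier.MINIMAL,
    "basic recommendation engine": RiskTier.MINIMAL,
}


def triage(use_case: str) -> Optional[RiskTier]:
    """Return a provisional tier, or None when the use case needs manual review."""
    return TIER_BY_USE_CASE.get(use_case.strip().lower())


provisional = triage("Recruitment")        # RiskTier.HIGH
unknown = triage("sentiment dashboard")    # None: escalate for manual review
```

Returning `None` for anything not in the mapping is deliberate: an unrecognised use case should be escalated to a person, not silently treated as minimal risk.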

Step 3: Conformity Assessments for High-Risk AI

For organisations operating high-risk AI systems, the conformity assessment is the centrepiece of compliance with the EU AI Act. This is not a one-off exercise; it must be maintained throughout the system’s operational life.

The Conformity Assessment Procedure

The conformity assessment is a systematic process that scrutinises the AI system’s risk management protocols, data governance framework, and technical documentation. The aim is to validate that the high-risk AI system adheres to standards for safety, transparency, and accountability. The five steps are:

- Determine whether the AI system qualifies as high-risk under Annex I or Annex III

- Conduct a full risk assessment and implement a risk management system

- Compile mandatory technical documentation

- Complete internal checks or engage a notified body, depending on the system type

- Affix CE marking and draft an EU declaration of conformity
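Because each of the five steps above builds on the one before it, a simple ordered tracker can stop a team from jumping ahead, for example drafting the declaration before the documentation exists. The sketch below is illustrative only: the step labels are shortened from this guide and the helper `next_step` is a hypothetical name.

```python
from typing import Optional

# Step labels shortened from the list in this guide; order matters.
CONFORMITY_STEPS = [
    "Confirm high-risk classification",
    "Risk assessment and risk management system",
    "Technical documentation",
    "Internal checks or notified body assessment",
    "CE marking and EU declaration of conformity",
]


def next_step(completed: set) -> Optional[str]:
    """Return the earliest step not yet completed, or None when all are done."""
    for step in CONFORMITY_STEPS:
        if step not in completed:
            return step
    return None


# Documentation was started early, but the risk assessment is still outstanding.
done = {CONFORMITY_STEPS[0], CONFORMITY_STEPS[2]}
todo = next_step(done)
```

In this example `todo` is the risk assessment step, even though a later step has been ticked off, because the tracker always returns the earliest gap.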

Technical Documentation Requirements

Documentation serves as the primary evidence of compliance with the EU AI Act during market surveillance. It must be comprehensive and kept up to date. Our guide to AI regulations around the world explains how these documentation standards compare to other international frameworks. At minimum, technical documentation must cover:

- A full description of the AI system and its intended use case

- Justification for the high-risk classification

- Design specifications and expected operational lifetime

- Specifications for system inputs, outputs, and any training data used

- A summary of the risk assessment and the management measures in place

- Logs demonstrating ongoing compliance during operation

According to the EU AI Act official text, technical documentation for high-risk systems must be retained for ten years after the system has been placed on the market.

“In the realm of high-risk AI, leaving no stone unturned in the conformity assessment procedure is foundational for aligning with the EU AI Act. The diligence with which harmonised standards and technical documentation are treated can serve as the linchpin for regulatory alignment and broader market acceptance.” — Ciaran Connolly, Founder, ProfileTree

Steps 4 and 5: Data Governance and GDPR Alignment

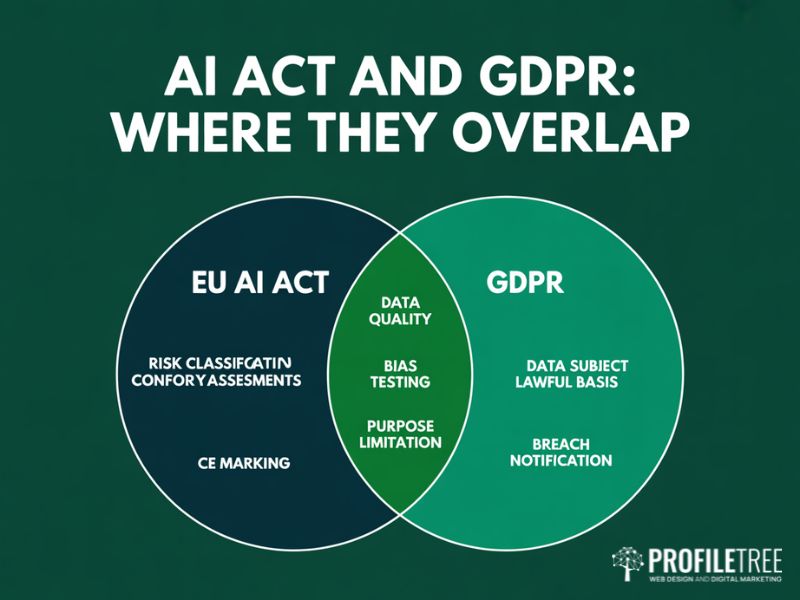

Data governance sits at the heart of compliance with the EU AI Act, and for most UK and EU organisations it connects directly to existing GDPR obligations. Our guide to GDPR and data protection for businesses provides the privacy law grounding that underpins this section. Treating these two regulatory frameworks as separate silos is one of the most common compliance mistakes we see.

Data Governance Under Article 10

Article 10 of the EU AI Act sets out specific requirements for training, validation, and testing data used in high-risk AI systems. Datasets must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete, with biases that could compromise safety or fundamental rights identified and mitigated. This means:

- All training datasets must be documented with their origin, scope, and known limitations

- Organisations must implement data governance procedures covering data quality, retention, and access

- Bias testing must be conducted prior to deployment and documented in the technical file

- Data collection processes must be logged and available for regulatory review
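A basic representativeness check is one input to the bias testing described above. The sketch below compares each group’s share of a dataset with a reference share and reports the gaps. The Act does not prescribe this metric: the function `representation_gaps`, the 5-point default tolerance, and the example groups are all assumptions for illustration.

```python
from collections import Counter


def representation_gaps(samples, reference_shares, tolerance=0.05):
    """Return groups whose dataset share differs from the reference by more than `tolerance`.

    samples: one group label per record in the dataset.
    reference_shares: expected share per group (values between 0 and 1).
    """
    counts = Counter(samples)
    total = len(samples)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            # Positive means over-represented, negative means under-represented.
            gaps[group] = round(observed - expected, 3)
    return gaps


# Hypothetical training set: 80 records from group "a", 20 from group "b"
labels = ["a"] * 80 + ["b"] * 20
gaps = representation_gaps(labels, {"a": 0.5, "b": 0.5})
```

The result of a check like this, along with the reference shares chosen and why, is the kind of evidence that belongs in the technical file alongside the dataset documentation.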

Alignment with GDPR and UK GDPR

The EU AI Act and GDPR are complementary, not competing, regulations. Where an AI system processes personal data, both frameworks apply simultaneously. The data minimisation and purpose limitation principles of GDPR apply directly to AI training data. Individuals also retain their rights under GDPR Article 22 in relation to solely automated decisions, including the right to obtain human intervention and to contest the decision.

For UK organisations, the practical approach is to extend your existing Record of Processing Activities to include an AI-specific addendum. Organisations producing AI-powered content marketing must ensure their AI content tools are documented, the outputs reviewed by a human editor, and any personalisation logic assessed for limited-risk transparency obligations.

Steps 6 and 7: Transparency, Human Oversight, and Governance Frameworks

Compliance with the EU AI Act requires organisations to build transparency and human oversight into AI system design from the start. This section covers the practical mechanisms that satisfy these obligations.

Ensuring Transparency in AI Systems

AI systems that interact with individuals must disclose their AI nature. This applies to chatbots, automated decision systems, AI-generated content, and emotion recognition tools. For limited-risk systems, the obligation is specifically transparency: users must be told they are dealing with an AI. For high-risk systems, the requirement is more extensive, covering explainability of decisions and availability of human review.

For digital agencies managing client websites, this means ensuring that any AI feature built into a web presence has the correct disclosure mechanisms in place. ProfileTree’s web design service incorporates AI disclosure design patterns as a standard component of any AI-powered feature brief.
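For a chatbot, the simplest disclosure mechanism is to state the AI’s nature at the start of the conversation. The sketch below shows that pattern in a minimal form; the wording of `AI_DISCLOSURE` and the helper `with_disclosure` are illustrative assumptions, and the right placement and wording will depend on the interface.

```python
AI_DISCLOSURE = "You are chatting with an AI assistant."


def with_disclosure(reply: str, is_first_turn: bool) -> str:
    """Prefix the first reply in a conversation with the AI disclosure."""
    if is_first_turn:
        return f"{AI_DISCLOSURE}\n\n{reply}"
    return reply


first = with_disclosure("Hi, how can I help today?", is_first_turn=True)
later = with_disclosure("Our opening hours are 9 to 5.", is_first_turn=False)
```

Keeping the disclosure text in one constant makes it easy to review, translate, and evidence in your documentation.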

Human Oversight Mechanisms

High-risk AI systems must allow human oversight throughout their operation. This means designing workflows so that a human operator can monitor outputs, intervene to override a decision, and halt the system when needed. The Act requires these override capabilities to be real and accessible, not merely procedural.

- Set up audit trails: log decisions made by the AI system for human review

- Implement override controls: enable operators to reverse AI decisions at any stage

- Train staff on the AI system: ensure they understand the technology and its limitations
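The first two mechanisms above, an audit trail and an override control, can be sketched together, along with a halt switch. This is a minimal in-memory illustration under our own assumptions: the class names `Decision` and `AuditTrail` and their methods are hypothetical, and a production system would need durable, tamper-evident storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Decision:
    subject_id: str
    ai_outcome: str                  # what the AI system originally decided
    final_outcome: str               # what actually applies after any human review
    overridden_by: Optional[str] = None
    reason: Optional[str] = None
    logged_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class AuditTrail:
    """Hypothetical in-memory log of AI decisions with human override and halt."""

    def __init__(self):
        self.entries = []
        self.halted = False

    def record(self, subject_id: str, ai_outcome: str) -> Decision:
        if self.halted:
            raise RuntimeError("System halted by operator")
        decision = Decision(subject_id, ai_outcome, final_outcome=ai_outcome)
        self.entries.append(decision)
        return decision

    def override(self, decision: Decision, operator: str, new_outcome: str, reason: str) -> None:
        # The original AI outcome is preserved so reviewers can see what changed.
        decision.final_outcome = new_outcome
        decision.overridden_by = operator
        decision.reason = reason

    def halt(self) -> None:
        self.halted = True


trail = AuditTrail()
d = trail.record("applicant-001", "reject")
trail.override(d, operator="j.smith", new_outcome="refer to interview", reason="Relevant experience missed")
trail.halt()
```

After `halt()` is called, any further `record` call raises an error, which models the requirement that an operator can actually stop the system and not just file a request to do so.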

Implementing a Governance Framework

Implementing a governance framework is indispensable for compliance with the EU AI Act. Our digital training programmes include a dedicated module on AI governance that covers how to build these structures within existing team workflows, without creating separate compliance silos.

A well-structured governance framework will encompass regular AI system monitoring, maintained compliance records, and staff who understand their obligations. It also sets out decision-making structures aligned with legal and ethical standards, and oversight mechanisms that support the Act’s requirements.

“A clear governance framework is not just a regulatory requirement. It is a strategic differentiator. Businesses that govern their AI well earn more trust from customers, partners, and regulators. That trust has real commercial value.” — Stephen McClelland, Digital Strategist, ProfileTree

Steps 8 and 9: Safety, Security, and Fundamental Rights

Compliance with the EU AI Act requires organisations to treat safety and the protection of fundamental rights as design constraints throughout the AI system lifecycle. Risk management is not a one-off exercise; it must be built into ongoing operations.

Risk Management and Cybersecurity

High-risk AI systems must operate under a continuous risk management system. Cybersecurity is an explicit component: AI systems must be resilient against adversarial inputs and data poisoning. Our guide to cybersecurity for small businesses covers the security baseline that underpins AI Act compliance.

- Identify risks: conduct assessments covering scenarios where the AI system might fail or be manipulated

- Mitigate harms: develop protocols to reduce risks, such as fail-safes and user controls

- Integrate cybersecurity features that protect against unauthorised access and data breaches

Protecting Fundamental Rights and Autonomy

The Act requires that AI systems do not discriminate on the basis of protected characteristics, and that individuals retain meaningful autonomy over decisions that affect them. For high-risk applications, bias testing is mandatory. Where an AI system makes or significantly influences a decision about employment, credit, or access to services, its decision logic must be documented, explainable, and open to challenge.

Step 10: Managing UK-EU Divergence

The UK government has chosen a sector-led approach to AI regulation rather than a single comprehensive law. For UK firms whose AI output reaches EU users, compliance with the EU AI Act remains a live obligation. Our broader resource on AI in marketing and digital strategy explains how these regulatory considerations apply specifically to marketing technology and content tools.

The practical advice for UK businesses is to treat compliance with the EU AI Act as your baseline. UK sector-specific guidance generally asks for less, so a system that meets EU standards is likely to satisfy most UK regulatory expectations. Building once to the stricter standard is more cost-effective than maintaining two separate compliance frameworks.

For UK firms, the key checklist items are: determine whether your AI output reaches EU-based users, assess whether that brings your systems within the Act’s extraterritorial scope, and document that assessment. If it does, treat compliance with the EU AI Act as a full obligation regardless of where your servers are located.

How ProfileTree Supports AI Act Compliance for SMEs

Compliance with the EU AI Act is a strategic challenge as much as a legal one. At ProfileTree, the Belfast digital agency founded by Ciaran Connolly, we integrate regulatory considerations into our SEO services, web development, and AI transformation projects from day one. Since 2011, our team has completed over 1,000 web and digital projects, and AI governance is now a standard component of every major AI brief.

Our AI transformation service covers the practical steps that connect compliance with the EU AI Act to business outcomes: AI readiness assessments, staff training on the Act’s classification framework, integration of AI tools into existing digital marketing and website strategies, and governance frameworks that satisfy the Act’s transparency and oversight requirements without adding unnecessary operational burden.

“By embedding ethical AI into web development and digital strategy, we not only future-proof businesses but also build experiences that users can genuinely trust. Compliance with the EU AI Act is not a barrier to innovation; it is the foundation that makes sustainable AI adoption possible.” — Ciaran Connolly, Founder, ProfileTree

If your business uses AI tools in its marketing, customer service, or website operations, contact ProfileTree for an AI Act readiness review. Our AI marketing and automation team can help you determine your risk classification, identify the documentation you need, and build the governance processes that keep you compliant as the law evolves.

Innovation and the Future of AI Governance

Compliance with the EU AI Act and innovation are not in opposition. The Act is designed to create a trustworthy environment in which businesses can invest in AI with confidence. Organisations that achieve compliance early will have a competitive advantage: their AI systems will be demonstrably trustworthy, their documentation will be audit-ready, and their governance processes will scale as they expand.

General Purpose AI models, such as large language models used in content generation, customer service, and data analysis, face their own compliance tier under the Act. Models deemed to have systemic risk face additional requirements including adversarial testing and incident reporting. For most SMEs using these models via API, the compliance obligations fall primarily on the model provider. If your business fine-tunes a general purpose model for a specific commercial purpose, you inherit additional responsibilities.

The next phase of AI governance will see the EU AI Act’s standards influence international frameworks. The UK, the US, and other major economies are watching the EU’s implementation closely. Building to EU standards now positions your business for whatever comes next, in any market.

Next Steps for Your Organisation

Compliance with the EU AI Act is achievable for businesses of all sizes, but it requires a structured approach and early action. Start with your AI inventory. Map every AI system your organisation uses or provides, determine the role you occupy under the Act, and classify each system by risk tier. If AI features are integrated into your website, your website development team needs to be part of that conversation from the beginning.

From there, the compliance pathway is clear: document, assess, govern, and monitor. For high-risk systems that require third-party assessment, engage a notified body early; the queue for assessments will lengthen as the August 2026 deadline approaches.

For UK businesses, do not let the absence of a domestic equivalent law create a false sense of security. Compliance with the EU AI Act is a live obligation if you have EU customers, and the reputational and commercial cost of non-compliance will far outweigh the cost of building a proper governance framework now. ProfileTree’s AI transformation team works with businesses across Northern Ireland and the UK to build exactly that.

FAQs

What is the timeline for compliance with the EU AI Act?

The Act entered into force in August 2024. Prohibitions on unacceptable-risk AI applied from February 2025. General purpose AI model obligations apply from August 2025. High-risk system requirements apply from August 2026.
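The phased timeline can be encoded as a small lookup so that planning tools can answer "what applies on this date?". This is a minimal sketch: the milestone months come from the answer above, while the exact day of each month and the names `MILESTONES` and `obligations_in_effect` are assumptions that should be checked against the official text.

```python
from datetime import date

# Months as stated in this guide; the specific days are an assumption to verify.
MILESTONES = [
    (date(2024, 8, 1), "Act in force"),
    (date(2025, 2, 2), "Prohibitions on unacceptable-risk AI"),
    (date(2025, 8, 2), "General purpose AI model obligations"),
    (date(2026, 8, 2), "High-risk system requirements"),
]


def obligations_in_effect(on: date) -> list:
    """Return the milestones that have already taken effect on the given date."""
    return [label for start, label in MILESTONES if on >= start]


in_effect = obligations_in_effect(date(2025, 3, 1))
```

For 1 March 2025, `in_effect` contains the entry into force and the prohibitions, but not yet the general purpose AI or high-risk milestones.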

Does the EU AI Act apply to UK businesses?

Yes, if your AI system’s output is used in the EU. The Act’s extraterritorial scope means location of registration is irrelevant; what matters is where the output lands.

What are the penalties for non-compliance?

Up to 35 million euros or 7% of global annual turnover, whichever is higher, for using prohibited AI. Up to 15 million euros or 3% of turnover for high-risk system violations.
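To see what these ceilings mean for a given business, the arithmetic can be sketched as below. It assumes, based on our reading of the Act, that the higher of the fixed amount and the turnover percentage applies to most undertakings and the lower of the two applies to SMEs; the function name `fine_ceiling` and its parameters are our own, and the figures are maximums, not predicted fines.

```python
def fine_ceiling(turnover_eur: float, fixed_cap_eur: float, turnover_pct: float,
                 is_sme: bool = False) -> float:
    """Return the maximum possible fine for one penalty tier.

    Assumption: higher of the two amounts for most undertakings,
    lower of the two for SMEs. Verify against the official text.
    """
    pct_amount = turnover_eur * turnover_pct / 100
    if is_sme:
        return min(fixed_cap_eur, pct_amount)
    return max(fixed_cap_eur, pct_amount)


# Prohibited-practice tier (35 million euros or 7%) for a group with 1 billion euros turnover
large_group = fine_ceiling(1_000_000_000, 35_000_000, 7)

# Same tier for an SME with 10 million euros turnover
small_firm = fine_ceiling(10_000_000, 35_000_000, 7, is_sme=True)
```

For the large group the percentage dominates, giving a ceiling of 70 million euros; for the SME the ceiling under this assumption is 700,000 euros.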

Who enforces the EU AI Act?

National competent authorities in each EU member state, with the AI Office within the European Commission overseeing general purpose AI models at EU level.

What is the difference between a Provider and a Deployer?

A Provider places an AI system on the market under its own name. A Deployer uses it professionally. Providers carry the heavier compliance burden; deployers are responsible for oversight and transparency in use.

How does the EU AI Act relate to GDPR?

Both apply where AI processes personal data. The AI Act adds obligations on top of GDPR, specifically around data quality, bias testing, and explainability. For a wider comparison, see our guide to AI regulations around the world.