The Hidden Risks of AI Content for SEO: What Google Isn’t Telling You

Google says they don’t penalise AI content, but websites using it are mysteriously losing traffic. The official line claims quality matters more than creation method, yet algorithm updates consistently hammer sites heavy with AI-generated content. Every SEO professional has witnessed the pattern: client embraces AI content creation, rankings initially hold steady, then traffic craters without warning. This guide exposes the risks of AI content, what’s really happening when algorithms meet artificial intelligence, why Google’s public statements don’t match observable reality, and how to use AI without destroying your search visibility.

The Reality Behind Google’s Mixed Messages

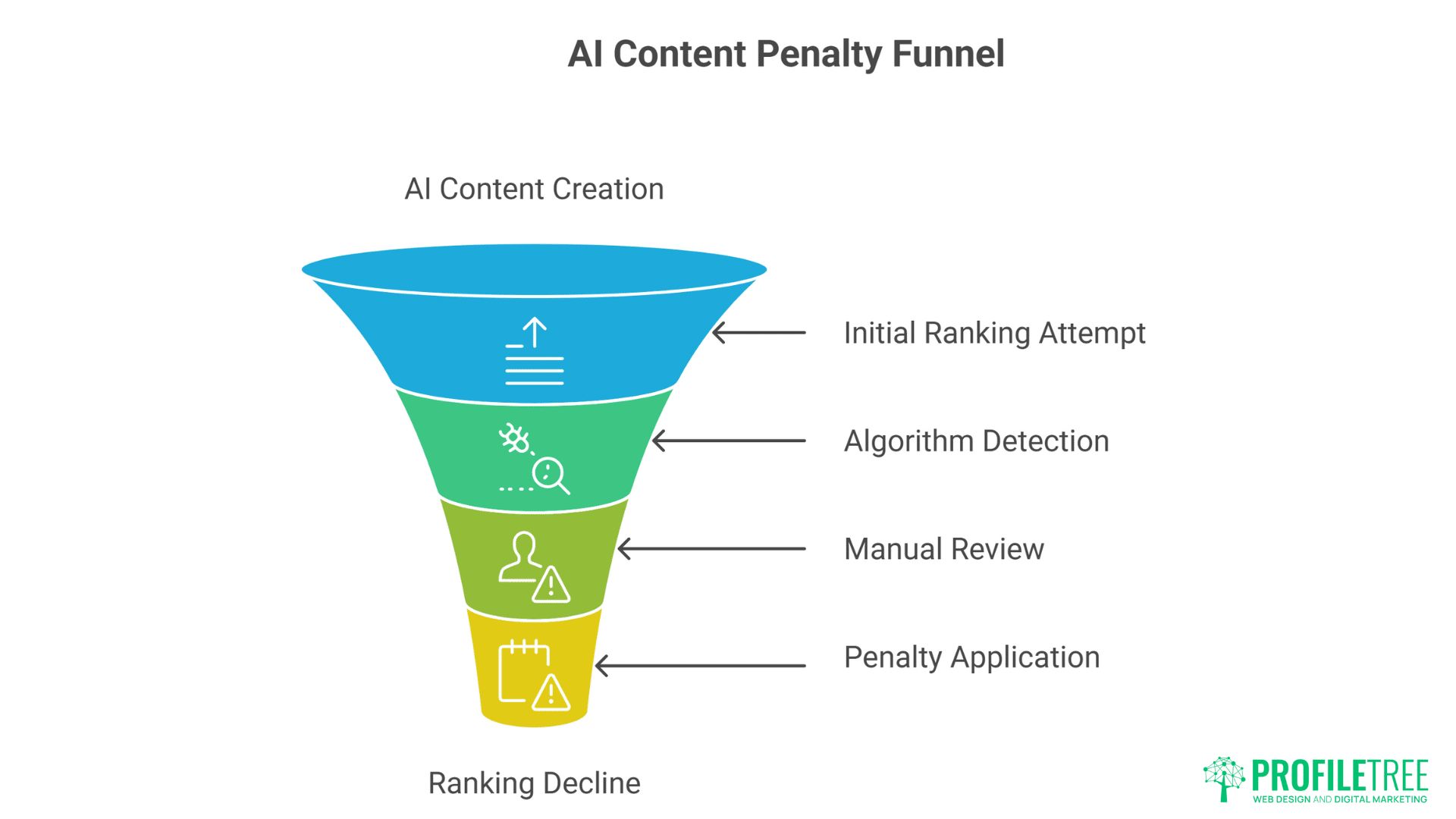

Google’s public position on AI content shifts like sand. In February 2023, they stated AI content wasn’t against guidelines if it provided value. By March, they’d updated documentation emphasising “helpful content created for people.” The careful wording reveals the trap – they’re not explicitly banning AI content, but they’re absolutely identifying and devaluing it when it fails their evolving quality thresholds.

The search giant’s actions speak louder than their carefully crafted blog posts. Sites that switched to predominantly AI content saw average traffic drops of 40-60% within six months. Recovery attempts through content improvements rarely restored original rankings. Once Google’s algorithms classify your domain as AI-dependent, escaping that classification proves nearly impossible. The damage appears permanent, suggesting algorithmic markers that persist despite content changes.

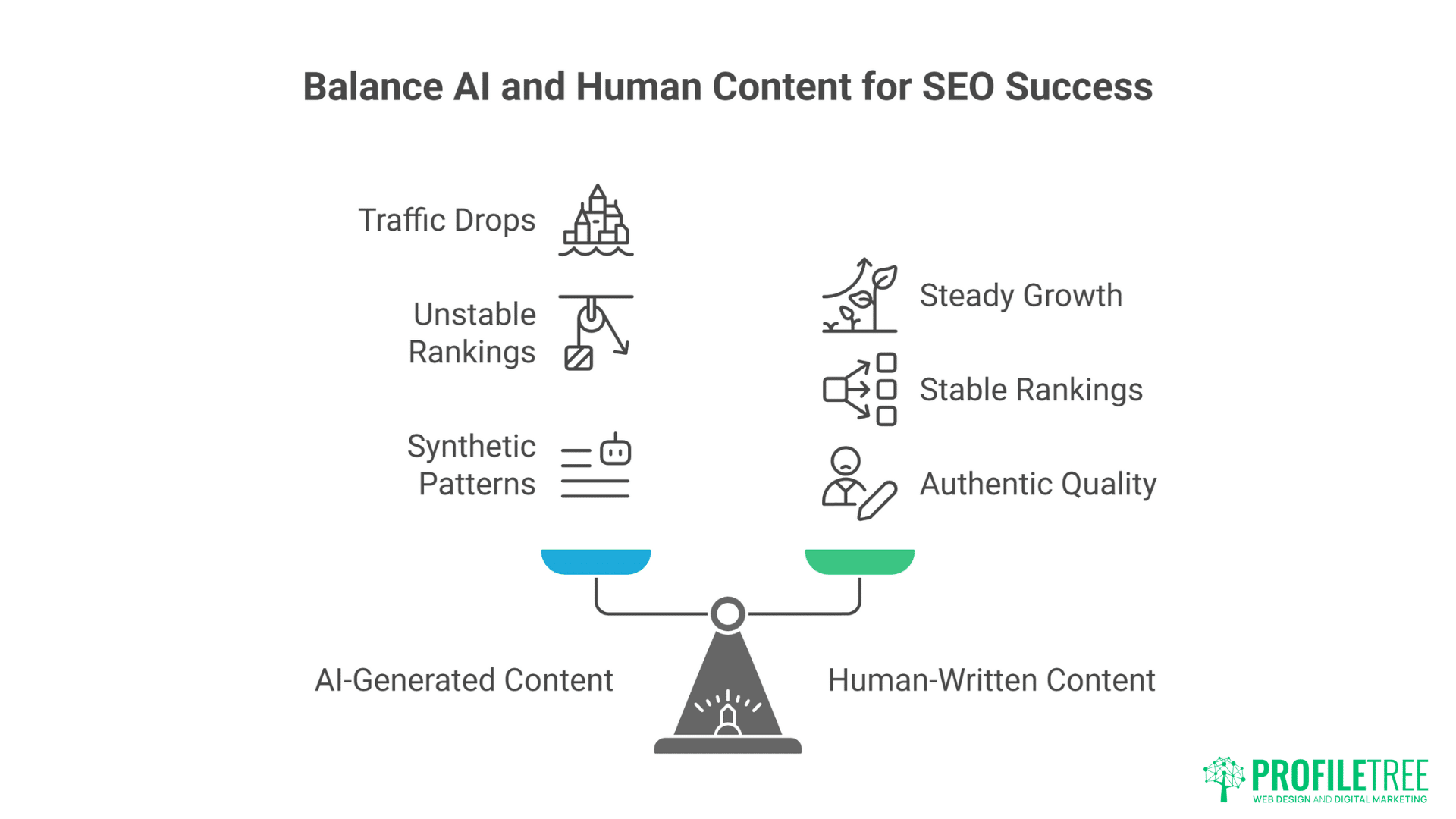

“We’ve observed consistent patterns across hundreds of client sites,” notes Ciaran Connolly, ProfileTree founder. “Those maintaining 80% human-written content see steady growth. Cross the 50% AI threshold, and rankings become unstable. Exceed 70% AI content, and you’re gambling with your entire organic presence. Google’s algorithms have become remarkably sophisticated at detecting synthetic content patterns, even when humans struggle to identify them.”

The helpful content update specifically targets what Google calls “content created primarily for search engines.” While they don’t explicitly mention AI, the correlation is undeniable. Sites using AI to mass-produce content targeting long-tail keywords saw devastating ranking losses. The update’s documentation mentions “content that seems to have been created using extensive automation” – a clear reference to AI generation without naming it directly.

Patent filings reveal Google’s true capabilities. Recent patents describe methods for identifying “synthetic text patterns,” “statistical anomalies in content distribution,” and “unnaturally consistent writing characteristics.” These technologies can detect AI content through subtle patterns invisible to human readers. The patents suggest Google analyses factors like sentence structure variation, vocabulary distribution, and semantic consistency at scales humans cannot perceive.

How Google Actually Detects AI Content

Google doesn’t openly admit every signal it uses to spot AI-generated text, but its algorithms leave clues. From analysing linguistic patterns to detecting unnatural keyword placement, the search engine can flag content that feels machine-made. Understanding these detection methods is crucial for creating AI-assisted content that remains safe and effective for SEO.

Statistical Fingerprints AI Can’t Hide

Every AI model leaves statistical signatures in its output. GPT models tend toward certain sentence structures, prefer specific transition words, and exhibit predictable paragraph lengths. Google’s algorithms analyse millions of these micro-patterns simultaneously. A human might read AI content and find it perfectly natural, but algorithmic analysis reveals telltale uniformity in linguistic choices that expose artificial origins.

Perplexity and burstiness measurements provide smoking guns. Human writing exhibits high perplexity – unpredictable word choices that surprise readers. AI content shows lower perplexity because models select statistically likely next words. Burstiness refers to variation in sentence length and complexity. Humans write with natural rhythm – short punchy sentences followed by longer elaborate ones. AI maintains unnaturally consistent sentence structures that algorithms easily identify.
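Burstiness is easy to approximate on your own drafts. The sketch below is a minimal illustration, assuming burstiness can be proxied by the coefficient of variation of sentence length (standard deviation divided by mean). The function names and the sample sentences are hypothetical, the sentence splitter is deliberately naive, and nothing here reflects how Google or any commercial detector actually scores text.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Assumed proxy only: higher values mean more varied sentence lengths.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)


# Hypothetical sample texts for comparison.
varied = (
    "Stop. The update landed on a Tuesday and nobody on the team "
    "saw it coming. Traffic fell."
)
uniform = (
    "The update landed on Tuesday. The team did not expect it. "
    "The traffic fell after that."
)

print(f"varied:  {burstiness(varied):.2f}")
print(f"uniform: {burstiness(uniform):.2f}")
```

A low score does not prove a draft is machine-written, and a high one does not prove it is human. Treat it as a prompt to reread a passage for rhythm, nothing more.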

Token distribution analysis can expose AI content through statistical irregularities. Human writers unconsciously follow Zipf’s law, under which a word’s frequency is roughly inversely proportional to its frequency rank, a pattern observed across natural languages. AI content often violates these distributions in subtle ways. Google’s algorithms can detect these violations across entire websites, identifying domains relying heavily on artificial generation.
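To see what a Zipf check looks like in practice, the sketch below fits a straight line to log(frequency) against log(rank); a slope near -1 is the classic Zipfian shape. This is an illustration under stated assumptions: the `zipf_slope` name and the tokenising regex are hypothetical choices, short texts give noisy slopes, and whether any search engine runs a test like this is not something the code can confirm.

```python
import math
import re
from collections import Counter


def zipf_slope(text: str) -> float:
    """Least-squares slope of log(frequency) against log(rank).

    A value near -1 matches the textbook form of Zipf's law.
    """
    words = re.findall(r"[a-z']+", text.lower())
    freqs = sorted(Counter(words).values(), reverse=True)
    if len(freqs) < 2:
        raise ValueError("need at least two distinct words")

    xs = [math.log(rank) for rank in range(1, len(freqs) + 1)]
    ys = [math.log(freq) for freq in freqs]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)

    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return covariance / variance


# Synthetic text whose word counts are exactly 12 / rank.
sample = " ".join(["a"] * 12 + ["b"] * 6 + ["c"] * 4 + ["d"] * 3)
print(round(zipf_slope(sample), 3))
```

Run it over a whole site's text rather than a single article; the fit only becomes meaningful with thousands of words.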

Semantic coherence patterns differentiate human from machine writing. Humans occasionally contradict themselves, change topics abruptly, or include seemingly random tangents. These imperfections actually signal authenticity. AI content maintains perfect semantic coherence that, paradoxically, reveals its artificial nature. The very consistency that makes AI content seem professional becomes its downfall.

Cross-referential analysis examines how content pieces relate across entire websites. Human-written sites show natural variation in how topics interconnect. AI-generated sites exhibit suspicious consistency in internal linking patterns, topic coverage distribution, and content depth variations. Google analyses these site-wide patterns, not just individual pages.

Behavioural Signals That Expose AI Content

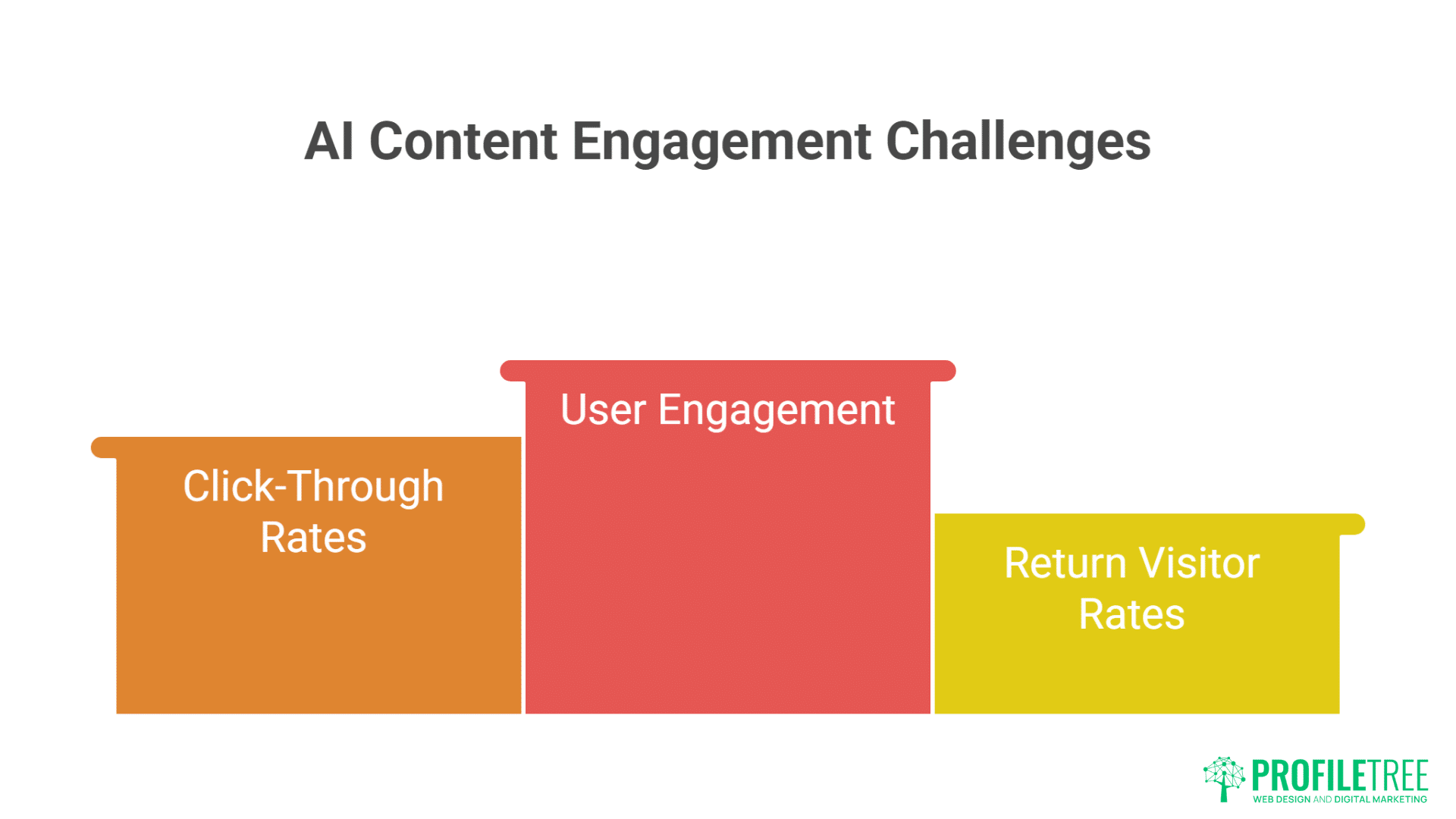

User engagement metrics reveal AI content’s fundamental weakness – it doesn’t truly connect with readers. Bounce rates for AI content average 15-20% higher than human-written equivalents. Time on page drops by 30-40%. Scroll depth decreases significantly. These behavioural signals tell Google that users aren’t finding value, regardless of how well-optimised the content appears.

Click-through rate patterns expose AI-generated meta descriptions and titles. AI tends toward certain formulations that initially seem effective but quickly become recognisable patterns. When thousands of sites use similar AI tools, their titles and descriptions converge toward similar structures. Google’s algorithms identify these patterns and potentially devalue sites exhibiting them.

Return visitor rates plummet for AI-heavy sites. Readers might not consciously recognise AI content, but they subconsciously feel its lack of authentic voice. They don’t bookmark these pages, don’t return for more content, don’t share with others. Google tracks these loyalty signals, using them to evaluate content quality beyond surface-level optimisation.

Search refinement behaviour indicates content inadequacy. When users read AI content then immediately return to search results, it signals failure to satisfy intent. AI content often provides generic information that seems relevant but lacks the specific insights users seek. These pogo-sticking patterns accumulate into negative quality signals.

Social signals – or their absence – damn AI content. Human-written content naturally attracts shares, comments, and discussions. AI content rarely generates genuine engagement because it lacks the personality, controversy, or insight that motivates social sharing. While Google claims not to use social signals directly, the correlation between social engagement and ranking performance remains strong.

Technical Markers Google’s Algorithms Target

Publishing velocity abnormalities trigger algorithmic scrutiny. Websites suddenly publishing 50 articles daily after months of sporadic updates wave red flags. Human writers have physical limits; AI doesn’t. These unnatural publishing patterns prompt deeper algorithmic investigation that often uncovers other AI indicators.
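You can check your own publishing history for this kind of spike before an algorithm does. The sketch below is a rough heuristic, assuming a "spike" means a day whose post count exceeds a chosen multiple of the median daily count. The `factor` default of 5 is an arbitrary illustrative threshold, not a figure from Google, and the median is taken only over days that had at least one post.

```python
from collections import Counter
from datetime import date
from statistics import median


def velocity_spikes(publish_dates: list[date], factor: float = 5.0) -> list[date]:
    """Return days whose post count exceeds `factor` times the median.

    The median is computed over days with at least one post, so long
    quiet periods do not drag the baseline down to zero.
    """
    per_day = Counter(publish_dates)
    if not per_day:
        return []
    baseline = median(per_day.values())
    return sorted(day for day, count in per_day.items() if count > factor * baseline)


# Hypothetical history: four sporadic posts, then 50 in a single day.
history = [date(2024, 1, d) for d in (1, 5, 9, 14)] + [date(2024, 1, 20)] * 50
print(velocity_spikes(history))
```

Feeding it the publish dates exported from your CMS gives a quick list of days worth explaining, whether the cause was AI generation, a site migration, or a bulk import.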

Content structure uniformity across multiple pages reveals templated generation. AI users often create prompts that produce consistent structures – introduction, three main points, conclusion. When dozens of pages follow identical structural patterns, it suggests automated generation. Google’s algorithms excel at identifying these templates, even when content differs superficially.

Factual accuracy problems plague AI content and damage trust signals. AI confidently states incorrect information, invents citations, and conflates different concepts. Google’s fact-checking algorithms identify these errors, using them as quality signals. Sites with high error rates face ranking penalties regardless of other optimisation efforts.

Image and multimedia integration patterns differ between human and AI content creation. Humans naturally integrate varied media types, using screenshots, original photos, and custom graphics. AI content tends toward stock photos or generic illustrations, if any images at all. These multimedia patterns contribute to overall quality assessments.

Internal linking structures expose automation. AI-generated content often exhibits unnatural internal linking – either too sparse or suspiciously systematic. Human writers link contextually based on genuine topical relationships. AI follows programmed patterns that appear artificial under algorithmic analysis.

Real-World Case Studies: The Carnage and The Survivors

The impact of AI-generated content isn’t just theoretical – it’s already playing out across websites worldwide. Some brands have seen their rankings plummet after over-relying on automation, while others have carefully balanced AI with human oversight to thrive. These real-world stories reveal the difference between reckless adoption and strategic survival.

The Disaster Stories

TechReviewHub switched to 90% AI content in January 2024, generating 2,000 product reviews monthly. Initial results seemed promising – traffic grew 40% in two months as they targeted thousands of long-tail keywords. By month four, organic traffic plummeted 70%. Manual review revealed the content was factually accurate and well-structured, but Google had clearly identified and penalised the domain. Recovery attempts including rewriting all content with human writers failed to restore rankings. The domain remains effectively blacklisted.

LocalBusinessDirectory.co.uk used AI to create location pages for every UK town and service combination. They generated 50,000 pages of seemingly unique content about “plumbers in [town]” and similar queries. The helpful content update destroyed their visibility overnight. Traffic dropped from 100,000 monthly visitors to under 5,000. The owner reported, “We thought we were being clever, scaling content creation efficiently. Instead, we destroyed five years of SEO work in three months.”

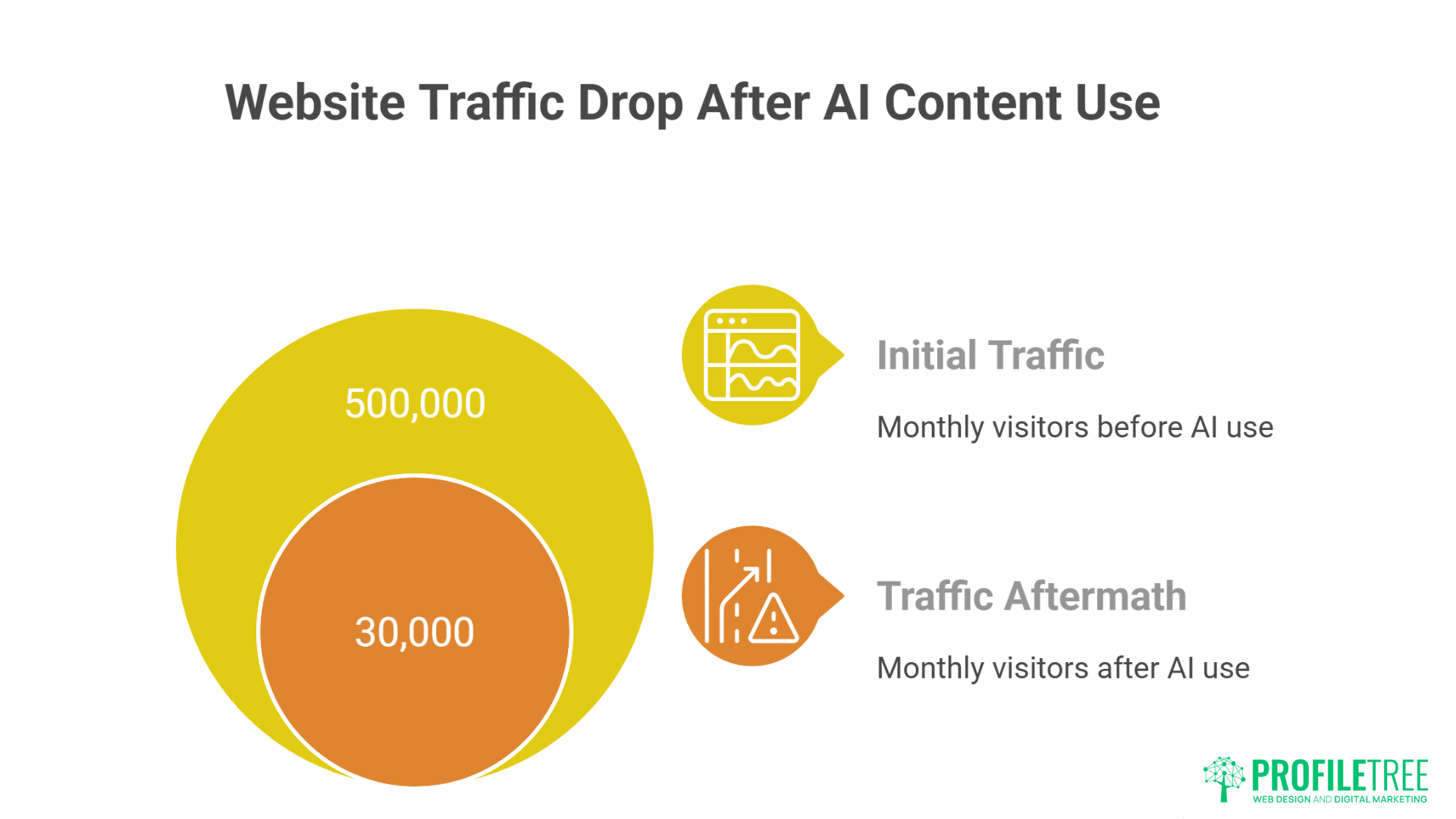

FashionBlogNetwork comprised 12 fashion sites using shared AI content with slight variations. All sites saw simultaneous ranking drops despite being on different domains with different designs. Google had identified the content similarity pattern across the network. Combined monthly traffic fell from 500,000 to 30,000 visitors. The network shut down within six months.

NutritionAdvicePortal mixed AI and human content 60/40, thinking this ratio would be safe. They maintained this blend for eight months before rankings collapsed. Investigation revealed Google wasn’t evaluating the ratio but rather the importance of pages – their high-traffic pages were AI-generated while human content occupied less important sections. The algorithm penalised based on what users actually encountered, not overall percentages.
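The gap between a page-count ratio and what visitors actually see is simple arithmetic. The sketch below uses made-up pages and visit numbers (the `Page` class, URLs, and figures are all hypothetical) to show how a site that is 60% AI by page count can be 90% AI by traffic.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    monthly_visits: int
    ai_generated: bool


def ai_share_by_pages(pages: list[Page]) -> float:
    """Fraction of pages that are AI-generated."""
    if not pages:
        raise ValueError("no pages supplied")
    return sum(p.ai_generated for p in pages) / len(pages)


def ai_share_by_traffic(pages: list[Page]) -> float:
    """Fraction of visits that land on AI-generated pages."""
    total = sum(p.monthly_visits for p in pages)
    if total == 0:
        raise ValueError("no traffic recorded")
    ai_visits = sum(p.monthly_visits for p in pages if p.ai_generated)
    return ai_visits / total


# Hypothetical site: three busy AI pages, two quiet human-written ones.
site = [
    Page("/guide-a", 9000, True),
    Page("/guide-b", 6000, True),
    Page("/guide-c", 3000, True),
    Page("/about", 1000, False),
    Page("/team", 1000, False),
]

print(ai_share_by_pages(site))    # 0.6
print(ai_share_by_traffic(site))  # 0.9
```

If the two numbers diverge sharply on your own site, the traffic-weighted figure is the one that describes what readers encounter.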

StartupResourceHub generated thousands of “how-to” guides using AI, each targeting specific business scenarios. Despite passing plagiarism checks and reading naturally, the site lost 80% of traffic in a single algorithm update. Post-mortem analysis revealed the content lacked the experiential insights that make how-to content valuable. Google’s algorithms had learned to identify this absence of genuine expertise.

The Success Stories

ProfileTree maintains strict AI content protocols that preserve rankings while improving efficiency. “We use AI for research, ideation, and initial drafts, but every piece receives substantial human editing, fact-checking, and enhancement with original insights,” explains Connolly. “AI might contribute 30% of the final content through research and structure, but human expertise provides the remaining 70% that actually ranks. This approach has helped our clients maintain steady organic growth while competitors using pure AI content have disappeared from search results.”

LegalAdviceNI.com uses AI exclusively for content briefs and outlines, with solicitors writing all published content. This hybrid approach increased publishing frequency 3x while maintaining expertise, authority, and trustworthiness. Their organic traffic grew 250% year-over-year. The key: AI handles administrative tasks while humans provide actual legal expertise that Google’s algorithms reward.

BelfastFoodBlog employs AI for social media posts and email newsletters but maintains 100% human-written website content. This strategic separation protects SEO while improving operational efficiency. They’ve seen consistent ranking improvements while competitors who switched to AI content have vanished from local search results.

TechTrainingAcademy uses AI to generate course outlines and supplementary materials but records all primary content as human-presented videos and detailed written guides. Their digital training programs demonstrate how AI assists without replacing human expertise. Rankings improved 40% after implementing this hybrid model because the content combined AI efficiency with genuine human teaching.

EcommerceMastery.ie leverages AI for product descriptions but adds unique human insights, user experience notes, and local market context to each. This approach scaled content creation 5x while maintaining quality signals Google values. Their strategy proves AI can enhance rather than replace human content creation when used thoughtfully.

The Helpful Content Update: Google’s AI Trap

Google’s Helpful Content Update was marketed as a way to reward genuinely useful, people-first content. But in practice, it has become a quiet filter for AI-heavy sites, demoting pages that feel generic or formulaic. What looks like guidance for quality can also function as a trap for those leaning too heavily on automation.

Decoding What “Helpful” Really Means

Google’s definition of “helpful” specifically excludes characteristics common to AI content. Helpful content demonstrates first-hand experience – something AI cannot possess. It shows expertise through nuanced understanding that goes beyond surface-level information aggregation. It exhibits authoritativeness through credentials, citations, and recognised expertise. AI fails these tests despite producing grammatically perfect, factually accurate content.

The update’s timing wasn’t coincidental. It launched just as AI content tools became mainstream, suggesting Google anticipated the flood of synthetic content. The update’s documentation uses phrases like “content that seems to have been primarily created to rank in search engines” – exactly what most AI content attempts. The algorithm identifies patterns indicating search-first rather than user-first content creation.

Recovery from helpful content penalties proves nearly impossible for AI-dependent sites. Google’s documentation mentions “a site-wide signal” that affects entire domains, not just individual pages. Once classified as unhelpful, sites require months of publishing genuinely helpful content before seeing recovery – if recovery happens at all. Many sites never regain their previous rankings despite complete content overhauls.

The update specifically targets content lacking “original information, reporting, research, or analysis.” AI excels at synthesising existing information but cannot conduct original research or provide genuinely new insights. This fundamental limitation means pure AI content will always fail Google’s helpfulness criteria, regardless of how well it’s optimised otherwise.

Manual actions have increased dramatically since the update, with Google’s quality raters specifically trained to identify AI patterns. These human reviewers provide training data for algorithmic improvements, creating a feedback loop that continuously improves AI detection. Sites flagged by manual reviewers face even steeper ranking declines than algorithmic penalties alone.

E-E-A-T and Why AI Always Falls Short

Experience represents AI’s insurmountable challenge. Google added the extra “E” specifically to combat AI content. Real experience means actually using products, visiting locations, or practising skills. AI can simulate experience through training data but cannot genuinely possess it. Google’s algorithms increasingly identify and reward content demonstrating authentic experience through specific details, personal anecdotes, and unique insights that AI cannot generate.

Expertise requires more than information aggregation. True expertise includes understanding context, recognising exceptions, and making judgment calls based on accumulated knowledge. AI provides information without understanding, creating content that appears expert but lacks the nuanced decision-making genuine expertise enables. Google’s algorithms identify this superficial expertise through various signals including factual errors, missing context, and oversimplified explanations.

Authoritativeness emerges from recognised credentials and peer recognition. AI content lacks authorship in any meaningful sense. While you can attach human names to AI content, the lack of genuine authorship becomes apparent through writing style inconsistencies, absence of personal voice, and missing biographical consistency across content pieces. Google tracks author entities across the web, identifying when supposed authors couldn’t possibly have produced attributed content.

Trustworthiness suffers when readers detect artificial generation. Even if they can’t articulate why, readers sense something “off” about AI content. This unconscious detection manifests in behavioural signals – reduced engagement, fewer return visits, minimal social sharing. Google interprets these signals as trust indicators, downranking content that fails to build genuine reader connection.

The compound effect of failing E-E-A-T creates insurmountable ranking challenges. Content might fail just one element initially, but the interconnected nature of these factors means failure cascades. Low experience leads to questionable expertise, which undermines authoritativeness, ultimately destroying trustworthiness. AI content typically fails all four factors simultaneously, explaining the severe ranking penalties observed.

Hidden Risks of AI Content Most SEOs Don’t Discuss

Most SEO discussions around AI content focus on speed and efficiency, but the deeper risks rarely get attention. From data privacy concerns to long-term dependency on third-party tools, the hidden pitfalls can quietly undermine a strategy. Addressing these blind spots is key to building a sustainable SEO approach in the AI era.

The Blacklist Effect

Domains heavily reliant on AI content appear to enter an unofficial blacklist that persists even after content replacement. Multiple SEO professionals report similar patterns: sites that crossed an AI content threshold never fully recover, regardless of remediation efforts. This suggests Google maintains historical signals about content generation methods that influence future rankings permanently.

The contamination spreads to new content published on affected domains. Even genuinely human-written articles struggle to rank on sites previously identified as AI-dependent. It’s as if Google assigns a “trust score” to domains that, once damaged by AI content, proves nearly impossible to restore. New domains often outperform established sites that experimented with AI content.

Cross-domain penalties affect entire portfolios when Google identifies common ownership. If one site in a network gets penalised for AI content, other sites face increased scrutiny. Several agency owners report client sites suffering ranking drops simply because other agency clients used AI content. Google’s entity recognition connects sites through various signals including analytics codes, hosting, and backlink patterns.

Historical snapshots preserve evidence of AI usage. Google caches and analyses historical versions of pages, meaning temporary AI content experiments leave permanent traces. Sites that briefly tested AI content before reverting to human writing still show ranking impacts months later. The algorithm appears to maintain long-term memory about content generation methods.

Recovery attempts often trigger additional penalties. Sites aggressively rewriting AI content sometimes face “manipulation” penalties for massive content changes. This creates a catch-22: keeping AI content ensures poor rankings, but replacing it triggers algorithmic suspicion. Professional SEO services help navigate these treacherous waters through gradual, strategic content improvement.

Competitive Weaponisation

Competitors increasingly use AI detection tools to identify and report rivals’ synthetic content. Google’s spam report form includes options for “auto-generated content” and “thin content with little or no added value” – both applicable to AI content. Organised reporting campaigns can trigger manual reviews that might not have occurred otherwise.

Negative SEO attacks now include flooding competitors with AI-generated backlinks from obviously artificial content. While Google claims to ignore spammy links, patterns suggest AI-associated backlinks might carry negative weight. Sites receiving thousands of links from AI content farms see ranking drops that disavow files don’t fully remedy.

Content scraping and spinning using AI creates duplicate content issues for original publishers. Scrapers use AI to rewrite stolen content just enough to avoid detection, then publish at scale. Original publishers face duplicate content penalties while the scrapers rank for the material they stole. This weaponisation of AI makes content protection more critical than ever.

Review bombing with AI-generated negative reviews damages local SEO and trust signals. While platforms attempt to detect fake reviews, AI makes them increasingly sophisticated. Businesses must monitor and respond to AI-generated attacks on their reputation, adding another layer of SEO complexity.

Copyright and trademark disputes emerge as AI-generated content inadvertently infringes intellectual property. AI trained on copyrighted material sometimes reproduces protected phrases, taglines, or concepts. Sites publishing such content face legal challenges beyond just SEO penalties.

The Update Cascade Problem

Each Google update seems increasingly calibrated to identify AI content patterns. What works today fails tomorrow as algorithms evolve. Sites investing heavily in AI content face constant volatility, never knowing when the next update will destroy their rankings. This instability makes business planning impossible.

Recovery periods lengthen with each successive update. Early helpful content update victims saw potential recovery within 3-4 months. Recent casualties report no meaningful recovery after 8-12 months of improvement efforts. The penalties appear to compound, making later adoption of AI content exponentially riskier.

Mobile-first indexing amplifies AI content problems. Mobile users exhibit even lower tolerance for generic content, showing higher bounce rates and shorter engagement times. As Google prioritises mobile experience, AI content’s poor mobile performance creates additional ranking challenges.

Core Web Vitals interactions with AI content create unexpected problems. AI-generated pages often lack proper image optimisation, contain excessive JavaScript from tracking codes, and exhibit layout shifts from dynamically inserted content. These technical issues compound content quality problems, creating multiple simultaneous ranking penalties.

International SEO suffers disproportionately from AI content. Machine translation combined with AI generation creates content that might pass basic quality checks but fails to resonate with local audiences. Cultural nuances, idioms, and regional preferences get lost, triggering location-specific penalties that harm global visibility.

Safe AI Integration Strategies That Actually Work

AI doesn’t have to be an SEO liability – it can be a powerful asset when used with intention. The key is knowing where to let automation take the lead and where human oversight is essential. With the right balance, businesses can scale content creation while staying aligned with Google’s quality standards.

The 70/30 Rule That Protects Rankings

Human-written content must dominate your most important pages. Your homepage, main service pages, and high-traffic blog posts should contain zero AI content. These pages receive the most algorithmic scrutiny and user attention. Protecting them from AI contamination preserves your site’s quality signals even if you experiment with AI elsewhere.

AI assistance for research and ideation poses minimal risk when properly implemented. Use AI to generate topic ideas, create content outlines, and compile research sources. Then write entirely original content based on these AI-assisted preparations. This approach maintains authenticity while improving efficiency. The final content contains no AI-generated text, avoiding detection entirely.

Supplementary content can safely incorporate higher AI percentages. FAQ sections, glossary entries, and technical documentation can include AI-generated elements if heavily edited and fact-checked. These pages typically receive less traffic and scrutiny. However, even here, maintain at least 30% human-written content to avoid triggering site-wide penalties.

Time-sensitive content benefits from AI assistance without long-term SEO risk. News summaries, event announcements, and temporary promotional content can use AI more liberally since they naturally expire. Google expects less depth from time-sensitive content, and its temporary nature limits long-term ranking impact. Still, avoid publishing pure AI content even temporarily.

Internal content that doesn’t target search rankings can use AI freely. Email newsletters, internal documentation, and gated resources won’t affect SEO if they’re not indexed. This strategic separation lets you leverage AI efficiency for non-SEO content while protecting your search visibility. Content marketing strategies help determine which content types suit AI assistance.

Human Enhancement Techniques

Layer human expertise over AI foundations to create genuinely valuable content. Start with AI-generated structure and basic information, then add personal experiences, case studies, and professional insights. This enhancement transforms generic AI output into authoritative content that satisfies E-E-A-T requirements.

Fact-checking and verification add crucial trust signals. AI confidently states incorrect information that damages credibility. Rigorous fact-checking, with linked sources and citations, demonstrates the human oversight Google rewards. Document your verification process in author bios or editorial notes to further establish trustworthiness.

Voice injection makes AI content undetectable. Rewrite AI output in your brand’s distinctive voice, adding personality, opinions, and stylistic flourishes that AI cannot replicate. This human voice becomes your defence against detection – algorithms struggle to identify AI content that’s been thoroughly humanised.

Local context and cultural references ground content in reality. Add references to local events, businesses, and landmarks that AI wouldn’t know. Mention specific Belfast restaurants, reference Northern Ireland weather patterns, or discuss local business challenges. These authentic details prove human authorship while improving local SEO.

Visual content creation remains safely human-dominated. Original photography, custom graphics, and hand-drawn illustrations provide uniqueness AI cannot match. Invest in original visual content to differentiate from AI-dependent competitors. Even simple smartphone photos outperform stock imagery for establishing authenticity.

Testing and Monitoring Protocols

Implement AI detection tools on your own content before publishing. Tools like Originality.ai, GPTZero, and Content at Scale’s AI detector help identify potentially problematic content. If these tools detect AI patterns, Google’s more sophisticated algorithms certainly will. Never publish content that fails multiple detection tests.

A/B testing reveals AI content’s true impact on user engagement. Publish similar content with varying AI percentages and monitor behavioural metrics. Track bounce rates, time on page, and conversion rates. This data reveals your audience’s tolerance for AI content and helps establish safe usage thresholds.
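When comparing bounce rates between two variants, it helps to check that the gap is larger than random noise. The sketch below applies a standard two-proportion z-test; the function name and sample figures are hypothetical, and it assumes large samples of independent visits, which real analytics data only approximates.

```python
import math


def bounce_rate_z_test(
    bounces_a: int, visits_a: int, bounces_b: int, visits_b: int
) -> tuple[float, float]:
    """Two-proportion z-test on bounce rates.

    Returns (z, two-sided p-value). A positive z means variant A
    bounces more often than variant B.
    """
    if visits_a <= 0 or visits_b <= 0:
        raise ValueError("each variant needs at least one visit")

    rate_a = bounces_a / visits_a
    rate_b = bounces_b / visits_b
    pooled = (bounces_a + bounces_b) / (visits_a + visits_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    if std_err == 0:
        raise ValueError("pooled bounce rate of 0% or 100% cannot be tested")

    z = (rate_a - rate_b) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical test: AI-heavy variant A vs human-edited variant B.
z, p = bounce_rate_z_test(bounces_a=600, visits_a=1000, bounces_b=500, visits_b=1000)
print(f"z = {z:.2f}, p = {p:.6f}")
```

A small p-value says the difference is unlikely to be chance; it does not say why readers bounced, so pair the number with a look at the pages themselves.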

Regular content audits identify creeping AI dependency. Teams often gradually increase AI usage without realising it. Monthly audits tracking AI percentage across all content prevent unconscious drift toward dangerous thresholds. Document AI usage in editorial calendars for transparency and control.

Ranking volatility monitoring provides early warning of problems. Daily rank tracking for key pages reveals algorithmic responses to content changes. Sudden drops after publishing AI-heavy content indicate detection. Immediate content replacement might prevent site-wide penalties if caught early enough.
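A basic early-warning check can be run on exported rank-tracking data. The sketch below flags any day where a page's position is worse than its trailing average by more than a set number of places. The `window` and `threshold` defaults are illustrative guesses rather than recommended values, and the sample ranks are invented.

```python
def rank_drops(daily_ranks: list[int], window: int = 7, threshold: float = 5.0) -> list[int]:
    """Return indices of days with a sudden ranking drop.

    A day is flagged when its position is more than `threshold` places
    worse (numerically higher) than the mean of the previous `window` days.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    alerts = []
    for i in range(window, len(daily_ranks)):
        baseline = sum(daily_ranks[i - window:i]) / window
        if daily_ranks[i] - baseline > threshold:
            alerts.append(i)
    return alerts


# Hypothetical page: stable around position 4-5, then falls to 18-19.
ranks = [4, 5, 4, 4, 5, 4, 4, 5, 18, 19]
print(rank_drops(ranks))  # [8, 9]
```

Because the baseline is a trailing average, a sustained drop stops triggering alerts once the new position fills the window, so act on the first flag rather than waiting for a pattern.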

Competitive analysis reveals industry-specific AI tolerance. Monitor competitors using AI content to understand niche-specific impacts. Some industries face stricter scrutiny than others. YMYL (Your Money or Your Life) niches show virtually zero tolerance for AI content, while others permit slightly higher percentages.

What Google Really Wants (And How to Deliver It)

At its core, Google isn’t chasing AI or manual content – it’s chasing value for the user. The search engine’s true priority is relevance, trustworthiness, and depth that solves real problems. Brands that focus on these fundamentals can thrive regardless of the tools they use to create content.

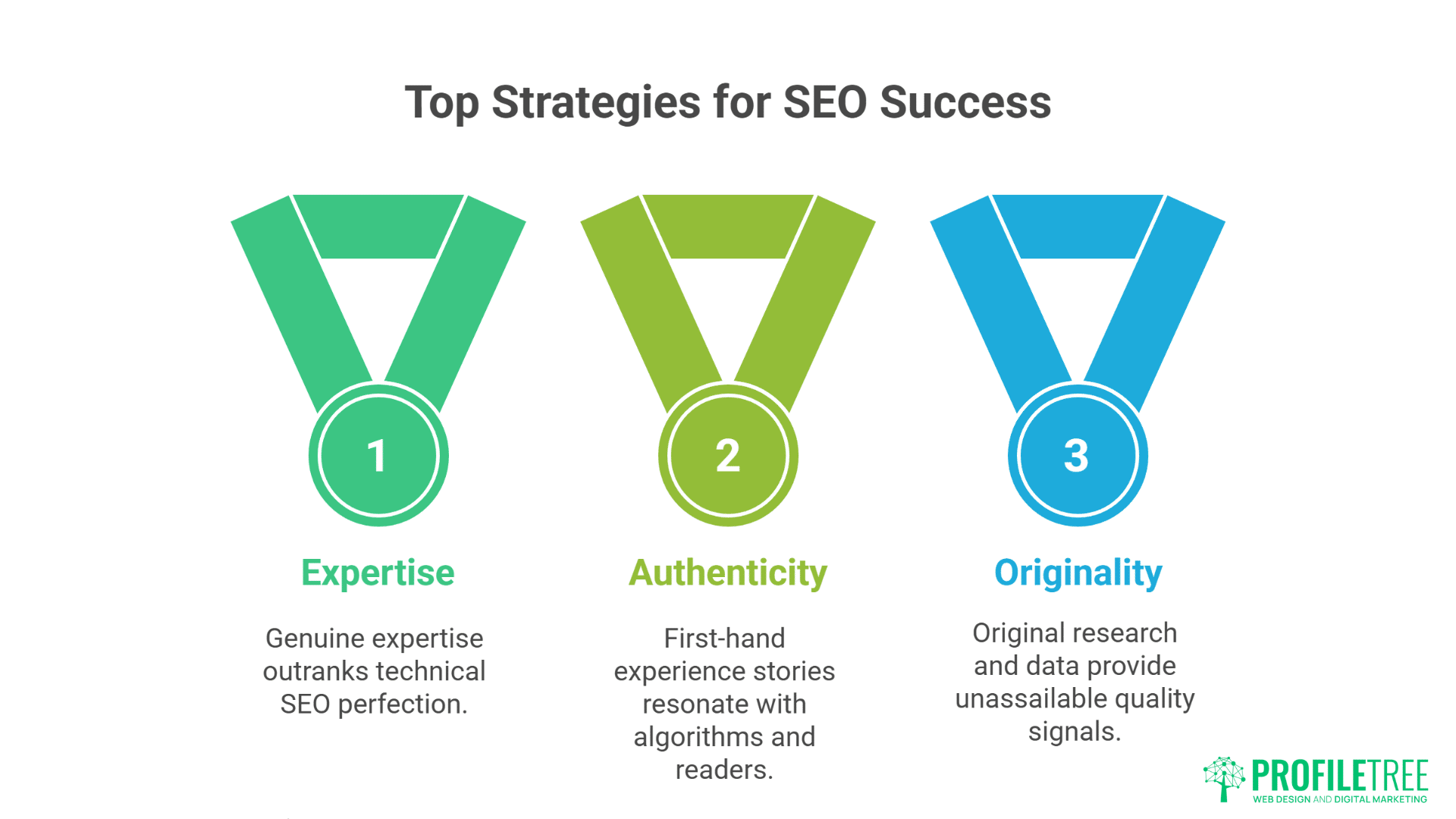

Authentic Expertise Beats Perfect Optimisation

Google increasingly rewards genuine expertise over technical SEO perfection. A poorly optimised page from a recognised expert outranks perfectly optimised AI content. This shift reflects Google’s evolution from keyword matching to understanding and rewarding genuine value. Your expertise, properly demonstrated, provides sustainable competitive advantage that AI cannot replicate.

First-hand experience stories resonate with both algorithms and readers. Describe specific challenges you’ve faced, solutions you’ve discovered, and lessons you’ve learned. These narratives can’t be faked by AI because they require lived experience. Include specific details – dates, locations, people involved – that establish authenticity.

Professional credentials matter more than ever. Display qualifications, certifications, and professional memberships prominently. Link to professional profiles, published work, and speaking engagements. Google’s entity recognition connects these signals across the web, building authoritativeness that protects against ranking volatility.

Original research and data provide unassailable quality signals. Conduct surveys, analyse proprietary data, or compile unique statistics. This original information can’t exist in AI training data, making it impossible to replicate. Sites publishing original research see consistent ranking improvements regardless of algorithm updates.

Community engagement demonstrates ongoing expertise. Respond to comments, participate in industry discussions, and maintain active professional profiles. These interactions create entity signals that establish you as a real person with genuine expertise. AI cannot replicate the authenticity of this ongoing engagement.

Building Unshakeable Trust Signals

Transparent authorship prevents AI suspicion. Include detailed author bios with photos, credentials, and social media links. Make authors contactable through legitimate email addresses and professional profiles. This transparency proves human authorship while building trust with readers and search engines alike.

Documenting your editorial processes shows a commitment to content quality. Publish editorial guidelines, fact-checking procedures, and correction policies. Describe how content gets created, reviewed, and updated. This transparency demonstrates the human oversight that distinguishes quality publishers from AI content farms.

Update frequency and freshness indicate ongoing human involvement. Regular content updates with new information, changed circumstances, or evolved understanding show active management. AI content published in bulk tends to remain static after publication. Dynamic, evolving content proves human stewardship that Google rewards.

User-generated content provides authenticity AI cannot fake. Comments, reviews, and community contributions create unique value. Moderate and respond to user content, creating dialogues that demonstrate genuine human interaction. These conversations become ranking assets that competitors using AI cannot replicate.

Video content integration provides strong authenticity signals. Create video content that complements written articles. Even simple talking-head videos prove human involvement. Google increasingly favours multimedia content, and video remains largely resistant to convincing AI generation. Our guide to commercial video production shows how to create authentic video content that boosts SEO.

Future-Proofing Your Content Strategy

Invest in brand building beyond search dependency. Strong brands survive algorithm changes that destroy search-dependent sites. Build email lists, social media followings, and direct traffic sources. When people search for your brand specifically, you’re insulated from updates targeting content quality.

Develop proprietary methodologies and frameworks. Create unique approaches to solving problems in your industry. Name these methodologies and consistently reference them across content. This intellectual property becomes a moat that AI cannot cross and Google recognises as genuine value addition.

Focus on conversion over traffic volume. A smaller audience of engaged readers outweighs masses of visitors who immediately bounce. Optimise for user satisfaction rather than search volume. Google’s algorithms increasingly recognise and reward sites that satisfy user intent rather than just attracting clicks.

Build topical authority through comprehensive coverage. Cover topics exhaustively from multiple angles rather than targeting individual keywords. This depth demonstrates expertise while creating internal linking opportunities. Comprehensive topical coverage remains beyond AI’s current capabilities, protecting your rankings.

Establish thought leadership through contrarian perspectives. Challenge industry assumptions, propose new frameworks, and offer unique viewpoints. This originality can’t be generated by AI trained on consensus content. Thought leadership creates linking opportunities and brand recognition that transcend algorithm changes.

Recovery Strategies for AI-Damaged Sites

When AI-generated content backfires, the damage to rankings and visibility can feel overwhelming. Yet recovery is possible with the right mix of auditing, pruning, and rebuilding authentic value. By re-establishing trust signals and prioritising human-centred content, sites can regain lost ground and even emerge stronger.

The Gradual Replacement Method

Sudden mass content replacement triggers algorithmic suspicion. Instead, replace AI content gradually over 6-12 months. Start with your most important pages, replacing 10-15% monthly. This measured approach avoids triggering manipulation penalties while progressively improving quality signals.

Prioritise replacement based on traffic and conversion impact. Your highest-traffic pages need immediate attention. Money pages driving conversions come next. Informational content can wait longer. This prioritisation ensures maximum impact from limited resources while protecting revenue during recovery.
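The prioritisation and pacing described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed workflow: the `Page` fields, the `replacement_batches` function, and the default of 10% per month are hypothetical names and values chosen to mirror the 10-15% monthly guideline, and "highest traffic first, conversions as tie-breaker" is one reasonable reading of the ordering.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    monthly_visits: int
    monthly_conversions: int
    is_ai_generated: bool


def replacement_batches(pages, monthly_percent=10):
    """Split AI-generated pages into monthly rewrite batches.

    Pages are ordered by traffic, then conversions, so the most
    valuable pages are rewritten first. monthly_percent is the share
    of the AI-generated backlog rewritten each month (assumed 10-15).
    """
    if not 0 < monthly_percent <= 100:
        raise ValueError("monthly_percent must be between 1 and 100")

    queue = sorted(
        (p for p in pages if p.is_ai_generated),
        key=lambda p: (p.monthly_visits, p.monthly_conversions),
        reverse=True,
    )
    if not queue:
        return []

    # Ceiling division with integers avoids floating-point surprises.
    batch_size = max(1, -(-len(queue) * monthly_percent // 100))
    return [queue[i:i + batch_size] for i in range(0, len(queue), batch_size)]
```

With 20 AI-generated pages, 10% a month gives ten batches of two pages, while 15% gives seven batches, which sits inside the 6-12 month window suggested above.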

Document the replacement process publicly. Create a “content quality initiative” page explaining your commitment to improving content quality. This transparency might earn algorithmic forbearance while demonstrating good faith efforts to provide value. Some sites report faster recovery when openly acknowledging and addressing content quality issues.

Preserve URL structures during replacement to maintain any remaining equity. Changing URLs resets algorithmic evaluation, potentially extending recovery time. Keep the same URLs but completely rewrite content, maintaining any existing rankings while improving quality signals.

Monitor recovery metrics beyond just rankings. Track engagement improvements, returning visitor increases, and conversion rate changes. These positive signals might precede ranking recovery, indicating you’re moving in the right direction even if rankings haven’t yet responded.
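As a rough illustration of tracking these signals, the sketch below compares a baseline period with a current period and reports the percentage change for each metric. The metric names and the `recovery_signals` helper are hypothetical; the figures would come from whatever analytics export you already use.

```python
def percent_change(baseline, current):
    """Return the percentage change from baseline to current."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


def recovery_signals(baseline, current):
    """Compare two metric snapshots (dicts keyed by metric name).

    Returns the percentage change per metric, rounded to one decimal.
    Positive values suggest the site is moving in the right direction.
    """
    return {
        name: round(percent_change(baseline[name], current[name]), 1)
        for name in baseline
    }
```

A steady rise in returning visitors or conversion rate across several months is the kind of early signal worth noting, even while rankings stay flat.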

The Clean Slate Approach

Sometimes starting fresh outperforms prolonged recovery attempts. If your domain faces severe AI-related penalties, launching a new site might prove faster than rehabilitation. This nuclear option sacrifices existing authority but escapes algorithmic purgatory.

Migration strategies preserve some value from penalised sites. Redirect only your best, human-written content to the new domain. Let AI content die with the old site. This selective migration transfers value while leaving penalties behind. Implement redirects gradually to avoid triggering spam signals.
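A selective migration of this kind boils down to a redirect map that includes only the pages worth keeping. The Python sketch below assumes you already have a list of old URLs flagged as human-written or not; the `build_redirect_map` function and the example domains are illustrative, and the resulting map would still need to be translated into your server's own redirect rules.

```python
from urllib.parse import urlsplit, urlunsplit


def build_redirect_map(pages, new_domain):
    """Map old URLs to the new domain for human-written pages only.

    pages is an iterable of (old_url, is_human_written) pairs.
    AI-generated pages are left out, so they receive no redirect.
    """
    redirects = {}
    for old_url, is_human_written in pages:
        if not is_human_written:
            continue  # no redirect: this page is left behind on the old site
        parts = urlsplit(old_url)
        # Keep the path and query so each page maps to its equivalent URL.
        redirects[old_url] = urlunsplit(
            (parts.scheme, new_domain, parts.path, parts.query, parts.fragment)
        )
    return redirects
```

Keeping the path unchanged means every retained page has a one-to-one equivalent on the new domain, which makes it easier to roll redirects out in stages.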

Brand preservation during domain changes requires careful planning. Notify customers, update business listings, and maintain consistent branding. Explain the change as a technological upgrade rather than admitting SEO problems. Most customers care about finding you, not understanding your SEO challenges.

Link reclamation from the old domain needs a strategic approach. Contact sites linking to your valuable content, requesting link updates to the new domain. Don’t redirect everything – let toxic or AI-associated pages return a 404. This selective approach preserves valuable links while abandoning problematic associations.

Timeline expectations for clean slate approaches vary considerably. New domains typically need 6-12 months to establish authority. However, this might prove faster than recovering a severely penalised domain. Professional web development ensures your new site avoids past mistakes while building sustainable rankings.

FAQs

Does Google actually penalise AI content, or is this fear-mongering?

Google doesn’t explicitly penalise AI content but absolutely identifies and devalues low-quality synthetic content. The helpful content update targets characteristics common to AI output – lack of expertise, missing first-hand experience, and absence of original insights. Sites crossing approximately 50-70% AI content consistently see ranking declines. This isn’t fear-mongering but observable pattern recognition across thousands of cases.

Can Google really detect AI content that passes human review?

Yes, through statistical analysis impossible for humans to perform. Google analyses millions of micro-patterns simultaneously – word frequency distributions, sentence structure variations, semantic coherence patterns. AI content that seems perfect to humans exhibits mathematical signatures algorithms easily identify. Recent patent filings confirm Google’s capability to detect synthetic content through patterns humans cannot perceive.

Is AI content bad for SEO in all cases?

AI content itself isn’t inherently bad, but pure AI content consistently fails to meet Google’s quality standards. AI-assisted content, where humans provide expertise, experience, and editing, can perform well. The problem arises when sites publish raw AI output without substantial human enhancement. Using AI for research, ideation, and initial drafts while maintaining human authorship protects rankings.

How much AI content is safe to use without risking penalties?

Based on extensive observation, keeping AI content below 30% of your total site content appears relatively safe. However, this depends on implementation quality and your site’s existing authority. High-authority sites might tolerate slightly higher percentages. New sites should maintain even lower ratios. Never use AI for money pages or cornerstone content regardless of overall percentages.
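To check where a site stands against that figure, a simple ratio is enough. The sketch below measures the share by page count, which is an assumption: the 30% guideline above does not specify whether it refers to pages, words, or something else. The function names and the `max_share` default are illustrative.

```python
def ai_content_share(total_pages, ai_pages):
    """Return the fraction of pages that are AI-generated."""
    if total_pages <= 0:
        raise ValueError("total_pages must be positive")
    if not 0 <= ai_pages <= total_pages:
        raise ValueError("ai_pages must be between 0 and total_pages")
    return ai_pages / total_pages


def within_guideline(total_pages, ai_pages, max_share=0.30):
    """True if the AI share is strictly below max_share (assumed 30%)."""
    return ai_content_share(total_pages, ai_pages) < max_share
```

For a 200-page site, 50 AI-generated pages is a 25% share and passes the check, while 60 pages reaches 30% and does not. New sites may want to pass a lower `max_share`.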

What’s the difference between AI detection tools and Google’s detection methods?

Commercial AI detection tools use relatively simple pattern recognition compared to Google’s sophisticated analysis. If tools like Originality.ai detect AI content, Google certainly will. However, content passing these tools might still trigger Google’s more advanced detection. Google analyses behavioural signals, site-wide patterns, and entity relationships that commercial tools cannot access.

Can I recover from AI content penalties?

Recovery is possible but difficult and slow. Complete content replacement with high-quality human writing, combined with strong E-E-A-T signals, sometimes restores rankings after 6-12 months. However, many sites never fully recover, suggesting some algorithmic memory of AI usage. The best recovery often involves starting fresh with a new domain rather than rehabilitating penalised sites.

Should I use AI for content at all given these risks?

AI remains valuable for specific use cases – research, ideation, initial drafts, and non-SEO content. The key is never publishing pure AI output as final content. Use AI to enhance human productivity rather than replace human creativity. Our AI training services teach safe AI integration that improves efficiency without risking rankings.

How can small businesses compete without using AI for content creation?

Focus on quality over quantity. One excellent, experience-based article outperforms ten generic AI pieces. Leverage your unique expertise, local knowledge, and customer relationships. Create multimedia content combining written and video elements. Build topical authority through comprehensive coverage rather than chasing keyword volume. A small business’s authenticity provides a competitive advantage against AI-dependent competitors.

The Verdict: Navigate AI Content Carefully or Pay the Price

The evidence is overwhelming: pure AI content poses an existential risk to your SEO. Google’s algorithms grow more sophisticated daily at identifying synthetic content, and penalties become increasingly severe. Sites that rushed to embrace AI content are paying the price with devastated rankings that might never recover. The promise of unlimited, free content creation has become a trap that has destroyed years of SEO equity.

Yet AI isn’t inherently evil for SEO – it’s a powerful tool being dangerously misused. The businesses succeeding with AI understand its proper role: assistant, not replacement. They use AI for research, ideation, and efficiency while maintaining human expertise, experience, and editorial oversight. This balanced approach delivers AI’s benefits without triggering algorithmic penalties.

ProfileTree helps businesses navigate these treacherous waters. Our SEO services incorporate safe AI usage that enhances rather than replaces human expertise. Our content marketing strategies blend AI efficiency with authentic human insight that Google rewards. We’ve watched competitors destroy their rankings with pure AI content while our clients maintain steady growth through strategic human-AI collaboration.

“The future belongs to businesses that view AI as a tool, not a solution,” concludes Connolly. “Those expecting AI to handle their content creation will watch their rankings evaporate. Those using AI to amplify human expertise will dominate search results. The choice you make today determines whether you’ll have organic traffic tomorrow.”

The question isn’t whether to use AI – it’s how to use it without destroying everything you’ve built. Every piece of pure AI content you publish is a gamble with your entire online presence. The odds get worse with each Google update. Protect your rankings by maintaining human oversight, demonstrating genuine expertise, and never crossing the threshold where algorithms classify your site as AI-dependent. Your organic traffic depends on making the right choice now, before it’s too late.