Integrating AI with Existing IT Systems: A Practical Guide

Table of Contents

Most IT integration projects stall before they deliver anything. A business invests in an AI tool, hands it to the technical team, and discovers that the underlying data infrastructure was not built to support it. The model underperforms, the project loses momentum, and leadership writes off AI as overhyped.

The problem is rarely the AI itself. It is the gap between modern machine learning systems and IT environments that were designed a decade or more before those systems existed. Bridging that gap requires a structured approach, not a rushed deployment.

This guide sets out a practical roadmap for integrating AI with existing IT systems, with particular attention to the legacy infrastructure challenges facing UK and Irish businesses. It covers the core integration framework, architectural patterns, regulatory compliance, and how to keep AI systems performing well beyond the initial launch.

Why AI Integration Fails in Legacy Environments

Understanding why AI integration projects fail is arguably more valuable than understanding why they succeed. The failure patterns are consistent across sectors, and most of them originate in decisions made long before any AI tool was selected. Organisations that go into integration with a clear picture of these risks are far better positioned to avoid them.

Data Silos and Architectural Rigidity

The single most common reason AI projects underperform is fragmented data. When customer records sit in one system, transactional data sits in another, and operational logs exist in a third, an AI model cannot build a coherent picture of the business. It trains on incomplete signals and produces incomplete outputs.

This is compounded by architectural rigidity. Legacy systems were often built around proprietary formats and closed APIs. Connecting a modern AI layer to a 15-year-old on-premise ERP is not a configuration task; it requires deliberate engineering work. Organisations that underestimate this consistently overspend and underdeliver.

UK businesses face this particularly acutely. A significant proportion of the country’s manufacturing, financial services, and public sector organisations still run systems that predate cloud computing. The opportunity cost of not addressing this is growing as competitors with more flexible infrastructure move faster.

For a grounded look at how SMEs are currently approaching this challenge, the SME AI implementation case studies on the ProfileTree blog offer practical reference points from businesses at different stages of readiness.

The Latency and Throughput Gap

AI systems built on large language models or real-time machine learning pipelines have very different performance expectations from traditional business software. A CRM system built to process a few hundred transactions per hour was not designed to handle the continuous data throughput that modern AI requires.

When this gap is not addressed before deployment, the result is slow inference times, failed API calls, and a degraded user experience that undermines confidence in the technology. The fix is rarely just more computing power. It usually requires rethinking how data moves between systems, which means confronting the architecture rather than working around it.

The lesson is straightforward: assess infrastructure capacity before selecting an AI model, not after. The model’s requirements should drive the infrastructure conversation, not the other way round.

Underestimating the Human Element

Technical debt is visible. The human resistance to change is harder to see until it has already derailed a project. Staff who feel that AI threatens their role, or who simply do not understand what the new system is doing, will find ways to work around it.

Effective change management is not a soft add-on to an integration project. It is a core workstream. Teams need to understand what the AI is being asked to do, how its outputs should be interpreted, and what their own responsibilities are in maintaining data quality. Without that foundation, even a technically sound integration will struggle to deliver sustained value.

ProfileTree’s work supporting businesses with AI adoption change management consistently shows that the organisations with the smoothest integrations are those that invest in people-readiness alongside technical readiness.

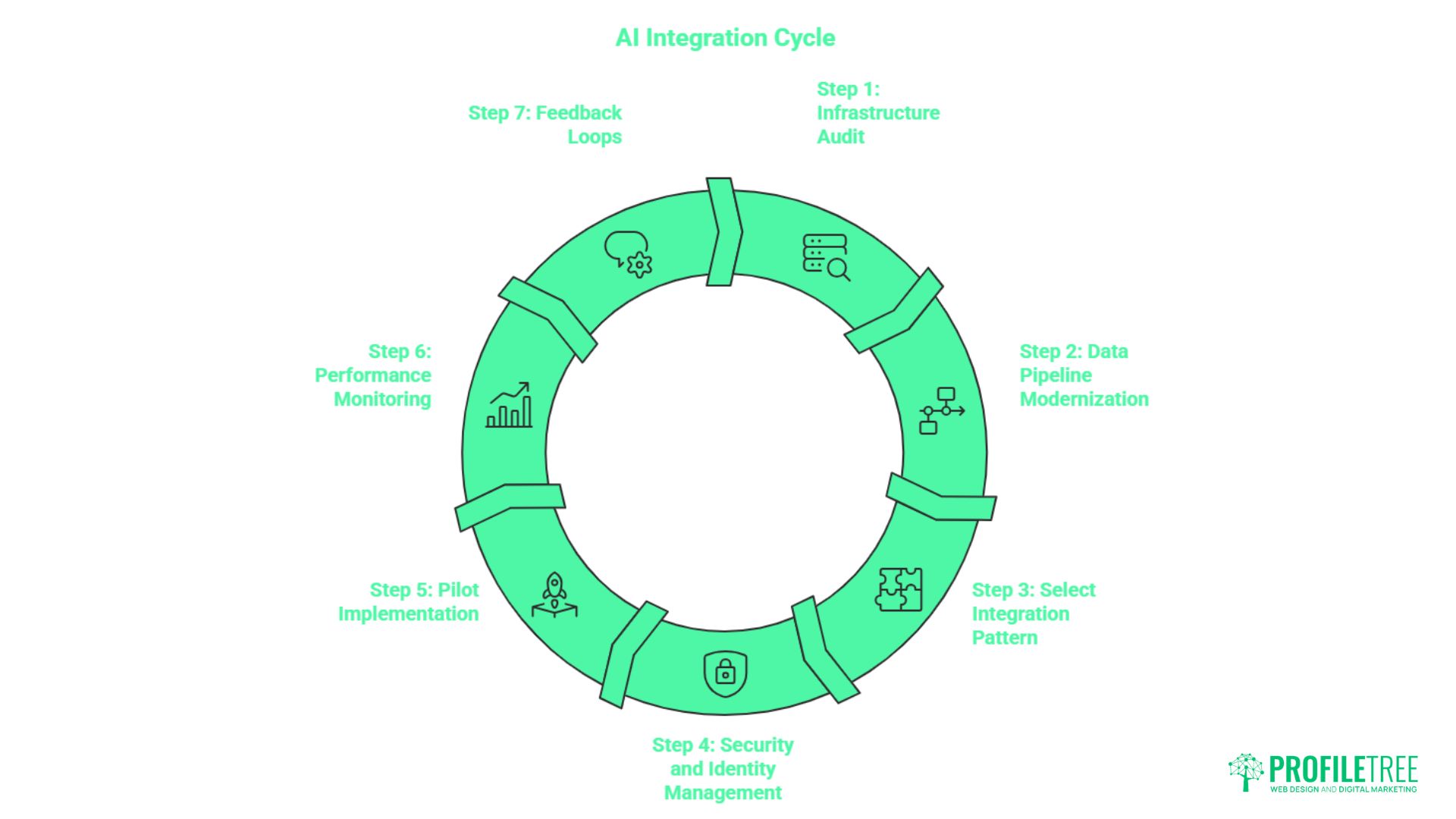

The 7-Step Framework for Successful AI Integration

There is no universal template for AI integration, but there is a reliable sequence. The seven steps below outline the order in which decisions must be made, with each stage creating the conditions for the next. Skipping steps is the most reliable way to create problems that are expensive to fix later.

Step 1: Infrastructure Audit and Technical Debt Assessment

Before any AI tool is selected, map what you have. Identify every system that holds data relevant to the intended AI use case, document how those systems connect (or fail to connect), and flag the technical debt that will need to be resolved before integration is viable.

This audit should include database schemas, API availability, data formats, and the volume and velocity of data each system generates. It is also the moment to identify security vulnerabilities in existing infrastructure, as these become substantially more consequential once an AI layer is added.

An honest assessment of technical debt at this stage will save high costs and delay later. ProfileTree’s AI implementation challenges guide outlines the most common audit findings and how to address them systematically.

Step 2: Data Pipeline Modernisation

Once the audit is complete, the next task is ensuring data can flow reliably from source systems to the AI layer. This typically means moving from traditional Extract, Transform, Load (ETL) processes to more flexible Extract, Load, Transform (ELT) approaches that can handle larger volumes and more varied data structures.

Data quality is the priority at this stage. Inconsistent formats, missing values, and duplicate records will all surface as problems in AI outputs if they are not addressed in the pipeline. Cleaning and standardising data before it reaches the model is far cheaper than diagnosing model errors after the fact.

For businesses working with older on-premise systems, this often means building a data lake or data warehouse as an intermediate layer. That infrastructure investment is not glamorous, but it is the foundation on which everything else depends.

Step 3: Selecting Your Integration Pattern

There are three primary integration patterns to choose from, and the right choice depends on your existing architecture, data privacy requirements, and the nature of the AI application.

API-first integration connects the AI model to existing systems through standardised interfaces. It is the fastest to deploy and the most flexible, but it introduces a dependency on third-party AI services and raises questions about where data is processed.

Custom on-premise deployment keeps the model within your own infrastructure. This is the preferred approach for organisations handling sensitive data or operating in regulated sectors, but it requires significantly more internal resources to maintain.

Hybrid cloud architecture splits the workload: sensitive data stays on-premise, while less sensitive processing moves to the cloud. This is increasingly the default for mid-market UK organisations that need flexibility without sacrificing compliance.

Step 4: Security and Identity Access Management Integration

AI systems introduce new attack surfaces. They access data from multiple sources, generate outputs that may feed into business decisions, and often interact with external APIs. Each of these points is a potential vulnerability if access controls are not properly configured.

Identity Access Management (IAM) needs to be integrated from the start, not retrofitted. Define who can query the AI system, what data it can access, and under what conditions outputs can be acted upon. Audit logs should capture all interactions with the system, both for security purposes and for regulatory compliance.

The practical guide to protecting user data and storage provides a solid reference framework for organisations setting up these controls for the first time.

Step 5: Pilot Implementation Using the Sidecar Approach

Rather than replacing a core system with an AI-enabled equivalent, the sidecar approach runs the AI layer in parallel with the existing system. Both processes use the same inputs, and outputs are compared before the AI system is trusted to operate independently.

This approach limits risk significantly. If the AI produces unexpected results during the pilot, the existing system continues to operate without disruption. The pilot period also generates the real-world performance data needed to refine the model before full deployment.

“The biggest mistake we see is businesses trying to go from zero to full AI dependency in one step,” says Ciaran Connolly, founder of ProfileTree. “A phased pilot approach gives you evidence, builds internal confidence, and surfaces problems while you still have time to address them without crisis management.”

Step 6: Performance Monitoring and Model Observability

Once live, the AI system needs continuous monitoring. Performance metrics should cover accuracy (are outputs correct?), latency (are responses fast enough?), and reliability (is the system available when needed?). These need to be tracked against defined baselines, not just assessed subjectively.

Model observability goes further: it makes the AI’s decision-making process auditable. In regulated sectors, being able to explain why the model produced a particular output is not optional. Even outside regulated industries, internal stakeholders will eventually ask why the system recommended a particular course of action, and you need to be able to answer.

For a structured approach to measuring these outcomes, the AI impact measurement guide covers both quantitative and qualitative indicators that matter at the business level.

Step 7: Feedback Loops and Iterative Scaling

AI integration is not a project with a defined end date. It is an ongoing capability that needs to evolve as the business changes and as new data becomes available. Feedback loops, where the model’s outputs are reviewed, corrected where necessary, and used to retrain the model, are what keep the system accurate over time.

Scaling decisions should be based on evidence from earlier phases. Which use cases delivered measurable value? Which parts of the infrastructure proved to be bottlenecks? Answering these questions with data, rather than assumptions, produces a scaling strategy that is much more likely to succeed.

Architectural Patterns: Connecting the Old with the New

The architectural choices made during integration define the long-term maintainability and performance of the system. Getting these right requires understanding both the capabilities of modern AI infrastructure and the constraints of the legacy systems they need to connect to. There is no elegant shortcut here; the bridge has to be built properly, or it will fail under load.

RESTful APIs as the Standard Bridge

For most organisations, RESTful APIs are the primary mechanism for connecting AI services to existing systems. They are widely supported, well-documented, and flexible enough to accommodate a broad range of data types and request patterns.

The practical challenge is that many legacy systems predate modern API standards. Systems running on AS/400, older versions of SAP, or custom-built databases from the early 2000s may require a middleware layer to translate between their native protocols and the REST interfaces that AI services expect.

This middleware layer is often underestimated in project scoping. It is not a minor configuration task; it requires ongoing maintenance and needs to be designed with the same rigour as any other part of the integration.

Event-Driven Architecture for Real-Time Applications

Where AI applications need to respond to events in real time, such as fraud detection, predictive maintenance alerts, or dynamic pricing, a request-response API model is often too slow. Event-driven architecture, where systems publish data changes to a message broker, and AI services subscribe to relevant streams, is more appropriate.

Tools such as Apache Kafka are commonly used for this purpose in enterprise environments. The benefit is that data moves to the AI layer as soon as it is generated, rather than waiting to be polled. The cost is increased architectural complexity and a steeper operational learning curve for teams not already familiar with event streaming.

Choosing between API-first and event-driven architecture should be driven by the latency requirements of the specific use case, not by a general preference for one approach over the other.

Vector Databases for AI-Native Data Storage

Traditional relational databases store data in rows and columns. AI models, particularly those based on large language models, work with vector embeddings: mathematical representations of data that capture semantic meaning rather than just raw values. These two formats are not directly compatible, which is where vector databases come in.

Vector databases such as Pinecone, Weaviate, and the open-source Chroma allow AI systems to retrieve semantically similar information efficiently. For applications like document search, customer query handling, or knowledge management, adding a vector database layer alongside an existing relational database is often the most practical route to AI capability without replacing the core data infrastructure.

For businesses exploring how advanced machine learning techniques apply to their specific data environment, understanding vector storage is increasingly relevant to practical implementation decisions.

| Factor | API-First | Custom On-Premise | Hybrid Cloud |

|---|---|---|---|

| Implementation Speed | Fast (weeks) | Slow (months) | Moderate (6-12 weeks) |

| Data Privacy | Depends on provider | Full control | Partial control |

| Ongoing Cost | Usage-based | High (infrastructure + staff) | Moderate (shared overhead) |

| Maintenance Burden | Low (provider-managed) | High (fully internal) | Shared |

| Best Suited For | Non-sensitive data, fast deployment | Regulated sectors, sensitive data | Most mid-market UK organisations |

Governance and Compliance: Navigating UK and EU Regulations

Regulatory compliance is not a box to tick at the end of an AI integration project. It shapes which integration patterns are viable, what data can be used for training, where processing can take place, and how outputs can be applied. UK and Irish businesses face a more complex regulatory environment than their US counterparts, and the frameworks are still evolving.

UK GDPR and Data Governance in AI Systems

UK GDPR applies to any AI system that processes personal data relating to individuals in the United Kingdom. For AI integration projects, this has several practical implications. Training data must be lawfully obtained and documented. Individuals have the right to know that their data is used in automated decision-making, and, in certain circumstances, to request human review of decisions made by AI systems.

Data governance frameworks need to be in place before integration begins. This means defining data ownership, establishing retention and deletion policies, and creating audit trails that can demonstrate compliance with the Information Commissioner’s Office if required.

The data protection for businesses guide covers the baseline requirements that apply to most UK organisations working with customer or employee data in an AI context.

The EU AI Act and Its Reach for UK Businesses

The EU AI Act came into full effect in stages through 2024 and 2025 and establishes risk-based regulation for AI systems. Critically, it has extra-territorial reach: any UK business that provides AI-enabled products or services to customers in the European Union must comply with its requirements, regardless of where the AI processing takes place.

The Act categorises AI systems by risk level. High-risk systems, which include those used in employment decisions, credit scoring, and critical infrastructure, face the most stringent requirements: mandatory conformity assessments, technical documentation, and human oversight mechanisms.

For Northern Ireland businesses in particular, which maintain unique trade relationships with both the UK and the EU, understanding where their AI systems fall on the risk classification spectrum is an immediate operational concern, not a future-proofing exercise.

The UK AI Regulation White Paper: A Pro-Innovation Approach

The UK government’s approach to AI regulation, as set out in its AI Regulation White Paper, is deliberately lighter-touch than the EU’s framework. Rather than creating a single AI regulator, the UK distributes oversight responsibility across existing sector regulators, such as the FCA for financial services and the CQC for healthcare.

This creates flexibility, but also ambiguity. Organisations in regulated sectors need to engage with their specific regulator’s AI guidance rather than relying on a single set of cross-sector rules. For businesses operating across both the UK and EU markets, this means navigating two distinct regulatory logics simultaneously.

The practical upside is that UK organisations have somewhat more latitude to experiment and deploy AI in lower-risk contexts without the conformity assessment burden that applies under the EU framework. That latitude comes with the expectation of responsible self-governance, which makes internal AI ethics and governance frameworks more important, not less.

For guidance on building those internal structures, ProfileTree’s resource on AI implementation cost-benefit analysis includes a section on governance investment as a proportion of total integration spend.

Beyond Deployment: Managing Day 2 AI Operations

The period immediately after an AI system goes live tends to receive disproportionate attention, while the months and years that follow receive far too little. Day 2 operations, the ongoing work of maintaining, monitoring, and improving an integrated AI system, are where the long-term value of the investment is either realised or lost. This is also the area where the gap between global consultancy guidance and practical business reality is widest.

Model Drift and Why It Matters

Machine learning models are trained on data from a specific point in time. As the real world changes, the patterns in that training data become less representative of current conditions, and the model’s outputs become less accurate. This degradation is called model drift, and it affects every deployed AI system to some degree.

The speed at which drift occurs varies significantly by use case. A model trained on customer purchasing behaviour from 2022 may have drifted substantially by 2024 if spending patterns shifted. A model trained on manufacturing sensor data may remain accurate for longer if the physical process it monitors has not changed.

Detecting drift requires ongoing monitoring of model outputs against ground truth data. This is not an automated process that can be set up once and forgotten; it requires someone in the organisation to own it. Assigning that responsibility clearly, as part of the integration project rather than after, is one of the most practical things an organisation can do to protect long-term performance.

Retraining Schedules and Infrastructure Requirements

Once drift is detected, or anticipated based on a regular review schedule, the model needs to be retrained on more recent data. This requires maintaining the data pipelines built during integration, keeping training infrastructure available, and having the internal capability to run and validate the retraining process.

For organisations that rely entirely on third-party AI services via API, retraining is typically managed by the provider on a schedule that may not align with the business’s needs. This is one of the arguments for hybrid or on-premise deployment in use cases where accuracy over time is critical.

The importance of data in AI implementation covers the data infrastructure requirements that underpin sustainable retraining programmes, including how to structure data retention policies to support future model updates.

Team Upskilling for Sustained AI Capability

An AI system is only as good as the team responsible for it. Technical staff need to understand how to monitor model performance, interpret drift signals, and manage retraining cycles. Non-technical staff need to understand how to use AI outputs appropriately, recognise when outputs seem anomalous, and escalate concerns through the right channels.

This is not a one-off training exercise delivered at launch. As the system evolves and new use cases are added, training needs to evolve alongside it. Organisations that build continuous learning into their AI governance structure are consistently better at extracting long-term value from their integrations.

ProfileTree delivers structured AI training for business teams through the staff AI tools training programme, which is designed specifically for organisations managing the transition from initial deployment to sustained operational capability.

Northern Ireland and the Republic of Ireland have both invested significantly in technology education infrastructure in recent years. For businesses in this region looking to understand the broader digital context in which AI investment sits, the cities across Northern Ireland profiled on Connolly Cove reflect the scale of tech sector growth across Belfast, Derry, and beyond.

Conclusion

Integrating AI with existing IT systems is a technical and organisational challenge that rewards careful planning far more than it rewards speed. The organisations that get the most from these integrations are those that audit honestly, build infrastructure with long-term maintenance in mind, take compliance seriously from day one, and invest in the people who will run the system after launch.

If you are ready to start mapping out an AI integration strategy for your business, speak to the ProfileTree team to discuss your current infrastructure and the most practical path forward.

FAQs

What is the first step in AI implementation for an enterprise?

The first step is an honest audit of your existing infrastructure and data quality. You need to understand what data you have, where it lives, and what technical debt exists before any model selection or vendor engagement begins.

How much does it cost to integrate AI with existing software?

Costs vary significantly depending on integration complexity, whether you choose API-first, on-premise, or hybrid deployment, and the level of data pipeline work required. Key cost drivers include infrastructure upgrades, API usage fees, internal personnel time, and ongoing training and maintenance.

What are the main security risks of AI integration?

The primary risks include data leakage through inadequately secured API connections, prompt injection attacks on language model interfaces, and third-party API vulnerabilities where the AI provider is the weak link. IAM controls and audit logging are essential mitigations from day one.

Can AI be integrated with on-premise legacy systems?

Yes, via middleware layers and private cloud gateways. Legacy systems running on older ERP platforms such as SAP, Oracle, or AS/400 can connect to modern AI services through purpose-built middleware that translates between native formats and contemporary API standards.

How does the EU AI Act affect UK businesses?

The EU AI Act applies to any business providing AI-enabled products or services to EU customers, regardless of where the business is based. UK organisations serving EU markets must classify their AI systems by risk level and meet the corresponding compliance requirements, including conformity assessments for high-risk applications.