Ethical AI and Legal Requirements: A Compliance Guide for UK Businesses

Ethical AI has moved from boardroom buzzword to legal obligation. For years, frameworks built around principles like transparency and fairness sat in corporate social responsibility documents and academic papers, rarely influencing day-to-day operations. That era has ended. As of 2025, the convergence of the EU AI Act, UK sector-led enforcement, and updated data protection guidance means that ethical AI failures carry real financial penalties, reputational damage, and legal liability. For any business using AI in its services, content, or client-facing operations, understanding ethical AI is no longer a specialism; it is a baseline requirement.

ProfileTree, a Belfast-based digital agency and AI training provider, has worked with businesses across Northern Ireland, Ireland, and the UK to navigate precisely this challenge. This guide provides a practical roadmap covering the regulatory landscape, the five legal and ethical pillars every organisation must address, and a step-by-step compliance framework. It is written for business owners, marketing managers, compliance officers, and digital teams who need to understand both the obligations and the operational changes required to meet them.

From Principles to Policing: Why Ethical AI Is Now a Legal Matter

The shift from voluntary ethical AI frameworks to enforceable law has been swift. Understanding what changed, and why, is the starting point for any credible compliance strategy.

The End of Soft Law

Until relatively recently, ethical AI governance operated under what regulators call “soft law”: high-level principles issued by bodies such as the OECD, the EU’s High-Level Expert Group on AI, and the UK’s Centre for Data Ethics and Innovation. These frameworks provided aspirational standards but carried no enforcement mechanism. Organisations could adopt them, adapt them, or ignore them with no direct legal consequence.

That changed as AI moved into critical decision-making processes: hiring algorithms screening CVs, credit-scoring models determining loan eligibility, healthcare tools influencing diagnoses, and content recommendation systems shaping public discourse. The scale and consequence of these applications made a purely voluntary approach untenable. Regulators across the EU and UK have responded with binding requirements that apply regardless of company size or sector.

What Has Changed in 2025

Three developments have reshaped the ethical AI landscape in the past 12 months. First, the EU AI Act has moved from political agreement to active enforcement, with prohibited AI practices banned outright and the compliance clock running for high-risk system operators. Second, the UK Information Commissioner’s Office has published updated guidance on automated decision-making under the Data Protection Act 2018, making it clear that the right to an explanation of automated decisions is enforceable in practice, not a theoretical protection. Third, the UK Equality Act 2010 has been successfully cited in employment tribunal cases involving algorithmic hiring tools, confirming that the law applies equally to human and automated decisions. Businesses exploring AI marketing and automation should treat these developments as the baseline for their governance planning, not an afterthought.

Global Regulatory Landscape: UK, EU, and Beyond

Any organisation serious about ethical AI compliance needs to understand which legal frameworks apply to its operations. The answer often involves more than one jurisdiction, particularly for digital services and agencies with clients or users in the EU. A well-structured digital strategy will account for regulatory exposure from the outset rather than retrofitting compliance after deployment.

| Jurisdiction | Framework | Key Focus | Risk to UK Businesses |
|---|---|---|---|
| EU | EU AI Act | Risk-based classification, prohibited uses, mandatory audits | High: Extraterritorial scope applies to UK firms serving EU users |
| UK | ICO / CMA / FCA sector guidance | Pro-innovation, sector-led enforcement via existing regulators | Medium: Multi-regulator compliance required |
| US | NIST AI RMF | Voluntary standards; often a contractual requirement from tech partners | Medium: Applies when integrating US AI platforms |
| Global | OECD AI Principles | Trustworthy AI, human rights, democratic values | Low direct risk; increasingly cited in UK tribunal decisions |

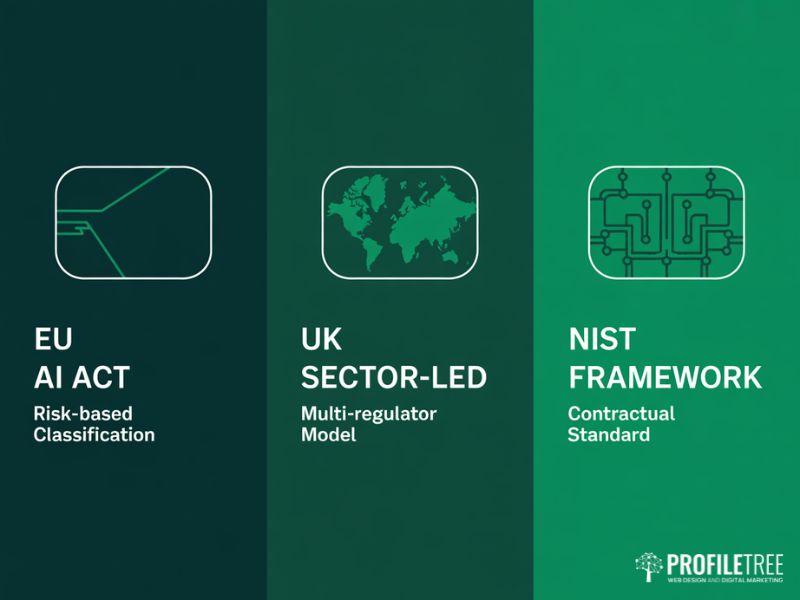

The EU AI Act: The World’s First Horizontal AI Law

The EU AI Act classifies AI systems by risk level. At the top are prohibited systems, including social scoring and AI designed to exploit psychological vulnerabilities. Below that, high-risk systems covering employment, education, credit, healthcare, and law enforcement face mandatory conformity assessments, technical documentation requirements, and for certain deployers a Fundamental Rights Impact Assessment before deployment.

A critical point for UK businesses: the EU AI Act is extraterritorial. Like GDPR before it, it reaches organisations based outside the EU, covering providers that place AI systems on the EU market and providers or deployers elsewhere whose system output is used within the EU. A Belfast agency building an AI recruitment tool for a Dublin client falls within the Act’s scope. Even lower-risk deployments such as AI chatbots for customer service carry transparency obligations under the Act’s limited-risk classification, requiring clear disclosure that users are interacting with an automated system.

The UK’s Sector-Led Approach: Flexibility and Vigilance

The UK has deliberately avoided a single “AI Act” equivalent, instead tasking existing regulators to extend their remit into AI governance. This creates a patchwork of obligations. The ICO focuses on the data ethics of AI training and automated decision-making rights. The CMA examines market dominance concerns around foundation models. The FCA monitors algorithmic bias in financial services. The MHRA oversees AI used in medical devices and clinical decision support.

For a digital agency advising clients across multiple sectors, ethical AI compliance is not a single audit but a multi-regulator conversation. The lack of a single statute provides flexibility but demands a higher level of internal governance.

The US NIST Framework: A Contractual Baseline

While the US lacks a federal AI law, the NIST AI Risk Management Framework has become the de facto ethical AI standard for organisations working with US technology platforms. Many enterprise AI vendors now include NIST alignment as a contractual requirement, making it relevant for UK businesses integrating third-party AI tools. Ciaran Connolly, founder of ProfileTree, notes: “We are seeing more clients ask about AI governance frameworks not because they have been told to by a regulator, but because their own enterprise clients are requiring it as part of procurement. Ethical AI is becoming a commercial prerequisite, not just a legal one.”

The 5 Pillars of Legal-Ethical Convergence

Moving from general principles to legal compliance requires organisations to address five specific areas where ethical AI obligations and legal requirements intersect. Each pillar has practical implications for digital agencies, marketing teams, and AI training providers.

| Pillar | Legal Basis | Practical Requirement |
|---|---|---|
| Algorithmic Transparency | UK GDPR Article 22; EU AI Act Article 13 | Document model logic; provide human-readable explanations of automated decisions |
| Data Privacy | UK Data Protection Act 2018; GDPR | Conduct DPIAs; apply data minimisation; record lawful basis for AI training data |
| Bias & Non-Discrimination | UK Equality Act 2010 | Adversarial testing; diverse training data; regular fairness audits |
| Accountability & Oversight | EU AI Act High-Risk provisions | Designate a responsible officer; implement human-in-the-loop for high-stakes decisions |
| Safety & Cyber Resilience | UK PSTIA; NIS2 | Security by design; incident response plans; third-party vendor audits |

Pillar 1: Algorithmic Transparency and Explainability

Transparency is the foundation of ethical AI. Under the UK GDPR and the Data Protection Act 2018, individuals subject to solely automated decisions that have legal or similarly significant effects have the right to request an explanation. Under the EU AI Act, high-risk system operators must provide technical documentation that allows a competent authority to audit the logic. This principle extends to content: a business using AI to generate or personalise marketing material should be able to explain the basis on which that content was produced and targeted. Credible content marketing in the age of AI means maintaining editorial standards that hold up to scrutiny. In practice, this means moving away from opaque, black-box models for any decision-making application and towards architectures where feature weighting can be inspected and explained.
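
As a rough illustration of what inspectable feature weighting can look like, the sketch below scores a candidate with a simple linear model and reports how much each feature contributed. The feature names, weights, and the `score_with_explanation` helper are all hypothetical, chosen for illustration only; a production system would need its own model and a legally reviewed explanation format.

```python
from dataclasses import dataclass

# Hypothetical weights for an illustrative scoring model.
# In a real system these would come from training, not hand-coding.
WEIGHTS = {
    "years_experience": 2.0,
    "relevant_qualification": 5.0,
    "skills_test_score": 0.5,
}
BASELINE = -10.0  # hypothetical intercept


@dataclass
class Explanation:
    score: float
    contributions: dict  # feature name -> signed contribution to the score

    def to_text(self) -> str:
        """Render contributions as plain text, largest effect first."""
        ranked = sorted(
            self.contributions.items(), key=lambda kv: abs(kv[1]), reverse=True
        )
        lines = [f"Overall score: {self.score:.1f}"]
        lines += [f"- {name}: {value:+.1f}" for name, value in ranked]
        return "\n".join(lines)


def score_with_explanation(features: dict) -> Explanation:
    """Score one record and keep the per-feature breakdown for audit."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = BASELINE + sum(contributions.values())
    return Explanation(score=score, contributions=contributions)


example = score_with_explanation(
    {"years_experience": 4, "relevant_qualification": 1, "skills_test_score": 30}
)
print(example.to_text())
```

Because every contribution is stored alongside the score, the same record can support both an explanation to the individual and an audit trail for a regulator.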

Pillar 2: Data Privacy and Ethical AI Training

The intersection of data privacy law and ethical AI is one of the most active areas of regulatory focus. Training a machine learning model on personal data without a lawful basis is a data protection violation regardless of how the model is ultimately used. The ICO’s guidance on AI and data protection sets out specific requirements for data minimisation, purpose limitation, and the documentation of training datasets.

For organisations using third-party AI tools, due diligence on data provenance is equally important. If a vendor’s model was trained on data sourced without proper consent, your organisation may share liability for its deployment. Ethical AI procurement means asking vendors the right questions about their training data, not just their output quality.

Pillar 3: Bias, Fairness, and the UK Equality Act

Algorithmic bias is not merely an ethical concern; it is a legal liability under the UK Equality Act 2010. The Act is technologically neutral: if an AI hiring tool systematically disadvantages candidates based on age, gender, race, or any other protected characteristic, the organisation deploying it bears the same legal exposure as if a human manager had made that decision. The same applies to AI-powered advertising: social media marketing campaigns that use AI to exclude audiences based on proxies for protected characteristics carry genuine equality law risk.

The compliance response requires adversarial testing: deliberately probing the model to detect whether protected characteristics, or proxies for them such as postcode or name origin, are influencing outputs. Ethical AI bias monitoring must be ongoing because model behaviour can shift as the underlying data changes.
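
One simple form such a test can take is comparing outcome rates across groups defined by a suspected proxy. The sketch below does this for postcode area. The sample data is invented, the function names are hypothetical, and the 0.8 review threshold is an assumed internal trigger for further investigation, not a test defined by the Equality Act.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key="selected"):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record[outcome_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody selected in any group, so no disparity to measure
    return min(rates.values()) / highest


# Assumed internal review trigger; not a legal standard.
REVIEW_THRESHOLD = 0.8

# Invented sample outcomes, grouped by a proxy attribute (postcode area).
sample = (
    [{"postcode_area": "BT1", "selected": True}] * 6
    + [{"postcode_area": "BT1", "selected": False}] * 4
    + [{"postcode_area": "BT9", "selected": True}] * 3
    + [{"postcode_area": "BT9", "selected": False}] * 7
)

rates = selection_rates(sample, "postcode_area")
ratio = disparity_ratio(rates)
needs_review = ratio < REVIEW_THRESHOLD
print(rates, ratio, needs_review)
```

Running the same check on a schedule, and storing each result, gives a record of how outcomes move as the underlying data changes.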

Pillar 4: Accountability and Human Oversight

One of the clearest requirements in both the EU AI Act and ICO guidance is the principle of human oversight for high-stakes decisions. Purely automated decisions affecting individuals in employment, credit, housing, or healthcare require a human review mechanism that is genuinely accessible, not just nominally available.

Accountable ethical AI requires a named responsible person within the organisation: someone who owns the governance of AI systems, ensures documentation is maintained, and can respond to regulatory enquiries. For smaller businesses, this does not require a dedicated AI ethics officer; it requires clarity about who is responsible and that this person has the authority and training to act. Investing in digital training for your team is one of the most practical steps a business can take to build genuine AI accountability.
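
A human review mechanism can be built into the decision flow itself. The sketch below routes any decision in the high-stakes areas named above to a human reviewer. The `Decision` fields, the `route_decision` helper, and the extra low-confidence rule are assumptions added for illustration, not requirements taken from the EU AI Act or ICO guidance.

```python
from dataclasses import dataclass
from enum import Enum

# Areas named in the text as needing an accessible human review mechanism.
HIGH_STAKES_DOMAINS = {"employment", "credit", "housing", "healthcare"}


class Route(Enum):
    AUTOMATED = "automated"
    HUMAN_REVIEW = "human_review"


@dataclass
class Decision:
    domain: str
    model_outcome: str
    confidence: float  # hypothetical model confidence in the range 0 to 1


def route_decision(decision: Decision, min_confidence: float = 0.9) -> Route:
    """Send high-stakes or low-confidence decisions to a human reviewer."""
    if decision.domain in HIGH_STAKES_DOMAINS:
        return Route.HUMAN_REVIEW
    # Assumed extra safeguard: uncertain outputs also go to a person.
    if decision.confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTOMATED


print(route_decision(Decision("employment", "reject", 0.99)))
print(route_decision(Decision("marketing", "send_offer", 0.95)))
```

Keeping the routing rule in one place makes it easier for the named responsible person to show a regulator exactly when a human is involved.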

Pillar 5: Safety, Security, and Cyber Resilience

Ethical AI systems must be secure systems. AI that can be manipulated through adversarial inputs, that leaks personal data through model inversion attacks, or that fails unpredictably in production is not ethically deployed regardless of the intentions behind it. The UK’s Product Security and Telecommunications Infrastructure Act and, for organisations operating in the EU, the NIS2 Directive create binding obligations around the security of digital products and services, which can include AI-powered tools. Robust website security and managed hosting form part of the infrastructure layer that underpins secure, ethical AI deployment.

Operationalising Compliance: A 6-Step Roadmap

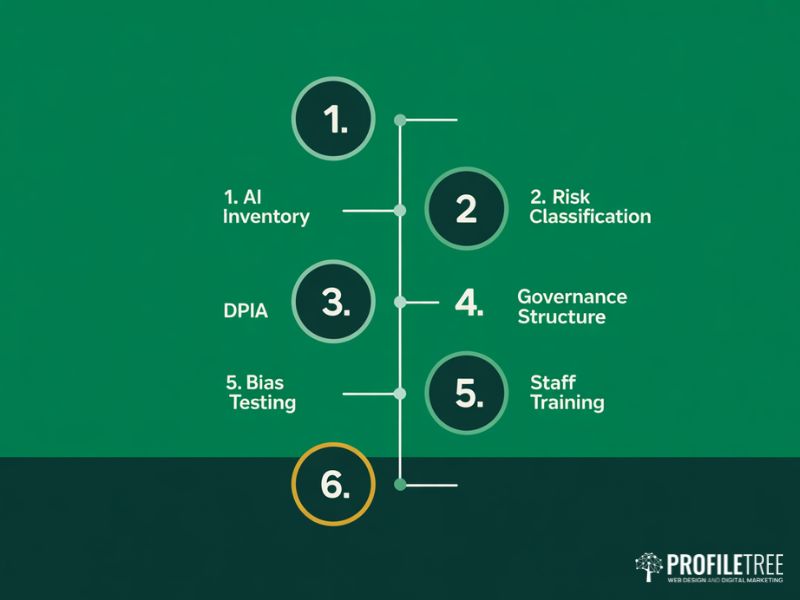

Understanding ethical AI obligations is the first step; building the internal processes to meet them is where most organisations face the steepest challenge. This roadmap applies to businesses of all sizes, from SMEs beginning their AI transformation to established agencies expanding their AI service offering.

Step 1: Conduct an AI Inventory. You cannot govern what you cannot see. Audit every AI system in use across the organisation, from CRM automation and chatbots to AI-assisted content tools and analytics platforms. For each system, document the purpose, the data it processes, the decisions it influences, and the vendor’s compliance status.

Step 2: Classify Your AI Systems by Risk. Using the EU AI Act risk categories as a working framework, classify each system as prohibited, high-risk, limited-risk, or minimal-risk. This classification determines the level of governance investment required.

Step 3: Conduct Data Protection Impact Assessments. Under UK GDPR, a DPIA is required wherever processing is likely to result in a high risk to individuals, and ICO guidance indicates that most AI systems that process personal data or make automated decisions will meet that threshold. For high-risk systems, supplement this with a Fundamental Rights Impact Assessment covering potential adverse effects beyond data protection.

Step 4: Establish an AI Governance Structure. Ethical AI governance requires named ownership. This might be an AI Ethics Board, a designated AI Responsible Officer, or governance procedures integrated into existing compliance frameworks. The structure matters less than the clarity of accountability and the regularity of review.

Step 5: Implement Bias Testing and Monitoring. Build a testing and monitoring schedule into the operational plan for any AI system influencing decisions about individuals. Pre-deployment testing checks for bias across protected characteristics; post-deployment monitoring tracks outcomes over time. Document all results as evidence of due diligence.

Step 6: Train Your People. Technology governance is only as strong as the people operating within it. Staff who use AI tools, commission AI projects, or advise clients on AI strategy need a working understanding of ethical AI principles and the legal obligations relevant to their role. ProfileTree’s AI and digital training programmes for SMEs are designed to build practical AI literacy alongside the governance awareness that responsible deployment requires.
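
Steps 1 and 2 of the roadmap above can be prototyped with a very small data structure before any tooling is bought. The sketch below records each system's inventory details and assigns a working risk tier. The field names, the example systems, and the classification rules are simplified assumptions based on the areas this guide mentions; they are a triage aid, not a substitute for the Act's own definitions or for legal advice.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Simplified triggers drawn from the areas named in this guide (assumption).
PROHIBITED_USES = {"social_scoring"}
HIGH_RISK_AREAS = {"employment", "education", "credit", "healthcare", "law_enforcement"}


@dataclass
class AISystem:
    name: str
    purpose: str
    use_area: str
    processes_personal_data: bool
    interacts_with_public: bool
    vendor_compliance_status: str


def classify(system: AISystem) -> RiskTier:
    """Assign a working risk tier for governance triage."""
    if system.use_area in PROHIBITED_USES:
        return RiskTier.PROHIBITED
    if system.use_area in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if system.interacts_with_public:
        return RiskTier.LIMITED  # e.g. chatbots with disclosure duties
    return RiskTier.MINIMAL


# Invented example inventory.
inventory = [
    AISystem("CV screener", "Shortlist applicants", "employment", True, False, "unverified"),
    AISystem("Support chatbot", "Answer customer queries", "customer_service", True, True, "documented"),
    AISystem("Spam filter", "Filter inbound email", "internal_it", False, False, "documented"),
]

register = {system.name: classify(system) for system in inventory}
for name, tier in register.items():
    print(f"{name}: {tier.value}")
```

Even a register this small gives the governance owner a single list to review, and the tier attached to each system indicates where impact assessments and bias testing should start.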

Liability, Intellectual Property, and the Ethics of Training Data

Two areas of ethical AI law that receive less attention than they deserve are who is liable when AI causes harm, and the legal status of the data used to train AI systems.

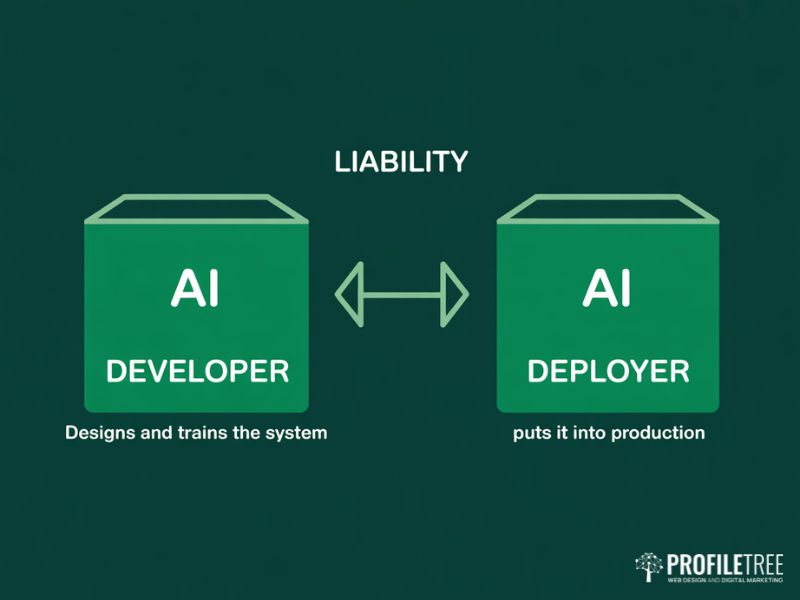

Who Is Liable When Ethical AI Fails?

The EU AI Act distinguishes between providers, who develop an AI system or place it on the market, and deployers, who use it in their own operations. Both can bear liability, but the balance depends on how closely the deployment matches the provider’s intended use case. A business that deploys a general-purpose AI tool for a specialised high-risk application without appropriate customisation carries greater liability than one that follows the vendor’s documented guidelines.

For UK businesses outside the EU AI Act’s direct scope, product liability law, contract law, and the common law duty of care provide the framework for AI-related claims. Ignorance of an AI system’s behaviour is not a defence: deployers are expected to understand what they are deploying and to conduct reasonable due diligence.

The Ethics of Training Data and Intellectual Property

Cases such as Getty Images v Stability AI have brought the intellectual property dimensions of ethical AI into mainstream legal discourse. The core question is whether AI systems trained on copyrighted content without explicit licences have violated copyright law, and whether outputs from those systems carry derivative liability for the organisations using them.

The UK Intellectual Property Office has been consulting on this issue and the legal position remains unsettled, but the ethical AI position is clearer: using data you do not have the right to use creates reputational risk and positions your organisation on the wrong side of an argument regulators are actively moving to resolve. This applies across all content types. Businesses using AI tools within their video marketing and production workflows should ensure they have documented the provenance and licensing of any assets used in model training or AI-assisted production.

Legal and Ethical Issues in Digital Marketing and AI

The legal and ethical issues in digital marketing have multiplied with the adoption of AI tools. Ethical AI considerations now sit at the centre of how agencies, content teams, and marketing consultants approach their work, whether they are commissioning website development projects with AI-powered personalisation or deploying automated social campaigns.

Automated content generation, AI-driven audience targeting, programmatic advertising, and personalisation at scale all raise ethical AI questions and legal considerations under data protection, consumer protection, and advertising standards law. The Advertising Standards Authority has been increasingly active in examining AI-generated advertising claims, while the CMA has signalled concern about AI-driven price discrimination and manipulative design patterns.

Three obligations stand out. Personalisation systems that tailor content based on inferred personal characteristics require a lawful basis under UK GDPR, and where that basis is consent, it must be informed and specific at every touchpoint, from web design decisions through to email automation. Disclosing the use of AI in content creation is becoming a regulatory expectation, particularly in regulated sectors. AI-powered ad targeting that uses proxies for protected characteristics carries equality law risk even when the targeting itself appears neutral.

Future-Proofing Your Ethical AI Strategy

Ethical AI compliance is not a project with an end date. The legal landscape will continue to evolve, enforcement will increase, and AI capabilities will expand into new areas of risk and opportunity. The organisations that navigate this well are those that treat ethical AI as a core operational discipline rather than a compliance exercise.

For businesses in Northern Ireland, Ireland, and the UK, the practical priority is to start with the inventory and classification steps described in this guide, build governance structures that can grow with regulatory demands, and invest in the AI literacy that allows your team to make informed decisions. ProfileTree works with businesses at every stage of this journey, from initial AI strategy and training through to AI-powered digital services built with ethical AI principles from the ground up. Whether you need guidance on governance frameworks or support with AI-enhanced marketing strategies, the starting point is always the same: understanding what you have, what it does, and what obligations that creates.

FAQs

Does the EU AI Act apply to UK businesses?

Yes, in many cases. The Act is extraterritorial: if your AI system is placed on the EU market, or its output is used in the EU, it applies regardless of where your business is based.

How can ethics guide responsible AI use for environmental sustainability?

By requiring organisations to account for the full environmental cost of AI, including energy consumption, model efficiency, and the carbon commitments of cloud providers used for training and inference.

What is an essential principle when integrating AI into business processes?

Accountability. Every AI system needs a named owner who understands what it does, what data it processes, and what legal obligations its deployment creates.

What common AI words should businesses avoid in their content?

Terms such as “leverage”, “delve”, “ecosystem”, “cutting-edge”, “robust”, and “seamless” are closely associated with low-quality AI output. For a full reference, see ProfileTree’s guide on common AI words and phrases to avoid.