AI Compliance: What UK and Irish Businesses Must Know

AI compliance has moved from boardroom talking point to legal obligation in the space of two years. For businesses in the UK, Ireland, and Northern Ireland, the challenge is not just understanding what the rules say — it’s knowing which rules apply to you, when they kick in, and what regulators will actually ask to see.

This guide covers the core regulatory frameworks, the specific pressures facing UK and Irish businesses navigating dual-regime complexity, and the practical steps you need to take to build an audit-ready compliance position.

What Is AI Compliance?

AI compliance is the process of ensuring that AI systems — whether built in-house or bought from a vendor — operate within the legal, ethical, and operational boundaries set by regulators, customers, and your own governance policies.

It covers how AI systems are designed, trained, tested, deployed, and monitored. It includes data protection obligations under the GDPR, sector-specific rules from bodies such as the FCA and ICO, and the incoming requirements of the EU AI Act.

AI compliance is not a one-time audit. Regulations change, AI systems change as they process new data, and the outputs of a system that was compliant in January may create liability by March. Compliance is an ongoing process, not a sign-off.

The distinction between AI compliance and AI ethics is worth clarifying here. Ethics covers what your organisation believes is right — fairness, transparency, accountability. Compliance covers what is legally required. The two overlap significantly, but compliance carries penalties. The EU AI Act imposes fines of up to €35 million or 7% of global annual turnover for the most serious violations.

Why AI Compliance Is Now a Business Priority

For most UK businesses, AI regulation felt like a distant concern until very recently. The rules existed at the edges — GDPR covered automated decisions, sector regulators issued guidance — but there was no single law that created clear obligations or penalties. That position has shifted, and the shift has been fast.

Legal Exposure Has Increased Sharply

Until recently, most AI-related legal risk sat under existing data protection law. GDPR’s Article 22 placed restrictions on automated decision-making with significant effects on individuals, and the ICO issued guidance on AI and data protection as early as 2020. For most UK businesses, that was the extent of formal AI regulation.

That position has changed. The EU AI Act entered into force in August 2024. Prohibitions on the most harmful AI practices took effect in February 2025. Requirements for high-risk AI systems begin applying from August 2026. If your business operates in, or sells into, EU markets, you are already inside this regulatory perimeter.

Reputational and Commercial Risk

The legal risk is the floor, not the ceiling. AI-related failures — biased hiring tools, opaque credit decisions, inaccurate automated outputs presented as fact — generate media coverage, regulatory investigations, and customer complaints regardless of whether a specific law has technically been breached.

Businesses that build a credible AI compliance position now will be better placed to win contracts, pass due diligence checks, and retain customer trust as AI regulation tightens across both the UK and EU.

The Regulatory Landscape: EU, UK, and the Northern Ireland Position

This is the section most compliance guides skip or oversimplify. For UK and Irish businesses, the regulatory picture is genuinely complex, and the complexity is not going away.

The EU AI Act: A Risk-Based Framework

The EU AI Act classifies AI systems into four risk tiers:

Prohibited AI covers practices that are banned outright: social scoring, real-time remote biometric identification in publicly accessible spaces for law enforcement (subject to narrow exceptions), AI that exploits the vulnerabilities of specific groups, and systems that manipulate behaviour subliminally. These prohibitions have applied since February 2025.

High-risk AI includes systems used in critical infrastructure, education, employment (including CV screening), essential public services, law enforcement, migration control, and the administration of justice. High-risk systems must meet requirements covering risk management, data governance, technical documentation, transparency, human oversight, and accuracy. These requirements apply from August 2026.

Limited-risk AI — including chatbots and AI that generates synthetic content — must comply with transparency requirements. Users must be told they are interacting with AI.

Minimal-risk AI is not subject to any mandatory requirements under the Act, though the European Commission encourages voluntary codes of practice.

If your business provides AI systems or is classified as a “deployer” of high-risk AI in the EU market, the Act applies to you regardless of where you are based.
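The four tiers described above can be captured as a small lookup for first-pass triage. This is a minimal sketch, not a legal classification tool: `EXAMPLE_USE_CASES` and `provisional_tier` are hypothetical names, and the mapping contains only the examples named in this section. Anything not listed returns `None`, meaning it needs a proper review against the text of the Act.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Headline consequence of each tier, paraphrased from the descriptions above.
TIER_SUMMARY = {
    RiskTier.PROHIBITED: "Banned outright (prohibitions applied from February 2025)",
    RiskTier.HIGH: "Risk management, data governance, documentation, "
                   "transparency, human oversight, accuracy (from August 2026)",
    RiskTier.LIMITED: "Transparency: users must be told they are interacting with AI",
    RiskTier.MINIMAL: "No mandatory requirements; voluntary codes encouraged",
}

# Hypothetical shorthand labels (assumption): only examples named in this guide.
EXAMPLE_USE_CASES = {
    "social_scoring": RiskTier.PROHIBITED,
    "cv_screening": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
}


def provisional_tier(use_case: str) -> Optional[RiskTier]:
    """Return a provisional tier, or None if the use case needs manual review."""
    return EXAMPLE_USE_CASES.get(use_case)


tier = provisional_tier("cv_screening")
print(tier.value, "->", TIER_SUMMARY[tier])
```

Returning `None` for unknown use cases, rather than defaulting to minimal risk, keeps unclassified systems visible until someone has looked at them.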

The UK Approach: Sector-Led and Principle-Based

The UK has deliberately chosen not to replicate the EU AI Act. The UK government’s position — set out in its AI Regulation White Paper (2023) and subsequent consultations — is to rely on existing sector regulators rather than creating a single AI law.

That means AI oversight in the UK sits with:

- The ICO for data protection and automated decision-making

- The FCA for AI in financial services

- The MHRA for AI as medical devices

- The CMA for AI and competition concerns

- Ofcom for AI in broadcasting and online platforms

The AI Safety Institute (now the AI Security Institute) focuses on frontier model risks rather than business-level compliance. The AI Opportunities Action Plan (January 2025) signalled the government’s intent to keep regulation light-touch for now, with the Pro-Innovation Regulation of AI Bill under ongoing consultation.

For most UK SMEs, this means GDPR and sector-specific rules remain the primary compliance obligations. The practical implication is that there is no single checklist you can follow — you need to understand which regulator governs your AI use case.

Northern Ireland and the Cross-Border Position

Northern Ireland businesses face a specific challenge with no clear resolution. The Windsor Framework means Northern Ireland has a distinct relationship with EU single-market rules in certain areas, but AI regulation is not directly addressed in it.

In practice, businesses operating across the island of Ireland — selling services to ROI customers, operating dual-jurisdiction supply chains, or using AI systems that process data from both jurisdictions — need to plan for both regimes. A recruitment tool that screens CVs for an ROI subsidiary of a Belfast firm may fall under the EU AI Act’s high-risk provisions, even if the parent company is UK-registered.

The Central Bank of Ireland has also published guidance on AI in financial services that diverges from the FCA’s position in several areas, creating additional complexity for firms with operations on both sides of the border.

This cross-border dimension is where most existing guidance falls short. US-based publishers — IBM, TechTarget, Microsoft — write for a global audience and treat the UK and EU as broadly equivalent. They are not, and for Northern Ireland businesses in particular, the differences carry real operational consequences.

Key Pillars of an AI Compliance Framework

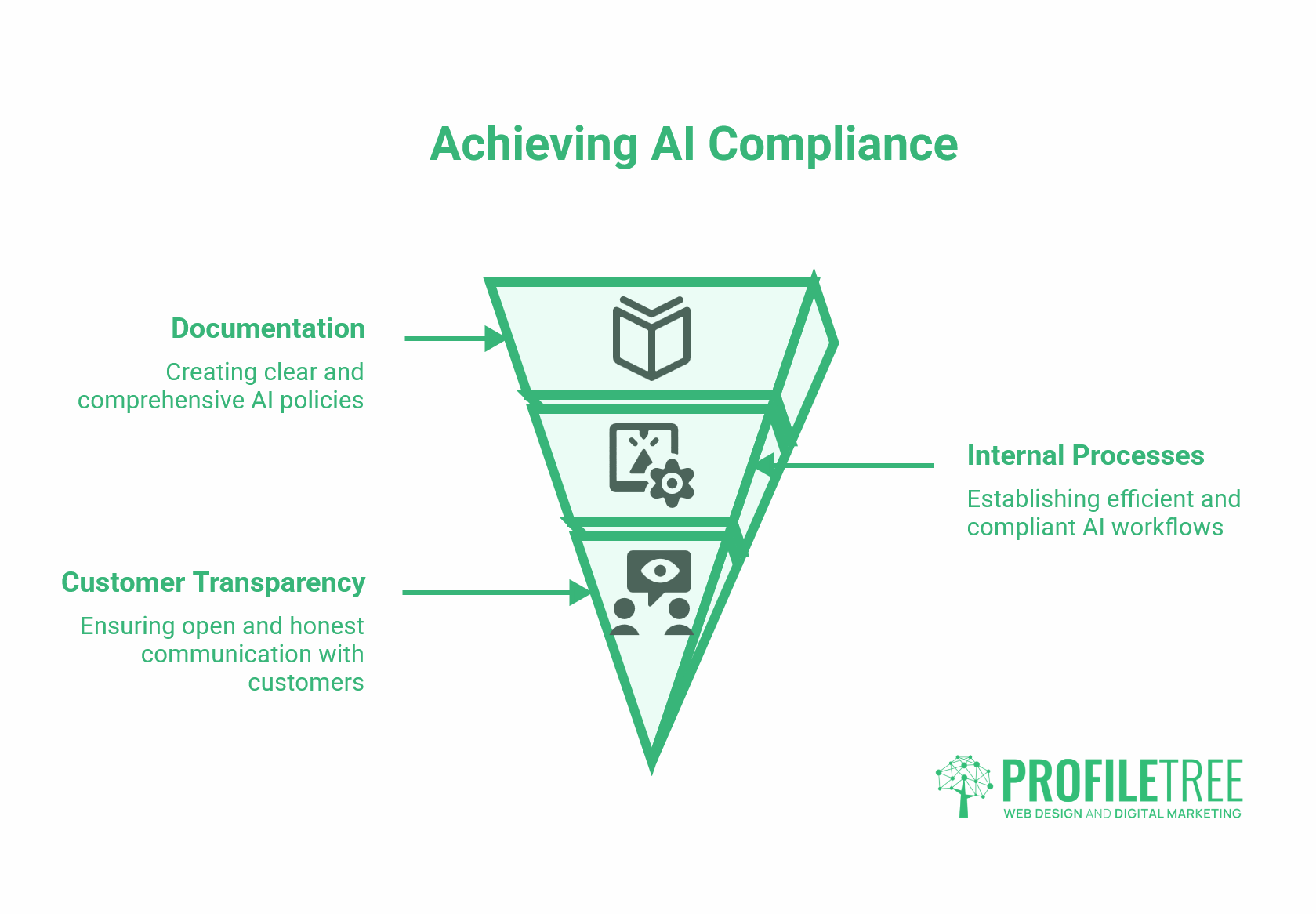

Understanding the regulations is the starting point. Building a compliance framework means translating those requirements into internal processes and documentation.

Data Governance and GDPR Alignment

You cannot have compliant AI without compliant data. GDPR imposes obligations on how personal data is collected, stored, processed, and used — and AI systems that train on, or make decisions about, personal data are firmly within its scope.

The ICO’s guidance on AI and data protection (updated 2023) sets out specific expectations: data minimisation, purpose limitation, lawful basis for processing, and technical measures for accuracy and fairness. For automated decision-making with legal or similarly significant effects, Article 22 requires that individuals have the right to human review, to contest the decision, and to receive an explanation of how it was reached.

Practically, this means documenting the data flows into your AI system, establishing a lawful basis for each processing activity, and building the logging infrastructure needed to reconstruct how a decision was made if it is challenged. Our guide to protecting user data and secure storage techniques covers the technical foundations.

Algorithmic Transparency and Explainability

Explainability is one of the more technically demanding compliance requirements, particularly for machine learning models whose relationships between inputs and outputs are not straightforward.

Regulators do not require you to publish your model architecture. They do require that you can explain, in plain language, how a specific decision was reached for a specific individual. That means logging input data, model version, output, and any human override — and retaining those logs for a period sufficient to cover potential complaints or investigations.
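As an illustration of the logging described above, the sketch below records input data, model version, output, and any human override as one JSON line per decision. The `DecisionRecord` class, its field names, and the example values are assumptions made for this example; they are not a format prescribed by any regulator.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def _utc_now() -> str:
    return datetime.now(timezone.utc).isoformat()


@dataclass
class DecisionRecord:
    subject_id: str                       # pseudonymous reference to the individual
    model_version: str                    # exact version that produced the output
    inputs: Dict[str, Any]                # input data the model actually saw
    output: str                           # what the model decided or recommended
    human_override: Optional[str] = None  # reviewer's decision, if they changed it
    timestamp: str = field(default_factory=_utc_now)

    def to_json_line(self) -> str:
        """Serialise the record as a single line for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example values.
record = DecisionRecord(
    subject_id="applicant-0042",
    model_version="credit-model-1.4.2",
    inputs={"income_band": "C", "months_at_address": 18},
    output="refer",
    human_override="approve",
)
print(record.to_json_line())
```

One line per decision keeps the log easy to append to and to search when a specific individual challenges a specific outcome. How long to retain it is a policy decision that this sketch does not address.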

For high-risk AI under the EU Act, the technical documentation requirements are more extensive: training data description, design specifications, testing methodology, accuracy metrics, and known limitations.

Bias Detection and Mitigation

AI systems trained on historical data can encode historical biases. A hiring tool trained on past recruitment decisions may systematically disadvantage certain groups if those groups were historically underrepresented in successful candidates. A credit scoring model trained on postcode data may produce outcomes that correlate with protected characteristics.

Bias detection requires testing your model outputs across different demographic groups and comparing outcomes. Where disparities are identified, investigate whether they reflect genuine risk or underlying bias in the training data, and document the action taken.
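A minimal sketch of that comparison follows, assuming each model output can be reduced to a (group, selected) pair. The function names and the sample data are hypothetical. The ratio of lowest to highest selection rate is one common summary statistic; it is a prompt for investigation, not a legal test under UK or Irish law.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Share of positive outcomes per group, from (group, selected) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparity_ratio(rates: Dict[str, float]) -> float:
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


# Hypothetical test data: 10 candidates per group.
sample = (
    [("group_a", True)] * 4 + [("group_a", False)] * 6
    + [("group_b", True)] * 2 + [("group_b", False)] * 8
)
rates = selection_rates(sample)
print(rates)                   # {'group_a': 0.4, 'group_b': 0.2}
print(disparity_ratio(rates))  # 0.5
```

A low ratio does not prove unlawful discrimination, and a high one does not rule it out. The value of the exercise is the documented record of what was tested, what was found, and what was done about it.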

The Equality Act 2010 (UK) and the Employment Equality Acts (ROI) create legal exposure for discriminatory AI-driven outcomes regardless of whether an “AI Act” applies. This is existing law.

Human Oversight and the “Human in the Loop” Requirement

Both the EU AI Act (for high-risk systems) and ICO guidance (for automated decision-making) require that humans retain meaningful oversight of AI-driven decisions. “Meaningful” is the operative word — a human rubber-stamping thousands of AI decisions per day without any capacity to review the reasoning does not meet the standard.

For high-risk AI, the Act requires technical design that allows for human intervention, monitoring by qualified operators, and the ability to override or halt the system. Documentation of how oversight is implemented — who is responsible, what triggers a review, what logs are retained — is a regulatory expectation, not a nice-to-have.

Implementing an AI Compliance Programme: A Phased Approach

Most businesses are not starting from zero. You are already using AI tools — in your marketing platforms, your CRM, your HR software, your accounting system — and the first task is understanding what you have.

Phase 1 — AI Inventory and Risk Categorisation

List every AI system your business uses or develops, including third-party vendor tools where AI features are embedded. For each system, record: what it does, what data it processes, what decisions or outputs it produces, and who is affected.

Then categorise each system against the EU AI Act risk tiers (if you operate in or sell into EU markets) and identify which UK regulator’s guidance applies. A simple spreadsheet works for most SMEs at this stage. The goal is visibility, not perfection.

Questions to ask of any AI system:

- Does it make or inform decisions that affect individuals (customers, employees, applicants)?

- Does it process personal data as defined by GDPR?

- Is it used in any of the high-risk application areas listed in Annex III of the EU AI Act?

- Can we explain how it reaches its outputs?
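For teams that outgrow the spreadsheet, the inventory fields and the four questions above could be sketched as a simple record with a triage function. Everything here is illustrative: `AISystemRecord`, `review_flags`, and the example system are hypothetical, and the flags are prompts for follow-up rather than legal conclusions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AISystemRecord:
    name: str
    purpose: str                   # what it does
    data_processed: str            # what data it processes
    outputs: str                   # what decisions or outputs it produces
    affected_people: str           # who is affected
    affects_individuals: bool      # makes or informs decisions about individuals?
    processes_personal_data: bool  # personal data as defined by GDPR?
    annex_iii_use_case: bool       # used in an Annex III high-risk area?
    explainable: bool              # can we explain how it reaches its outputs?


def review_flags(record: AISystemRecord) -> List[str]:
    """Turn the answers to the four questions into follow-up prompts."""
    flags = []
    if record.annex_iii_use_case:
        flags.append("possible EU AI Act high-risk system")
    if record.processes_personal_data:
        flags.append("GDPR applies; consider whether a DPIA is needed")
    if record.affects_individuals and not record.explainable:
        flags.append("decisions about individuals cannot yet be explained")
    return flags


# Hypothetical inventory entry for a vendor HR tool.
cv_tool = AISystemRecord(
    name="Vendor CV screener",
    purpose="Ranks incoming job applications",
    data_processed="CVs and application forms",
    outputs="Shortlist recommendation per applicant",
    affected_people="Job applicants",
    affects_individuals=True,
    processes_personal_data=True,
    annex_iii_use_case=True,
    explainable=False,
)
for flag in review_flags(cv_tool):
    print("-", flag)
```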

Phase 2 — Gap Analysis Against Applicable Requirements

Once you have your inventory, map each system against the requirements that apply. For high-risk EU AI Act systems, this includes the technical documentation requirements, data governance standards, and human oversight provisions. For all systems processing personal data, this means a GDPR data protection impact assessment (DPIA) if the processing is likely to result in a high risk to individuals.

ISO/IEC 42001 — the international standard for AI management systems — provides a useful framework even for businesses not formally seeking certification. It covers governance, risk management, impact assessment, and continuous improvement. It is not legally mandatory, but it is increasingly cited by regulators as a reference point, and it demonstrates a good-faith compliance effort.

Phase 3 — Documentation, Monitoring, and Ongoing Review

This is what regulators will actually ask to see: the paper trail that demonstrates your compliance position is real rather than nominal.

The documentation that matters includes: the AI inventory itself, DPIAs for high-risk processing, records of bias testing and results, human oversight procedures, training records for staff who operate AI systems, incident response logs, and records of any vendor due diligence conducted before deploying third-party AI tools.

Monitoring means checking that your AI systems continue to perform within the parameters tested at deployment. Models drift as input data changes. A system that met your accuracy and fairness thresholds at launch may not meet them twelve months later without review.
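A periodic check of this kind can be very simple. The sketch below compares current metrics with the values recorded at deployment and reports any that have fallen by more than an agreed tolerance. The function name, metric names, figures, and tolerance are all assumptions for illustration; appropriate thresholds depend on the system and its risk profile.

```python
from typing import Dict


def metrics_below_tolerance(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float,
) -> Dict[str, float]:
    """Return each metric whose drop from baseline exceeds the tolerance.

    Assumes higher is better for every metric and that `current`
    contains every metric named in `baseline`.
    """
    drops = {}
    for name, baseline_value in baseline.items():
        drop = baseline_value - current[name]
        if drop > tolerance:
            drops[name] = round(drop, 4)
    return drops


# Hypothetical figures: values recorded at launch vs. the latest review.
at_launch = {"accuracy": 0.92, "fairness_ratio": 0.90}
latest = {"accuracy": 0.91, "fairness_ratio": 0.78}

print(metrics_below_tolerance(at_launch, latest, tolerance=0.05))
# {'fairness_ratio': 0.12}
```

Recording the result of each run, including the runs where nothing was flagged, is what turns a monitoring script into evidence of ongoing review.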

Ciaran Connolly, founder of ProfileTree, notes: “The businesses that handle AI compliance well are the ones treating it as an operational discipline rather than a legal exercise. The documentation question they should be asking is not ‘what do we need to show if audited?’ but ‘what would we need to show tomorrow morning if a regulator called today?’”

AI Compliance by Sector: Finance, Healthcare, and HR

The regulations that apply to your AI use depend heavily on what it does and who it affects. A chatbot handling customer enquiries sits in a very different compliance position to a tool that screens job applicants or flags patients for clinical review. The sector you operate in shapes both the regulator you answer to and the specific obligations you carry.

Financial Services

The FCA published its AI and Machine Learning Discussion Paper in 2022 and has since issued sector-specific guidance through the Consumer Duty framework. AI systems used in credit decisions, product recommendations, fraud detection, and customer service in UK financial services must meet the FCA’s expectations for explainability, governance, and consumer outcomes.

The Central Bank of Ireland has published separate guidance emphasising board-level accountability for AI governance in regulated firms. For firms regulated in both jurisdictions, the requirements are not identical — mapping the differences is a necessary early step.

Healthcare and Life Sciences

AI used in clinical decision support, diagnostics, or patient management may be classified as a medical device under the UK (MHRA) and EU (MDR/IVDR) frameworks, as well as under the EU AI Act. The dual classification creates significant compliance overhead. The NHS AI Lab has published implementation guidance, and the MHRA has specific guidance on software as a medical device (SaMD).

HR and Recruitment

AI-assisted recruitment (CV screening, interview scheduling, and candidate ranking) sits squarely in the EU AI Act’s high-risk category. UK employers using such tools are already exposed under the Equality Act 2010 if automated screening produces discriminatory outcomes. The ICO has published specific guidance on the use of AI in recruitment.

The practical question for SMEs using off-the-shelf HR software with AI features: Do you know what the AI is doing? Vendors often embed AI functionality without prominent disclosure. Asking the right questions during procurement is the first step. Our article on SMEs successfully implementing AI solutions covers vendor evaluation in more depth.

AI Vendor Due Diligence: Questions to Ask Before You Deploy

If you are deploying a third-party AI tool — which most businesses are — you do not inherit the vendor’s compliance status. You remain responsible for how the tool processes personal data, what decisions it informs, and whether those decisions are explainable.

Before deploying any AI tool that handles personal data or informs significant decisions, ask:

- Where is the training data sourced from, and how was consent or a lawful basis established?

- Has the model been tested for bias? On what demographic groups, and what were the results?

- What logging is available to support explainability requirements?

- Is the system certified against ISO/IEC 42001 or any other recognised standard?

- What is the vendor’s incident response process if a compliance failure is identified?

- How will the vendor support a DPIA or regulatory audit?

Under GDPR, any vendor processing personal data on your behalf requires a data processing agreement (DPA). Under the EU AI Act, “deployers” of high-risk AI systems have distinct obligations from those of the system’s provider.

Our article on AI content detection covers how AI systems behave when processing external content — relevant context for any business evaluating vendor tools.

How ProfileTree Supports AI Compliance Readiness

Building an AI compliance position requires both technical understanding and communication infrastructure. The documentation, the internal processes, and the customer-facing transparency measures all need to work together.

ProfileTree’s digital strategy team works with SMEs across Northern Ireland, Ireland, and the UK to build the digital foundations that support compliance: structured data governance, website and CMS configurations that meet transparency requirements, and training that helps business owners and their teams understand what AI tools they are actually using.

The ethics and legalities of digital marketing article covers related regulatory territory, including ASA guidance on AI-generated advertising content and GDPR requirements in marketing automation.

For teams looking to build AI literacy across their organisation — which is itself a governance requirement under emerging frameworks — our digital training programmes provide practical grounding in how AI systems work and where the risks sit.

The Future of AI Compliance: What to Plan For

The UK government has indicated it intends to introduce primary AI legislation, though the timing remains uncertain. The consultation on the Pro-Innovation Regulation of AI Bill is ongoing, and sector regulators are developing more detailed guidance under the principles set out in the White Paper.

ISO/IEC 42001 (published December 2023) will become an increasingly standard reference point for AI governance audits. Organisations that build their compliance framework around its structure now will be better positioned as formal requirements develop.

The EU AI Act’s high-risk provisions apply from August 2026, giving businesses with EU exposure approximately 18 months to build compliance programmes from the time of this article’s publication. That is not a comfortable runway for organisations starting from zero.

The direction of travel is clear: AI compliance will become more formally structured, more auditable, and more consequential. The businesses that treat it as a discipline now will have a meaningful advantage over those that wait until the requirement becomes unavoidable.

FAQs

Does the EU AI Act apply to UK companies?

Yes. If you provide AI systems to EU users or deploy AI systems in the EU market, the Act applies regardless of where your company is registered. The extraterritorial reach is similar in principle to that of the GDPR. A Belfast firm providing an AI-powered recruitment tool to a Dublin employer is within the scope of the Act’s high-risk provisions.

What are the penalties for AI non-compliance under the EU AI Act?

Fines are tiered by violation type: up to €35 million or 7% of global annual turnover for prohibited AI practices; up to €15 million or 3% of turnover for non-compliance with obligations for high-risk systems; and up to €7.5 million or 1.5% of turnover for providing incorrect information to authorities. In each tier the higher of the two figures sets the cap, except for SMEs, where the lower of the two applies.
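To see how the two figures interact, the sketch below works out the maximum possible fine for a given turnover. It assumes the rule in the Act's penalty provisions that the cap is the higher of the fixed amount and the turnover percentage, with the lower of the two applying to SMEs. The function name and the turnover figures are hypothetical, and these are ceilings, not predictions of what a regulator would actually impose.

```python
def max_fine_eur(
    fixed_cap_eur: float,
    turnover_pct: float,
    global_turnover_eur: float,
    is_sme: bool,
) -> float:
    """Upper limit of a fine: higher of the two figures, or lower for SMEs."""
    pct_amount = global_turnover_eur * turnover_pct / 100
    pick = min if is_sme else max
    return pick(fixed_cap_eur, pct_amount)


# Prohibited-practice tier: EUR 35 million or 7% of global annual turnover.
# Hypothetical large firm with EUR 2 billion turnover: 7% is EUR 140 million.
print(max_fine_eur(35_000_000, 7, 2_000_000_000, is_sme=False))  # 140000000.0

# Hypothetical SME with EUR 10 million turnover: 7% is EUR 700,000.
print(max_fine_eur(35_000_000, 7, 10_000_000, is_sme=True))      # 700000.0
```

For a large group the percentage figure dominates quickly, while for a small business the turnover-based figure is usually the binding cap.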

Is ISO/IEC 42001 mandatory?

No. ISO/IEC 42001 is a voluntary standard, not a legal requirement. It provides a recognised framework for AI management systems and is increasingly cited by regulators as evidence of good governance. Certification is not required, but aligning your processes with the standard is useful preparation for audits as formal requirements develop.

Does the UK have its own AI Act?

Not yet. The UK currently relies on existing sector regulation (FCA, ICO, MHRA) and the Data Protection Act 2018 rather than a single AI statute. The government has consulted on a principles-based framework and has indicated intent to legislate, but no primary AI Act exists as of the date of this article. This is subject to change.