Generative AI for SEO: Rank for Questions No One’s Asked Yet


Traditional SEO is losing ground while many businesses are still obsessing over keywords. ChatGPT, Claude, Perplexity, and Gemini are reshaping how people find information, yet most businesses are still optimising for a search paradigm that is fading fast. A growing share of users no longer type keywords into search boxes: they hold conversations with AI systems that synthesise information from across the web to answer questions they haven’t fully formed yet. This guide shows how to use generative AI for SEO, position your content for selection by large language models (LLMs), win visibility in generative search interfaces, and capture traffic from queries that don’t exist until the moment they’re asked.

The New Search Paradigm: Why Everything You Know About SEO Is Obsolete

Search has evolved from retrieval to generation. Users no longer want ten blue links; they want direct answers synthesised from multiple sources. When someone asks ChatGPT about “sustainable marketing strategies for Belfast restaurants,” they receive a synthesised answer drawing on multiple sources, not a list of pages to visit. Your beautifully optimised page ranking first on Google loses much of its value if AI never references it in generated responses.

The shift happened faster than anyone predicted. ChatGPT reportedly reached 100 million users in about two months. Google rushed to launch Bard (now Gemini) in response. Microsoft integrated GPT into Bing, fundamentally changing search dynamics. Perplexity emerged as a dedicated answer engine. Within roughly eighteen months, the search landscape changed more than it had in the previous decade. Businesses still optimising only for traditional search are preparing for yesterday’s war.

Northern Ireland businesses face particular challenges in this transition. Local SEO strategies that dominated Belfast search results lose much of their force when AI answers conversational, context-rich questions instead of short keyword queries. A restaurant that ranked first for “best seafood Belfast” may find AI recommending competitors when answering “where should I take my partner for an anniversary dinner near Queen’s University?” This leap in query sophistication demands new optimisation approaches.

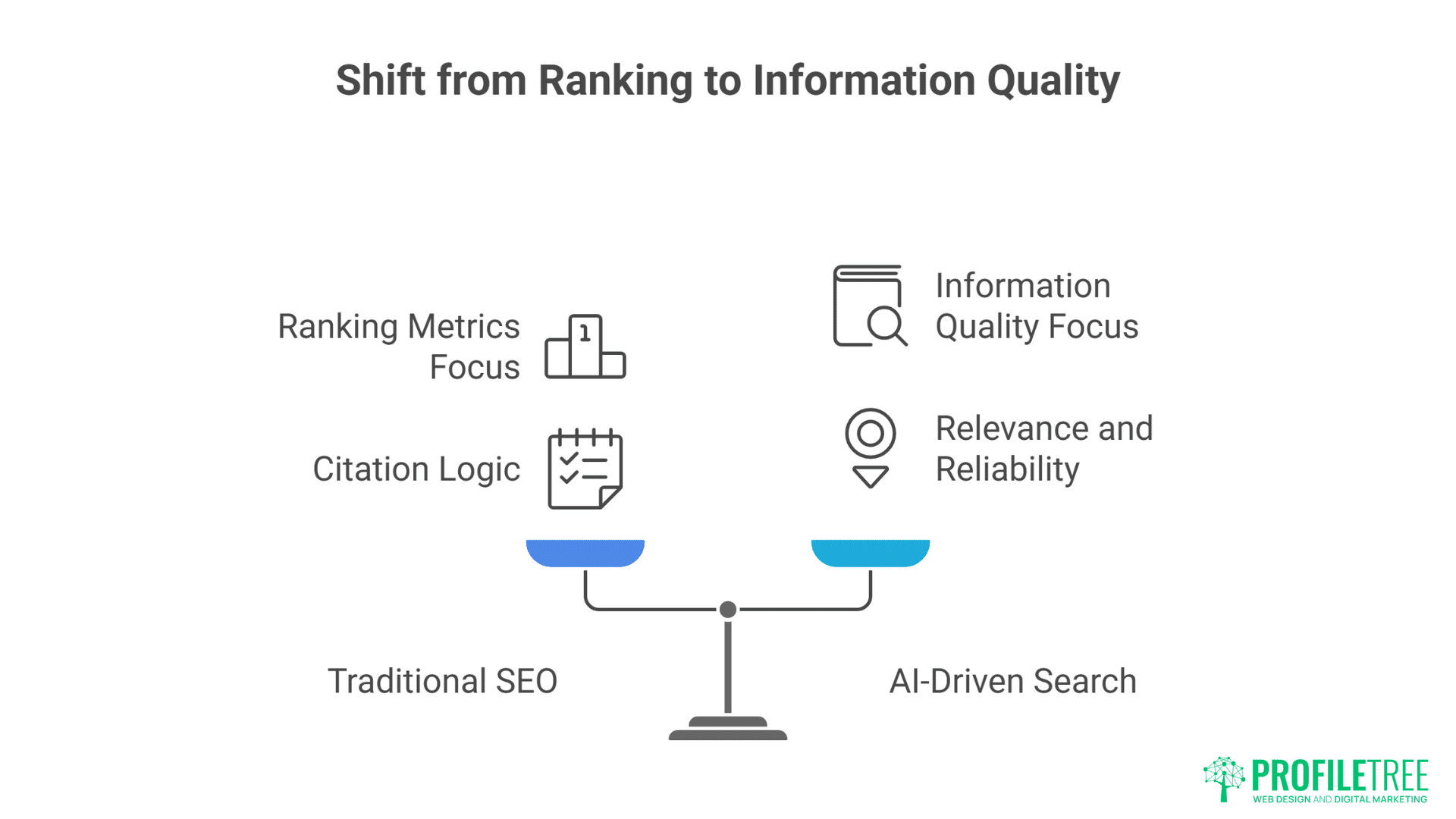

A base LLM doesn’t “search” in any traditional sense. It has been trained on massive datasets, encoding information into parameter weights. When generating responses, it isn’t retrieving specific pages but reconstructing information from learnt patterns. This difference means traditional SEO signals (backlinks, keyword density, page authority) matter less to the base model than information clarity, factual accuracy, and semantic richness.

The citation revolution changes the game entirely. Perplexity provides sources for its claims. Microsoft Copilot (formerly Bing Chat) links to references. Google’s AI Overviews, which grew out of the Search Generative Experience (SGE), highlight source websites. But citation doesn’t follow traditional ranking logic: the third Google result might get cited while the first gets ignored. AI systems appear to select sources on information quality, topical relevance, and factual reliability rather than on traditional SEO metrics alone.

Understanding How LLMs Actually Select and Present Information

Large Language Models don’t just “know” answers — they generate them by predicting what words make sense next. This means their responses are shaped by training data, probabilities, and the way prompts guide them. To optimise for SEO in this new landscape, you need to understand how LLMs choose, filter, and frame information.

The Training Data Advantage

LLMs learn from content published before their training cutoffs, and those cutoffs differ by model version: the original GPT-4 Turbo preview’s training data extends to April 2023, for example, while more recent Claude models reach into 2024. Content published within a training window gets encoded into model parameters, giving it an advantage over content published later. This creates a paradox: older, well-established content has an inherent advantage in AI responses, regardless of freshness.

Training data quality matters more than quantity. LLMs learnt to associate certain sources with reliability. Academic papers, established news outlets, and authoritative websites get preferential treatment in response generation. A Wikipedia article from 2019 might outweigh a perfectly optimised blog post from 2024 because the model learnt to trust Wikipedia during training.

The Common Crawl effect shapes AI knowledge. Many LLMs are trained partly on filtered Common Crawl data, a massive web scrape published in periodic snapshots. Sites captured in those snapshots are more likely to be represented in base-model knowledge; sites that are excluded, whether by blocking Common Crawl’s crawler (CCBot) in robots.txt or simply by being rarely crawled, are less likely to be, regardless of quality. Understanding how Common Crawl discovers and crawls pages therefore matters for long-term AI visibility.
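To check whether your robots.txt lets Common Crawl and other AI-related crawlers in, Python’s standard-library `urllib.robotparser` can evaluate the rules offline. The sketch below is a minimal audit, assuming you paste in your robots.txt contents; the token list is an illustrative selection (Google-Extended is a robots.txt control token rather than a separate crawler), so check each operator’s current documentation before relying on it.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt product tokens tied to AI training or AI search.
# Verify each against the operator's current documentation.
AI_CRAWLER_TOKENS = ["CCBot", "GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str, agents=AI_CRAWLER_TOKENS) -> dict:
    """Return {token: True/False} for whether each agent may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return {agent: parser.can_fetch(agent, url) for agent in agents}


robots = """User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""
print(crawler_access(robots, "https://example.com/guide"))
```

Fetching the live file with `RobotFileParser.set_url()` and `read()` works too; parsing a string keeps the audit repeatable.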

Retrieval-Augmented Generation (RAG) systems bridge the knowledge gap. Modern AI systems supplement trained knowledge with real-time web searches. When you ask ChatGPT about current events, it can search the web, then synthesise the findings. This dual system creates two optimisation targets: base model training and RAG retrieval. Success requires addressing both.

Fine-tuning creates preference biases. Organisations fine-tune base models for specific purposes, adjusting which information gets prioritised. OpenAI used reinforcement learning from human feedback to tune GPT models for helpfulness and safety. Anthropic trained Claude to be helpful, honest, and harmless, partly through its constitutional AI approach. These adjustments likely affect which content gets selected for response generation, so understanding each model’s fine-tuning priorities can help you anticipate content selection.

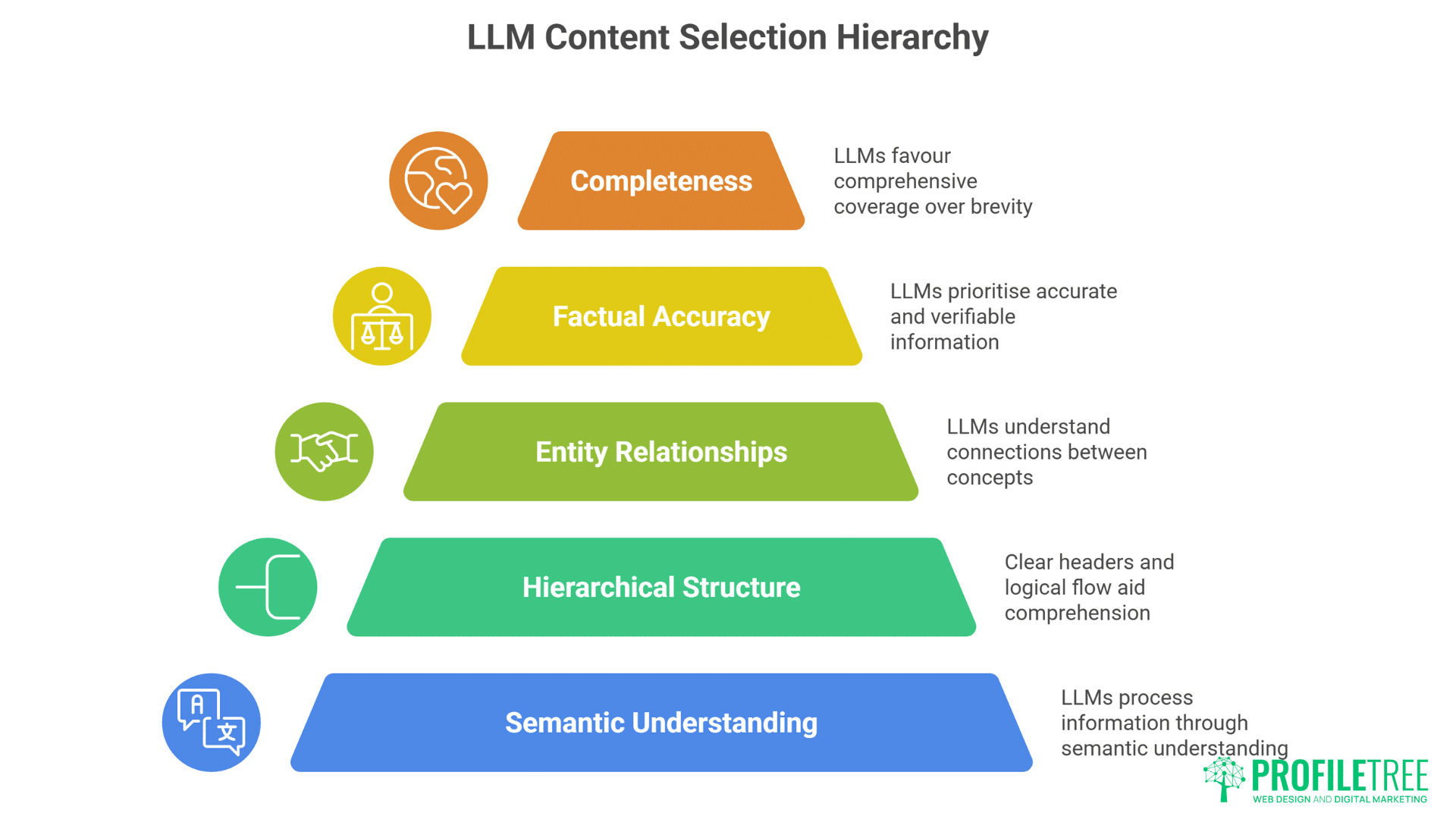

Semantic Density and Information Architecture

LLMs process information through semantic understanding rather than keyword matching. Dense, information-rich content that thoroughly explores topics gets preferentially selected over keyword-optimised but shallow pages. A comprehensive guide covering all aspects of a topic outperforms multiple thin pages targeting individual keywords.

Hierarchical information structure aids AI comprehension. Clear headers, logical flow, and explicit relationships between concepts help LLMs understand and extract information. Content organised with semantic HTML, proper heading hierarchy, and logical sections gets parsed more accurately than visually formatted but semantically poor pages.
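A quick way to catch heading-hierarchy problems is to parse a page and flag skipped levels. This sketch uses Python’s built-in `html.parser`; the one-`<h1>`-per-page rule it checks is a common convention rather than a formal requirement, and `heading_problems` is a hypothetical helper name.

```python
from html.parser import HTMLParser


class HeadingAudit(HTMLParser):
    """Collects h1-h6 heading levels in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # html.parser lower-cases tag names; match only h1..h6 (not <hr>, <head>).
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html: str) -> list[str]:
    """List hierarchy issues: missing or repeated <h1>, and skipped levels."""
    audit = HeadingAudit()
    audit.feed(html)
    problems = []
    h1_count = audit.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected one <h1>, found {h1_count}")
    for prev, cur in zip(audit.levels, audit.levels[1:]):
        if cur > prev + 1:  # e.g. an h2 followed directly by an h4
            problems.append(f"heading jumps from h{prev} to h{cur}")
    return problems
```

Going back up the hierarchy (h4 to h2) is fine; only downward jumps that skip a level are flagged.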

Entity relationships matter more than keywords. LLMs understand connections between concepts, people, places, and things. Content that explicitly defines these relationships – “ProfileTree, a Belfast-based digital agency, serves clients across Northern Ireland” – provides clearer signals than keyword-stuffed text. Building rich entity graphs within content improves AI selection probability.
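Entity relationships like the ProfileTree example can also be stated in machine-readable form. A minimal sketch, assuming Schema.org’s `Organization` type with its `address` and `areaServed` properties; the function name and the placeholder URL are illustrative, and real markup would usually carry more properties (logo, contact details, `sameAs` profile links).

```python
import json


def organization_jsonld(name, city, areas_served, url, same_as=()):
    """Build a Schema.org Organization entity as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "address": {"@type": "PostalAddress", "addressLocality": city},
        # areaServed states the "serves clients across ..." relationship explicitly.
        "areaServed": [{"@type": "Place", "name": area} for area in areas_served],
    }
    if same_as:
        # Links to the same entity elsewhere (e.g. official social profiles).
        data["sameAs"] = list(same_as)
    return json.dumps(data, indent=2)


print(organization_jsonld("ProfileTree", "Belfast", ["Northern Ireland"], "https://example.com"))
```

The output belongs inside a `<script type="application/ld+json">` element on the page.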

Factual accuracy creates trust signals. LLMs are trained to favour accurate information. Content containing verifiable facts, proper citations, and correct information tends to be selected over speculative or inaccurate content. Even a single factual error can undermine a page’s credibility with AI systems, regardless of other optimisation efforts.

Completeness beats brevity for AI selection. While human readers might prefer concise content, LLMs favour comprehensive coverage. Answer anticipatory questions, address edge cases, provide context. The goal isn’t word count but information completeness: if your page answers only part of a question, AI may cite a competitor for the missing elements.

The Context Window Opportunity

Modern LLMs have large context windows: current Claude models handle 200,000 tokens (the original Claude 2 release handled 100,000), and GPT-4 Turbo manages 128,000. This means AI can process very long documents, or large parts of a website, in a single prompt. Optimising for context window inclusion means ensuring your most valuable information appears within typical extraction limits.

Front-loading critical information improves selection probability. Retrieval pipelines often split long content into chunks or truncate it, so only some portions reach the model. Place key facts, unique insights, and authoritative statements early in content. Conclusion-first writing, which traditional SEO often discouraged, becomes advantageous for AI optimisation.

Structured data markup guides AI extraction. Schema.org markup, usually expressed as JSON-LD, helps LLMs and search systems understand content organisation. FAQPage schema explicitly identifies questions and answers. HowTo schema clarifies instructional content. Product schema defines commercial information. This structured approach improves accurate extraction and citation.
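FAQPage markup pairs each `Question` with an `acceptedAnswer`. The sketch below generates it from plain question/answer pairs; `faq_page_jsonld` is a hypothetical helper. Google has restricted FAQ rich results to a small set of authoritative sites, so treat this markup as a machine-readable summary for parsers rather than a guaranteed search feature.

```python
import json


def faq_page_jsonld(pairs):
    """Turn (question, answer) pairs into Schema.org FAQPage JSON-LD."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


print(faq_page_jsonld([
    ("Should I block AI crawlers?", "It depends on whether you want AI visibility."),
]))
```

Keep the marked-up answers identical to the visible on-page text so parsers and readers see the same content.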

Cross-reference density indicates topical authority. Internal links between related content pieces signal comprehensive coverage. AI systems may read these patterns as evidence that a site with rich internal connections has deeper topical expertise. Build content clusters with extensive interlinking to signal authority.

Token efficiency maximises impact within limits. Understanding tokenisation helps optimise content for AI processing. Short, clear sentences using common words require fewer tokens than complex, jargon-heavy text. Efficient token usage means more of your content gets processed within context limits.

Generative AI for SEO: Optimisation Strategies for Different AI Platforms

Each AI platform interprets and surfaces content differently, which means one-size-fits-all SEO won’t cut it. From search-integrated models to standalone chatbots, the optimisation levers vary by ecosystem.

Knowing these nuances lets you craft strategies that align with how each platform discovers and delivers answers.

ChatGPT and GPT-Powered Systems

OpenAI’s web browsing feature fundamentally changed ChatGPT’s information access. The system performs web searches, selects relevant pages, and synthesises information. Optimising for ChatGPT’s browser requires understanding its selection criteria: relevance to query, information density, and source credibility.

Title tags and meta descriptions likely influence ChatGPT’s selection. While not directly visible in responses, these elements affect whether ChatGPT chooses your page during web browsing. Write descriptive titles that clearly indicate content coverage. Meta descriptions should summarise unique value rather than repeat keywords.

URL structure matters for ChatGPT comprehension. Clean, descriptive URLs help ChatGPT understand page content before visiting. “/ai-marketing-strategies-belfast-businesses” outperforms “/page?id=12345” for selection probability. Implement logical URL hierarchies that reflect content organisation.
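Descriptive slugs like the one above can be generated from page titles. A minimal sketch, assuming ASCII-only, hyphen-separated slugs and a small hypothetical stop-word list; adjust both to your CMS conventions.

```python
import re
import unicodedata

# Hypothetical stop-word list: words that rarely add meaning to a URL.
STOP_WORDS = {"a", "an", "and", "for", "in", "of", "the", "to"}


def slugify(title: str, max_words: int = 8) -> str:
    """Turn a page title into a short, descriptive, hyphenated URL slug."""
    # Decompose accented letters, then drop anything non-ASCII ("é" -> "e";
    # curly apostrophes disappear entirely).
    text = unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    # Remove straight apostrophes so "Queen's" becomes "queens", not "queen-s".
    text = text.lower().replace("'", "")
    words = [w for w in re.findall(r"[a-z0-9]+", text) if w not in STOP_WORDS]
    return "-".join(words[:max_words])
```

For example, `slugify("AI Marketing Strategies for Belfast Businesses")` gives `ai-marketing-strategies-belfast-businesses`.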

JavaScript rendering affects ChatGPT access. The browsing feature sometimes struggles with JavaScript-heavy sites or complex layouts. Ensure core content remains accessible with JavaScript disabled. Implement proper semantic HTML that works without client-side rendering. Professional web development ensures technical compatibility with AI crawlers.
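One way to test this is to fetch a page’s raw HTML (for example with `urllib.request` or `curl`) and confirm your key phrases appear before any JavaScript runs. The sketch below assumes you already have the raw HTML as a string; `missing_without_js` is a hypothetical helper, and it only approximates what a non-rendering crawler extracts.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text present in raw HTML, ignoring script/style/template bodies."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.depth = 0  # > 0 while inside a skipped element
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.depth:
            self.depth -= 1

    def handle_data(self, data):
        if not self.depth:
            self.chunks.append(data)


def missing_without_js(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in server-rendered text."""
    parser = VisibleText()
    parser.feed(raw_html)
    # Join without separators (inline tags can split words), then collapse whitespace.
    text = " ".join("".join(parser.chunks).split()).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]
```

Any phrase returned here is probably injected client-side, so a crawler that doesn’t execute JavaScript won’t see it.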

API accessibility provides direct integration opportunities. Businesses can build custom GPTs with Actions (the successor to the now-retired ChatGPT plugins) or GPT-powered applications that access their content directly. Creating API endpoints for your valuable content enables deeper integration than traditional web crawling allows.

Claude and Anthropic’s Approach

Claude prioritises factual accuracy and comprehensive responses. Anthropic’s constitutional AI training makes Claude especially sensitive to misleading or biased content. Optimisation requires scrupulous accuracy, balanced perspectives, and explicit acknowledgement of limitations or uncertainties.

Long-form content excels with Claude’s extended context window. Claude can process book-length documents in a single prompt, making comprehensive resources particularly valuable. Create definitive guides, exhaustive documentation, and complete references that Claude can process holistically rather than fragmentarily.

Citation formatting influences Claude’s source attribution. Clear source citations, author credentials, and publication dates help Claude accurately attribute information. Include inline citations, footnotes, or bibliography sections that explicitly identify information sources.

Nuanced discussion outperforms absolutist statements. Claude’s training emphasised thoughtful, balanced responses. Content acknowledging complexity, discussing tradeoffs, and presenting multiple viewpoints gets preferentially selected over black-and-white proclamations.

Technical accuracy matters intensely for Claude selection. Anthropic trained Claude to identify and avoid technical errors. Code examples must be correct. Mathematical calculations need precision. Technical specifications require accuracy. A single technical error can undermine the credibility of otherwise strong content.

Perplexity and Answer Engines

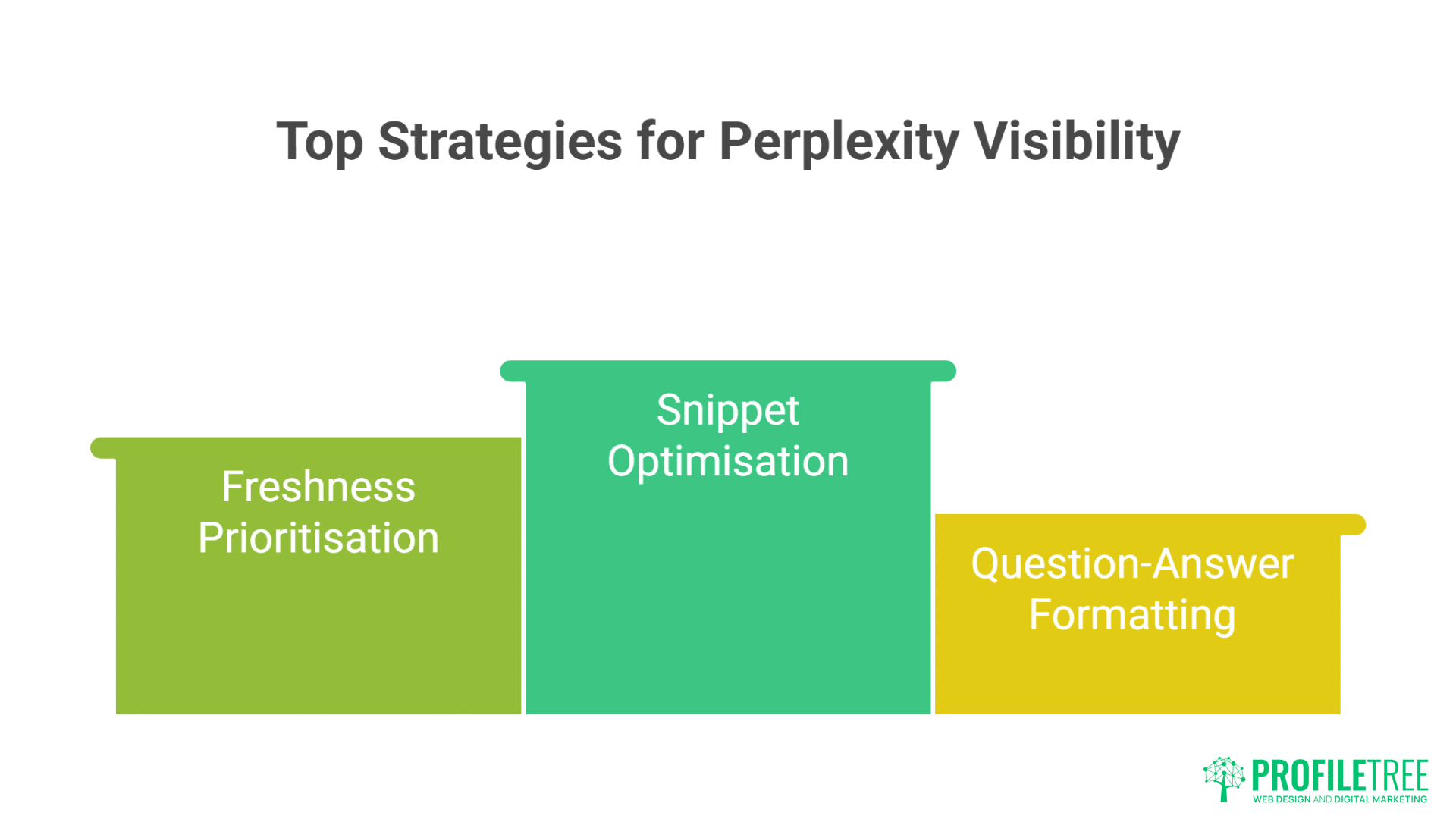

Perplexity explicitly shows source selection, providing unique optimisation insights. Pages cited by Perplexity display alongside answers, driving direct traffic. Perplexity doesn’t publish its selection algorithm, but studying which pages it cites for your target questions helps you achieve more consistent citation visibility.

Snippet optimisation determines Perplexity selection. Perplexity extracts specific passages to support claims. Write self-contained paragraphs that completely answer specific questions. Each paragraph should function as a standalone snippet while contributing to overall narrative.

Freshness matters more for Perplexity than base LLMs. Perplexity prioritises recent content for time-sensitive queries. Regular updates, publication dates, and “last modified” timestamps signal content freshness. Maintain content currency to preserve Perplexity visibility.

Question-answer formatting aligns with Perplexity’s mission. Structure content as explicit questions and comprehensive answers. Use FAQ sections, Q&A formats, and problem-solution frameworks. This alignment with Perplexity’s answer engine purpose improves selection probability.

Source diversity strategies multiply citation opportunities. Perplexity often cites multiple sources per answer. Position different content pieces to capture various citation opportunities within single responses. Diversified content improves overall citation frequency.

Google’s Search Generative Experience (SGE)

SGE, which Google has since rolled out as AI Overviews, blends traditional search with AI generation, creating hybrid optimisation requirements. Content must rank traditionally while also being suitable for AI synthesis. This dual requirement demands satisfying both algorithmic ranking factors and AI selection criteria.

Featured snippet optimisation translates to SGE success. Content optimised for featured snippets often gets selected for SGE responses. Structure content with clear, concise answers followed by elaboration. Use lists, tables, and structured formats that extract cleanly.

E-E-A-T signals influence SGE selection more than pure AI systems. Google’s emphasis on Experience, Expertise, Authoritativeness, and Trustworthiness extends to SGE. Demonstrate credentials, showcase experience, and build topical authority. SEO services that establish E-E-A-T benefit both traditional and AI-driven search.

Local relevance affects SGE for geographic queries. Google’s SGE considers user location and local intent. Include geographic markers, local context, and region-specific information. Belfast businesses should emphasise local relevance while maintaining broader appeal.

Multimedia integration enhances SGE selection. Google’s SGE can incorporate images, videos, and other media. Create rich multimedia content that provides visual answers alongside text. Video marketing becomes increasingly important as SGE evolves to include diverse media types.

Creating Content for Questions That Don’t Exist Yet

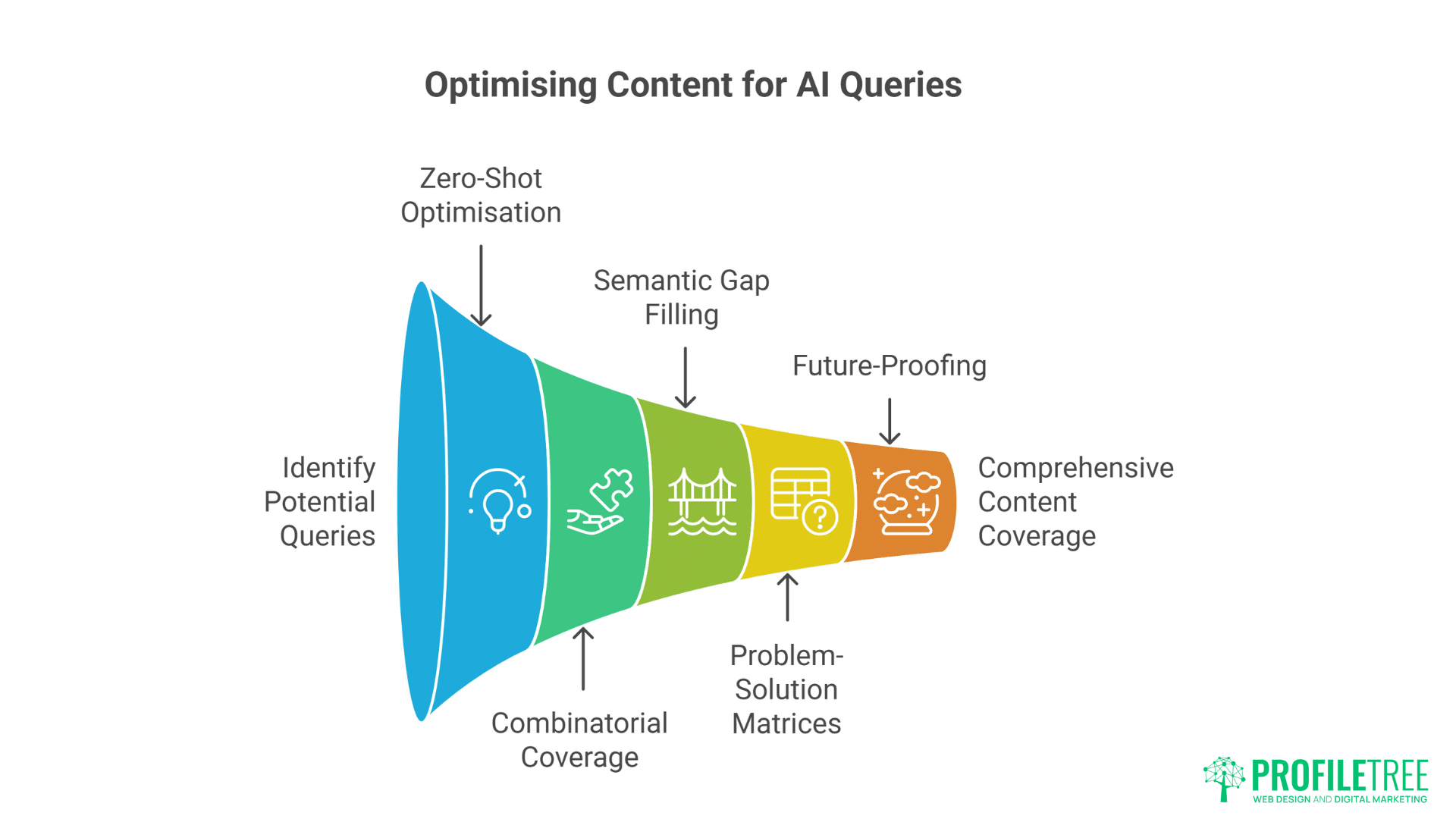

Most content today answers questions people are already asking—but generative AI changes the game.

LLMs can surface and remix ideas users haven’t explicitly searched for, creating demand ahead of the curve. By anticipating these unasked questions, you position your content to lead conversations rather than follow them.

Anticipatory Content Strategy

Zero-shot query optimisation targets questions nobody has asked. LLMs excel at answering novel questions by combining learnt information. Create content addressing potential questions by thoroughly exploring topic spaces. Cover edge cases, unusual scenarios, and hypothetical situations that traditional keyword research misses.

Combinatorial coverage addresses query permutations. Users ask the same question countless ways. Instead of targeting specific phrasings, cover concept combinations comprehensively. Discuss “AI marketing for restaurants,” “restaurant AI strategies,” “hospitality automation marketing,” and related permutations within single comprehensive resources.

Semantic gap filling identifies uncovered territories. Analyse existing content to identify information gaps between related topics. If content exists about “social media marketing” and “Belfast restaurants” separately, create content explicitly connecting these concepts. LLMs synthesise from available information, so filling gaps improves selection probability.
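Combinatorial coverage and gap filling can be checked mechanically: list the terms for each facet, form every combination, and flag combinations that no single section discusses together. A rough sketch, assuming plain substring matching (a real audit would handle synonyms and inflections); the facet terms and function name are illustrative.

```python
from itertools import product


def uncovered_combinations(facets: list[list[str]], sections: list[str]) -> list[tuple[str, ...]]:
    """Return facet-term combinations that no single section mentions together."""
    lowered = [section.lower() for section in sections]
    gaps = []
    for combo in product(*facets):  # one term from each facet
        terms = [term.lower() for term in combo]
        if not any(all(term in section for term in terms) for section in lowered):
            gaps.append(combo)
    return gaps


facets = [["social media marketing", "AI marketing"], ["Belfast restaurants", "hospitality"]]
sections = [
    "Social media marketing for Belfast restaurants: channels, budgets, timing.",
    "How AI marketing is changing hospitality.",
]
print(uncovered_combinations(facets, sections))
```

Each returned pair is a candidate for content that explicitly connects the two concepts.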

Problem-solution matrices ensure comprehensive coverage. List potential problems your audience faces, then create content addressing each solution thoroughly. This systematic approach ensures content exists for any problem-based query users might pose to AI systems.

Future-proofing through speculative content pays dividends. Discuss emerging trends, potential developments, and future scenarios. When these become reality, your content already exists in training data or crawl indexes. Early coverage of “AI impact on Belfast hospitality” positions you for when this becomes a pressing concern.

Conversational Content Architecture

Dialogue-based formatting mirrors AI interactions. Structure content as conversations, with questions and detailed answers. This format aligns with how users interact with AI, making content naturally suitable for extraction and synthesis.

Progressive disclosure accommodates varying query depth. Start with simple answers, then provide increasing detail. This structure serves both quick factual queries and deep exploratory conversations. AI can extract appropriate depth based on user needs.

Contextual branching addresses follow-up questions. Anticipate what users might ask next and provide that information. If discussing restaurant marketing, address follow-up questions about budget, timeline, and implementation. This branching structure supports multi-turn AI conversations.

Narrative coherence ensures extractable insights. Tell complete stories that AI can summarise or excerpt. Case studies, examples, and scenarios provide concrete illustrations AI can reference when generating responses.

Voice consistency aids AI attribution. Maintain consistent authorial voice across content. This consistency helps AI accurately attribute information to your brand when citing sources. Distinctive voice becomes competitive advantage in AI citations.

Multi-Modal Optimisation

Text-to-image AI integration expands visibility. As AI increasingly generates visual content, text descriptions of visual concepts gain importance. Describe designs, layouts, and visual strategies in detail. This text becomes training data for image generation AI.

Code examples for technical queries provide concrete value. Include actual, functional code snippets for technical topics. AI systems can extract and adapt these examples for user queries. Ensure code is correct, well-commented, and follows best practices.

Data tables and structured information extract cleanly. Present data in tables, lists, and structured formats. These elements extract reliably for AI synthesis. Include raw data alongside analysis for maximum utility.

Interactive elements translate to conversational guidance. While AI can’t reproduce interactive features, it can describe them. Document interactive tools, calculators, and features textually. This documentation becomes instruction sets AI can provide to users.

Template provision enables AI-assisted implementation. Provide templates, frameworks, and blueprints users can adapt. AI can reference these resources when helping users implement strategies. Digital training materials that include templates become valuable AI references.

Technical Implementation for AI Crawlers

AI crawlers fetch pages much like traditional search bots, but the systems behind them parse, segment, and embed content for retrieval. This requires structuring your site in ways that are machine-readable, context-rich, and semantically linked. Proper technical setup ensures your content is not just found, but actually usable by generative AI systems.

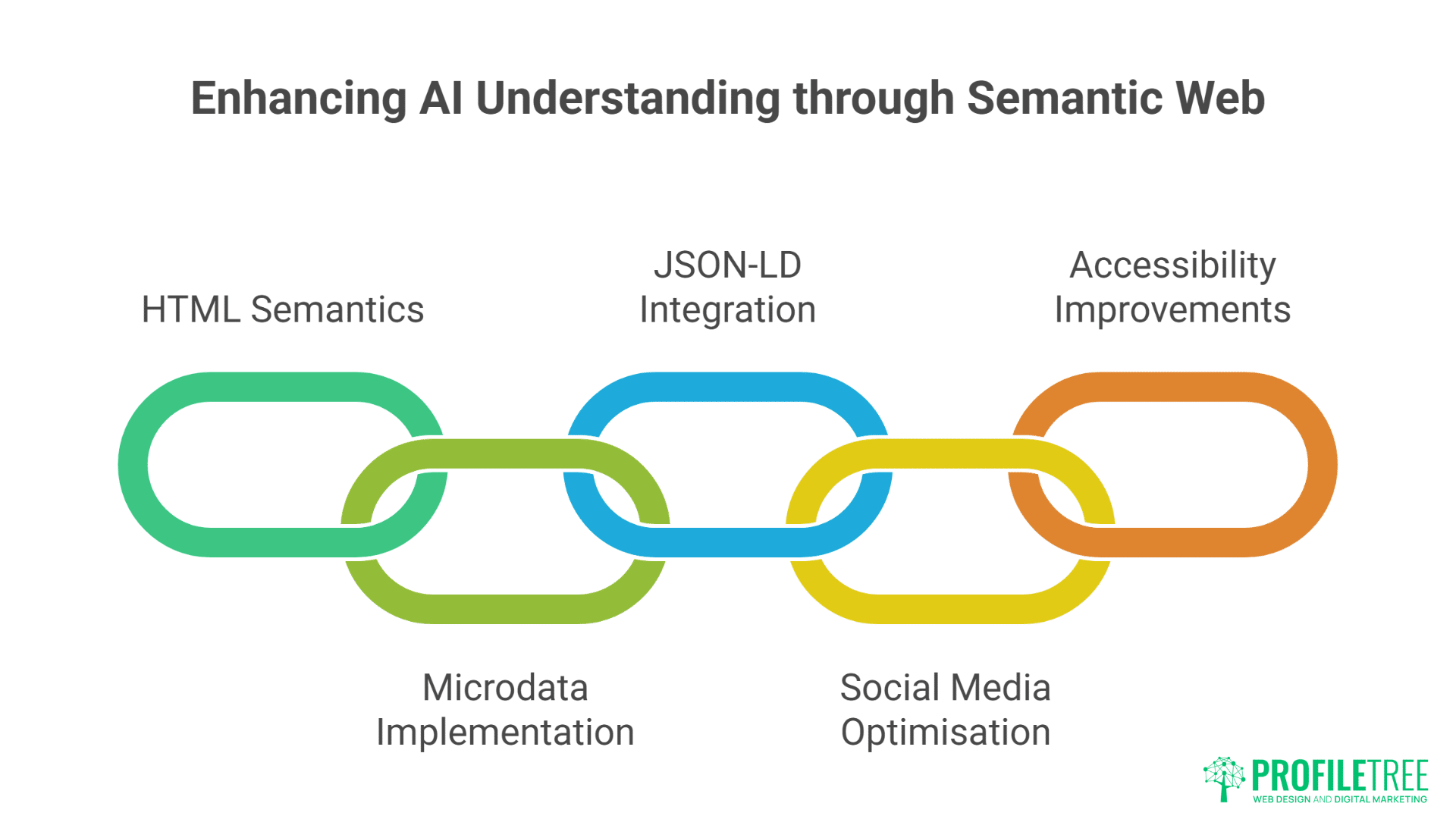

Semantic HTML and Structured Markup

Proper HTML semantics guide AI interpretation. Use article, section, nav, aside, and other semantic elements correctly. These tags help AI understand content structure and relationships. Semantic HTML isn’t just accessibility best practice – it’s AI optimisation necessity.

Microdata implementation provides explicit signals. Schema.org vocabulary can be expressed as Microdata, RDFa, or JSON-LD (Google recommends JSON-LD); whichever syntax you choose, mark up people, places, organisations, events, and relationships comprehensively. This explicit structuring helps AI accurately interpret and attribute information.

JSON-LD provides machine-readable context. Include JSON-LD scripts that explicitly define content structure, relationships, and metadata. This machine-readable format ensures AI correctly interprets complex information structures.

Open Graph and X (formerly Twitter) Cards may influence social AI. As AI systems increasingly ingest social media, OG tags and card tags can affect how content gets interpreted. Optimise these elements for accurate representation across platforms.

Accessibility improvements aid AI comprehension. Alt text, ARIA labels, and proper heading structure help AI understand content similarly to screen readers. Accessibility optimisation simultaneously serves users and AI systems.

Site Architecture for AI Discovery

Flat architecture improves crawl efficiency. Minimise click depth to important content. AI crawlers, like traditional search bots, might not explore deeply nested pages. Keep valuable content within three clicks of the homepage.
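Click depth is the shortest path from the homepage through internal links, which a breadth-first search computes directly. This sketch assumes you already have an internal link map (for example, exported from a site crawler) as a dictionary from each page to the pages it links to; the three-click threshold mirrors the guideline above, and the function names are illustrative.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage; depth = minimum clicks to reach a page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links: dict[str, list[str]], max_clicks: int = 3, home: str = "/") -> list[str]:
    """Pages deeper than max_clicks, plus orphans unreachable from the homepage."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    deep = {page for page, depth in depths.items() if depth > max_clicks}
    orphans = all_pages - set(depths)
    return sorted(deep | orphans)
```

Orphans matter as much as deep pages: with no internal path to them, crawlers must rely on sitemaps or external links to find them.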

XML sitemaps require strategic construction. Use your sitemap to point AI crawlers at priority pages. Include accurate lastmod dates; Google states that it ignores the priority and changefreq fields, so don’t rely on them. Guide AI crawlers to your most valuable, recently updated content.
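A sitemap carrying accurate `lastmod` values can be generated with Python’s standard library. A minimal sketch, assuming you know each page’s last substantive change date; it follows the sitemaps.org 0.9 schema and omits `priority` and `changefreq`, which Google says it ignores. `build_sitemap` is a hypothetical helper.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> str:
    """Render (url, last_modified) pairs as a sitemaps.org XML sitemap string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    # List the most recently updated pages first.
    for loc, modified in sorted(pages, key=lambda page: page[1], reverse=True):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # W3C date format (YYYY-MM-DD); change it only when content really changes.
        ET.SubElement(url, "lastmod").text = modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(build_sitemap([
    ("https://example.com/guide", date(2024, 3, 1)),
    ("https://example.com/faq", date(2024, 1, 5)),
]))
```

Bumping `lastmod` without a real content change erodes trust in the field, so tie it to your CMS’s edit history.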

Internal linking density signals topical authority. Rich internal connections between related content indicate comprehensive coverage. AI systems recognise these patterns as expertise signals. Build topic clusters with extensive cross-referencing.

Hub pages aggregate topical information. Create comprehensive resource pages that link to all related content. These hubs become efficient extraction points for AI systems seeking comprehensive information about specific topics.

API documentation enables direct integration. Provide clear API documentation for systems wanting programmatic access. This technical openness encourages AI platforms to integrate your content directly rather than relying on web crawling.

Performance Optimisation for AI Access

Page speed affects AI crawler efficiency. Slow-loading pages might time out or get skipped by AI crawlers. Optimise performance to ensure complete content indexing. Target sub-second server response times and complete page loads under three seconds.

Progressive enhancement ensures content accessibility. Build pages that work without JavaScript, then enhance with client-side features. This approach ensures AI crawlers can access content regardless of JavaScript execution capabilities.

Content delivery networks improve global accessibility. CDNs ensure fast access from any location. As AI companies operate globally, geographic performance variations affect crawl success. Implement CDN delivery for consistent worldwide access.

Rate limiting requires careful configuration. Don’t accidentally block AI crawlers with aggressive rate limiting. Identify AI crawler user agents and allowlist them appropriately. Monitor logs for AI crawler activity patterns.
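Access logs show which AI crawlers actually visit. A minimal sketch, assuming logs in the common Apache/Nginx combined format (user agent as the final quoted field) and a hand-picked list of published crawler tokens; user agents can be spoofed, so confirm high-volume traffic against the IP ranges some operators publish before allowlisting it.

```python
import re
from collections import Counter

# Illustrative user-agent tokens; verify against each operator's documentation.
AI_USER_AGENT_TOKENS = [
    "GPTBot",         # OpenAI crawler for training data
    "OAI-SearchBot",  # OpenAI search crawler
    "ChatGPT-User",   # fetches triggered by ChatGPT users
    "ClaudeBot",      # Anthropic crawler
    "PerplexityBot",  # Perplexity crawler
    "CCBot",          # Common Crawl
]

# Combined Log Format: the user agent is the last double-quoted field on the line.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def ai_crawler_hits(log_lines) -> Counter:
    """Count requests per AI crawler token in access-log lines."""
    counts = Counter()
    for line in log_lines:
        match = UA_PATTERN.search(line)
        if not match:
            continue
        user_agent = match.group(1).lower()
        for token in AI_USER_AGENT_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break  # attribute each request to one crawler only
    return counts
```

Pass an open log file directly (`ai_crawler_hits(open("access.log"))`) since the function iterates line by line.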

Caching strategies balance freshness with performance. Implement intelligent caching that serves fresh content to AI crawlers while maintaining performance. Use cache headers to indicate content freshness accurately.

Measuring Success in the AI-First World

Traditional SEO metrics like clicks and impressions only tell part of the story in an AI-first landscape.

You’ll need to track visibility within AI answers, brand mentions, and conversational relevance.

Success now means measuring influence on generated responses, not just ranking on search results pages.

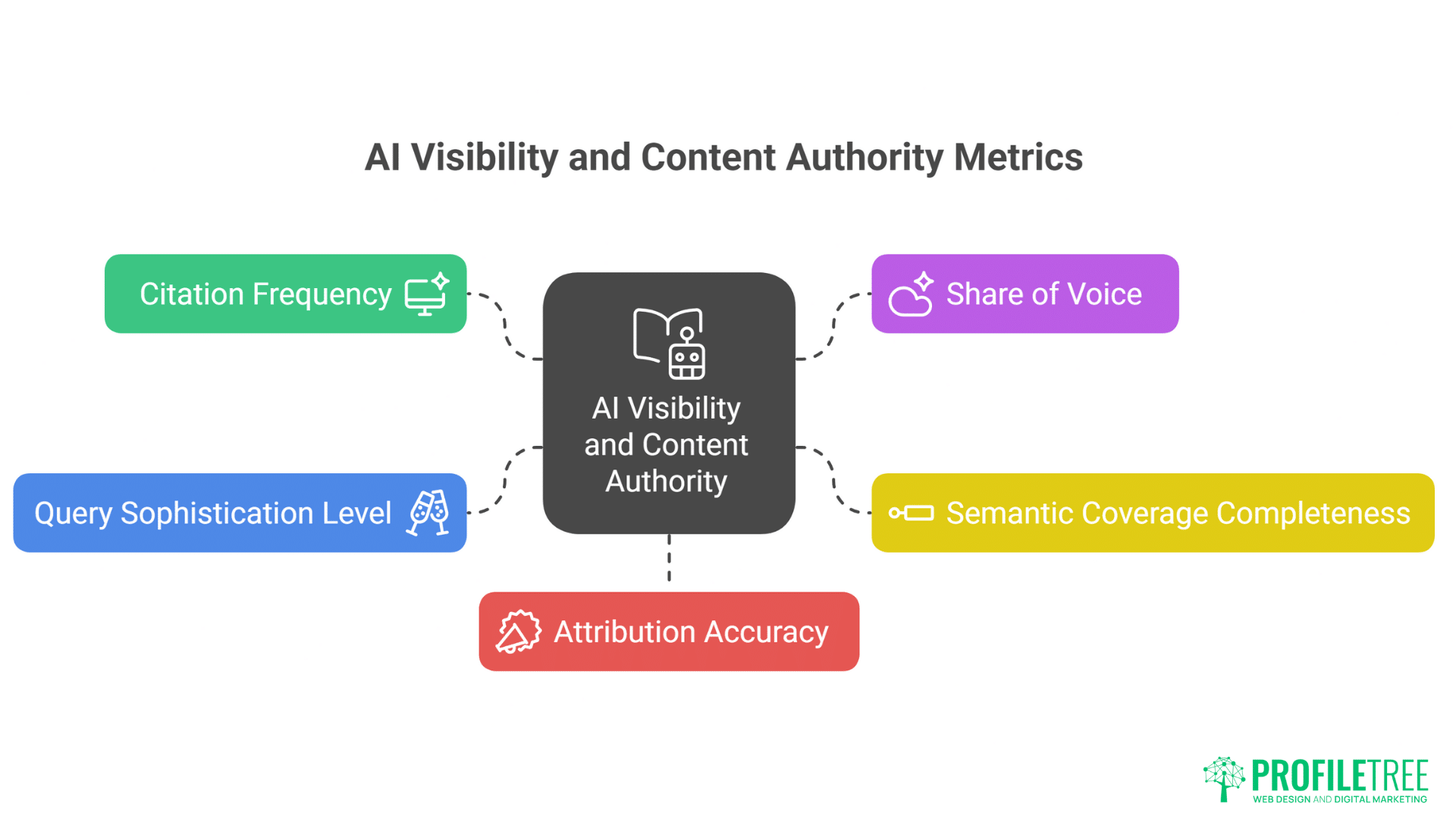

New Metrics for New Paradigms

Citation frequency replaces traditional rankings. Track how often AI systems cite your content rather than search positions. Tools like Perplexity show explicit citations. Monitor ChatGPT and Claude responses for brand mentions and content references.

Share of voice in AI responses indicates market position. What percentage of AI responses about your topic reference your content? This metric reveals true AI visibility beyond traditional search metrics.
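Share of voice reduces to a simple ratio once you have collected responses. The sketch assumes a hypothetical data shape: one list of cited URLs per AI response, gathered by running a fixed set of prompts on each platform. It counts a response as citing you if any URL falls on your domain or a subdomain.

```python
from urllib.parse import urlparse


def _on_domain(url: str, domain: str) -> bool:
    """True if `url` is hosted on `domain` or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def share_of_voice(responses: list[list[str]], domain: str) -> float:
    """Fraction of AI responses that cite at least one URL on `domain`."""
    if not responses:
        return 0.0
    citing = sum(1 for urls in responses if any(_on_domain(u, domain) for u in urls))
    return citing / len(responses)
```

Running the same prompt set each month and comparing your share against competitors’ domains turns this into a trend line rather than a snapshot.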

Semantic coverage completeness measures topical authority. How completely does your content cover semantic space around target topics? Use entity analysis tools to map coverage and identify gaps.

Query sophistication level indicates content depth. Track whether AI references your content for simple factual queries or complex analytical questions. Higher sophistication queries suggest deeper topical authority.

Attribution accuracy reveals brand building success. When AI mentions your brand or content, is attribution accurate? Misattribution suggests unclear brand signalling requiring correction.

Tracking and Analytics Evolution

AI response monitoring requires new tools. Services are emerging to track AI citations across platforms. Implement monitoring for brand mentions, content references, and topical citations across major AI platforms.

Sentiment analysis of AI responses reveals perception. How do AI systems characterise your brand or content? Analyse emotional tone and context of AI references. Negative characterisation requires content strategy adjustment.

Competitive intelligence through AI responses uncovers gaps. Analyse which competitors get cited for specific queries. Identify why competitors get selected and adjust strategy accordingly. Digital strategy consultation helps interpret these insights for strategic advantage.

User journey mapping includes AI touchpoints. Track how users move between AI platforms and your website. Understand which AI interactions drive valuable traffic versus casual browsers.

Conversion attribution extends to AI-driven traffic. Implement tracking that identifies visitors arriving from AI platforms. Understand conversion rates and values from AI-driven versus traditional search traffic.
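A starting point for segmenting AI-driven visits is the HTTP referrer. The sketch below maps referrer hostnames to platforms; the hostname list is an assumption to extend as platforms change, and because some assistants and apps send no referrer at all, it will undercount AI traffic.

```python
from typing import Optional
from urllib.parse import urlparse

# Assumed referrer hostnames for major AI assistants; extend as platforms change.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
}


def ai_source(referrer: str) -> Optional[str]:
    """Return the AI platform behind a referrer URL, or None for other traffic."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return platform
    return None
```

Storing the result as a session attribute lets you compare conversion rates for AI-referred and search-referred visitors side by side.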

ROI Calculation for AI Optimisation

Direct traffic value from AI citations provides clear ROI. When Perplexity or SGE cites your content with links, track resulting traffic and conversions. This direct attribution demonstrates immediate value.

Brand lift from AI mentions affects broader metrics. Regular AI citations build brand awareness even without direct clicks. Monitor brand search volume increases correlated with AI citation frequency.

Competitive moat building through AI presence creates long-term value. Early AI optimisation creates enduring advantages as content gets embedded in training data. Calculate competitive advantage value beyond immediate traffic.

Cost comparison with traditional SEO reveals efficiency. AI optimisation may require less ongoing investment than traditional link building, though it still depends on sustained content creation. Compare costs per acquisition across channels.

Future value modelling accounts for AI growth. As AI adoption increases, early optimisation compounds. Model future traffic based on AI platform growth projections.

Future-Proofing Your AI SEO Strategy

Generative AI is evolving fast, and today’s best practices may look outdated tomorrow. Future-proofing your strategy means building flexible systems that adapt to new platforms, algorithms, and user behaviours. By focusing on durable fundamentals like authority, clarity, and structured data, you’ll stay ahead as AI reshapes search.

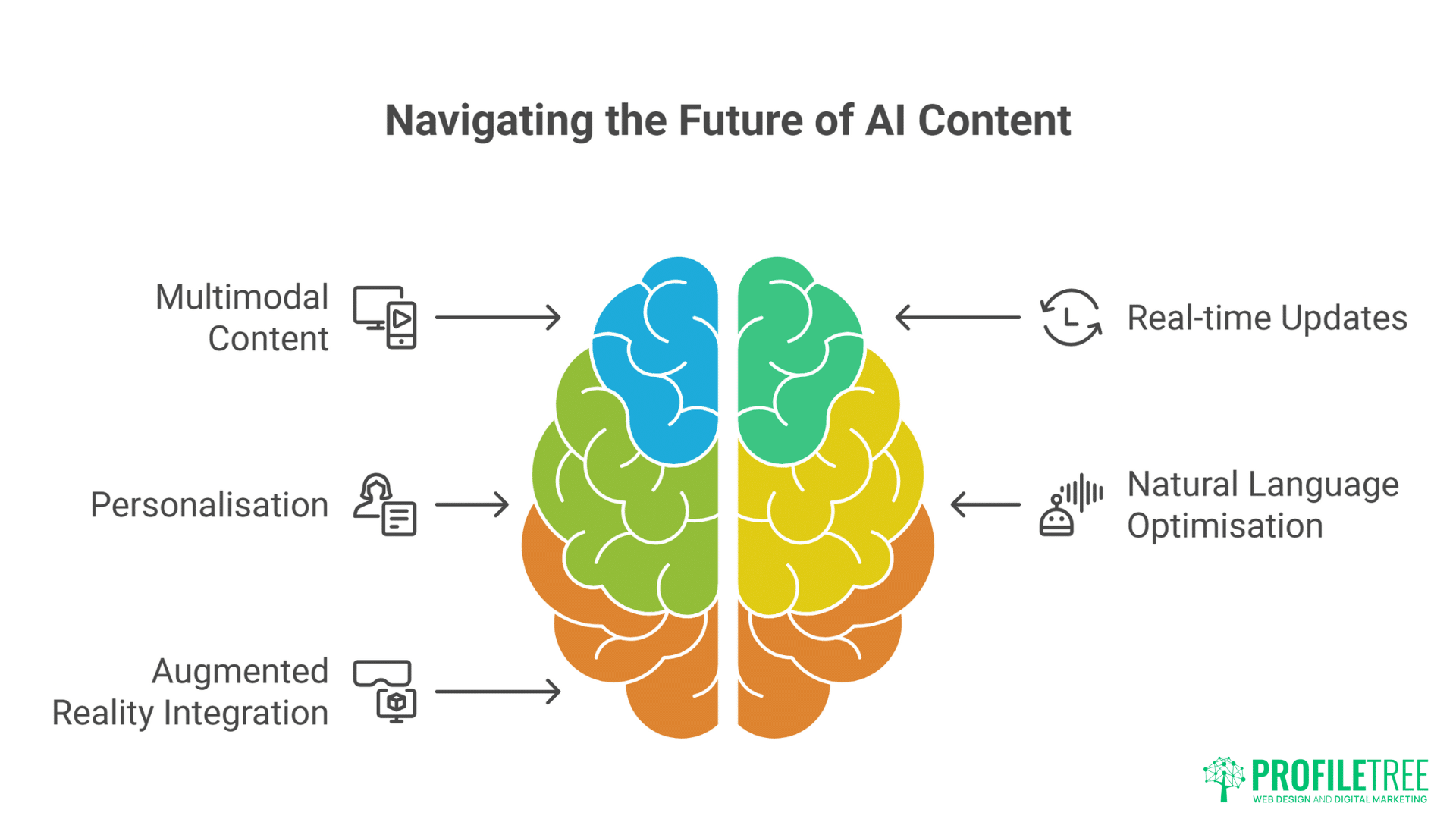

Preparing for Next-Generation AI

Multimodal AI requires diverse content formats. Next-generation AI increasingly blends text, image, video, and audio. Create content in multiple formats to maintain visibility as AI evolves. Video production services become essential for multimodal AI optimisation.

Real-time information systems demand content freshness. As AI systems increasingly access real-time data, content currency becomes critical. Implement systems for rapid content updates responding to events and changes.

Personalisation at scale through AI requires content variants. AI will deliver personalised responses based on user context. Create content variations for different audiences, expertise levels, and contexts.

Voice and conversational interfaces need natural language optimisation. Optimise for spoken queries and conversational flows. Write content that sounds natural when read aloud by AI voices.

Augmented reality integration extends content into physical spaces. AR-enabled AI will overlay digital information on physical environments. Create content that bridges digital and physical experiences.

Building Adaptive Capabilities

Continuous learning systems require content evolution. As AI models are retrained and draw increasingly on live retrieval, static content becomes obsolete. Build processes for regular content updates and improvements.

Feedback loop integration improves content selection. Monitor which content gets cited and why. Use insights to refine content strategy continuously. Create virtuous cycles of improvement.

Cross-platform presence ensures comprehensive visibility. Maintain presence across emerging AI platforms. Don’t rely solely on major players – explore niche AI systems serving specific markets.

Partnership strategies multiply reach. Collaborate with other authoritative sources for mutual citation. Build networks that increase overall AI visibility through strategic content partnerships.

Innovation in content formats maintains competitive edge. Experiment with new content types that AI systems might preferentially select. Pioneer formats optimised specifically for AI consumption.

The Northern Ireland Advantage in AI SEO

Northern Ireland offers a unique edge in the AI SEO space, blending strong digital talent with a growing tech ecosystem. Its universities, startups, and research hubs foster innovation at the intersection of AI and content strategy. Such a combination positions the region as a powerful launchpad for businesses aiming to lead in AI-driven search.

Local Expertise Meets Global AI

Belfast’s tech sector growth creates unique opportunities. As Northern Ireland’s tech ecosystem expands, local expertise becomes valuable for AI training. Document local innovations, case studies, and success stories that provide unique training data.

Cross-border complexity provides rich content opportunities. Northern Ireland’s unique position creates complex scenarios perfect for AI content. Address UK-Ireland regulatory differences, currency considerations, and market dynamics that AI systems need to understand.

Cultural nuances offer differentiation. Northern Ireland’s distinct culture, history, and business environment provide unique perspectives. This differentiation helps content stand out in AI selection processes.

Time zone advantages enable real-time content leadership. Belfast’s position between US and Asian markets enables timely content creation for global events. Be first with analysis and commentary that AI systems reference.

English language expertise without US saturation provides opportunity. High-quality English content from outside the oversaturated US market may stand out more easily. Northern Ireland creators can avoid some of the noise of US content saturation.

Building Local Authority for Global AI

Community building creates citation networks. Foster local business communities that reference each other’s content. These organic citation networks signal authority to AI systems.

Case study development showcases regional expertise. Document successful Northern Ireland businesses and strategies. These case studies become valuable references for AI systems answering regional queries.

Educational content establishes thought leadership. Create educational resources about Northern Ireland market dynamics. Training programmes that get referenced by AI systems build lasting authority.

Government and institutional partnerships provide credibility. Collaborate with Invest NI, universities, and government agencies. These partnerships can signal the kind of authority that AI systems tend to recognise.

Innovation documentation captures emerging trends. Document Northern Ireland’s innovation in sectors like fintech, cybersecurity, and creative industries. Early documentation positions content for future AI reference.

FAQs

What’s the difference between optimising for Google versus optimising for ChatGPT?

Google prioritises pages based on hundreds of ranking signals including backlinks, site authority, and user engagement metrics. ChatGPT selects information based on relevance, accuracy, and information density within its training data or web browsing results. Google optimisation focuses on ranking signals and user experience, while ChatGPT optimisation emphasises comprehensive, accurate information that directly answers queries.

How can I tell if AI systems are using my content?

Monitor AI platforms directly by asking questions about your area of expertise. Check whether Perplexity cites your pages, whether ChatGPT mentions your brand, and whether Google's AI Overviews (formerly SGE) reference your content. Use brand monitoring tools adapted for AI platforms. Review your server logs for AI crawler user agents such as GPTBot, ClaudeBot, and PerplexityBot. Watch for citation patterns in AI responses about your industry.
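As a starting point for the log review, the sketch below tallies requests from known AI crawlers in a web server access log. It assumes the common Combined Log Format, where the user agent is the last double-quoted field on each line, and it assumes the crawler tokens listed are current; vendors publish and occasionally change these names, so check their crawler documentation before relying on the list. The function name `count_ai_crawler_hits` is illustrative, not part of any tool.

```python
import re
from collections import Counter

# Assumption: user-agent tokens published by the respective AI vendors.
# Verify against each vendor's crawler documentation; the list changes over time.
AI_CRAWLER_TOKENS = (
    "GPTBot",
    "OAI-SearchBot",
    "ChatGPT-User",
    "ClaudeBot",
    "PerplexityBot",
    "CCBot",
)

# Combined Log Format: the user agent is the last double-quoted field on the line.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler token in an iterable of access-log lines."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue  # skip lines that do not end with a quoted user agent
        user_agent = match.group(1)
        for token in AI_CRAWLER_TOKENS:
            if token in user_agent:
                counts[token] += 1
                break  # attribute each request to a single crawler
    return counts
```

Passing it an open log file, for example `count_ai_crawler_hits(open("access.log"))`, gives a per-crawler tally you can compare month to month. A rising count shows crawlers are fetching your pages, although it does not on its own prove that AI answers cite them.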

Should I block AI crawlers from accessing my content?

Blocking AI crawlers means missing significant future traffic opportunities. As users shift from traditional search to AI interfaces, content that AI systems cannot access loses a growing share of its organic reach. Instead of blocking, optimise content for proper attribution. Ensure AI systems cite your brand correctly when using your information. The goal is visibility with attribution, not invisibility.
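Many sites block AI crawlers by accident, through a robots.txt rule inherited from a template or plugin. One way to check is Python's standard `urllib.robotparser` module, as in the sketch below. The robots.txt content, the example URLs, and the helper name `ai_crawler_access` are hypothetical, and the crawler tokens are assumptions to verify against each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for illustration: the CCBot rule blocks that crawler
# site-wide, which may be unintended if you want AI visibility.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

User-agent: CCBot
Disallow: /
"""

# Assumption: crawler tokens as documented by their vendors at time of writing.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "CCBot")


def ai_crawler_access(robots_txt, url, crawlers=AI_CRAWLERS):
    """Return {crawler: allowed?} for one URL under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse text directly, no network fetch
    return {name: parser.can_fetch(name, url) for name in crawlers}
```

Running it against a public page such as `https://www.example.com/blog/ai-seo` reports every crawler as allowed except CCBot, which flags the rule for review. The standard-library parser applies simple prefix matching, so confirm edge cases with each vendor's own documentation.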

How does local SEO translate to AI-driven search?

Local signals matter differently for AI systems. Traditional local SEO relies on Google Business Profile (formerly Google My Business), local citations, and geographic keywords. AI systems need explicit geographic context within content: mention Belfast, Northern Ireland, specific neighbourhoods, and local landmarks. Create content that helps AI understand your geographic relevance and service areas naturally within informative text.
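Structured data can reinforce that geographic context in machine-readable form. The sketch below builds a schema.org `LocalBusiness` object as JSON-LD, which would normally be placed inside a `<script type="application/ld+json">` tag on the page. The business details are hypothetical placeholders, and the property set is a minimal assumption rather than a complete markup recommendation.

```python
import json


def local_business_jsonld(name, url, street, locality, region, postcode,
                          country, areas_served):
    """Build a minimal schema.org LocalBusiness object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": locality,
            "addressRegion": region,
            "postalCode": postcode,
            "addressCountry": country,
        },
        # areaServed accepts plain text; listing neighbourhoods makes service areas explicit.
        "areaServed": list(areas_served),
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical example business for illustration only.
print(local_business_jsonld(
    name="Example Seafood Bistro",
    url="https://www.example.com",
    street="1 Example Street",
    locality="Belfast",
    region="Northern Ireland",
    postcode="BT1 1AA",
    country="GB",
    areas_served=["Belfast", "Queen's Quarter"],
))
```

Generating the markup from one function keeps the address and service areas consistent across every page that uses them. The same details should also appear in the visible page text, because the structured data only supplements it.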

Can small businesses compete with large corporations in AI-driven search?

Small businesses have advantages in AI-driven search. Specific expertise, niche knowledge, and authentic experiences often outweigh corporate content in AI selection. AI systems frequently favour accurate, detailed information over brand authority alone. Small businesses can dominate specific topics through comprehensive coverage that large corporations won't match. Focus on depth over breadth.

What’s the ROI timeline for AI SEO investments?

The fastest returns come from platforms with live web access, such as ChatGPT and Perplexity, often within weeks. Inclusion in the training data of future models requires a longer horizon: content published today might influence models trained next year. Because these effects compound, early investment can yield outsized returns as AI adoption grows. As a rough guide, expect meaningful traffic within 3-6 months and transformative impact within 18-24 months.

How technical does my team need to be for AI SEO?

Basic AI SEO requires minimal technical expertise beyond traditional SEO. Focus on content quality, structure, and comprehensiveness. Advanced optimisation, such as API integration, structured data implementation, and technical performance work, benefits from developer involvement. Professional web development services can handle the technical requirements while you focus on content strategy.

Will AI SEO replace traditional SEO entirely?

Traditional SEO remains important but is increasingly insufficient on its own. Google isn't disappearing, but user behaviour is shifting toward AI interfaces for complex queries. Successful strategies address both paradigms: optimise for traditional search while building AI visibility. The transition period rewards businesses that excel at both approaches.

Seize the AI-First Advantage Now

The window for establishing AI-first authority narrows daily. Every piece of content published today could influence AI systems for years. While competitors obsess over traditional rankings that matter less each day, forward-thinking businesses build presence in AI systems that define tomorrow’s information landscape.

The complexity may seem overwhelming, but simple steps yield quick results. Start by creating comprehensive, accurate content that thoroughly addresses user needs. Structure information clearly with semantic HTML and proper markup. Build topical authority through complete coverage rather than keyword targeting. These fundamentals position you for AI selection regardless of how individual platforms evolve.

ProfileTree helps businesses navigate this paradigm shift. Our AI training services teach teams to optimise for both traditional and AI-driven search. Our SEO strategies evolve beyond keywords to build comprehensive AI visibility. We understand that success requires more than technical optimisation – it demands fundamental strategic evolution.

The businesses winning tomorrow are adapting today. They’re creating content for questions not yet asked, building authority in systems still emerging, and establishing presence in training data that will shape AI knowledge for years. The choice is simple: evolve now or become invisible as the world shifts to AI-first information discovery. Your future visibility depends on decisions you make today about AI optimisation.