Google Penguin Update: Link Spam, SpamBrain and Recovery

The Google Penguin update did not just change how Google ranked websites. It changed what SEO actually meant. When it arrived in April 2012, entire link-building strategies became worthless overnight. Some became actively dangerous. The businesses that adapted quickly pulled ahead. The ones that ignored it paid the price in traffic losses that, in some cases, took years to recover from.

This guide covers what Penguin targeted, how it evolved into Google’s current real-time spam systems, and what a clean, effective backlink strategy looks like for UK businesses today. Whether you’re auditing an existing profile or building links for the first time, understanding Penguin’s logic is still the starting point.

What Is the Google Penguin Update?

The Google Penguin update is a Google algorithm change, first released in April 2012, that targeted websites using manipulative link-building practices to inflate their search rankings. Google initially called it the Webspam Update before rebranding it as Penguin.

Before Penguin, the quantity of backlinks pointing to a page had enormous influence over its ranking position. That created a market for link farms, private blog networks, and paid link placements. Penguin was built to devalue those tactics and reward sites that had earned genuine links through quality content.

The update specifically targeted two categories of manipulation: webspam (low-quality sites that existed purely to host links) and unnatural anchor text patterns (keyword-stuffed link text applied across hundreds of backlinks in a way no natural editorial process would produce).

When Was Penguin Launched?

Google released the first Penguin update on 24 April 2012. That initial version affected roughly 3% of all English-language search queries. Subsequent refreshes extended its reach, with Penguin 2.0 in May 2013 broadening the scope to include deeper site pages, not just homepages. The final major iteration, Penguin 4.0, launched in September 2016. That version was significant for two reasons: it became part of Google’s core algorithm, running continuously rather than in periodic batches, and it shifted from penalising sites to devaluing individual links. That distinction matters enormously, and it is one most guides still get wrong.

Devaluation vs Penalisation: The Distinction That Still Causes Confusion

Many businesses that saw traffic drops in 2012 or 2014 assumed they had been “penalised” by Penguin. Some were. But from Penguin 4.0 onwards, the system no longer punishes your site for bad links. It ignores those links instead.

That is a different problem with a different solution. A manual action (a human-applied Google penalty) requires you to clean up your backlinks and file a reconsideration request. An algorithmic devaluation through Penguin simply means those links are no longer counted in your favour. Your ranking drops because you lose the boost those links were providing, not because Google has applied a negative score to your domain.

If you have seen a gradual traffic decline rather than a sudden cliff-edge drop, devaluation is far more likely than a manual action. Our complete guide to backlink analysis walks through how to distinguish between the two using Google Search Console data.

A History of Penguin: From 1.0 to Real-Time Integration

Understanding the update’s history matters because each version shifted the rules in ways that still shape what link-building agencies recommend today.

Penguin 1.0 (April 2012)

The original launch targeted the most obvious manipulation: sites with thousands of exact-match anchor text backlinks from irrelevant pages. If your domain had 800 backlinks all using the phrase “cheap car insurance Belfast” as anchor text, Penguin flagged that as unnatural. The penalty at this stage applied to the whole domain and persisted until the next Penguin refresh, which could be months or years away.
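
Google never published the rules Penguin applied, so the following is only an illustrative sketch of how you might measure anchor text concentration in your own backlink export. The function names, the sample profile, and the 50% review threshold are all assumptions made for this example, not values Google has confirmed.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return each distinct anchor text's share of all backlinks, largest first."""
    # Normalise case and whitespace so near-identical anchors count together.
    normalised = [" ".join(a.lower().split()) for a in anchors]
    counts = Counter(normalised)
    total = len(normalised)
    return [(text, count / total) for text, count in counts.most_common()]


def over_concentrated(anchors, threshold=0.5):
    """Return anchors whose share exceeds the assumed review threshold."""
    return [
        (text, share)
        for text, share in anchor_text_shares(anchors)
        if share > threshold
    ]


# Hypothetical profile: 800 exact-match anchors plus some natural-looking ones.
profile = (
    ["cheap car insurance Belfast"] * 800
    + ["Example Insurance Ltd"] * 120
    + ["https://example.com"] * 60
    + ["click here"] * 20
)

for text, share in over_concentrated(profile):
    print(f"{text!r}: {share:.0%} of backlinks")
```

A profile earned through editorial links usually shows a mix of brand names, bare URLs, and generic phrases, so a single commercial phrase dominating the list is the pattern worth a closer look.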

Penguin 2.0 (May 2013) and 2.1 (October 2013)

These refreshes extended Penguin’s reach deeper into site architecture. Previously, internal pages could accumulate spammy links while the homepage remained relatively clean. Penguin 2.0 closed that gap and also tightened its targeting of guest posting networks that were operating purely as link conduits rather than genuine editorial publications.

Penguin 3.0 (October 2014)

This was primarily a data refresh rather than an algorithm change. Its practical significance was in demonstrating how long recovery could take under the old batch-processing model. Sites that had cleaned up their backlink profiles months earlier had to wait until Penguin 3.0 rolled out before seeing any ranking recovery. That delay caused enormous frustration and, in hindsight, drove many site owners to over-disavow, creating new problems.

Penguin 4.0 (September 2016): The Shift to Real-Time

Penguin 4.0 is the version that matters most for understanding SEO today. Two changes defined it.

First, it became real-time. Rather than processing backlink data in periodic batches, Penguin now runs continuously as part of Google’s core algorithm. When Google recrawls a link, Penguin’s logic applies immediately. Recovery is no longer contingent on waiting for a scheduled update.

Second, it shifted from penalisation to devaluation. Rather than reducing your rankings because of bad links, Penguin now simply discounts those links. They stop counting. For sites that relied on manipulative links for their positions, the effect on rankings was similar, but the mechanism and the recovery path were fundamentally different.

“The change to real-time processing was the most important shift in Penguin’s history,” says Ciaran Connolly, founder of ProfileTree. “It meant that cleaning up your backlinks actually had a measurable effect fairly quickly, rather than waiting six to twelve months for the next refresh to kick in. That changed how we approach recovery work for clients.”

How Penguin Evolved Into SpamBrain

This is the section most guides skip, and it is the most practically useful thing to understand about how Google handles link quality in 2026.

Penguin 4.0 did not just become part of the core algorithm. Over the years that followed, Google progressively handed more of its spam-detection work to SpamBrain, its AI-based spam prevention system. SpamBrain does not replace Penguin’s logic; it extends it. Where Penguin targeted specific patterns (keyword-stuffed anchor text, link farm participation), SpamBrain learns to identify spam signals that are harder to codify as explicit rules.

What SpamBrain Does That Penguin Could Not

Penguin worked on identifiable patterns. If a link came from a private blog network, Penguin’s pattern-matching identified that based on features like thin content, over-optimised anchor text, and cross-site linking footprints. SpamBrain can identify link spam even when those surface signals are absent. It can look at a site’s broader linking behaviour, including the types of sites it links to and receives links from, and assess the probability that a link is part of a paid network, even when that network is well-disguised.

Google confirmed SpamBrain’s expanded role in a 2022 Search Central Blog post, stating that it had used the system to neutralise link-based spam across billions of pages. The practical implication is that tactics which may have survived earlier Penguin versions are increasingly unlikely to survive SpamBrain’s analysis.

What This Means for UK Businesses Today

For SMEs in Northern Ireland, Ireland, and across the UK, the SpamBrain era means that short-term link schemes have become genuinely high-risk rather than merely inadvisable. Legacy UK business directories that were once standard practice for local SEO are now assessed for quality signals in ways they were not in 2015. Directory submissions are not inherently problematic, but submitting to directories that are themselves part of link networks, or that host content with no genuine editorial value, carries real risk.

Our SEO services for Northern Ireland businesses include backlink profile audits that account for both Penguin-era signals and SpamBrain’s expanded pattern detection.

Penguin vs Panda: Understanding the Core Difference

These two updates are regularly confused, and the confusion leads to misdiagnosed problems. If you are trying to work out why your rankings dropped, understanding which system is involved changes what you should do about it.

| Factor | Google Panda | Google Penguin |
|---|---|---|
| Targets | On-page content quality | Off-page backlink quality |
| Main signals | Thin content, duplicate content, low engagement | Paid links, spammy anchor text, link farms |
| Applies to | Pages and sites | Individual links (from 4.0) |
| Recovery path | Improve content quality | Clean or disavow problematic links |
| Current status | Part of core algorithm | Part of core algorithm via SpamBrain |

The clearest way to separate them: Panda cares about what is on your pages; Penguin cares about who is linking to those pages and why.

A site hit by Panda will typically see drops correlated with content-heavy pages or site-wide quality assessments. A site affected by Penguin or SpamBrain will tend to see drops that correlate with changes in its backlink profile, such as a surge of low-quality links or the devaluation of links that were previously providing ranking lift.

Our guide to the Google Panda update covers the content side of this equation in full, and it is worth reading alongside this one if you are troubleshooting a traffic drop of unclear origin.

How to Tell If You Have Been Affected

Most sites that experienced genuine Penguin penalties did so before 2016. Since Penguin 4.0 moved to real-time devaluation, the effect on most sites is more gradual and harder to pin to a single cause. Here is how to assess your situation.

Signs of Link Devaluation

A gradual decline in rankings for pages that were previously stable, with no clear correlation to content changes, technical issues, or a Google core update, may indicate that links previously supporting those rankings have been devalued. This is different from a penalty. There is no notification in Google Search Console. Your rankings simply drift down as the supporting link equity disappears.
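
As a rough way to tell a gradual drift from a cliff-edge drop, you could compare the largest single week-on-week fall with the total decline over the period. The sketch below assumes a list of weekly click totals exported from Search Console. The function name and the `cliff_ratio` cut-off are assumptions for illustration, and the label it returns is a prompt for investigation, not a diagnosis.

```python
def classify_decline(weekly_clicks, cliff_ratio=0.5):
    """Label a traffic series as 'stable', 'gradual decline' or 'sudden drop'.

    cliff_ratio is an assumed cut-off: if one week-on-week fall accounts for
    more than this fraction of the total decline, call it a sudden drop.
    """
    if len(weekly_clicks) < 2:
        return "stable"
    total_decline = weekly_clicks[0] - weekly_clicks[-1]
    if total_decline <= 0:
        return "stable"
    # Largest fall between any two consecutive weeks.
    largest_fall = max(
        earlier - later
        for earlier, later in zip(weekly_clicks, weekly_clicks[1:])
    )
    if largest_fall / total_decline > cliff_ratio:
        return "sudden drop"
    return "gradual decline"


# Hypothetical weekly click totals for two different sites.
drifting = [1000, 960, 930, 890, 860, 820, 790, 750]
cliff = [1000, 1010, 990, 1000, 520, 510, 500, 505]

print(classify_decline(drifting))
print(classify_decline(cliff))
```

A "sudden drop" result is a reason to check the Manual Actions report and the dates of core updates and technical changes. It does not confirm a manual action on its own.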

Signs of a Manual Action

If Google has applied a manual action to your site for unnatural links, you will see a notification in the Manual Actions section of Google Search Console. The notification will specify whether the action applies to specific pages or the whole site. This is now relatively rare for most legitimate businesses, but it does still happen, particularly for sites that have participated in aggressive link-building schemes.

How to Investigate

Start with Google Search Console. Check the Manual Actions report first. If that is clear, move to the Links report and look at your top linking domains. Cross-reference those against your ranking history in whatever position-tracking tool you use.

Semrush and Ahrefs both provide toxicity or spam scores for linking domains. These are useful as a first filter, though they should not be treated as definitive. A high toxicity score from a third-party tool does not automatically mean Google has devalued a link. Use them to prioritise which links to examine more closely, not as grounds for mass disavowal.
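
One way to use those scores as a filter rather than a verdict is to turn them into a manual review queue. The sketch below assumes a tool export reduced to a domain, a 0 to 100 score, and a backlink count. The `LinkingDomain` fields, the `min_score` cut-off, and the sample data are hypothetical and do not reflect any particular tool's export format.

```python
from dataclasses import dataclass


@dataclass
class LinkingDomain:
    domain: str
    tool_score: int  # third-party toxicity/spam score, assumed to run 0-100
    backlinks: int   # number of links pointing at your site from this domain


def review_queue(domains, min_score=60):
    """Return domains worth a manual look: highest score first, then most links."""
    flagged = [d for d in domains if d.tool_score >= min_score]
    return sorted(flagged, key=lambda d: (-d.tool_score, -d.backlinks))


# Hypothetical export rows.
linking_domains = [
    LinkingDomain("cheap-links.example", 92, 340),
    LinkingDomain("regional-news.example", 8, 3),
    LinkingDomain("old-directory.example", 71, 12),
    LinkingDomain("blog-network.example", 71, 45),
]

for entry in review_queue(linking_domains):
    print(f"{entry.domain}: score {entry.tool_score}, {entry.backlinks} links")
```

The output is a list of domains to open and inspect by hand. Nothing in it should be passed straight to a disavow file.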

Our Semrush vs Ahrefs comparison covers the practical differences between these tools for backlink auditing in detail.

UK and Ireland Context: Link Quality Issues Specific to This Market

US-focused SEO guides rarely address the link-quality landscape for UK and Irish businesses. There are some patterns worth knowing.

Legacy UK Business Directories

Throughout the 2000s and early 2010s, submitting to UK business directories was standard local SEO practice. Many of those directories are still active, still indexable, and still part of some businesses’ backlink profiles. The problem is that a significant number of them have deteriorated in quality, hosting thin content, accepting paid inclusion without editorial review, and participating in cross-linking schemes.

A directory link is not automatically harmful. Yell, Thomson Local, and well-maintained sector-specific directories still provide genuine value. The risk comes from directories that aggregate links without any editorial judgment. If you are unsure whether a directory in your profile falls into that category, look at the quality of other sites listed on it and whether it is indexed meaningfully by Google.

Guest Posting Networks

The UK market has a number of active guest posting networks that sell placement on nominally independent blogs. These operated below Penguin’s radar for years but are increasingly within SpamBrain’s detection capacity. The signal is usually the pattern: if the same broker is placing links across dozens of sites with suspiciously similar layouts, content, and linking structures, that footprint is detectable.

Genuine guest posting on editorially independent, topically relevant publications remains one of the most effective link-building strategies available. The key distinction is editorial control. If the publication accepts any submission from any domain in exchange for payment with no content quality assessment, it is a guest post farm by another name.

For businesses in Northern Ireland and across the Republic of Ireland, there are genuine regional publications in business, technology, and professional services that accept high-quality contributed content. Building relationships with those publications produces links that are editorially earned and topically relevant. Our digital marketing services for Northern Ireland businesses include editorial outreach as part of structured link-building programmes.

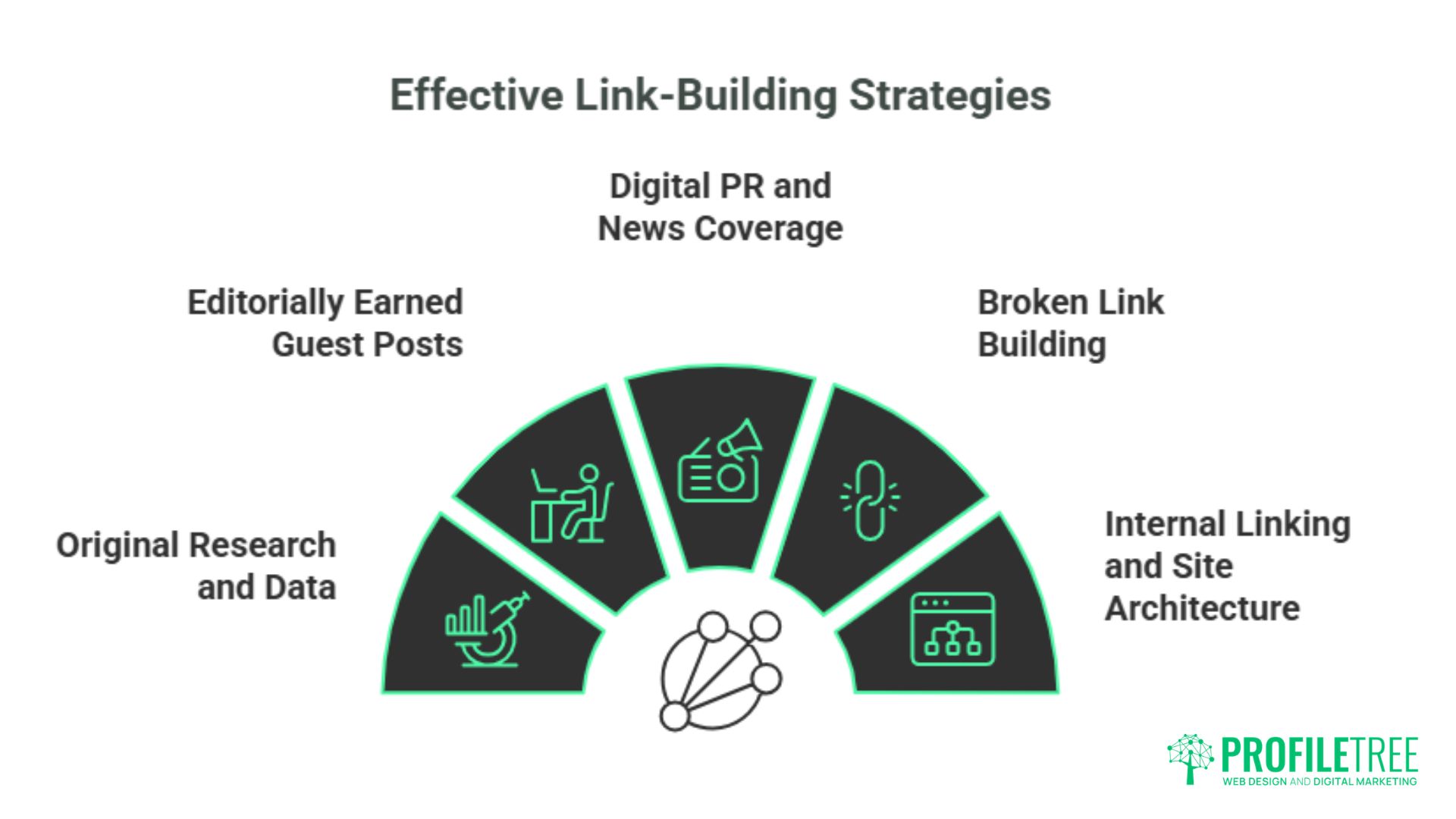

Five Link-Building Strategies That Work Under Penguin and SpamBrain

The rules Penguin established in 2012 have become more strictly enforced, not less. These are the approaches that hold up.

Original Research and Data

Content that contains proprietary data, original surveys, or unique analysis attracts links from journalists, bloggers, and industry publications that are editorially valuable by definition. A piece of research specific to your sector or geographic market is far more likely to earn genuine editorial links than a guide that synthesises information available elsewhere. Our content marketing services include data-led content strategies built specifically to generate editorial coverage.

Editorially Earned Guest Posts

As covered above, the distinction between a genuine guest post and a paid placement on a link farm is editorial control. Target publications with real readerships, real editors, and genuine topical relevance to your business. Accept that a piece may be rejected or revised. That friction is what makes the link editorially earned.

Digital PR and News Coverage

A press release sent to a wire service produces low-value links. A story that a journalist chooses to cover produces a high-value editorial link. Digital PR involves identifying the angles in your business that are genuinely newsworthy and pitching them to relevant publications in a way that serves the journalist’s audience, not just your backlink profile.

Broken Link Building

This involves identifying pages on authoritative sites in your niche that link to content that no longer exists (404 errors) and reaching out to offer a relevant replacement page from your own site. It is methodical, time-consuming, and produces exactly the kind of contextually relevant link that Penguin and SpamBrain reward.
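
The first step, finding outbound links that return a 404, can be sketched with Python's standard library. The names `LinkCollector`, `http_status`, and `broken_links` are made up for this example, and the sample HTML and status codes are hypothetical. Some servers reject `HEAD` requests, so a real check may need to fall back to `GET`.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collect absolute http(s) link targets from <a href> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            absolute = urljoin(self.base_url, href)
            if absolute.startswith(("http://", "https://")):
                self.links.append(absolute)


def http_status(url, timeout=10):
    """Return the HTTP status for url, or None if the request fails outright."""
    request = Request(url, method="HEAD", headers={"User-Agent": "link-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code  # 4xx and 5xx responses arrive as HTTPError
    except OSError:
        return None  # DNS failure, refused connection, timeout


def broken_links(page_html, base_url, get_status=http_status):
    """Return links on the page whose target responds with 404."""
    collector = LinkCollector(base_url)
    collector.feed(page_html)
    return [url for url in collector.links if get_status(url) == 404]


# Offline demonstration with a stubbed status lookup instead of live requests.
sample_html = (
    '<p><a href="/old-guide">Old guide</a> '
    '<a href="https://example.org/live">Live page</a></p>'
)
fake_statuses = {
    "https://example.com/old-guide": 404,
    "https://example.org/live": 200,
}
print(broken_links(sample_html, "https://example.com/resources", fake_statuses.get))
```

Passing the status lookup in as a parameter keeps the link extraction testable without network access. Calling `broken_links` without the third argument makes real requests.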

Internal Linking and Site Architecture

Not all link equity comes from external sources. A well-structured internal linking strategy distributes authority from your strongest pages to the ones you most want to rank. Pages covering how Google’s YMYL guidelines affect content quality, or how Google Analytics bounce rate relates to content engagement, should connect to each other when they are genuinely related. That internal network compounds the value of every external link your site earns.

Conclusion

The Google Penguin update is now over a decade old, and its core logic has never been more firmly embedded in Google’s operations. SpamBrain extended and automated what Penguin started, and the direction of travel is clear: links earned through genuine editorial endorsement will continue to be rewarded, and links manufactured through schemes will continue to be devalued or ignored.

For businesses across Northern Ireland, Ireland, and the UK, the practical implication is straightforward. A smaller number of genuinely earned links from relevant, authoritative publications will consistently outperform a larger profile built through directories, networks, or paid placement.

If you want to understand where your backlink profile stands today, get in touch with the ProfileTree team to discuss a structured SEO audit.

FAQs

Does Google Penguin still exist?

Yes, though not as a standalone update. Penguin’s logic is now part of Google’s core algorithm, running in real time as part of the same infrastructure that processes every crawl. SpamBrain handles much of the workload that earlier Penguin versions managed through pattern-matching rules.

How long does it take to recover from a Penguin impact?

Since Penguin 4.0, recovery is tied to Google’s recrawling schedule rather than a periodic refresh. Once Google recrawls the links affecting your site, the algorithmic devaluation adjusts. For sites with large numbers of problematic links, this can take weeks or months, depending on crawl frequency.

Should I disavow links if my traffic drops?

Not automatically. First, determine whether the drop is correlated with a core update, a technical change, a content quality issue, or a change in the backlink profile. If you have a manual action, disavowal is part of the process. If the drop is algorithmic, assess your backlinks carefully before disavowing anything.
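
If a careful review does lead to disavowal, the file Google's Disavow Tool accepts is plain text with one entry per line: a full URL, a `domain:` entry for a whole domain, or a comment line starting with `#`. The helper below is a minimal sketch for assembling that text from a reviewed list. The function name and the sample entries are hypothetical.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Build disavow file text: an optional comment, domain entries, then URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # De-duplicate and sort so the file stays stable between revisions.
    lines.extend(
        f"domain:{name}" for name in sorted({d.strip().lower() for d in domains})
    )
    lines.extend(sorted({u.strip() for u in urls}))
    return "\n".join(lines) + "\n"


# Hypothetical entries that survived a manual review.
text = build_disavow_file(
    domains=["Spammy-Directory.example", "link-network.example"],
    urls=["https://blog.example/paid-post.html"],
    note="Reviewed manually; removal requests sent first",
)
print(text)
```

Keeping the reason for each batch in a comment line makes it easier to revisit the decision later, which matters because over-disavowing can remove links that were helping.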

Can a competitor use negative SEO against me with Penguin?

This is less of a risk than it was before Penguin 4.0. Google’s current guidance is that the algorithm can identify and ignore most spam links, regardless of who built them. For targeted, high-volume negative SEO attacks, you can still use the Disavow Tool as a precaution, but proactively disavowing every low-quality link out of fear of negative SEO is unnecessary and counterproductive.

Is link quantity still important for SEO?

Relevance and authority matter more than raw numbers. One editorial link from a high-authority publication in your industry will typically outweigh hundreds of links from low-quality directories. That said, a large number of high-quality links from diverse, topically relevant sources remains the strongest possible signal of authority. The issue with chasing quantity is that it tends to push site owners toward lower-quality sources.