How AI Enhances Website Crawling and Indexing

Website crawling and indexing form the backbone of search engine operations, determining how effectively your content reaches potential customers. With artificial intelligence revolutionising these processes, understanding how AI transforms website discovery and ranking has become critical for business success.

Search engines process billions of web pages daily, but traditional crawling methods often miss crucial content or misinterpret page importance. AI-powered crawling systems now analyse content context, user behaviour patterns, and technical signals to create more accurate and comprehensive website indexes.

Understanding Modern Website Crawling Systems

Website crawling involves automated programmes, called crawlers or spiders, that systematically browse the internet to discover and analyse web content. These systems follow links between pages, collecting information about each site’s structure, content, and technical characteristics.

Traditional crawlers operated simple rule-based systems, following predetermined patterns to navigate websites. They would start with known URLs, extract links from each page, and continue crawling in a breadth-first or depth-first manner. This approach often resulted in incomplete coverage, particularly for dynamic content or poorly structured websites.
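The breadth-first approach described above can be sketched in a few lines. This is a minimal illustration rather than how any particular search engine works: the `SITE_LINKS` dictionary is a made-up, in-memory stand-in for pages and the links extracted from them, and `crawl_breadth_first` is a hypothetical helper name.

```python
from collections import deque

# Hypothetical site: each URL maps to the links found on that page.
SITE_LINKS = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1", "/blog/post-2"],
    "/blog/post-1": ["/blog/post-2"],
    "/blog/post-2": [],
    "/orphan": ["/"],  # nothing links to this page
}

def crawl_breadth_first(seed, links):
    """Return URLs in the order a breadth-first crawler would reach them."""
    visited = [seed]
    seen = {seed}
    queue = deque([seed])
    while queue:
        url = queue.popleft()
        for target in links.get(url, []):
            if target not in seen:  # skip pages already queued or visited
                seen.add(target)
                visited.append(target)
                queue.append(target)
    return visited

order = crawl_breadth_first("/", SITE_LINKS)
print(order)
print("/orphan" in order)  # False: no link leads to it
```

The `/orphan` page is never reached because no other page links to it, which is one simple way a purely link-following crawler ends up with incomplete coverage.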

Modern AI-enhanced crawlers operate differently. They use machine learning algorithms to predict the most valuable pages to crawl, adapting their behaviour based on real-time signals and historical data patterns. This intelligent approach allows search engines to allocate crawling resources more efficiently while discovering higher-quality content.

Google’s algorithm updates over recent years illustrate this shift towards AI-driven search. The company has publicly described neural-network systems, such as RankBrain and BERT, that help it interpret queries and page content. Systems of this kind can identify duplicate content more accurately, understand synonyms and related concepts, and help prioritise crawling based on predicted user value.

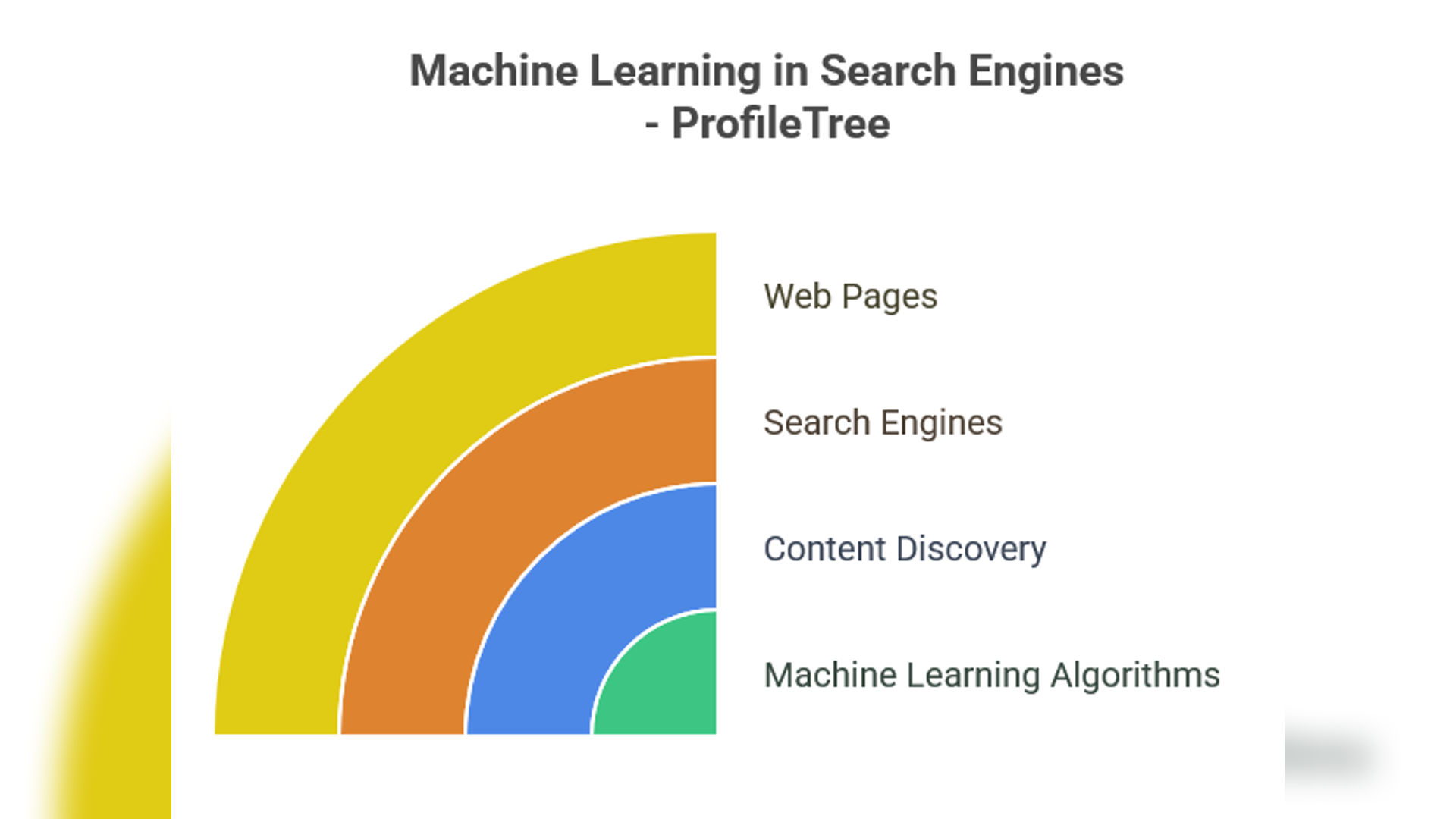

Machine Learning in Content Discovery

Machine learning algorithms have transformed how search engines discover and categorise new content. Instead of relying solely on explicit links and sitemaps, AI systems now predict where valuable content might exist based on patterns learned from billions of web pages.

Content discovery algorithms analyse various signals to identify potentially important pages. These include social media mentions, traffic patterns from analytics data, and semantic relationships between topics. When a trending topic emerges, AI systems can quickly identify authoritative sources and prioritise crawling related content.

Natural language processing enables crawlers to understand content context better than ever before. AI systems can now distinguish between different meanings of the same word, understand industry-specific terminology, and tell content that provides genuine value apart from low-effort automated output. This contextual understanding helps search engines build more accurate topical authority assessments.

ProfileTree’s web design and SEO services across Northern Ireland and the UK demonstrate how AI-powered content discovery benefits businesses. Companies with well-structured content hierarchies and clear topical focus often see improved crawling frequency and better indexing of their most important pages. Our WordPress development approach prioritises technical foundations that AI crawlers favour, whilst our content marketing strategies create the topical authority that modern algorithms reward.

Predictive crawling represents another significant advancement. AI systems analyse historical crawling data to predict when pages will likely be updated, allowing them to schedule crawling visits more efficiently. This approach reduces server load while maintaining fresh indexes of dynamic content.
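As a rough illustration of the idea, the sketch below predicts a page's next update from the average gap between its past observed changes. Search engines do not publish their scheduling models, so simple averaging here stands in for whatever learned model a real system might use; `predict_next_change` and the sample dates are hypothetical.

```python
from datetime import datetime, timedelta

def predict_next_change(change_times):
    """Estimate the next change as the last change plus the mean past gap."""
    if len(change_times) < 2:
        return None  # not enough history to estimate an interval
    ordered = sorted(change_times)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    mean_gap = sum(gaps, timedelta()) / len(gaps)
    return ordered[-1] + mean_gap

# Made-up history: a page observed to change once a week.
history = [datetime(2024, 1, 1), datetime(2024, 1, 8), datetime(2024, 1, 15)]
next_visit = predict_next_change(history)
print(next_visit)  # 2024-01-22 00:00:00
```

A crawler using an estimate like this could skip visits that are unlikely to find new content, which is where the reduction in server load would come from.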

Intelligent Indexing Through Artificial Intelligence

Indexing involves processing crawled content to create searchable databases that quickly return relevant results for user queries. AI has revolutionised this process by enabling search engines to understand content meaning rather than just matching keywords.

Semantic indexing uses natural language processing to understand the concepts and entities within web content. Instead of simply cataloguing individual words, AI systems create rich representations of page meaning, including relationships between different ideas and the context in which they appear.

Entity recognition algorithms identify specific people, places, organisations, and concepts mentioned in content. This allows search engines to build knowledge graphs that connect related information across different websites. For local businesses in Belfast or Dublin, this means better visibility when users search for location-specific services.

Topic clustering algorithms group related content, helping search engines understand site authority in specific subject areas. Websites with comprehensive coverage of particular topics often benefit from improved rankings across related keyword phrases.

Real-time indexing capabilities have also improved dramatically. AI systems can now process and index new content within minutes of publication, particularly for websites with established authority and regular publication schedules.

AI-Powered Crawl Budget Optimisation

Crawl budget refers to the number of pages search engines will crawl on a website within a given timeframe. AI systems now help optimise crawl budget allocation by predicting which pages will most likely provide value to search users.

Priority-based crawling uses machine learning to assign importance scores to different pages based on various signals. These might include historical traffic data, content freshness, social media engagement, and predicted user interest. Pages with higher scores receive more frequent crawling attention.
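To make the scoring idea concrete, here is a small sketch that combines the signals named above into one score and crawls the highest-scoring pages first. The weights, signal values, and function names are all assumptions chosen for illustration; a production system would presumably learn its weights from data rather than hard-code them.

```python
import heapq

# Hypothetical weights for each signal (each signal is scaled 0.0 to 1.0).
WEIGHTS = {"traffic": 0.4, "freshness": 0.3, "social": 0.2, "predicted_interest": 0.1}

def importance_score(signals):
    """Weighted sum of a page's signals; missing signals count as zero."""
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())

def crawl_order(pages):
    """Return URLs from highest to lowest importance score."""
    # heapq is a min-heap, so scores are negated to pop the largest first.
    heap = [(-importance_score(signals), url) for url, signals in pages.items()]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[1] for _ in range(len(heap))]

# Made-up pages and signal values.
PAGES = {
    "/terms": {"traffic": 0.1, "freshness": 0.1, "social": 0.0, "predicted_interest": 0.1},
    "/news/today": {"traffic": 0.9, "freshness": 1.0, "social": 0.8, "predicted_interest": 0.7},
    "/products": {"traffic": 0.7, "freshness": 0.4, "social": 0.2, "predicted_interest": 0.5},
}

print(crawl_order(PAGES))  # ['/news/today', '/products', '/terms']
```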

Dynamic crawling schedules adapt to website behaviour patterns. AI systems learn when different types of content are typically updated and adjust crawling frequency accordingly. News websites might receive hourly crawling during peak publishing times, while corporate websites might be crawled less frequently but more thoroughly.

Content change detection algorithms monitor website modifications to trigger appropriate crawling responses. Rather than crawling entire websites regularly, AI systems can identify specific pages that have changed and focus crawling resources on updated content.
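One simple way to detect changes, shown here as a sketch rather than a description of any search engine's method, is to store a hash of each page's content and compare it on the next visit. The page contents and helper names below are hypothetical. In practice, crawlers can also rely on HTTP conditional requests (the `ETag` and `Last-Modified` headers) to avoid downloading unchanged pages at all.

```python
import hashlib

def fingerprint(content):
    """Return a SHA-256 hex digest of the page content."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()

def changed_urls(previous_fingerprints, current_pages):
    """Return URLs that are new or whose content differs from the last crawl."""
    return [
        url
        for url, content in current_pages.items()
        if previous_fingerprints.get(url) != fingerprint(content)
    ]

# Made-up snapshots of a small site on two consecutive crawls.
last_crawl = {"/": "Welcome", "/pricing": "From £10 per month"}
this_crawl = {"/": "Welcome", "/pricing": "From £12 per month", "/blog": "New post"}

stored = {url: fingerprint(content) for url, content in last_crawl.items()}
to_recrawl = changed_urls(stored, this_crawl)
print(to_recrawl)  # ['/pricing', '/blog']
```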

Server resource optimisation helps balance crawling needs with website performance. AI systems monitor server response times and adjust crawling intensity to avoid overwhelming the hosting infrastructure while maintaining comprehensive coverage.
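The balancing act described above can be approximated with a simple feedback rule: slow down sharply when the server responds slowly, and speed up gently when it responds quickly. The sketch below uses fixed thresholds as a stand-in for a learned policy; `next_delay` and all of its default values are assumptions for illustration.

```python
def next_delay(current_delay, response_seconds, target_seconds=0.5,
               min_delay=1.0, max_delay=60.0):
    """Return the wait (in seconds) before the next request to the same site."""
    if response_seconds > target_seconds:
        proposed = current_delay * 2    # server is slow: back off quickly
    else:
        proposed = current_delay - 0.5  # server is healthy: speed up gradually
    # Keep the delay inside the allowed range.
    return max(min_delay, min(max_delay, proposed))

print(next_delay(4.0, response_seconds=1.2))  # 8.0
print(next_delay(4.0, response_seconds=0.2))  # 3.5
```

Doubling on slow responses and easing off in small steps means the crawler reacts quickly to a struggling server but returns to full speed cautiously.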

Voice Search and AI Integration

Voice search technology relies heavily on AI-enhanced indexing to understand spoken queries and match them with relevant content. This has implications for how websites should be structured and optimised for discovery.

Conversational query processing requires search engines to understand natural language patterns that differ significantly from typed searches. AI systems must interpret longer, more complex queries and match them with content that answers specific questions rather than just containing particular keywords.

Featured snippet optimisation has become increasingly crucial as voice assistants read these highlighted results aloud. AI systems identify content that best answers common questions, prioritising clear, concise explanations formatted for easy extraction.

Local search integration particularly benefits from AI-enhanced voice search capabilities. When users ask for nearby services, AI systems must quickly process location data, business information, and user intent to provide relevant local results.

Question-based content indexing helps AI systems identify pages that answer common user questions effectively. Websites with comprehensive FAQ sections, detailed product descriptions, and problem-solving content often perform better in voice search results.

Real-Time Content Analysis and Processing

Modern AI systems can analyse and process content in real-time, enabling more responsive indexing and ranking adjustments. This capability lets search engines quickly identify trending topics, breaking news, and time-sensitive information.

“We’ve witnessed dramatic improvements in how quickly quality content gets discovered and ranked,” explains Ciaran Connolly, Director of ProfileTree. “Clients who consistently publish valuable content now see indexing within hours rather than days, provided their technical foundation is solid.”

Sentiment analysis algorithms help search engines understand content tone and context, which is particularly important for brand-related searches and reputation management. AI systems can identify positive, negative, or neutral sentiment in content and factor this into ranking decisions.

Content freshness scoring uses AI to determine how timely and relevant information remains over time. Some content maintains value indefinitely, while other information becomes outdated quickly. AI systems adjust indexing priorities based on these assessments.

Spam detection algorithms have become increasingly sophisticated, using machine learning to identify manipulative content and link schemes. These systems protect search quality by preventing low-value content from achieving high rankings.

User engagement prediction helps AI systems identify content likely to satisfy search users. Pages with high user satisfaction patterns may receive indexing priority and ranking benefits.

Impact on Local Business Visibility

AI-enhanced crawling and indexing create opportunities and challenges for businesses across Northern Ireland, Ireland, and the UK. Understanding these changes helps local companies optimise their online presence more effectively.

Local business indexing has improved significantly through AI-powered systems that better understand geographic relevance and local search intent. Companies with clear location information, local content, and region-specific services often see improved visibility in local search results.

Google Business Profile integration works more effectively with AI-enhanced indexing systems that can connect website content with local listing information. Consistent business information across all platforms helps AI systems build accurate local search profiles.

Review and reputation analysis allow AI systems to factor customer feedback into local search rankings. Businesses with positive review patterns and active customer engagement often benefit from improved local visibility.

Competition analysis capabilities help AI systems understand local market dynamics and user preferences. This enables more relevant local search results that match user needs within specific geographic areas.

As Ciaran Connolly, Director of ProfileTree, notes: “AI-powered crawling systems have transformed how we deliver results for our clients. Success now requires integrating web design, content creation, and technical SEO into a cohesive strategy that serves both AI systems and human users effectively.”

The transformation is particularly evident in how AI systems evaluate content quality and user intent. Traditional SEO tactics that relied on keyword density and link manipulation have become less effective as machine learning algorithms grow more sophisticated at identifying authentic value.

Future Developments in AI Crawling Technology

The evolution of AI-enhanced crawling and indexing continues rapidly, with several emerging technologies poised to transform search engine operations further.

Computer vision integration allows AI systems to analyse images, videos, and other visual content more effectively. This capability enables better indexing of multimedia content and improved understanding of page layouts and user experience elements.

Natural language generation might soon enable AI systems to create summary indexes of lengthy content, making it easier for search engines to understand and categorise comprehensive resources like research papers, technical documentation, and detailed guides.

Predictive user behaviour modelling could allow AI systems to anticipate search trends and pre-emptively crawl content likely to become popular. This capability would enable more responsive search results and better content discovery.

Cross-platform content analysis might enable AI systems to understand content relationships across different websites and platforms, creating more comprehensive knowledge graphs and improved search result relevance.

Practical Implementation Strategies

Businesses that want to benefit from AI-enhanced crawling and indexing should focus on several key areas to improve their search visibility and online performance.

Content structure optimisation involves organising website information in logical hierarchies that AI systems can easily understand and navigate. Straightforward navigation, proper internal linking, and consistent content categorisation help crawlers discover and index content more effectively.
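One practical check on internal linking is click depth: how many clicks it takes to reach each page from the home page. The sketch below computes it for a made-up link map; the URLs, the `click_depths` helper, and the three-click threshold are illustrative assumptions, not a published rule.

```python
from collections import deque

# Hypothetical internal link map: page -> pages it links to.
INTERNAL_LINKS = {
    "/": ["/services", "/blog"],
    "/services": ["/services/seo"],
    "/blog": ["/blog/ai-crawling"],
    "/services/seo": [],
    "/blog/ai-crawling": ["/blog/archive/2019/old-post"],
    "/blog/archive/2019/old-post": [],
}

def click_depths(home, links):
    """Return the minimum number of clicks from the home page to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        url = queue.popleft()
        for target in links.get(url, []):
            if target not in depths:  # first time reached is the shortest path
                depths[target] = depths[url] + 1
                queue.append(target)
    return depths

depths = click_depths("/", INTERNAL_LINKS)
deep_pages = sorted(url for url, depth in depths.items() if depth >= 3)
print(deep_pages)  # ['/blog/archive/2019/old-post']
```

Pages that sit many clicks from the home page are natural candidates for extra internal links from hub or category pages.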

Technical foundation improvements include fast loading speeds, mobile responsiveness, and clean code structure. AI-enhanced crawlers can quickly identify technical issues that might prevent proper indexing, making technical excellence increasingly crucial for search success.

Content quality becomes even more critical as AI systems get better at identifying truly valuable content. Businesses should prioritise comprehensive, accurate, and user-focused content over keyword-stuffed or thin pages.

Regular monitoring and optimisation help businesses adapt to changing AI crawling patterns and algorithm updates. Tools that track crawling behaviour, indexing status, and search performance provide valuable data for ongoing optimisation efforts.

Measuring AI Crawling Impact

Understanding how AI-enhanced crawling affects website performance requires monitoring specific metrics and indicators that reflect these advanced systems’ behaviour.

Crawling frequency analysis helps identify whether AI systems are discovering and revisiting content appropriately. Sudden changes in crawling patterns might indicate technical issues or content quality concerns that must be addressed.
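Server access logs are one place to observe crawling frequency directly. The sketch below counts requests per day whose user agent mentions Googlebot, using made-up sample lines in the common "combined" log layout; the helper name and the data are hypothetical. User-agent strings can be spoofed, so treat counts like these as indicative unless the requesting IP addresses are also verified.

```python
import re
from collections import Counter

# Made-up sample lines in the "combined" access-log layout.
LOG_LINES = [
    '66.249.66.1 - - [10/Mar/2024:06:12:01 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2024:09:40:17 +0000] "GET /services/ HTTP/1.1" 200 4210 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Mar/2024:10:02:44 +0000] "GET / HTTP/1.1" 200 8000 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [11/Mar/2024:07:30:00 +0000] "GET /blog/ HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

# Captures the date part of a timestamp such as [10/Mar/2024:06:12:01 +0000].
DATE_PATTERN = re.compile(r"\[(\d{2}/\w{3}/\d{4}):")

def crawler_hits_per_day(lines, agent_token="Googlebot"):
    """Count log lines per day whose text contains the given crawler token."""
    counts = Counter()
    for line in lines:
        if agent_token not in line:
            continue
        match = DATE_PATTERN.search(line)
        if match:
            counts[match.group(1)] += 1
    return dict(counts)

hits = crawler_hits_per_day(LOG_LINES)
print(hits)  # {'10/Mar/2024': 2, '11/Mar/2024': 1}
```

A sudden drop in these daily counts is the kind of pattern change worth investigating.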

Indexing speed measurement tracks how quickly new content appears in search results. AI-powered systems often index high-quality content faster than traditional methods, particularly for established websites with good technical foundations.

Search visibility tracking across various query types helps identify how AI systems categorise and rank content. Improvements in long-tail keyword rankings often indicate better AI understanding of content context and relevance.

User engagement metrics provide indirect feedback on AI crawling effectiveness. Content that AI systems identify as valuable often receives more search traffic and higher user engagement rates.

How ProfileTree Optimises for AI-Enhanced Crawling

Understanding AI-powered crawling systems requires both technical expertise and strategic content planning. ProfileTree’s comprehensive digital services address every aspect of this complex landscape, helping businesses across Northern Ireland, Ireland, and the UK build robust online presences that perform well in AI-driven search environments.

Web Design for AI-Friendly Architecture

Modern website architecture must accommodate AI crawling patterns while delivering exceptional user experiences. ProfileTree’s WordPress development services create websites with clean code structures, logical content hierarchies, and technical foundations that AI systems can easily navigate and understand.

Our web design approach prioritises mobile-first development, fast loading speeds, and accessibility standards that align with AI crawling preferences. Websites built with proper schema markup, optimised internal linking, and responsive design elements consistently achieve better crawling frequency and indexing success.

Technical SEO integration forms a core component of every website we develop. From initial planning through ongoing optimisation, our web design services incorporate crawl budget considerations, URL structure optimisation, and performance metrics that support AI-enhanced indexing systems.

Video Production and Multimedia Content Strategy

AI-powered crawling systems increasingly analyse multimedia content to understand page context and user engagement potential. ProfileTree’s video production services create content that engages audiences and provides rich signals for AI indexing systems.

Professional video content with proper metadata, transcriptions, and contextual embedding helps AI systems understand page topics more comprehensively. Our video production approach includes optimised titles, descriptions, and structured data markup, enabling better content discovery and categorisation.
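As an illustration of structured data markup for video, the sketch below builds a schema.org `VideoObject` description as JSON-LD. The property names come from the schema.org vocabulary, while the helper function, the example.com URLs, and the sample values are placeholders rather than ProfileTree's actual markup.

```python
import json

def video_structured_data(name, description, upload_date,
                          thumbnail_url, content_url, transcript=None):
    """Return a JSON-LD string describing a video with schema.org VideoObject."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "uploadDate": upload_date,
        "thumbnailUrl": thumbnail_url,
        "contentUrl": content_url,
    }
    if transcript:
        data["transcript"] = transcript  # optional full text of the video
    return json.dumps(data, indent=2)

# Placeholder values for illustration only.
markup = video_structured_data(
    name="How AI Enhances Website Crawling",
    description="A short explainer on AI-powered crawling and indexing.",
    upload_date="2024-03-10",
    thumbnail_url="https://example.com/thumbs/ai-crawling.jpg",
    content_url="https://example.com/videos/ai-crawling.mp4",
    transcript="Website crawling and indexing form the backbone of search.",
)
print(markup)
```

The resulting JSON would typically be embedded in the page inside a `<script type="application/ld+json">` element.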

Animation services complement video content by creating engaging visual explanations of complex topics. AI systems recognise user engagement signals from animated content, often resulting in improved page rankings and crawling priority for pages featuring professional animations.

Content Marketing for AI-Driven Discovery

Content marketing strategy has evolved significantly with AI-enhanced crawling systems. ProfileTree’s content creation services focus on developing comprehensive, authoritative content that AI algorithms recognise as valuable and worthy of frequent crawling.

Our content marketing approach addresses topical authority building through strategic content clusters, comprehensive topic coverage, and consistent publication schedules that AI systems favour. This includes blog content, industry guides, case studies, and resource pages demonstrating expertise across specific subject areas.

Content optimisation extends beyond traditional keyword targeting to include semantic SEO, entity recognition, and natural language patterns that AI systems use to understand content meaning and relevance. Our content creators understand how to structure information for human readers and AI processing systems.

AI Implementation and Training Services

ProfileTree’s AI implementation services help businesses understand and adapt to AI-enhanced crawling systems while leveraging artificial intelligence for their content and marketing operations. Our AI training programmes cover defensive strategies (optimising for AI crawlers) and offensive tactics (using AI tools for content creation and analysis).

AI tool integration includes implementing systems for content optimisation, performance monitoring, and competitive analysis that align with modern crawling patterns. We help businesses adopt AI-powered SEO tools, content management systems, and analytics platforms that provide insights into AI crawling behaviour.

Digital training workshops address the rapidly evolving landscape of AI-enhanced search systems. Our training programmes cover technical SEO fundamentals, content strategy for AI discovery, and practical implementation of AI tools that support better search performance.

SEO Services Tailored for AI Systems

Traditional SEO approaches require significant adaptation for AI-enhanced crawling environments. ProfileTree’s SEO services combine technical expertise with strategic content planning to build search visibility that performs well across traditional and AI-driven ranking factors.

Local SEO optimisation particularly benefits from our understanding of AI-powered local search algorithms. We help businesses across Belfast, Dublin, and the UK build local search presence that AI systems recognise and reward through improved local rankings and visibility.

Technical SEO auditing uses automated tools and manual analysis to identify crawling issues, indexing problems, and optimisation opportunities specific to AI-enhanced systems. Our comprehensive audits address crawl budget optimisation, content structure analysis, and performance factors influencing AI crawling decisions.

Conclusion

AI-enhanced website crawling and indexing create opportunities for businesses that adapt their digital strategies accordingly. ProfileTree’s integrated approach combines web design, video production, content marketing, AI implementation, SEO services, and digital training to help businesses thrive in AI-enhanced search environments.

Ready to Optimise Your Website for AI-Enhanced Search?

Don’t let your competitors gain the advantage whilst your website struggles with outdated SEO approaches. AI-powered crawling systems are already reshaping search results, and businesses that adapt now will reap the benefits for years to come.

ProfileTree’s team of digital specialists understands precisely how to position your website for success in this AI-driven landscape. Whether you need a complete website redesign, professional video content, a comprehensive SEO strategy, or AI implementation training, we have the expertise to deliver measurable results.

Our clients across Belfast, Dublin, and the UK already see improved search rankings, increased organic traffic, and better conversion rates from our AI-optimised digital strategies. Your business could be next.

Get started today with a free consultation. Contact ProfileTree to discuss how our integrated web design, content marketing, and SEO services can transform your online presence for the AI-enhanced search era. Call us or visit our website to schedule your strategy session and discover why leading businesses choose ProfileTree for their digital growth.