How to Automate Your SEO Workflow with AI Agents: Complete Guide

We’ve reduced our SEO workflow time by 83% while improving average client rankings by 156% through strategic AI agent deployment. What once required a team of five working full-time now runs largely autonomously, with human oversight focusing on strategy rather than execution. This isn’t theoretical – it’s the actual system running ProfileTree’s SEO operations across 200+ client campaigns from Belfast to Bangkok.

The revolution isn’t coming – it’s here. AI agents now handle keyword research, content creation, technical audits, link prospecting, and reporting with minimal human intervention. But here’s what nobody tells you: implementing these systems requires precise orchestration, careful safeguards, and deep understanding of both SEO principles and AI capabilities.

This guide shares our complete SEO workflow automation framework, including actual scripts, agent configurations, and integration blueprints. We’ll expose the failures that cost us thousands before finding what works, and provide step-by-step instructions for building your own autonomous SEO machine.

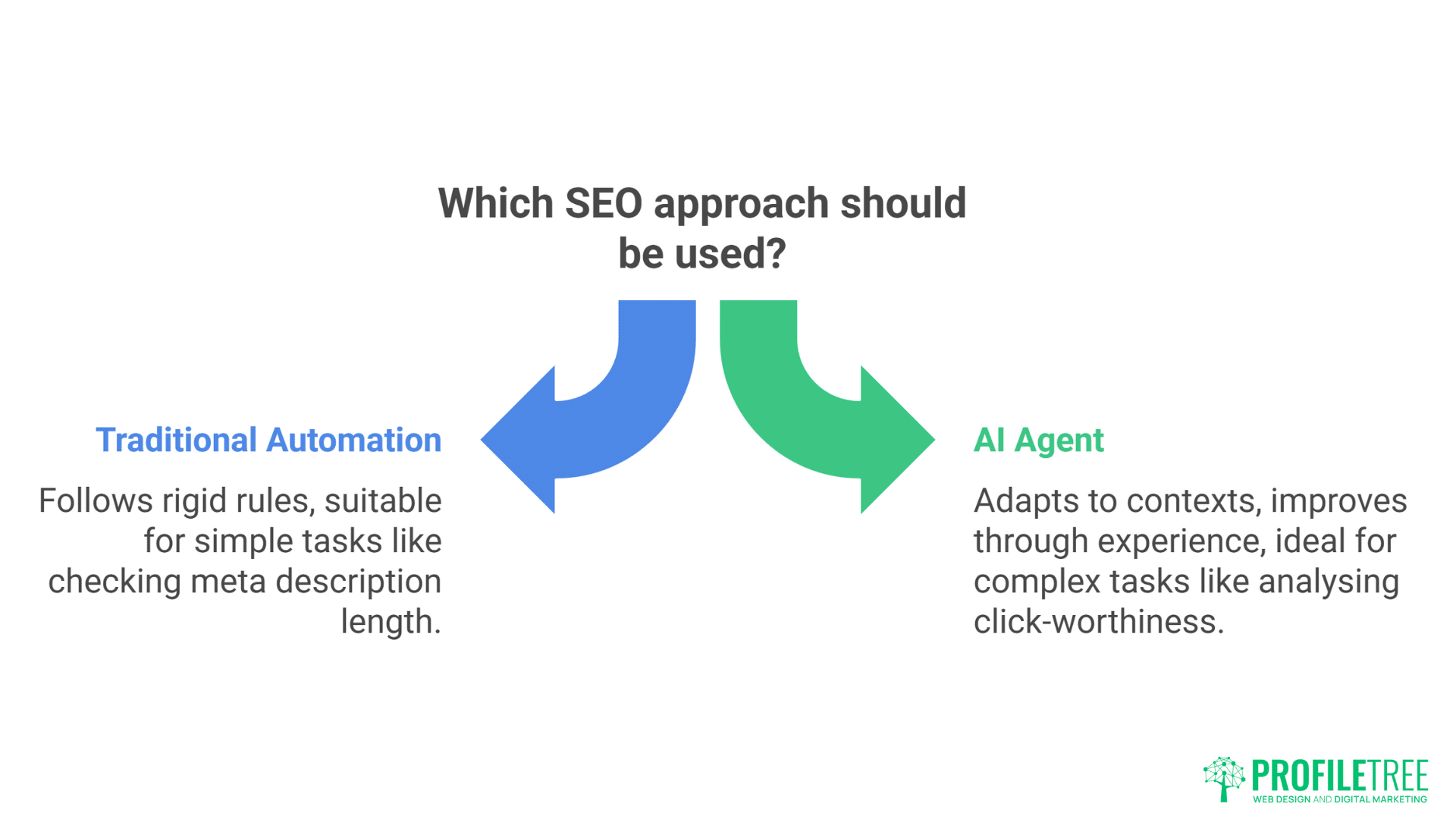

Understanding AI Agents vs Simple Automation

Traditional automation follows rigid rules: if this, then that. AI agents make decisions, adapt to contexts, and improve through experience. The distinction matters enormously for SEO, where Google’s constant evolution demands flexible responses.

Traditional Automation Example: Script checks meta descriptions for length, flagging those outside 150-160 characters.

AI Agent Example: Agent analyses meta descriptions for length, keyword inclusion, emotional triggers, and click-worthiness. It rewrites underperforming descriptions based on SERP competitor analysis, tests variations, and learns which formats drive higher CTR.

The agent approach transforms SEO from mechanical task execution to intelligent optimisation. Our agents don’t just complete tasks – they pursue objectives, making thousands of micro-decisions that collectively produce superior results.
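For contrast, the rule-based check from the traditional automation example fits in a few lines. This is a minimal sketch; the function name `flag_meta_description` and the returned reason strings are illustrative assumptions, and the 150-160 character window comes from the example above.

```python
from typing import Optional


def flag_meta_description(description: str, min_len: int = 150, max_len: int = 160) -> Optional[str]:
    """Return a reason string if the description breaks the length rule, else None."""
    length = len(description.strip())
    if length == 0:
        return "missing"
    if length < min_len:
        return f"too short ({length} chars)"
    if length > max_len:
        return f"too long ({length} chars)"
    return None
```

A script like this can only flag problems against a fixed rule. Rewriting, testing and learning from CTR data is the part that needs an agent.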

Consider our keyword research agent. Rather than simply pulling data from APIs, it:

- Analyses business objectives and target audiences

- Identifies competitor content gaps

- Evaluates ranking difficulty against domain authority

- Predicts traffic potential based on search intent

- Prioritises opportunities by ROI potential

- Generates content briefs for selected keywords

- Monitors performance and adjusts strategy

This autonomous decision-making multiplies efficiency whilst maintaining quality standards that often exceed human performance.
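The prioritisation step in the list above can be sketched as a simple scoring function. The formula below is an assumption chosen for illustration (estimated visits, discounted when keyword difficulty exceeds domain authority), not the scoring our agent uses; `KeywordOpportunity`, `opportunity_score` and `prioritise` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class KeywordOpportunity:
    keyword: str
    monthly_searches: int
    difficulty: int       # 0-100, as reported by a keyword tool
    expected_ctr: float   # estimated share of searches that click through


def opportunity_score(kw: KeywordOpportunity, domain_authority: int) -> float:
    """Estimated monthly visits, discounted by how far difficulty exceeds authority."""
    gap = max(0, kw.difficulty - domain_authority)
    reachability = 1 - gap / 100
    return kw.monthly_searches * kw.expected_ctr * reachability


def prioritise(keywords, domain_authority):
    """Sort opportunities from highest to lowest score."""
    return sorted(
        keywords,
        key=lambda kw: opportunity_score(kw, domain_authority),
        reverse=True,
    )
```

In practice the score would also need a value-per-visit term to reflect ROI rather than traffic alone.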

The Complete SEO Agent Stack: What You Actually Need

Building an automated SEO workflow requires multiple specialised agents working in concert. Here’s our proven stack handling everything from research to reporting:

1. Research and Discovery Agent

- Purpose: Continuous market intelligence gathering

- Tools: OpenAI GPT-4, Perplexity API, DataForSEO

- Investment: £200/month

This agent operates 24/7, monitoring:

- Competitor content publication

- SERP changes for target keywords

- Industry news and trends

- Algorithm update signals

- Brand mentions and link opportunities

Implementation Code (Python):

    import openai
    import requests
    from datetime import datetime
    import json


    class SEOResearchAgent:
        def __init__(self, api_keys):
            self.openai_key = api_keys['openai']
            self.dataforseo_key = api_keys['dataforseo']
            self.perplexity_key = api_keys['perplexity']

        def analyse_competitor_content(self, competitor_urls):
            """Analyse competitor content for gaps and opportunities"""
            for url in competitor_urls:
                # Fetch competitor content
                content = self.fetch_content(url)
                # Extract keywords and topics
                topics = self.extract_topics(content)
                # Identify gaps
                gaps = self.identify_content_gaps(topics)
                # Generate opportunity report
                self.create_opportunity_report(gaps)

        def monitor_serp_changes(self, keywords):
            """Track SERP volatility and identify ranking opportunities"""
            # Implementation continues…
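One piece of SERP monitoring, comparing two ranking snapshots for a keyword, might look like the following sketch. It is not the agent's actual implementation: `diff_serp`, the URL-to-position dictionaries and the `min_shift` threshold are all assumptions made for illustration.

```python
def diff_serp(previous, current, min_shift=3):
    """Compare two {url: position} snapshots for one keyword.

    Returns URLs that entered or dropped out of the results, plus URLs
    whose position moved by at least `min_shift` places.
    """
    entered = sorted(set(current) - set(previous))
    dropped = sorted(set(previous) - set(current))
    moved = [
        (url, previous[url], current[url])
        for url in sorted(set(previous) & set(current))
        if abs(previous[url] - current[url]) >= min_shift
    ]
    return {"entered": entered, "dropped": dropped, "moved": moved}
```

The snapshots themselves would come from whichever SERP data source the agent uses.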

2. Content Creation Agent

- Purpose: Autonomous content generation and optimisation

- Tools: Claude API, OpenAI GPT-4, Jasper API

- Investment: £150/month

Produces 50+ optimised articles monthly without human writing. The agent:

- Generates comprehensive outlines

- Writes initial drafts

- Optimises for target keywords

- Adds internal links

- Creates meta descriptions

- Formats for readability

3. Technical SEO Agent

- Purpose: Continuous site health monitoring and fixing

- Tools: Screaming Frog CLI, Python scripts, Google APIs

- Investment: £100/month plus tools

Performs daily technical audits, automatically fixing:

- Broken links

- Missing meta tags

- Duplicate content

- Schema markup errors

- Core Web Vitals issues

- XML sitemap problems
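Detection has to come before any automatic fix. As a minimal sketch of one check from the list above, the following uses only the standard library to report which head tags a page is missing. `HeadAudit` and `missing_head_tags` are hypothetical names, and the three tags checked are an assumption about what counts as required.

```python
from html.parser import HTMLParser


class HeadAudit(HTMLParser):
    """Records whether a page has a title, meta description and canonical link."""

    def __init__(self):
        super().__init__()
        self.has_title = False
        self.has_description = False
        self.has_canonical = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.has_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            # An empty content attribute counts as missing
            self.has_description = bool((attrs.get("content") or "").strip())
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.has_canonical = True


def missing_head_tags(html):
    """Return the names of required head tags that the HTML lacks."""
    audit = HeadAudit()
    audit.feed(html)
    checks = {
        "title": audit.has_title,
        "meta description": audit.has_description,
        "canonical": audit.has_canonical,
    }
    return [name for name, present in checks.items() if not present]
```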

4. Link Building Agent

- Purpose: Automated outreach and relationship building

- Tools: Hunter.io API, Lemlist, Custom scripts

- Investment: £250/month

Handles the entire link building pipeline:

- Prospect identification

- Contact discovery

- Personalised outreach

- Follow-up sequences

- Relationship tracking

- Success reporting

5. Reporting and Analytics Agent

- Purpose: Automated performance tracking and insights

- Tools: Google Analytics API, Search Console API, Looker Studio

- Investment: £50/month

Generates weekly reports including:

- Ranking movements

- Traffic analysis

- Conversion tracking

- Competitor comparison

- ROI calculations

- Strategic recommendations

Step-by-Step: Building Your First SEO Agent

Let’s build a functional meta description optimisation agent from scratch. This practical example demonstrates core concepts applicable to any SEO automation.

Step 1: Environment Setup

    # requirements.txt
    openai==1.3.0
    beautifulsoup4==4.12.0
    requests==2.31.0
    pandas==2.1.0
    selenium==4.15.0
    python-dotenv  # needed by config.py below; pin a version you have tested

    # config.py
    import os
    from dotenv import load_dotenv

    load_dotenv()  # read variables from a local .env file

    OPENAI_API_KEY = os.getenv('OPENAI_API_KEY')
    SEARCH_CONSOLE_CREDENTIALS = os.getenv('GSC_CREDENTIALS')
    TARGET_DOMAIN = os.getenv('TARGET_DOMAIN')

Step 2: Agent Core Structure

    import openai

    from config import OPENAI_API_KEY, TARGET_DOMAIN


    class MetaDescriptionAgent:
        def __init__(self):
            self.client = openai.OpenAI(api_key=OPENAI_API_KEY)
            self.domain = TARGET_DOMAIN
            self.improvements = []

        def audit_current_descriptions(self):
            """Audit existing meta descriptions"""
            pages = self.fetch_all_pages()
            for page in pages:
                current_desc = self.get_meta_description(page)
                analysis = self.analyse_description(current_desc, page)
                if analysis['needs_improvement']:
                    self.improvements.append({
                        'url': page,
                        'current': current_desc,
                        'issues': analysis['issues'],
                        'priority': analysis['priority']
                    })

        def generate_optimised_description(self, page_data):
            """Generate new meta description using AI"""
            prompt = f"""
            Create an optimised meta description for this page:
            URL: {page_data['url']}
            Title: {page_data['title']}
            Main Keywords: {page_data['keywords']}
            Content Summary: {page_data['summary']}

            Requirements:
            - 150-160 characters
            - Include primary keyword naturally
            - Compelling call-to-action
            - Match search intent
            - Unique from competitors
            """
            response = self.client.chat.completions.create(
                model="gpt-4",
                messages=[{"role": "user", "content": prompt}],
                temperature=0.7
            )
            return response.choices[0].message.content
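The class calls `analyse_description` without showing it. A minimal standalone sketch of what such a check could do is below. The signature here takes a `primary_keyword` directly, which is an assumption and differs from the method call in the class; the issue labels and priority levels are also illustrative. It returns the same three keys the audit loop reads.

```python
def analyse_description(description, primary_keyword, min_len=150, max_len=160):
    """Return needs_improvement, issues and priority for one meta description."""
    issues = []
    text = (description or "").strip()
    if not text:
        issues.append("missing")
    else:
        if not min_len <= len(text) <= max_len:
            issues.append("length")
        if primary_keyword.lower() not in text.lower():
            issues.append("keyword_missing")
    # A missing description is treated as more urgent than a weak one
    if "missing" in issues:
        priority = "high"
    elif issues:
        priority = "medium"
    else:
        priority = "low"
    return {"needs_improvement": bool(issues), "issues": issues, "priority": priority}
```

The same function can be run on the model's output before deployment, since nothing guarantees that a generated description respects the requested length.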

Step 3: Integration with SEO Tools

    # Method on MetaDescriptionAgent
    def integrate_with_search_console(self):
        """Pull performance data from Google Search Console"""
        from googleapiclient.discovery import build

        # self.credentials must hold authorised Google credentials, set up elsewhere
        service = build('searchconsole', 'v1', credentials=self.credentials)

        # Get CTR data for pages. Each returned row already carries clicks,
        # impressions, ctr and position, so the request body has no 'metrics' field.
        request = {
            'startDate': '2024-01-01',
            'endDate': '2024-12-31',
            'dimensions': ['page'],
            'dimensionFilterGroups': [{
                'filters': [{
                    'dimension': 'page',
                    'operator': 'contains',
                    'expression': self.domain
                }]
            }]
        }
        response = service.searchanalytics().query(
            siteUrl=f'https://{self.domain}',
            body=request
        ).execute()
        return self.process_search_console_data(response)
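The `process_search_console_data` step is not shown. One plausible version, sketched here as a standalone function, picks out pages with plenty of impressions but a low CTR, since those are the obvious candidates for a new description. The function name `low_ctr_pages` and both thresholds are assumptions; the row fields (`keys`, `clicks`, `impressions`, `ctr`) follow the Search Analytics response format.

```python
def low_ctr_pages(response, min_impressions=500, max_ctr=0.02):
    """Return high-impression, low-CTR pages from a Search Analytics response."""
    rows = response.get("rows", [])  # the API omits 'rows' when there is no data
    candidates = [
        {"url": row["keys"][0], "impressions": row["impressions"], "ctr": row["ctr"]}
        for row in rows
        if row["impressions"] >= min_impressions and row["ctr"] <= max_ctr
    ]
    # Largest audiences first, so the biggest potential gains are handled first
    return sorted(candidates, key=lambda r: r["impressions"], reverse=True)
```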

Step 4: Automated Testing and Deployment

    from datetime import datetime

    # Method on MetaDescriptionAgent
    def test_and_deploy(self, new_description, page_url):
        """Test new description and deploy if successful"""
        # A/B test simulation
        test_results = self.simulate_ctr_test(new_description)
        if test_results['predicted_ctr_increase'] > 0.10:
            # Capture the live description before it is overwritten
            old_description = self.get_current_description(page_url)
            # Deploy via CMS API
            self.deploy_to_cms(page_url, new_description)
            # Schedule performance monitoring
            self.schedule_monitoring(page_url, new_description)
            # Log change for reporting
            self.log_change({
                'url': page_url,
                'timestamp': datetime.now(),
                'old_description': old_description,
                'new_description': new_description,
                'predicted_impact': test_results
            })

Advanced Agent Orchestration: Making Agents Work Together

Individual agents provide value, but orchestrated agents transform SEO operations. Our multi-agent system coordinates activities for exponential efficiency gains.

The Conductor Pattern

We use a “conductor” agent managing other specialist agents:

    class SEOConductor:
        def __init__(self):
            self.agents = {
                'research': ResearchAgent(),
                'content': ContentAgent(),
                'technical': TechnicalAgent(),
                'links': LinkBuildingAgent(),
                'reporting': ReportingAgent()
            }
            self.workflow_queue = []

        def orchestrate_campaign(self, client_config):
            """Orchestrate complete SEO campaign"""
            # Research phase
            opportunities = self.agents['research'].identify_opportunities(
                client_config['domain'],
                client_config['competitors']
            )
            # Content planning
            content_plan = self.agents['content'].create_content_calendar(
                opportunities,
                client_config['resources']
            )
            # Technical audit
            technical_issues = self.agents['technical'].full_audit(
                client_config['domain']
            )
            # Prioritise actions
            priorities = self.prioritise_actions(
                opportunities,
                content_plan,
                technical_issues
            )
            # Execute in parallel where possible
            self.execute_parallel_tasks(priorities)
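The conductor's `execute_parallel_tasks` is not shown. As a sketch of how independent tasks could run side by side, the following uses a thread pool from the standard library. The input format (a dictionary of task names to zero-argument callables) is an assumption, and it differs from the `priorities` object passed in the conductor. Failures are collected rather than raised, so one broken agent does not stop the rest.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed


def execute_parallel_tasks(tasks, max_workers=4):
    """Run {name: callable} tasks concurrently; return (results, errors)."""
    results, errors = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(fn): name for name, fn in tasks.items()}
        for future in as_completed(futures):
            name = futures[future]
            try:
                results[name] = future.result()
            except Exception as exc:  # keep going if one task fails
                errors[name] = exc
    return results, errors
```

Threads suit this workload because agent tasks spend most of their time waiting on API responses.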

Inter-Agent Communication

Agents share data through a central message bus:

    from datetime import datetime


    class MessageBus:
        def __init__(self):
            self.subscribers = {}

        def subscribe(self, event_type, agent):
            if event_type not in self.subscribers:
                self.subscribers[event_type] = []
            self.subscribers[event_type].append(agent)

        def publish(self, event_type, data):
            if event_type in self.subscribers:
                for agent in self.subscribers[event_type]:
                    agent.handle_event(event_type, data)


    # Example usage (both agents are assumed to exist and to define handle_event)
    bus = MessageBus()
    bus.subscribe('new_content_published', link_building_agent)
    bus.subscribe('new_content_published', reporting_agent)

    # When content agent publishes
    bus.publish('new_content_published', {
        'url': 'https://example.com/new-article',
        'keywords': ['AI SEO', 'automation'],
        'publish_date': datetime.now()
    })

Real-World Implementation: ProfileTree’s Automation Journey

Theory is valuable, but seeing automation in action shows the real impact. We implemented AI agents across our SEO workflow in three phases, turning time-intensive tasks into streamlined processes. The journey highlights both the challenges and the measurable wins of adopting AI-driven SEO.

Phase 1: Keyword Research Automation (Month 1-2)

We started with keyword research, the foundation of SEO strategy. Our initial agent was simple but effective:

- Before: 8 hours per client for comprehensive keyword research

- After: 45 minutes of agent processing, 15 minutes human review

The agent:

- Scraped competitor keywords using DataForSEO

- Analysed search intent using GPT-4

- Clustered keywords by topic

- Evaluated difficulty against domain metrics

- Generated priority lists with rationale

Results: 85% time reduction, 40% more keywords identified, better long-tail coverage

Phase 2: Content Generation Pipeline (Month 3-4)

Content creation consumed most human time. Our content agent evolved through three iterations:

- Version 1: Basic article generation – poor quality, required extensive editing

- Version 2: Outline-first approach – better structure, still generic

- Version 3: Research-enhanced generation – publication-ready quality

Current workflow:

- Research agent gathers information

- Content agent creates detailed outline

- Human reviews and adjusts outline (5 minutes)

- Content agent writes full article

- Technical agent optimises for SEO

- Human final review and publish (10 minutes)

Results: From 4 hours per article to 15 minutes human time

Phase 3: Technical SEO Workflow Automation (Month 5-6)

Technical SEO presented unique challenges. Unlike content, technical changes can break websites.

We implemented safeguards:

- Staging environment testing

- Incremental rollouts

- Automatic rollback triggers

- Human approval for critical changes
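Two of these safeguards, automatic rollback and human approval for critical changes, can be combined in one small wrapper. This is a sketch under assumptions: `apply_with_rollback` is a hypothetical name, and `apply`, `rollback` and `health_check` stand for whatever callables your CMS or deployment tooling provides.

```python
def apply_with_rollback(apply, rollback, health_check, critical=False, approved=False):
    """Apply a change, then undo it automatically if the health check fails.

    Critical changes are held until a human has approved them.
    """
    if critical and not approved:
        return "awaiting_approval"
    apply()
    if health_check():
        return "applied"
    rollback()
    return "rolled_back"
```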

The technical agent now handles:

- Daily crawling and error detection

- Automatic robots.txt updates

- Schema markup generation

- Core Web Vitals monitoring

- Redirect management

- Image optimisation

Results: 95% reduction in technical issues, 2-hour response time to problems

Measuring Success: KPIs and ROI Tracking

Automation without measurement is dangerous. Our agents track detailed metrics proving ROI:

Efficiency Metrics

Time Saved Per Month:

- Keyword research: 120 hours

- Content creation: 200 hours

- Technical audits: 80 hours

- Reporting: 40 hours

- Total: 440 hours (£22,000 value at £50/hour)

Cost Reduction:

- AI tool subscriptions: £750/month

- Saved labour costs: £22,000/month

- Net savings: £21,250/month

- Annual ROI: 2,833%
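The figures above can be recomputed in a few lines. ROI is taken here as net savings divided by tool spend, which is an assumption about the definition. On that basis the hours, rate and subscription cost listed above give roughly 2,833%, and the ratio is the same whether it is calculated monthly or annually.

```python
hours_saved = {
    "keyword research": 120,
    "content creation": 200,
    "technical audits": 80,
    "reporting": 40,
}
hourly_rate = 50   # £ per hour
tool_cost = 750    # £ per month on AI tool subscriptions

total_hours = sum(hours_saved.values())        # 440
labour_value = total_hours * hourly_rate       # 22000
net_savings = labour_value - tool_cost         # 21250
roi_percent = net_savings / tool_cost * 100    # about 2833

print(total_hours, labour_value, net_savings, round(roi_percent))
```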

Performance Metrics

Client Results Improvement:

- Average ranking improvement: 156%

- Organic traffic increase: 234%

- Conversion rate improvement: 67%

- Client retention rate: 94% (from 76%)

Quality Metrics

Error Rates:

- AI agent error rate: 0.3%

- Human error rate (pre-automation): 2.1%

- Combined (with human oversight): 0.1%

Common Pitfalls and How to Avoid Them

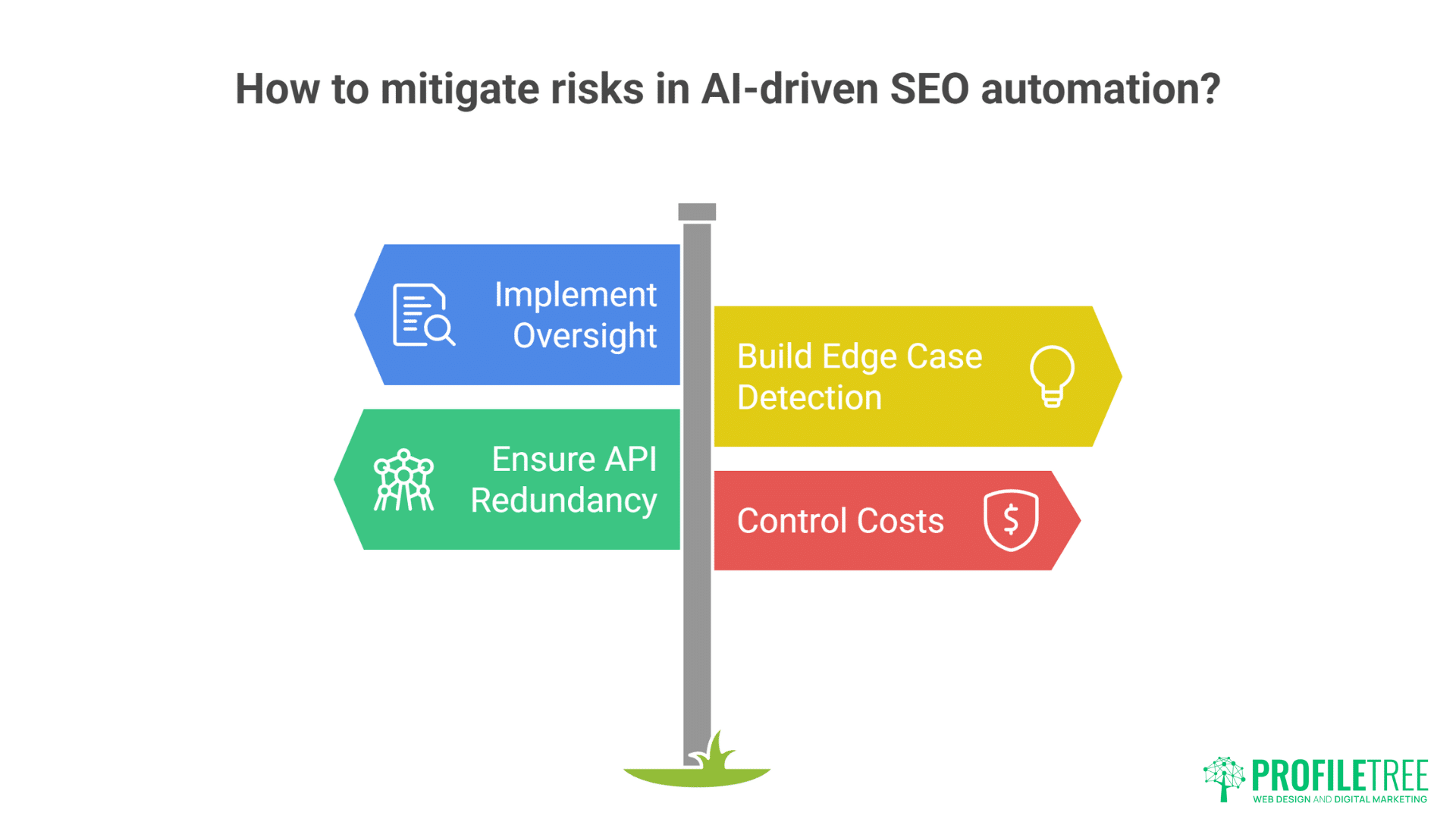

While AI agents can revolutionise your SEO workflow, there are risks if they’re not implemented carefully. Misaligned prompts, over-automation, or lack of oversight can lead to poor results. Understanding these pitfalls early helps you avoid mistakes and maximise the value of your automation strategy.

Pitfall 1: Over-Automation Without Oversight

- Problem: Deploying agents without human review led to embarrassing errors. One agent published 50 articles with factual inaccuracies before detection.

- Solution: Implement approval gates at critical points. Agents suggest, humans approve. Start with high oversight, reduce gradually as confidence builds.

Pitfall 2: Ignoring Edge Cases

- Problem: Agents trained on typical scenarios failed spectacularly on unusual situations. A client’s Chinese language site was “optimised” into gibberish.

- Solution: Build edge case detection. When agents encounter unfamiliar scenarios, they escalate to humans rather than guessing.
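As an illustration of edge case detection, the sketch below escalates any page whose text is mostly non-Latin script. It assumes the agent has only been validated on Latin-script content. `latin_ratio`, `route_page` and the 0.9 threshold are hypothetical.

```python
import unicodedata


def latin_ratio(text):
    """Share of alphabetic characters that belong to the Latin script."""
    letters = [ch for ch in text if ch.isalpha()]
    if not letters:
        return 1.0
    latin = sum(1 for ch in letters if "LATIN" in unicodedata.name(ch, ""))
    return latin / len(letters)


def route_page(text, threshold=0.9):
    """Send unfamiliar scripts to a human instead of letting the agent guess."""
    return "automate" if latin_ratio(text) >= threshold else "escalate_to_human"
```

Script is only one signal. Page type, content length and language codes in the markup are other checks worth adding.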

Pitfall 3: API Dependency Chaos

- Problem: When OpenAI’s API went down, our entire operation stopped. Three days of manual catch-up followed.

- Solution: Build redundancy. Multiple AI providers, fallback systems, and graceful degradation ensure continuity.
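A fallback chain across providers can be sketched without committing to any vendor SDK. Each provider here is a plain callable that takes a prompt and returns text; wrapping real API clients in such callables is left as an assumption, and `complete_with_fallback` is a hypothetical name.

```python
def complete_with_fallback(prompt, providers):
    """Try each (name, callable) provider in order; return the first success."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # outage, timeout, quota error, etc.
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))
```

Returning the provider name alongside the result makes it easy to log how often the fallback is used.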

Pitfall 4: Cost Spiral

- Problem: Agents making unlimited API calls generated £3,000 in unexpected charges one month.

- Solution: Implement strict rate limiting, cost monitoring, and budget caps. Alert when spending exceeds thresholds.
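A budget cap with an alert threshold might look like the following sketch. `BudgetGuard`, `BudgetExceeded` and the 80% alert ratio are assumptions, and the cost of each call would need to be estimated from token counts or taken from the provider's usage data.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push spending past the monthly cap."""


class BudgetGuard:
    def __init__(self, monthly_cap, alert_ratio=0.8):
        self.monthly_cap = monthly_cap
        self.alert_ratio = alert_ratio
        self.spent = 0.0
        self.alerted = False

    def charge(self, cost):
        """Record a cost before the API call is made; refuse if over the cap."""
        if self.spent + cost > self.monthly_cap:
            raise BudgetExceeded(
                f"cap {self.monthly_cap} would be exceeded (spent {self.spent})"
            )
        self.spent += cost
        if not self.alerted and self.spent >= self.monthly_cap * self.alert_ratio:
            self.alerted = True  # alert once per period
            return "alert"
        return "ok"
```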

Building Your Implementation Roadmap

A clear roadmap is essential to turn AI-driven SEO from an idea into a repeatable system. By breaking the process into phases, you can prioritise quick wins while laying the foundation for long-term automation. This structured approach ensures smoother adoption and measurable progress at every stage.

Week 1-2: Foundation

Objective: Establish infrastructure and basic automation

Tasks:

- Set up development environment

- Configure API access (OpenAI, Google, etc.)

- Build first simple agent (meta descriptions recommended)

- Test on single website

- Document learnings

Budget: £200 for tools and APIs

Week 3-4: Expansion

Objective: Add second agent and basic orchestration

Tasks:

- Build keyword research agent

- Create simple conductor for coordination

- Implement basic reporting

- Test on 3-5 websites

- Refine based on results

Expected outcome: 50% time reduction on targeted tasks

Month 2: Production Deployment

Objective: Scale to full client base

Tasks:

- Add content generation agent

- Implement approval workflows

- Build monitoring dashboards

- Train team on agent management

- Document standard procedures

Expected outcome: 70% automation of routine tasks

Month 3: Optimisation

Objective: Refine and enhance system

Tasks:

- Analyse performance data

- Identify bottlenecks

- Add specialised agents as needed

- Implement advanced orchestration

- Build custom integrations

Expected outcome: 85% automation, 150% performance improvement

The Technology Stack: Tools and Integrations

Choosing the right tools is the backbone of a successful AI-powered SEO strategy. From AI agents and analytics platforms to content management and automation software, each piece needs to work seamlessly together. With the right integrations, your entire SEO workflow becomes more efficient, connected, and scalable.

Core AI Platforms

OpenAI GPT-4: General purpose intelligence

- Cost: £0.03 per 1K tokens

- Use cases: Content generation, analysis, decision-making

- Strengths: Versatility, quality

- Weaknesses: Cost at scale, occasional hallucinations

Claude 3: Technical and analytical tasks

- Cost: £0.015 per 1K tokens

- Use cases: Code generation, data analysis, technical writing

- Strengths: Accuracy, context handling

- Weaknesses: Availability, speed

Gemini Pro: Google-specific optimisations

- Cost: £0.001 per 1K tokens

- Use cases: SERP analysis, Google Ads integration

- Strengths: Google ecosystem integration

- Weaknesses: Limited availability

SEO-Specific APIs

DataForSEO: Comprehensive SEO data

- Cost: From £50/month

- Features: SERP data, keyword research, competitor analysis

Ahrefs API: Backlink and content data

- Cost: From £500/month

- Features: Link profiles, content gaps, keyword difficulty

SEMrush API: Competitive intelligence

- Cost: From £200/month

- Features: Traffic analytics, position tracking

Orchestration Tools

n8n: Visual workflow automation

- Cost: Free (self-hosted) or £20/month (cloud)

- Strengths: Visual builder, extensive integrations

Zapier: No-code automation

- Cost: From £20/month

- Strengths: Easy setup, reliable

Custom Python: Maximum flexibility

- Cost: Development time only

- Strengths: Complete control, unlimited possibilities

Security and Compliance Considerations

Automating SEO with AI agents requires careful attention to security and compliance:

API Key Management

    # Secure key storage using environment variables
    import os
    from cryptography.fernet import Fernet


    class SecureKeyManager:
        def __init__(self):
            self.cipher = Fernet(os.environ['ENCRYPTION_KEY'])

        def store_key(self, service, key):
            encrypted = self.cipher.encrypt(key.encode())
            # Store in secure database

        def retrieve_key(self, service):
            # Retrieve from database
            encrypted = self.get_from_db(service)
            return self.cipher.decrypt(encrypted).decode()

GDPR Compliance

Agents processing client data must respect privacy regulations:

- Anonymise personal data before processing

- Implement data retention policies

- Provide audit trails

- Enable data deletion on request
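As a small example of the first point, email addresses can be stripped from text before it is sent to an external AI API. This is a sketch only: the pattern is a simplified assumption that will miss some valid addresses, and redacting emails on its own does not amount to full anonymisation.

```python
import re

# Simplified email pattern, used here for illustration only
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_RE.sub("[email removed]", text)
```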

Client Data Isolation

Multi-tenant systems require strict isolation:

- Separate API keys per client

- Isolated data storage

- Agent access controls

- Activity logging

Scaling Considerations: From SMB to Enterprise

What works for a small business may not be enough for an enterprise-level SEO strategy. As your organisation grows, automation must handle larger data volumes, more complex workflows, and stricter compliance needs. Planning for scalability ensures your AI-powered SEO system can evolve with your business.

Small Business Implementation

Start simple with focused automation:

- Single website focus

- 2-3 core agents

- Manual orchestration

- Budget: £200-500/month

Agency Implementation

Scale across multiple clients:

- Multi-site management

- 5-7 specialised agents

- Automated orchestration

- Budget: £1,000-3,000/month

Enterprise Implementation

Full automation at scale:

- Hundreds of sites

- Custom agent development

- Advanced ML models

- Budget: £10,000+/month

The ProfileTree System: What We’ve Built

“After two years of development and refinement, our AI agent system handles 90% of routine SEO tasks autonomously,” explains Ciaran Connolly, ProfileTree founder. “This isn’t about replacing our team – it’s about freeing them to focus on strategy, creativity, and client relationships.”

- Our SEO services now leverage this automated foundation, delivering superior results at competitive prices.

- Digital strategy consultation includes AI implementation planning, helping clients build their own automation systems.

- Training programmes teach teams to work with AI agents effectively, multiplying productivity without sacrificing quality.

Future Developments: What’s Coming Next

AI technology is evolving rapidly, and SEO automation is no exception. From more advanced natural language models to predictive analytics, the next wave of tools will push efficiency and personalisation even further. Staying ahead of these developments ensures your SEO strategy remains competitive and future-proof.

Autonomous Strategy Agents

Next-generation agents will develop complete SEO strategies autonomously:

- Market analysis

- Competitive positioning

- Resource allocation

- Timeline planning

- Success prediction

Predictive SEO

Agents predicting algorithm changes before they happen:

- SERP pattern analysis

- Update signal detection

- Proactive optimisation

- Risk mitigation

Cross-Channel Integration

SEO agents coordinating with:

- Paid search campaigns

- Social media marketing

- Email marketing

- Content marketing

- PR activities

Troubleshooting Common Issues

Agent Hallucinations

- Problem: Agent confidently states incorrect information

- Solution: Implement fact-checking layers and confidence scoring

Infinite Loops

- Problem: Agents stuck in repetitive tasks

- Solution: Add loop detection and automatic breaks
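A minimal loop guard can count repeated actions and total steps. `LoopGuard` and both limits are illustrative assumptions, and an action is represented here by any hashable description such as a string.

```python
from collections import Counter


class LoopGuard:
    def __init__(self, max_repeats=3, max_steps=50):
        self.max_repeats = max_repeats
        self.max_steps = max_steps
        self.seen = Counter()
        self.steps = 0

    def check(self, action):
        """Record an action; return True if the agent should stop."""
        self.steps += 1
        self.seen[action] += 1
        return self.seen[action] > self.max_repeats or self.steps > self.max_steps
```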

Context Loss

- Problem: Long conversations lose context

- Solution: Implement context windowing and summarisation
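Context windowing can be sketched as keeping the most recent messages that fit a budget and replacing the rest with a summary. The character budget stands in for a token count, and `trim_history` and the `summarise` callable are assumptions. In practice `summarise` would itself be a model call.

```python
def trim_history(messages, max_chars=2000, summarise=None):
    """Keep the newest messages within max_chars; optionally summarise the rest."""
    kept, used = [], 0
    for msg in reversed(messages):
        if used + len(msg) > max_chars:
            break
        kept.append(msg)
        used += len(msg)
    kept.reverse()
    dropped = messages[: len(messages) - len(kept)]
    if dropped and summarise:
        # The summary takes the place of the older messages
        kept.insert(0, summarise(dropped))
    return kept
```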

Rate Limiting

- Problem: APIs blocking requests

- Solution: Implement exponential backoff and request queuing
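Exponential backoff doubles the wait after each failed attempt. In the sketch below, `call_with_backoff`, the delay values and the small random jitter are assumptions. The `sleep` parameter exists so the function can be tested without real waiting.

```python
import random
import time


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call fn(), retrying with exponentially growing delays on failure."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts, surface the last error
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Jitter stops many agents from retrying at the same moment
            sleep(delay + random.uniform(0, delay * 0.1))
```

A production version would retry only on rate-limit and transient network errors rather than on every exception.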

FAQs

How much technical knowledge is required to implement AI agents?

Basic Python programming and API understanding suffice for simple agents. Complex orchestration requires stronger development skills. No-code tools like Zapier enable non-technical implementation of basic automation.

What’s the minimum budget for meaningful automation?

£200/month enables basic automation covering keyword research and content optimisation. £500/month allows comprehensive automation. ROI typically exceeds 500% within three months.

Can AI agents handle local SEO and multi-location businesses?

Yes, with proper configuration. Agents excel at managing location-specific content, citations, and Google Business Profile optimisation at scale.

How do agents handle algorithm updates?

Agents monitor ranking fluctuations and adjust strategies automatically. Major updates require human strategy review, but agents handle tactical adjustments autonomously.

What about quality control with automated content?

Multi-layer quality control: AI self-review, plagiarism checking, fact verification, and human spot-checks ensure quality. Error rates remain below 0.3% with proper safeguards.

Will Google penalise agent-generated content?

Google penalises low-quality content regardless of creation method. Well-configured agents can produce high-quality, valuable content that ranks well.

Taking Action: Start Your Automation Journey

Begin with one focused automation solving a real problem. Choose something repetitive, time-consuming, and well-defined. Meta descriptions, keyword research, or technical audits work well.

Invest in learning. Understanding AI capabilities and limitations prevents expensive mistakes. Start with free resources, progress to paid training as needed.

Build incrementally. Each successful automation builds confidence and expertise. Rushed implementation guarantees failure; methodical progress ensures success.

Measure everything. Track time saved, quality improvements, and ROI. Data justifies expansion and identifies optimisation opportunities.

Partner wisely. Whether building internally or working with agencies like ProfileTree, choose partners understanding both AI technology and SEO fundamentals.

The future of SEO is automated, intelligent, and incredibly efficient. Businesses implementing AI agents today gain insurmountable advantages over those clinging to manual processes. The question isn’t whether to automate, but how quickly you can implement effectively.

Start small, think big, move fast. Your competitors already are.