AI Guidelines for Small Businesses: UK & Irish SME Guide

Most small business owners using AI tools today have not written a single rule about how those tools should be used. No guidance on what data can be shared with an AI platform. No process for checking AI-generated content before it reaches a client. No clarity for staff on where AI ends and human judgment begins. That gap is not just a compliance risk; it is also one of the main reasons many SMEs fail to get consistent value from AI at all.

AI guidelines for small businesses are not a bureaucratic exercise. They are the operational foundation that allows a business to adopt AI tools quickly, confidently, and without the kind of mistakes that damage client relationships or fall foul of UK and Irish data protection law. The businesses that will move fastest with AI are not the ones that rush in without structure. They are the ones that put clear guidelines in place first, then adopt at pace.

This guide sets out a practical framework for creating AI guidelines for small businesses: what to include, how to communicate them to staff, how to stay on the right side of UK and EU regulation, and how to use your guidelines as a springboard for productive AI adoption rather than a reason to hold back.

Why AI Guidelines for Small Businesses Cannot Wait

The most common assumption among SME owners is that AI governance is something large companies worry about. Legal teams, compliance officers, and enterprise software vendors. Not a 12-person marketing agency in Belfast or a family-run accountancy practice in Dublin.

That assumption is wrong, and it is becoming more costly by the month.

Employees across every sector are already using AI tools, often without telling their employers. A team member might be pasting client briefs into ChatGPT to draft proposals. Someone in accounts might be uploading invoice data into an AI summariser to speed up reporting. These are not rogue behaviours; they are pragmatic responses to workload pressure. But without AI guidelines for small businesses, there is no agreed standard for what is acceptable, what is risky, and what is off-limits entirely.

The UK’s Information Commissioner’s Office (ICO) has been explicit that organisations are responsible for how personal data is processed, whether that processing happens within a traditional software system or a third-party AI tool. Uploading client data to a public AI platform without checking its data processing terms is a potential UK GDPR breach, even if the person doing it had no malicious intent. The ICO publishes guidance on AI and data protection at ico.org.uk. That guidance is currently under review following the Data (Use and Access) Act 2025, so check for the most current version before drafting your policy.

The risk is not only legal. AI systems can produce confident-sounding outputs that are factually wrong. Without a review process built into your guidelines, that content can reach clients, appear on your website, or inform business decisions before anyone has checked it. ProfileTree’s work on AI content detection outlines exactly why human review remains non-negotiable at this stage of AI development.

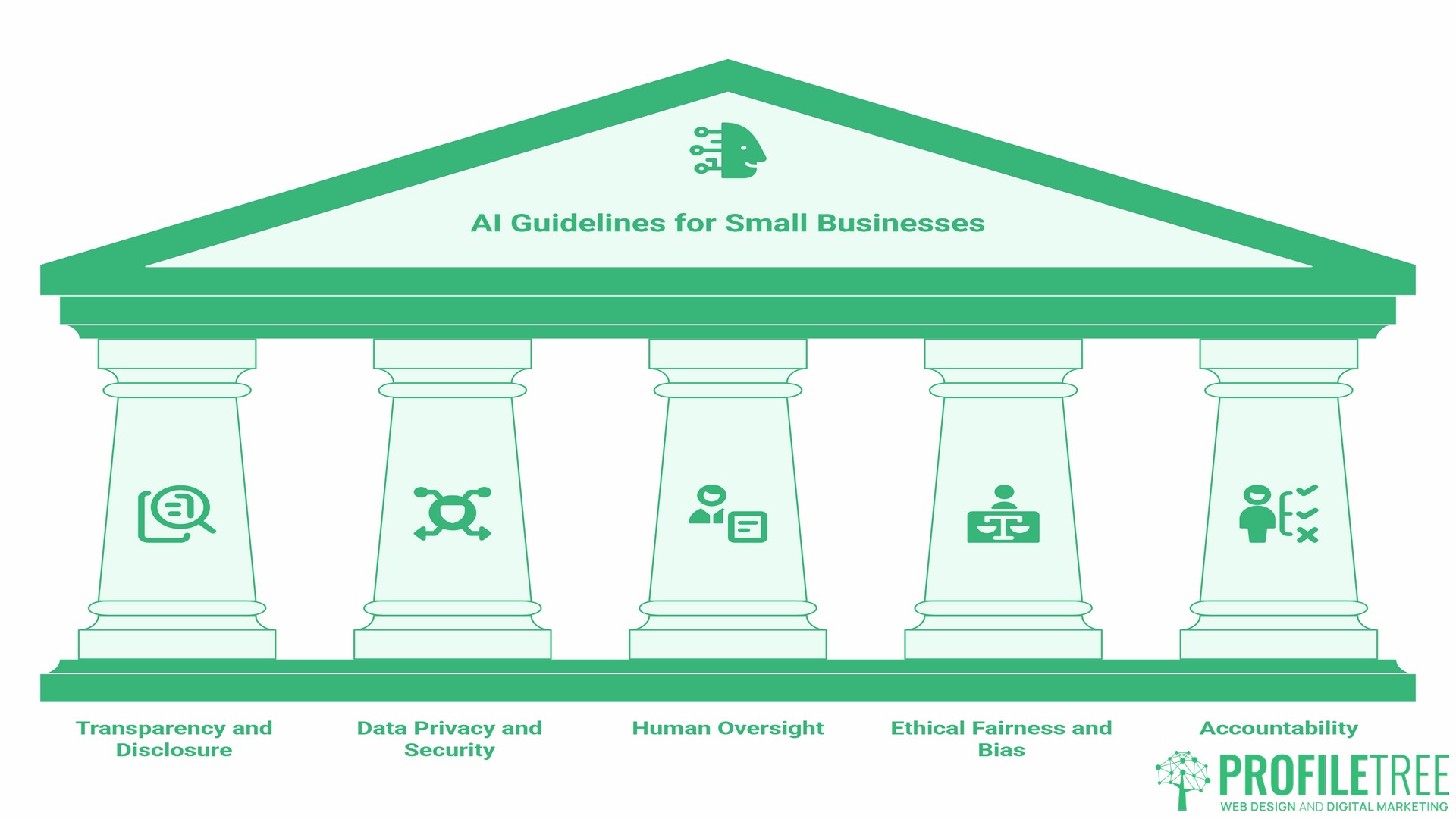

A Five-Pillar Framework for AI Guidelines for Small Businesses

Before drafting any document, it helps to understand the five core areas that every set of AI guidelines for small businesses should address. These are not abstract principles; each one has direct practical implications for how your staff use AI day to day.

Transparency and Disclosure

Your guidelines need to state clearly when AI use should be disclosed and to whom. At a minimum, staff should know whether they are permitted to use AI-generated content in client-facing work and, if so, whether that use needs to be flagged to the client. Some sectors, including legal, financial, and healthcare services, have professional standards that make non-disclosure a serious risk. Even outside regulated sectors, clients increasingly ask whether content or analysis has been AI-assisted. Having a clear, consistent answer protects your reputation.

Externally, your website’s privacy policy and terms and conditions should reflect how AI is used in your operations. ProfileTree’s own approach to this is embedded within our terms and conditions, and it is something every SME website should address as a baseline of digital transparency. If your website needs updating to reflect how you now operate, web design support for Belfast and Northern Ireland businesses can help you build that transparency into your site structure properly.

Data Privacy and Security

This is the area where most SMEs face the greatest legal exposure. Your guidelines should clearly define what categories of data can be processed using AI tools and which cannot. As a working principle: no personal data belonging to clients, employees, or third parties should be entered into a public AI platform unless you have confirmed that the platform does not use submitted data for model training and that its data processing terms are compatible with your UK GDPR obligations.
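For teams that like to see a rule written down precisely, the working principle above reduces to two questions asked of every tool before personal data goes into it. The Python sketch below is a hypothetical illustration, not a compliance tool: the `ToolTerms` record and the `personal_data_rule_allows` function are invented names, and the `True`/`False` answers must come from your own review of each platform’s published data processing terms.

```python
from dataclasses import dataclass


@dataclass
class ToolTerms:
    """What you have confirmed about one AI platform (hypothetical fields)."""
    name: str
    trains_on_submitted_data: bool       # True if submissions may be used for model training
    terms_compatible_with_uk_gdpr: bool  # True only after reviewing its data processing terms


def personal_data_rule_allows(tool: ToolTerms, contains_personal_data: bool) -> bool:
    """Apply the working principle: personal data may only be entered into a tool
    confirmed NOT to train on submissions AND whose terms fit UK GDPR obligations.
    This covers the personal data rule only; other policy restrictions still apply."""
    if not contains_personal_data:
        return True
    return (not tool.trains_on_submitted_data) and tool.terms_compatible_with_uk_gdpr


# Example usage with two hypothetical tools
free_tier = ToolTerms("free-tier chatbot", trains_on_submitted_data=True,
                      terms_compatible_with_uk_gdpr=False)
business_tier = ToolTerms("business-tier assistant", trains_on_submitted_data=False,
                          terms_compatible_with_uk_gdpr=True)
print(personal_data_rule_allows(free_tier, contains_personal_data=True))      # False
print(personal_data_rule_allows(business_tier, contains_personal_data=True))  # True
```

The check deliberately covers only the personal data rule; commercially sensitive information and the other restrictions in your guidelines still need their own checks.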

For Northern Ireland businesses with clients in the Republic of Ireland or across the EU, this is doubly important. The EU AI Act entered into force in August 2024, with its obligations phasing in and most applying by August 2026. Even if your business is based in Belfast, if your AI system’s output is used in the EU, certain provisions of the Act may apply to you. Your guidelines should acknowledge this explicitly rather than leave it open.

The ICO publishes guidance on AI and data protection, including its expectations around automated decision-making and transparency. As noted above, this guidance is under active review following the Data (Use and Access) Act 2025. Referencing the ICO’s current published position in your internal policy is good practice, and checking ico.org.uk periodically for updates should be part of your policy review cycle.

Human Oversight

AI tools are assistants, not decision-makers. Your guidelines should specify which outputs require human review before they are acted on or shared externally. A working rule that applies to most SMEs: any AI-generated content that will be seen by a client, published on a website, submitted as part of a tender, or used to inform a financial or legal decision must be reviewed and approved by a named person before it leaves the business.
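The working rule above is effectively a checklist of destinations. As a minimal sketch, assuming the four triggers named in the rule are the complete list for your business, it could be expressed in Python as follows; `Destination` and `needs_named_approval` are hypothetical names used for illustration only.

```python
from enum import Enum, auto


class Destination(Enum):
    """Where an AI-generated output is going (categories taken from the working rule)."""
    INTERNAL_DRAFT = auto()
    CLIENT = auto()
    WEBSITE = auto()
    TENDER = auto()
    FINANCIAL_OR_LEGAL_DECISION = auto()


# Assumption: these four triggers are the complete list that requires
# review and approval by a named person before the output is used.
REVIEW_REQUIRED = {
    Destination.CLIENT,
    Destination.WEBSITE,
    Destination.TENDER,
    Destination.FINANCIAL_OR_LEGAL_DECISION,
}


def needs_named_approval(destinations: set[Destination]) -> bool:
    """True if any intended use of the output triggers the human review rule."""
    return bool(destinations & REVIEW_REQUIRED)


# Example usage
print(needs_named_approval({Destination.INTERNAL_DRAFT}))                       # False
print(needs_named_approval({Destination.INTERNAL_DRAFT, Destination.WEBSITE}))  # True
```

Checking a set of destinations, rather than a single one, reflects the fact that an internal draft often ends up in front of a client later; if any planned use triggers review, the whole output needs approval.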

This is not about distrust of the technology. It is about accountability. If an AI-generated report contains an error that costs a client money, the question will not be “what did the AI do wrong?” It will be “who approved this before it went out?” Your guidelines need to answer that question in advance.

Ethical Fairness and Bias

AI systems learn from historical data, and historical data contains historical biases. This is relevant to SMEs in hiring, customer-facing communications, and any context where AI is used to segment, score, or evaluate people. Your guidelines should flag these risks and require human review for any AI output that involves people-related decisions. This connects directly to the broader topic of ethics and legalities in digital marketing, where the boundaries between automation and accountability are increasingly tested.

Accountability

Every AI-generated output needs a human owner. Your guidelines should state that the person who uses an AI tool to produce a piece of work is responsible for the accuracy, appropriateness, and quality of that work. AI authorship is not a defence against errors; it is simply a description of the process. This framing matters because it keeps staff engaged and critical rather than passive consumers of AI output.

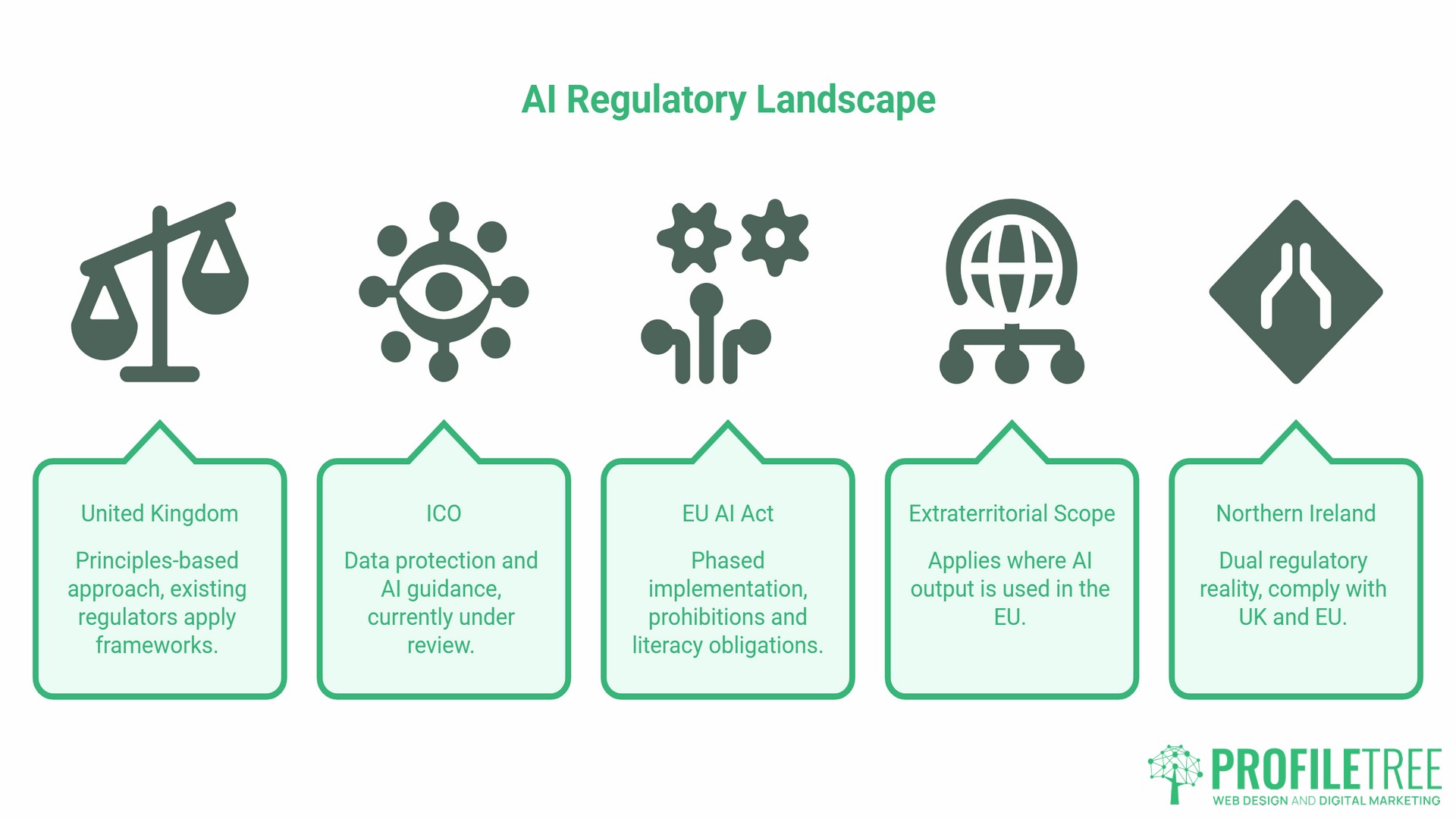

The UK and Irish Regulatory Landscape

Understanding the regulatory context is not optional for SME owners. It is the basis for your AI guidelines for small businesses.

In the UK, the primary regulatory framework for AI and data remains anchored in UK GDPR, administered by the ICO. The UK government published its AI Regulation White Paper in March 2023, setting out a principles-based approach that asks existing sector regulators, including the ICO, the FCA, and others, to apply their existing frameworks to AI-related activities within their domains. Unlike the EU, the UK has deliberately chosen not to introduce a single binding AI law. That position remains current, though the government has indicated it will keep the legislation question under review as AI capabilities develop.

In Ireland and for Northern Ireland businesses with EU-facing operations, the EU AI Act introduces a tiered risk classification system. The Act entered into force in August 2024. Prohibitions on certain AI practices and AI literacy obligations came into effect in February 2025. Governance rules and obligations for general-purpose AI model providers became applicable in August 2025.

Full applicability for most remaining provisions follows in August 2026. Most AI tools used by typical SMEs, such as writing assistants, image generators, and scheduling tools, fall into lower-risk categories and face lighter-touch requirements. The key obligation that applies broadly is transparency: if you are using an AI system that interacts directly with people, such as a chatbot, those people must be informed they are dealing with AI.

Critically, the EU AI Act has extraterritorial scope. It applies to providers and deployers of AI systems who are located outside the EU where the output of those systems is used in the EU. For Northern Ireland businesses serving clients in the Republic of Ireland, this means EU AI Act obligations may apply to your operations even though your business is based in the UK. Your guidelines should address both the UK and EU frameworks, and if you are uncertain which obligations apply to your specific situation, taking professional legal advice is worthwhile.

“Compliance is not a hurdle for SMEs,” says Ciaran Connolly, founder of ProfileTree, the Belfast-based digital agency. “For any small business looking to win contracts with larger organisations or public sector clients, having documented AI guidelines is fast becoming a procurement requirement. The businesses that treat governance as a competitive advantage will move ahead of those that treat it as a box-ticking exercise.”

How to Create and Roll Out Your AI Guidelines

Creating AI guidelines for small businesses does not need to be complex. For most SMEs, a clear, practical internal document is more useful than a lengthy legal text.

The first step is an audit. Before writing any rules, you need to understand how AI is already being used in your business. Ask your team directly and without judgment. Which tools are people using? What tasks are they applying them to? Do any of those tasks involve client data, personal information, or commercially sensitive material? The answers will shape the scope of your guidelines and reveal the highest-priority risks to address first.

Once you have a picture of current use, draft your guidelines based on the five pillars outlined above. Keep the language plain and direct. A document that staff can read in 15 minutes and actually understand is far more valuable than one that covers every theoretical scenario but sits unread in a shared drive.

The rollout matters as much as the document itself. Present the guidelines in a team meeting rather than sending them by email. Give people the chance to ask questions. Address the job security concern directly: AI guidelines are not a signal that the business is moving toward replacing people. They are the structure that allows the business to use AI tools in a way that protects everyone, including staff whose work could be put at risk by an unreviewed AI error reaching a client.

Schedule a review date. AI tools are evolving rapidly, and guidelines written today may need updating within six months. Build the review cadence into the document itself so it does not become an afterthought.

ProfileTree’s digital training programmes for SME teams are designed for exactly this phase of the AI adoption journey: giving business owners and their staff the practical knowledge to use AI tools well, within a framework that makes sense for their specific operations.

Beyond the Document: From AI Guidelines to AI Adoption

Writing AI guidelines for small businesses is the starting point, not the destination. The businesses making the most of AI are not those with the most sophisticated policies. They are the ones that have clear guidelines in place and act on them, using that structure to adopt tools faster and with greater confidence than their competitors.

Once your guidelines are in place, the logical next step is understanding which AI tools are actually worth using for your specific business. That means honestly evaluating your workflows, identifying where AI can genuinely save time or improve output, and building the internal skills to use those tools effectively. It also means knowing when not to use AI, which is a judgment call that only comes with experience and training.

ProfileTree works with SMEs across Northern Ireland, Ireland, and the UK on exactly this journey: from initial AI readiness assessment through to practical implementation and staff training. The process described in this guide mirrors what we help businesses work through in our AI implementation and transformation services. Having guidelines in place is what makes the implementation process faster, cleaner, and less risky.

The businesses that will have the strongest AI capability in three years are not starting with the tools. They are starting with the framework.

Small Business AI Policy Template

Use the following as a starting point for your own internal document. Adapt each section to reflect your actual operations, tools, and team structure. The goal is a document your staff will read and use, not one that covers every theoretical scenario.

Artificial Intelligence Policy: [Business Name]

Purpose

This policy sets out how [Business Name] uses artificial intelligence tools in its operations and what is expected of all team members when using AI in the course of their work.

Scope

This policy applies to all employees, contractors, and freelancers working on behalf of [Business Name].

Approved Uses of AI

The following uses of AI tools are permitted within [Business Name]:

- Drafting initial versions of written content (subject to human review before any external use)

- Summarising long documents or research materials

- Generating images for internal use or for client projects (subject to client agreement and copyright checks)

- Analysing data sets to identify patterns or anomalies

- Scheduling, formatting, and administrative tasks that do not involve personal data

Prohibited Uses of AI

The following uses are not permitted:

- Entering client personal data, financial data, or commercially sensitive information into any public AI platform

- Publishing AI-generated content externally without a named person reviewing and approving it first

- Using AI to make decisions about individuals (hiring, performance, credit) without human oversight

- Presenting AI-generated work as independently researched or personally authored without disclosure

Data Protection

All use of AI tools must comply with UK GDPR. If you are unsure whether a specific use involves personal data, check with [designated contact] before proceeding.

Human Review

Any AI-generated output that will be shared with a client, published online, or used to inform a significant business decision must be reviewed and approved by [designated reviewer] before use.

Disclosure

Where AI has been used to produce client-facing work, this should be disclosed to the client in line with our standard terms unless the client has waived this requirement in writing.

Review Date

This policy will be reviewed by [date] and updated as needed to reflect changes in the tools we use and the regulatory environment.

Building Your AI Guidelines: Next Steps

AI guidelines for small businesses serve one primary purpose: giving your team the clarity they need to use AI tools well. That clarity reduces risk, improves output quality, and removes the uncertainty that causes many SMEs to either avoid AI altogether or adopt it without adequate oversight.

The framework in this guide covers the five pillars every policy needs, the UK and Irish regulatory context you must account for, a step-by-step rollout process, and a template you can adapt and use today. What comes next is implementation: understanding which tools fit your specific workflow, building the skills to use them effectively, and reviewing your guidelines as technology and regulation continue to evolve.

If your business is at the stage of moving from guidelines to active AI adoption, get in touch with the ProfileTree team to talk through what a structured AI implementation programme looks like for an SME of your size and sector.

Frequently Asked Questions

Do I really need written AI guidelines if my business only has a few people?

Yes. The size of your team does not change your legal obligations under UK GDPR, and it does not reduce the reputational risk of an AI-related error reaching a client. A short, clear document takes a few hours to produce and can save significantly more time in remediation if something goes wrong. Smaller teams often have less formal oversight, which makes written guidelines more important rather than less.

Does the EU AI Act apply to my business in Northern Ireland?

If your business provides services to clients in the Republic of Ireland or elsewhere in the EU, the EU AI Act may apply to you. The Act has extraterritorial scope: it applies to providers and deployers of AI systems located outside the EU where the output of that system is used in the EU. The most broadly applicable requirement for typical SMEs is transparency: if you use an AI system that interacts directly with people, such as a customer-facing chatbot, those people must know they are dealing with AI. If you are uncertain about your specific obligations, taking professional legal advice is the right step.

Is a free tool like the basic version of ChatGPT safe for business use?

For tasks that do not involve personal data or commercially sensitive information, public AI tools can be used safely within the parameters of your guidelines. The specific risk with free-tier tools is data retention: some platforms use submitted content to improve their models unless you actively opt out or use a paid business account with different data processing terms. Check the terms of any tool before using it for anything client-related, and make this check a standard part of your AI approval process.

Who should be responsible for overseeing AI use in a small business?

In most SMEs, this will be the business owner or a senior team member with a good understanding of both the operations and the regulatory context. Some businesses designate an “AI champion” whose role includes staying current with tool developments, maintaining the guidelines, and being the first point of contact for staff questions. This does not need to be a full-time or specialist role; it simply needs to be someone’s named responsibility.

How often should AI guidelines be updated?

Every six months is a reasonable starting point given how quickly AI tools and the regulatory environment are changing. The EU AI Act is still phasing in its obligations, with most applying by August 2026, and the ICO’s AI guidance is currently under review following the Data (Use and Access) Act 2025. Those two developments alone make a six-month review cycle worth maintaining. Build the review date into the document itself so it becomes a standing calendar item rather than a task added only when something goes wrong.

Can I be held liable for errors in AI-generated content?

Yes. Responsibility for the accuracy and appropriateness of any content produced in the course of your business sits with your business, regardless of how that content was generated. AI authorship is not a legal defence. This is precisely why human review of AI-generated outputs before they reach clients or the public is a non-negotiable part of any sensible set of AI guidelines for small businesses.

What is “shadow AI” and should I be worried about it?

Shadow AI refers to employees using AI tools without the knowledge or approval of the business. It is extremely common, particularly in businesses that have not yet established AI guidelines. Staff are not typically being reckless; they are trying to be efficient. The most effective response is not prohibition but structure: give people clear guidelines on what is permitted, and the need to operate outside those boundaries largely disappears.