AI and Cybersecurity: Threats, Detection, and Defence for Businesses

AI and cybersecurity have become two of the most consequential forces shaping how businesses operate online, and the relationship between them is not straightforward. They interact on both sides of the threat landscape: defenders use artificial intelligence to spot and stop attacks faster than a human team could manage, while attackers use the same technology to automate, refine, and scale their methods. For any business with a digital presence, understanding how the two intersect is no longer optional.

Cyber threats are now evolving at machine-driven speed. The volume of signals, logs, and alerts that a modern organisation generates every day is too large for manual review. Traditional rule-based security tools, which block known attack signatures, cannot keep pace with adversaries who constantly adapt. This is the gap that artificial intelligence fills.

ProfileTree works with businesses across Northern Ireland, Ireland, and the UK on digital transformation, web design, and AI adoption. Our team sees first-hand how many organisations treat cybersecurity as a compliance exercise rather than a strategic investment. This guide covers the core technologies driving AI-powered defence, how attackers are weaponising the same tools, and a clear framework for strengthening your own position.

How AI Is Used in Cybersecurity Defence

AI and cybersecurity defence work best together when artificial intelligence acts as a force multiplier for security teams rather than a replacement for human judgement. The technology excels at processing scale and speed that humans cannot match. A security analyst can review dozens of alerts per shift; a well-configured AI system can evaluate many thousands of events in the same time. The same principle applies across digital operations more broadly: our AI marketing and automation services demonstrate how AI-driven systems improve both efficiency and accuracy when humans retain strategic oversight.

Automated Threat Monitoring

Traditional security information and event management (SIEM) systems collect logs and fire alerts based on pre-written rules. AI-powered systems go further by learning what normal behaviour looks like across a network and flagging anything that deviates from that baseline. An employee accessing file servers at 3 AM from an unfamiliar device triggers an alert not because a rule exists, but because the system has learned that this pattern is abnormal.

This is called behavioural analytics, and it is one of the most practical applications of AI and cybersecurity technology available to businesses today. The key advantage is catching threats that have never been seen before, not just known attack signatures. For businesses running WordPress sites, keeping software updated and monitored is the foundation on which these tools build. Our website security and management service covers exactly this layer before more advanced AI-powered monitoring is added.
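To make the baseline idea concrete, here is a minimal Python sketch of the 3 AM example above. It is an illustration only, not how any particular product works: the class name `BehaviourBaseline`, the 2% rarity cut-off, and the `min_events` warm-up are all assumptions chosen for readability.

```python
from collections import defaultdict


class BehaviourBaseline:
    """Learns which login hours and devices are normal for each user."""

    def __init__(self, min_events=20, rare_hour_share=0.02):
        self.min_events = min_events            # hypothetical warm-up period
        self.rare_hour_share = rare_hour_share  # hypothetical rarity cut-off
        self.hours = defaultdict(lambda: defaultdict(int))
        self.devices = defaultdict(set)
        self.totals = defaultdict(int)

    def learn(self, user, hour, device):
        """Record one observed access as part of the user's normal behaviour."""
        self.hours[user][hour] += 1
        self.devices[user].add(device)
        self.totals[user] += 1

    def score(self, user, hour, device):
        """Return 0, 1 or 2: one point for a rare hour, one for an unseen device."""
        total = self.totals[user]
        if total < self.min_events:
            return 0  # not enough history to judge
        points = 0
        if self.hours[user][hour] / total < self.rare_hour_share:
            points += 1
        if device not in self.devices[user]:
            points += 1
        return points


baseline = BehaviourBaseline()
for _ in range(30):                      # thirty working days of history
    for hour in (9, 11, 14, 16):
        baseline.learn("alice", hour, "laptop-01")

print(baseline.score("alice", 14, "laptop-01"))     # usual hour, usual device
print(baseline.score("alice", 3, "unknown-phone"))  # 3 AM, unfamiliar device
```

No rule mentions 3 AM or any specific device. The second call scores higher only because it departs from what was learned, which is the point of a baseline.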

Automated Security Operations

Security Orchestration, Automation and Response (SOAR) platforms use machine learning to triage incoming alerts, prioritise the most serious incidents, and trigger automated responses. When a phishing email is detected, the system can automatically quarantine the message, block the sending domain, and notify the security team within seconds. This speed matters: the average time between intrusion and detection across industries still runs to days or weeks, and AI-powered monitoring can shorten that window considerably.
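The phishing response described above can be sketched as a small playbook. This is a simplified illustration, not the API of any real SOAR product: `Alert`, `Playbook`, and `triage` are hypothetical names, and the severity score is assumed to come from an upstream model.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    kind: str
    severity: int            # 1 (low) to 5 (critical), assumed to be model-assigned
    sender_domain: str = ""
    message_id: str = ""


@dataclass
class Playbook:
    quarantined: list = field(default_factory=list)
    blocked_domains: set = field(default_factory=set)
    notifications: list = field(default_factory=list)

    def handle(self, alert):
        """Run the automated steps for one alert and return what was done."""
        actions = []
        if alert.kind == "phishing":
            self.quarantined.append(alert.message_id)
            self.blocked_domains.add(alert.sender_domain)
            actions += ["quarantine", "block_domain"]
        if alert.kind == "phishing" or alert.severity >= 3:
            self.notifications.append(f"{alert.kind} alert, severity {alert.severity}")
            actions.append("notify_team")
        return actions


def triage(alerts):
    """Order alerts so the most severe are handled first."""
    return sorted(alerts, key=lambda a: a.severity, reverse=True)


playbook = Playbook()
queue = triage([
    Alert("port_scan", 1),
    Alert("phishing", 4, sender_domain="bad.example", message_id="msg-1"),
])
for alert in queue:
    print(alert.kind, playbook.handle(alert))
```

In practice each step would call a mail gateway, firewall, or ticketing system. The sketch only records the decisions so the flow is easy to follow.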

Predictive Analytics and Threat Intelligence

Predictive analytics represents one of the most significant shifts AI and cybersecurity have brought about together. Rather than reacting to attacks after they occur, security systems can now analyse historical data, current network conditions, and external threat intelligence feeds to identify likely attack vectors before exploitation happens.

How Threat Intelligence Works with AI

Threat intelligence involves collecting and analysing data about known attack methods, malicious IP addresses, compromised credentials, and emerging malware families. On its own, this data is too voluminous for any team to act on manually. AI-powered security platforms can ingest thousands of threat indicators per hour, cross-reference them against your own environment, and surface only the relevant risks.
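The cross-referencing step is the easiest part to picture in code. The sketch below assumes a feed of simple dictionaries with `type` and `value` keys. That format is hypothetical, and real feeds carry far more context. The addresses come from ranges reserved for documentation.

```python
import ipaddress


def match_indicators(feed, observed_ips, observed_domains):
    """Return only the feed entries that appear in our own logs."""
    ips = {ipaddress.ip_address(ip) for ip in observed_ips}
    domains = {d.lower().rstrip(".") for d in observed_domains}
    hits = []
    for indicator in feed:
        kind, value = indicator["type"], indicator["value"]
        if kind == "ip" and ipaddress.ip_address(value) in ips:
            hits.append(indicator)
        elif kind == "cidr" and any(ip in ipaddress.ip_network(value) for ip in ips):
            hits.append(indicator)
        elif kind == "domain" and value.lower() in domains:
            hits.append(indicator)
    return hits


feed = [
    {"type": "ip", "value": "198.51.100.7"},
    {"type": "cidr", "value": "203.0.113.0/24"},
    {"type": "domain", "value": "malicious.example"},
    {"type": "ip", "value": "192.0.2.99"},      # never seen in our logs
]
relevant = match_indicators(
    feed,
    observed_ips=["198.51.100.7", "203.0.113.45", "10.0.0.5"],
    observed_domains=["intranet.example", "malicious.example"],
)
print(len(relevant), "of", len(feed), "indicators are relevant to this environment")
```

Matching like this is plain filtering. The machine learning in commercial platforms sits on top of it, ranking which of the matches deserve attention first.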

Natural Language Processing tools add to this by scanning the open web and security research publications for discussions of new vulnerabilities or planned attacks against specific industries, giving organisations early warning that can inform their defensive posture. Treating cybersecurity as part of a broader digital strategy means these insights feed into decisions about infrastructure, data governance, and digital growth rather than being handled in isolation.

Proactive Defence Rather Than Reactive Response

Ciaran Connolly, founder of ProfileTree, makes this point directly when working with clients: “Most businesses we speak with are still in reactive mode. They update their software when they get a breach notification, not before. AI and cybersecurity tools now make it possible for small and medium businesses to operate with the same threat awareness that large enterprises paid specialist teams to maintain. The entry cost has fallen dramatically.”

Predictive capabilities are not foolproof. They depend on high-quality training data and regular model updates. A system trained exclusively on historical attack patterns will miss entirely novel techniques. AI and cybersecurity strategies should combine predictive analytics with real-time monitoring rather than treating prediction as a substitute for it.

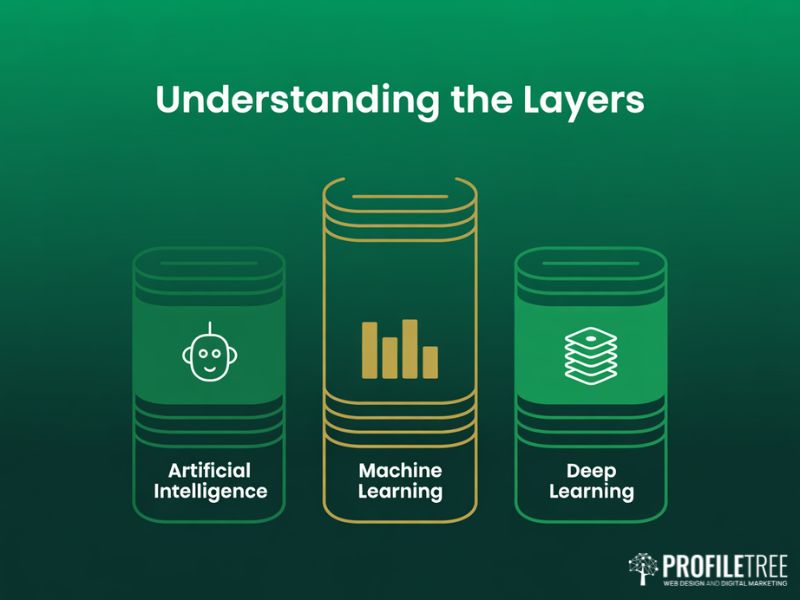

Machine Learning and Deep Learning for Threat Detection

Machine learning and deep learning are the technical engines behind most AI and cybersecurity applications on the market today. Understanding the difference matters when evaluating tools or working with a technology partner on a security strategy. For teams building this knowledge internally, our digital training programmes cover AI literacy for non-technical staff alongside more advanced technical pathways.

| Technology | How It Works | Cybersecurity Application |
|---|---|---|
| Artificial Intelligence | Simulates human reasoning to make decisions from data | Overarching framework for all intelligent security tools |
| Machine Learning (ML) | Algorithms learn from historical data to identify patterns | Email spam filters, anomaly detection, fraud scoring |
| Deep Learning (DL) | Multi-layered neural networks process complex unstructured data | Malware binary analysis, network traffic classification |
| Natural Language Processing (NLP) | AI that understands and analyses human language | Phishing detection, threat intelligence scanning |

Supervised and Unsupervised Learning in Security

Supervised machine learning trains on labelled datasets: examples of known malware versus clean files, or fraudulent transactions versus legitimate ones. Support Vector Machines and decision tree algorithms are common here and perform well when large volumes of historical incident data exist.
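As a rough illustration of learning from labelled examples, the sketch below trains a decision stump, which is a decision tree with a single split. The two features (file entropy and a count of suspicious imports) and all the sample values are invented for the example. Production systems use far richer features and established libraries.

```python
def train_stump(samples, labels):
    """Find the (feature, threshold) split with the fewest training errors.

    Assumes a higher feature value means 'more likely malicious'.
    """
    best = None
    for feature in range(len(samples[0])):
        for threshold in sorted({s[feature] for s in samples}):
            predictions = [s[feature] > threshold for s in samples]
            errors = sum(p != y for p, y in zip(predictions, labels))
            if best is None or errors < best[0]:
                best = (errors, feature, threshold)
    _, feature, threshold = best
    return feature, threshold


def predict(stump, sample):
    """Return True if the sample falls on the 'malicious' side of the split."""
    feature, threshold = stump
    return sample[feature] > threshold


# Hypothetical labelled data: (file entropy, suspicious import count)
samples = [(4.1, 0), (5.0, 1), (4.6, 0), (5.3, 1),   # clean files
           (7.2, 4), (7.8, 6), (6.9, 3), (7.5, 5)]   # known malware
labels = [False] * 4 + [True] * 4

stump = train_stump(samples, labels)
print(predict(stump, (7.0, 2)), predict(stump, (4.8, 0)))
```

The model is only as good as its labels. That is why this approach needs the large volumes of historical incident data mentioned above.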

Unsupervised learning takes a different approach. The algorithm receives no labels and must find structure in the data itself. For AI and cybersecurity purposes, this means the system identifies clusters of similar behaviour and flags outliers. It is particularly valuable for detecting zero-day exploits, attacks that target previously unknown vulnerabilities and for which no labelled training data yet exists.
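Outlier flagging without labels can be shown with one simple statistical method: the modified z-score based on the median absolute deviation. This is a stand-in chosen for brevity, not the clustering approach a commercial platform would necessarily use. The 3.5 threshold is a common rule of thumb, and the upload figures are invented.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return the indexes of values whose modified z-score exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread in the data, so nothing stands out
    return [i for i, v in enumerate(values)
            if 0.6745 * abs(v - med) / mad > threshold]


# Hypothetical daily upload volume in MB for one workstation
daily_upload_mb = [120, 131, 118, 125, 122, 127, 119, 4800, 124]
print(mad_outliers(daily_upload_mb))  # prints [7]
```

Nothing told the function what an attack looks like. The eighth day stands out only because it is far from the rest, which is also why unsupervised methods need a human to decide whether an outlier is malicious or merely unusual.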

Deep Learning and Anomaly Detection

Deep learning models, particularly autoencoders and recurrent neural networks, have proven especially effective in AI and cybersecurity anomaly detection. An autoencoder is trained to reconstruct normal network traffic. When it encounters traffic it cannot reconstruct accurately, the reconstruction error spikes and triggers an alert. This approach can catch sophisticated attacks that deliberately mimic legitimate traffic patterns, because even small deviations from the learned baseline show up as higher reconstruction error.
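The reconstruction-error idea can be demonstrated without a deep learning framework. The sketch below is a deliberate simplification. It fits a one-unit linear encoder and decoder, which behaves like a projection onto the first principal component, whereas a real autoencoder is a nonlinear neural network. The traffic figures and function names are hypothetical.

```python
import math


def fit_linear_autoencoder(rows, iterations=50):
    """Fit a one-unit linear encoder/decoder (a stand-in for a real autoencoder)."""
    n, dims = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(dims)]
    centred = [[r[j] - mean[j] for j in range(dims)] for r in rows]
    w = [1.0] * dims
    for _ in range(iterations):
        # Power iteration: w <- X^T (X w), then normalise to unit length.
        proj = [sum(c[j] * w[j] for j in range(dims)) for c in centred]
        w = [sum(proj[i] * centred[i][j] for i in range(n)) for j in range(dims)]
        norm = math.sqrt(sum(x * x for x in w)) or 1.0
        w = [x / norm for x in w]
    return mean, w


def reconstruction_error(row, mean, w):
    """Encode a row to one number, decode it, and measure what was lost."""
    centred = [row[j] - mean[j] for j in range(len(row))]
    code = sum(c * x for c, x in zip(centred, w))   # encode
    rebuilt = [code * x for x in w]                 # decode
    return math.sqrt(sum((c - r) ** 2 for c, r in zip(centred, rebuilt)))


# Hypothetical normal traffic: (requests per minute, kilobytes per minute)
normal = [(10, 20), (20, 41), (30, 59), (40, 80), (50, 101)]
mean, w = fit_linear_autoencoder(normal)

for row in [(35, 70), (30, 300)]:   # ordinary traffic, then few requests with heavy data
    print(row, round(reconstruction_error(row, mean, w), 2))
```

The second row breaks the learned relationship between requests and data volume, so its reconstruction error is far larger. An alert threshold would normally be set from the errors seen on known-normal traffic.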

The challenge with deep learning in security contexts is that these models can be opaque in their reasoning. Security teams need to understand why an alert was triggered, not just that it was. Explainable AI frameworks are increasingly built into commercial AI and cybersecurity platforms to address this. According to the UK’s National Cyber Security Centre, transparency is a core consideration when procuring AI-powered security tools for regulated environments. The NCSC’s guidance on AI and cybersecurity is worth reviewing for any organisation making procurement decisions in this space.

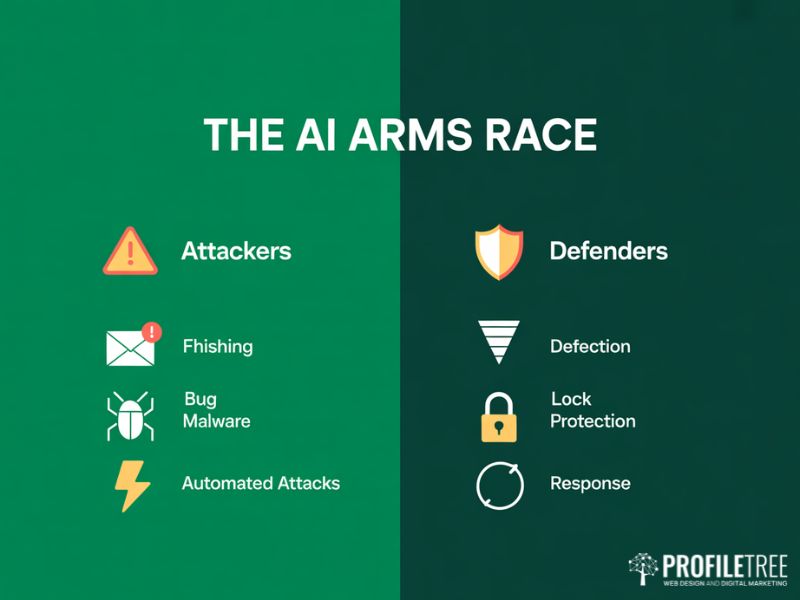

Offensive AI: How Attackers Are Using the Same Technology

The same advances that make AI and cybersecurity defence more effective are also being exploited by attackers. AI-powered offensive tools are already in active use, and their capabilities are accelerating.

AI-Generated Phishing and Social Engineering

Traditional phishing campaigns were relatively easy to identify. Poorly written emails, generic greetings, and obvious impersonation attempts were common tells. Generative AI has changed this. Attackers now produce highly personalised phishing emails at scale, tailored to the recipient’s name, role, and recent activity scraped from public sources. Voice cloning tools allow attackers to impersonate senior executives on audio calls to authorise fraudulent transfers, a form of voice phishing known as vishing.

This has implications beyond security. The growing sophistication of AI-generated content means businesses need both robust content marketing strategies built on authentic expertise, and security awareness training that reflects the new reality. The old advice of checking for spelling mistakes is no longer sufficient. Employees need to understand that a convincing, personalised message is not evidence of legitimacy.

Adversarial Attacks on AI Security Systems

More technically sophisticated attackers now target AI systems themselves. Adversarial machine learning involves making small, deliberate modifications to malware or network traffic to fool detection models. A file that would normally be classified as malicious can be subtly altered so that the AI model classifies it as benign, with changes too small for human reviewers to notice.
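A toy example shows why small changes can be enough. The sketch below uses a hypothetical linear detector with invented weights and nudges each input feature slightly against the sign of its weight until the score drops below the detection threshold. Real detectors and real evasion techniques are far more complex; the code only illustrates the principle.

```python
def malware_score(weights, bias, features):
    """Linear detector: a score above zero means 'classified as malicious'."""
    return bias + sum(w * x for w, x in zip(weights, features))


def evade(weights, bias, features, step=0.05, max_steps=100):
    """Shift each feature against the sign of its weight until the score is negative."""
    x = list(features)
    for _ in range(max_steps):
        if malware_score(weights, bias, x) < 0:
            break
        x = [xi - step * (1 if w > 0 else -1 if w < 0 else 0)
             for xi, w in zip(x, weights)]
    return x


# Hypothetical detector and sample; none of these values come from a real product.
weights, bias = [2.0, 1.5, -1.0], -1.0
original = [0.6, 0.4, 0.2]
modified = evade(weights, bias, original)

print(malware_score(weights, bias, original) > 0)   # True: detected
print(malware_score(weights, bias, modified) > 0)   # False: evades the detector
print(max(abs(a - b) for a, b in zip(original, modified)) < 0.2)  # True: small change
```

A single model with a single decision boundary is easy to probe in this way. Combining several different models with behavioural analysis means an attacker has to fool all of them at once.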

Model poisoning is a related threat. If an attacker can inject corrupted data into a system’s training pipeline, they can degrade the model’s performance over time or create specific blind spots. AI and cybersecurity platforms in high-stakes environments need robust data provenance controls and regular model audits to defend against this.

Automated Attack Campaigns

AI allows attackers to run vulnerability scanning, exploit attempts, and credential stuffing campaigns at a speed that was previously only available to well-resourced state actors. Small criminal groups can now automate the entire attack chain from reconnaissance to data exfiltration. For businesses without mature AI and cybersecurity defences, this represents a genuine step-change in risk.

| Attacker Technique | How It Works | Defensive AI Countermeasure |
|---|---|---|
| AI-generated phishing | Personalised emails created at scale using language models | NLP-based email analysis, behavioural anomaly detection |
| Voice cloning (vishing) | Deepfake audio impersonates trusted individuals | Out-of-band confirmation workflows, voice biometric verification |
| Adversarial malware | Files modified to evade ML detection models | Ensemble detection models, behavioural analysis |
| Automated vulnerability scanning | AI tools identify weaknesses faster than patches arrive | Continuous vulnerability management, AI-prioritised patching |
| Credential stuffing | AI automates testing of compromised credentials | Behavioural authentication, MFA enforcement |
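Taking the credential stuffing row as an example, one basic behavioural signal is a single source failing to log in across many different accounts. The sketch below assumes login events arrive as dictionaries with `ip`, `user`, and `success` keys. That format and both thresholds are hypothetical.

```python
from collections import defaultdict


def suspicious_sources(login_events, min_failures=10, min_distinct_users=5):
    """Flag source IPs whose failed logins span many different usernames."""
    failures = defaultdict(int)
    users = defaultdict(set)
    for event in login_events:
        if not event["success"]:
            failures[event["ip"]] += 1
            users[event["ip"]].add(event["user"])
    return sorted(ip for ip in failures
                  if failures[ip] >= min_failures
                  and len(users[ip]) >= min_distinct_users)


events = [{"ip": "203.0.113.9", "user": f"user{n}", "success": False} for n in range(12)]
events += [{"ip": "198.51.100.2", "user": "alice", "success": False}] * 3
events += [{"ip": "198.51.100.2", "user": "alice", "success": True}]

print(suspicious_sources(events))  # prints ['203.0.113.9']
```

The second address belongs to someone who mistyped a password three times. Counting distinct usernames rather than raw failures keeps that ordinary behaviour out of the results.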

What UK Businesses Should Do Now

Translating the theory of AI and cybersecurity into practical action is where most businesses struggle. The technology is advancing quickly, vendor claims are often exaggerated, and the skills gap in the UK security sector means in-house expertise is scarce.

Assess Your Current Position

Before selecting any AI and cybersecurity tool, map what you already have. Most businesses running Microsoft 365 or Google Workspace have access to AI-powered security features they are not using. Microsoft Defender for Business, for example, provides endpoint detection with machine learning and is included in some Microsoft 365 plans, such as Business Premium, at no additional cost. Start with what you already pay for before procuring new platforms.

Establish a baseline for your key metrics: mean time to detect and mean time to respond. Businesses with strong digital visibility, supported by effective SEO and organic search strategies, carry greater reputational exposure if a breach is publicly reported. This raises the stakes in the cost-benefit calculation for security investment and should inform how urgently leadership treats AI and cybersecurity as a board-level issue.
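Both baseline metrics are simple averages once incident timestamps are recorded consistently. The sketch below assumes each incident has `started`, `detected`, and `resolved` times. Those field names and the sample incidents are invented, and some teams measure response from different start and end points.

```python
from datetime import datetime
from statistics import mean


def mean_hours(incidents, start_key, end_key):
    """Average gap in hours between two timestamps across all incidents."""
    return mean((i[end_key] - i[start_key]).total_seconds() / 3600
                for i in incidents)


# Hypothetical incident log
incidents = [
    {"started": datetime(2024, 1, 1, 0, 0),
     "detected": datetime(2024, 1, 2, 0, 0),
     "resolved": datetime(2024, 1, 2, 6, 0)},
    {"started": datetime(2024, 2, 1, 8, 0),
     "detected": datetime(2024, 2, 1, 20, 0),
     "resolved": datetime(2024, 2, 2, 6, 0)},
]

mttd = mean_hours(incidents, "started", "detected")    # mean time to detect
mttr = mean_hours(incidents, "detected", "resolved")   # mean time to respond
print(f"MTTD: {mttd} hours, MTTR: {mttr} hours")       # MTTD: 18.0 hours, MTTR: 8.0 hours
```

Tracking these two numbers before and after any new tool is introduced gives a straightforward way to test vendor claims against your own data.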

Prioritise Your Highest-Risk Areas

For most small and medium businesses in the UK, the highest-probability risks are phishing and social engineering, ransomware delivered via email or unpatched software, credential compromise through password reuse, and supply chain attacks via third-party software. AI and cybersecurity investments should address these in order, not chase sophisticated nation-state threats unlikely to target your organisation.

Regulatory Context for UK Businesses

UK businesses operating under UK GDPR have a legal obligation to implement appropriate technical and organisational measures to protect personal data. The ICO has published guidance indicating that AI-powered monitoring tools are acceptable under UK GDPR provided they are proportionate, transparent, and subject to human oversight. These obligations start at the infrastructure level. Our website development services include security best practice as a standard part of build specifications, not an optional add-on.

The NCSC Cyber Essentials scheme provides a practical baseline framework for UK businesses. Achieving certification does not require AI-powered tools, but it establishes the foundations on which AI and cybersecurity investments can build effectively.

Building Internal AI Literacy

ProfileTree delivers AI training for businesses and SMEs across the UK and Ireland. One consistent finding is that organisations which invest in broad AI and digital skills training make better decisions about AI and cybersecurity tools. When staff understand how machine learning models work at a basic level, they are less likely to over-trust automated alerts and more likely to notice when something unusual is happening that the system has missed.

Security awareness training needs to be updated to reflect AI-generated threats. Annual e-learning modules written before generative AI became widely available are not adequate preparation for the current threat environment.

FAQs

What is the difference between traditional cybersecurity and AI-powered cybersecurity?

Traditional cybersecurity blocks known threats based on pre-written rules and signatures. AI-powered security tools learn from data, identifying novel threats based on unusual patterns even when a specific attack technique has never been seen before.

Does AI replace cybersecurity professionals?

No. AI handles volume and speed tasks while human professionals manage context, judgement, and escalation. The technology makes existing teams more productive rather than removing the need for human expertise.

What are the main risks of using AI in cybersecurity?

The main risks are over-reliance on systems that can be fooled by adversarial techniques, alert fatigue from too many low-quality alerts, data privacy risks when AI tools process sensitive information, and difficulty explaining why an AI system made a particular decision.

How should a small UK business start with AI cybersecurity?

Start with the security tools already included in your existing software licences. Ensure your website is properly maintained with our WordPress management and security service, then pursue Cyber Essentials certification before evaluating specialist AI and cybersecurity platforms.

How does AI help with phishing detection?

AI-powered phishing detection analyses email content, sender metadata, sending patterns, and writing style simultaneously. NLP-based systems can often flag AI-generated phishing content even when emails are polished and personalised, because such messages may still share detectable stylistic patterns. No filter catches everything, so detection works best alongside updated staff training.

What regulations apply to AI use in cybersecurity in the UK?

UK GDPR requires AI tools processing personal data to be lawful, fair, and transparent, with human review available for automated decisions. The NCSC publishes guidance on AI use in security contexts, and businesses in financial services, healthcare, and critical infrastructure face additional sector-specific requirements.