EU AI Act Impact on International Businesses: Compliance

The EU AI Act represents the most consequential piece of artificial intelligence legislation anywhere in the world. Approved by the European Parliament in March 2024 and in force since August 2024, with obligations phasing in over the following three years, the regulation does not apply only to EU-based companies. Any business that deploys AI within the European market, including organisations headquartered in the UK, the United States, or beyond, falls within its scope. For international businesses, the EU AI Act is not a distant regulatory concern. It is an operational reality that demands attention now.

At ProfileTree, the Belfast-based web design, digital marketing, and AI transformation agency, we have spent considerable time working with SMEs and larger organisations to understand what the EU AI Act means in practice. This guide draws on that experience alongside the published regulatory framework to give you a clear, honest picture of what the EU AI Act requires, who it affects, and what you should do about it.

The scale of the EU AI Act is worth stating plainly. Fines for non-compliance with provisions covering prohibited AI systems can reach 35 million euros or 7% of global annual turnover, whichever is higher. For high-risk AI systems, the figure is up to 15 million euros or 3% of global turnover. These are not theoretical penalties. Enforcement timelines are already running, and the obligations for most high-risk AI systems are scheduled to apply from August 2026.

The EU AI Act Explained

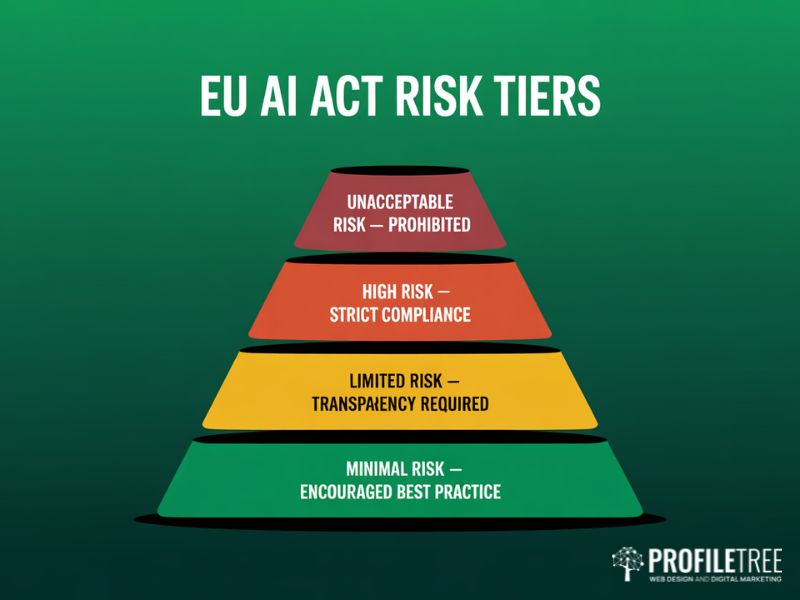

Before examining what the EU AI Act demands of your organisation, it is worth understanding what the legislation is actually trying to achieve. The EU AI Act is built around a risk-based framework, the first of its kind at legislative scale. Rather than applying uniform rules to every AI application, it creates a tiered structure that scales compliance obligations to the potential harm an AI system could cause. Understanding this structure is the foundation for any compliance programme.

Objective and Scope

The EU AI Act aims to protect the safety and fundamental rights of individuals and businesses whilst encouraging responsible innovation. It applies to providers of AI systems placed on the EU market, deployers (the Act's term for users) of AI systems within the EU, and any provider or deployer located outside the EU whose AI outputs are used inside the EU. The extraterritorial reach is deliberate and mirrors the approach the GDPR took with personal data. Building a coherent AI and digital strategy that accounts for these obligations from the outset is far more efficient than trying to retrofit compliance after deployment.

The Four-Tier Risk Classification

The EU AI Act divides AI applications into four risk categories, summarised below.

| Risk Level | Description | Examples | Compliance Obligation |
|---|---|---|---|
| Unacceptable Risk | Banned outright under the EU AI Act | Social scoring, manipulative subliminal AI, real-time biometric ID in public spaces | Prohibited. No deployment permitted. |
| High Risk | Significant potential harm to health, safety, or fundamental rights | AI in medical devices, CV-screening tools, credit scoring, law enforcement | Mandatory risk management, data governance, human oversight, EU database registration |
| Limited Risk | Specific transparency obligations apply | Chatbots, deepfakes, AI-generated content | Must disclose AI nature to users |
| Minimal Risk | Most AI applications | Spam filters, recommendation systems | No specific obligations, but best-practice guidelines encouraged |

Core Provisions and Principles

At the heart of the EU AI Act lie requirements centred on transparency, risk assessment, and data governance. Businesses must be able to demonstrate, through testing and documentation proportionate to the risk level, that their AI systems are trustworthy. This applies to a wide range of tools, from automated marketing platforms to customer-facing AI chatbots deployed on websites. The Act also provides for human oversight and the avoidance of opaque decision-making processes, upholding individuals’ rights to non-discrimination and privacy. As ProfileTree’s Digital Strategist Stephen McClelland has noted: “Recognising an AI system’s category under the EU AI Act is not just a regulatory box-tick. It is a commitment to responsible technology deployment that your clients and customers will increasingly expect.”

AI Governance and Compliance Structures

Understanding what the EU AI Act requires is one thing. Building the internal structures to deliver compliance on an ongoing basis is quite another. For international businesses, this means establishing clear governance frameworks that define who owns AI risk, how it is assessed, and how decisions about AI deployment are documented and reviewed. Getting this right early avoids the considerably greater cost of fixing gaps under enforcement pressure.

The Role of National Competent Authorities

National Competent Authorities (NCAs) operate at the country level, ensuring organisations comply with the EU AI Act within their jurisdiction. Each EU member state is required to designate at least one NCA, which acts as a supervisory body, offering guidance, enforcing the EU AI Act’s provisions, and applying sanctions where companies fall short. For international businesses entering the EU market with AI-enabled products or services, engaging with the relevant NCA early, particularly for high-risk AI applications, is strongly advisable.

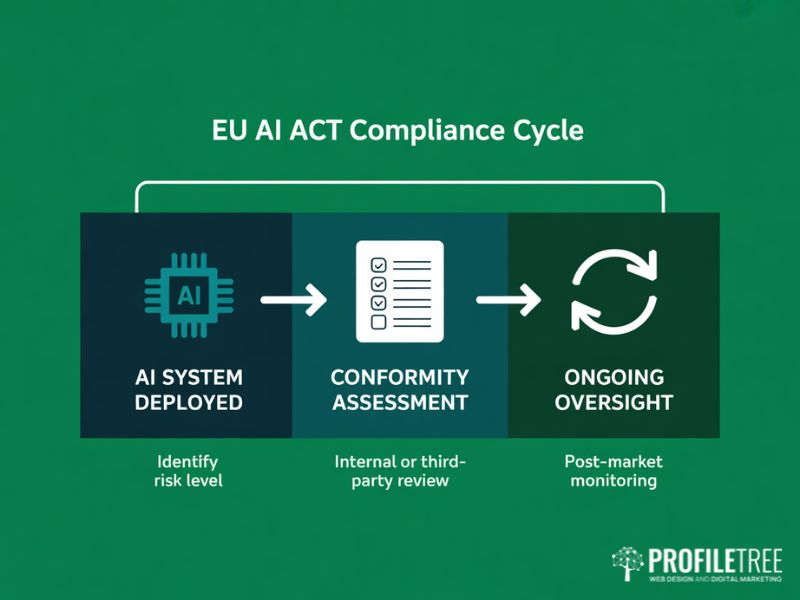

Conformity Assessment and Ongoing Oversight

Conformity assessment involves a structured review process where AI systems are evaluated against the EU’s regulatory standards before they reach the market. Depending on the risk level of the AI application, this may require internal checks supported by documentation or third-party assessment by a notified body. For high-risk AI systems, post-market monitoring is also required. The EU AI Act does not treat compliance as a one-time event but as an ongoing obligation that continues throughout the system’s deployment lifecycle.

Establishing a dedicated AI governance function within your organisation, whether that is a formal AI office, an AI Ethics Committee, or clearly defined responsibilities within an existing risk or compliance team, creates the backbone for meeting the EU AI Act’s requirements. This function should maintain a register of AI systems in use, including any AI marketing and automation tools, oversee risk assessments, manage documentation obligations, and liaise with the relevant NCA where needed.

Alignment with GDPR and DORA

The EU AI Act does not operate in isolation. It was designed to work alongside the General Data Protection Regulation (GDPR), which governs how personal data is processed, and the Digital Operational Resilience Act (DORA), which applies specifically to financial sector entities. For businesses already maintaining GDPR compliance programmes, several of the EU AI Act’s data governance obligations will feel familiar: transparency requirements, data minimisation, accuracy, and the need for detailed documentation and traceability. Organisations using generative AI tools to produce marketing content must also ensure that AI-generated material is clearly identifiable where the Act’s transparency provisions require it.

The key difference is that the EU AI Act adds a layer of obligations specifically around how AI models are trained, tested, and monitored, not just how data is stored and used. Key points for organisations operating across both frameworks:

- AI systems handling personal data must comply with GDPR principles throughout the data lifecycle, including training data, inference data, and logged outputs.

- Financial entities using AI in decision-making processes must also ensure operational resilience as required under DORA, including scenario testing for AI-related failures.

- Documentation produced for GDPR compliance, particularly Data Protection Impact Assessments (DPIAs), can often be adapted to meet the EU AI Act’s risk management documentation requirements.

High-Risk AI Systems and Compliance

The high-risk category under the EU AI Act carries the most demanding compliance obligations, and it is broader than many businesses initially expect. If your organisation uses AI in any of the sectors listed below, you will need to treat compliance planning as a priority rather than a future consideration. The consequences of misclassifying a system as lower-risk are significant.

Identifying High-Risk Applications

According to the EU AI Act, high-risk AI applications are those used in critical sectors where failures could cause substantial harm to individuals’ rights and safety. The EU AI Act lists eight categories of high-risk AI application in Annex III, covering biometric identification and categorisation, management of critical infrastructure, education and vocational training, employment decisions, access to essential public and private services, law enforcement, migration and border control, and administration of justice.

For businesses in healthcare, financial services, HR technology, and the public sector, the high-risk provisions of the EU AI Act are directly relevant. Even digital marketing tools that use AI to personalise content or make decisions about individuals at scale should be assessed carefully against the limited and high-risk criteria. The European Parliament’s published guidance on the EU AI Act sets out the full scope of obligations for each category.

| Sector | AI Use Cases Likely Classified as High-Risk | Key Obligation |
|---|---|---|
| Healthcare | Diagnostic AI, clinical decision support, AI in medical devices | Full risk management system, human oversight, registration |
| Financial Services | Credit scoring and creditworthiness assessment, risk assessment and pricing for life and health insurance | Transparency to affected individuals, data governance documentation |
| HR and Recruitment | CV screening, candidate ranking, performance evaluation tools | Human oversight mandatory, bias testing required |
| Law Enforcement | Risk assessment of individuals, evaluation of evidence reliability, profiling in criminal investigations | Full high-risk obligations; prior judicial or independent authorisation for real-time biometric identification |
| Education | Automated examination grading, student monitoring systems | Transparency obligations, human review required |

Safety and Risk Management for High-Risk AI

Once an AI system is identified as high-risk under the EU AI Act, the compliance obligations are substantial. Businesses must implement a risk management system that is documented, regularly reviewed, and proportionate to the risks identified. They must also demonstrate that the AI system has been tested and validated for its specific use case before deployment. Ongoing monitoring for bias, errors, and performance degradation is required throughout the deployment period.

Documentation requirements under the EU AI Act for high-risk systems include technical documentation explaining the system’s purpose and design, information about training methodologies and datasets, performance metrics and known limitations, instructions for use and human oversight arrangements, and records of the conformity assessment process. This documentation must be kept current and made available to national authorities on request.
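The documentation items above lend themselves to a simple completeness check. The following minimal Python sketch assumes a technical file is stored as a dictionary of section texts and flags any section that is missing or blank. The section keys such as `purpose_and_design` and the function `missing_documentation` are hypothetical paraphrases of the list above, not the Act's own structure, which is set out in Annex IV.

```python
# Hypothetical section keys paraphrasing the documentation list above.
# The authoritative list of technical documentation is Annex IV of the Act.
REQUIRED_DOCS = (
    "purpose_and_design",
    "training_methodology_and_datasets",
    "performance_metrics_and_limitations",
    "instructions_and_human_oversight",
    "conformity_assessment_records",
)


def missing_documentation(technical_file: dict[str, str]) -> list[str]:
    """Return the required sections that are absent or blank in a technical file."""
    return [
        section
        for section in REQUIRED_DOCS
        if not technical_file.get(section, "").strip()
    ]


if __name__ == "__main__":
    # Hypothetical, partially completed file for a CV screening tool.
    cv_screening_file = {
        "purpose_and_design": "Ranks applicants for review by a recruiter.",
        "training_methodology_and_datasets": "Describes training data sources.",
        "performance_metrics_and_limitations": "",
    }
    print(missing_documentation(cv_screening_file))
    # ['performance_metrics_and_limitations', 'instructions_and_human_oversight', 'conformity_assessment_records']
```

Running a check like this at each review cycle helps keep the file current, although it only confirms that a section exists, not that its content meets the standard a national authority would expect.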

“In practice, the EU AI Act’s documentation requirements for high-risk AI are more demanding than most businesses expect. The work required to produce a compliant technical file for a CV screening tool or credit scoring model is substantial. Start early and treat it as an ongoing process, not a project with an end date.” Ciaran Connolly, Founder, ProfileTree

Prohibited Practices Under the EU AI Act

The EU AI Act outright bans certain AI applications regardless of the safeguards applied. Banned applications include AI systems that exploit psychological vulnerabilities to manipulate individuals’ decisions, social scoring systems, real-time remote biometric identification in public spaces for law enforcement (with very narrow exceptions subject to judicial authorisation), and AI systems that infer sensitive attributes such as political opinion or sexual orientation from biometric data. The restrictions on biometric identification are particularly relevant for retail, security, and hospitality businesses considering any form of customer recognition technology.

How UK and Irish Businesses Are Affected

For businesses based in Northern Ireland, the Republic of Ireland, or elsewhere in the UK, the EU AI Act’s reach is more immediate than it might initially appear. Ireland is an EU member state, which means businesses operating there are directly subject to the EU AI Act. Northern Ireland and the rest of the UK are not EU member states, but any UK business selling AI-enabled products or services into the EU market, or whose AI outputs affect EU residents, falls within the regulation’s extraterritorial scope. This is not a grey area.

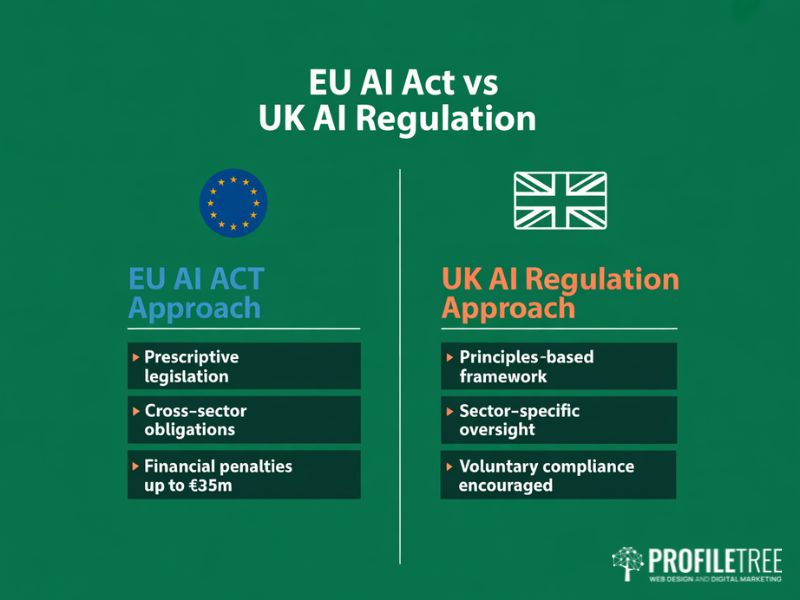

The UK Regulatory Divergence

Unlike the EU’s prescriptive, legislation-based approach, the UK Government has taken a principles-based, sector-specific stance on AI regulation. The UK’s approach delegates primary oversight responsibility to existing regulators such as the ICO, the FCA, and the CQC, each applying AI governance principles within their own sector. There is no single AI Act in the UK equivalent to the EU’s legislation.

For businesses operating across both markets, this creates practical complexity. A company using AI in employment decisions must satisfy the EU AI Act’s high-risk compliance requirements for its EU operations whilst also meeting the ICO’s published guidance on AI and data protection for its UK operations. Investing in digital training that covers both frameworks helps teams understand their obligations without confusion about which rules apply where. The pragmatic approach is to build compliance programmes to EU AI Act standards, which will then exceed UK requirements in most areas.

The Brussels Effect in Practice

The Brussels Effect describes how EU regulations set de facto global standards, because multinational businesses find it more efficient to apply the strictest regulatory standard across all their operations rather than managing separate compliance programmes by jurisdiction. The EU AI Act is expected to replicate this pattern. Non-EU businesses, including UK firms, are already finding that enterprise clients and procurement teams request EU AI Act compliance documentation as part of supplier due diligence processes, even where the regulation does not technically apply directly. Building brand trust through credible social media marketing and transparent digital communications supports a business’s positioning as an AI-responsible organisation, which increasingly matters to enterprise buyers.

ProfileTree works with businesses across Northern Ireland, Ireland, and the UK on digital strategy and AI transformation. One consistent theme we see is that early engagement with the EU AI Act framework, even where it is not yet legally mandatory, provides a competitive advantage. Clients who can demonstrate AI governance maturity are winning contracts and building trust faster than those who are waiting for regulatory pressure to force the issue.

Practical Implications for Digital Agency Clients

For businesses working with digital agencies like ProfileTree on web development projects, AI-powered chatbots, marketing automation, and content generation tools, the EU AI Act has specific practical implications. AI chatbots deployed to interact with customers fall within the limited-risk category and require clear disclosure that users are interacting with an AI system. AI tools used to make or significantly inform decisions about individuals, such as automated lead scoring that determines who receives financial offers or credit terms, may fall into the high-risk category.
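For the chatbot case, one way to make the disclosure hard to miss is to attach it to the first reply of every conversation rather than relying on a privacy notice. The Python sketch below assumes a simple session object; the names `ChatSession` and `with_disclosure`, and the wording of `AI_DISCLOSURE`, are hypothetical, and whether one disclosure per session is sufficient for your use case is an assumption to confirm.

```python
from dataclasses import dataclass

# Hypothetical disclosure wording; adapt it to your own tone of voice.
AI_DISCLOSURE = "You are chatting with an AI assistant, not a person."


@dataclass
class ChatSession:
    """Tracks whether the AI disclosure has been shown in this conversation."""

    disclosed: bool = False


def with_disclosure(session: ChatSession, reply: str) -> str:
    """Prefix the first reply of a session with the AI disclosure."""
    if not session.disclosed:
        session.disclosed = True
        return f"{AI_DISCLOSURE}\n\n{reply}"
    return reply


if __name__ == "__main__":
    session = ChatSession()
    print(with_disclosure(session, "How can I help you today?"))
    print(with_disclosure(session, "Our office is open from 9am to 5pm."))
```

Storing the flag on the session keeps the disclosure logic in one place; a visitor who starts a new session sees the notice again.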

If your business uses generative AI in video marketing and production workflows to create or personalise content, the EU AI Act’s transparency requirements for AI-generated material are directly relevant. Synthetic content such as deepfakes must be clearly disclosed as AI-generated, a requirement that affects creative agencies, broadcasters, and marketing teams alike.
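A minimal Python sketch of labelling generated content, assuming text output and a JSON metadata record stored alongside it. The label wording, the metadata keys, and the function `label_generated_content` are hypothetical; they do not follow a recognised provenance standard, and images, audio, and video would need format-appropriate marking instead.

```python
import json
from datetime import datetime, timezone

# Hypothetical label wording and metadata keys, not a provenance standard.
VISIBLE_LABEL = "This content was generated with AI."


def label_generated_content(text: str, tool: str) -> tuple[str, str]:
    """Return the text with a visible AI label, plus a JSON metadata record."""
    labelled_text = f"{text}\n\n[{VISIBLE_LABEL}]"
    metadata = json.dumps(
        {
            "ai_generated": True,
            "tool": tool,
            "labelled_at": datetime.now(timezone.utc).isoformat(),
        }
    )
    return labelled_text, metadata


if __name__ == "__main__":
    text, meta = label_generated_content("Spring offers are here.", "example-model")
    print(text)
    print(meta)
```

Keeping a visible label and a machine-readable record together means the disclosure survives whether the content is read by a person or processed by another system.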

Preparing for Compliance: A Practical Roadmap

Preparing for EU AI Act compliance is not a single project but an ongoing programme of work. Most provisions apply from August 2026 and the remainder from August 2027, but several obligations are already in effect. Organisations that delay risk being caught short as enforcement attention increases year by year. Below is a practical outline of the steps international businesses should prioritise.

| Timeline | Obligation | Who It Affects |
|---|---|---|
| August 2024 | EU AI Act enters into force | All organisations |
| February 2025 | Prohibited AI applications banned | Any business deploying AI in the EU market |
| August 2025 | GPAI model obligations and governance rules apply | General-purpose AI model providers |
| August 2026 | High-risk AI system obligations apply | Providers and deployers of high-risk AI |
| August 2027 | Full EU AI Act implementation complete, including high-risk AI embedded in products covered by EU product safety legislation | All organisations within scope |
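The timeline above can also be expressed as a date lookup, which is useful for flagging which obligations apply to a system on a given date. This Python sketch uses the specific application dates in the Act as adopted (Regulation (EU) 2024/1689) rather than just the months shown; `milestones_in_effect` is a hypothetical helper name, and the labels are taken from the table.

```python
from datetime import date

# Application dates set by Regulation (EU) 2024/1689 as adopted; check the
# current text, as later amendments could change them.
MILESTONES = [
    (date(2024, 8, 1), "EU AI Act enters into force"),
    (date(2025, 2, 2), "Prohibited AI applications banned"),
    (date(2025, 8, 2), "GPAI model obligations and governance rules apply"),
    (date(2026, 8, 2), "High-risk AI system obligations apply"),
    (date(2027, 8, 2), "Full EU AI Act implementation complete"),
]


def milestones_in_effect(on: date) -> list[str]:
    """Return the labels of every milestone reached on or before the given date."""
    return [label for start, label in MILESTONES if on >= start]


if __name__ == "__main__":
    for label in milestones_in_effect(date(2026, 1, 1)):
        print(label)
```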

Step 1: Complete an AI Systems Inventory

Before you can assess compliance, you need a comprehensive picture of every AI system your organisation uses or deploys, including third-party tools and AI features embedded within existing software platforms. This inventory should capture the system’s purpose, the data it processes, the decisions it informs or automates, and the individuals it affects. Many businesses are surprised by the scope of their AI footprint when they carry out this exercise properly.
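An inventory can live in a spreadsheet, but a structured record makes it easier to filter systems for the next step. The Python sketch below captures the attributes named above; the class `AISystemRecord`, its field names, the helper method, and the example systems are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One hypothetical inventory entry covering the attributes listed above."""

    name: str
    supplier: str  # "in-house" or the third-party vendor
    purpose: str
    data_processed: list[str] = field(default_factory=list)
    decisions_informed: list[str] = field(default_factory=list)
    affected_groups: list[str] = field(default_factory=list)

    def informs_decisions_about_people(self) -> bool:
        """True when the system informs decisions and affects identifiable groups."""
        return bool(self.decisions_informed and self.affected_groups)


if __name__ == "__main__":
    inventory = [
        AISystemRecord(
            "Website chatbot", "third-party vendor", "Answer customer questions",
            data_processed=["chat messages"], affected_groups=["customers"],
        ),
        AISystemRecord(
            "CV screener", "in-house", "Shortlist job applicants",
            data_processed=["CVs"], decisions_informed=["interview shortlist"],
            affected_groups=["job applicants"],
        ),
    ]
    print([r.name for r in inventory if r.informs_decisions_about_people()])
    # ['CV screener']
```

Systems that inform decisions about people are the natural first candidates for careful review against the high-risk criteria.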

Step 2: Apply the Risk Classification Framework

For each system identified, apply the EU AI Act’s four-tier risk classification. Most AI applications used by SMEs will fall into the minimal or limited risk categories. However, any system used in hiring, credit assessment, insurance underwriting, healthcare decision support, or public sector service delivery warrants careful assessment against the high-risk criteria in Annex III. When in doubt, apply the higher classification and document your reasoning.
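The order of checks in this step can be sketched as a triage function that returns the highest applicable tier and escalates to high-risk when the assessor is unsure. This is a minimal Python sketch under stated assumptions: the inputs (`prohibited_practice`, `annex_iii_area`, and so on) are hypothetical yes/no judgements a reviewer would supply, the area labels paraphrase Annex III, and it ignores the Act's exceptions for some Annex III uses and its separate rules for AI in regulated products. It is a first-pass filter for documenting reasoning, not a legal test.

```python
from enum import IntEnum
from typing import Optional


class RiskTier(IntEnum):
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    UNACCEPTABLE = 3


# Hypothetical short labels for the eight Annex III areas (paraphrased).
ANNEX_III_AREAS = {
    "biometrics", "critical_infrastructure", "education", "employment",
    "essential_services", "law_enforcement", "migration_border", "justice",
}


def triage(
    prohibited_practice: bool,
    annex_iii_area: Optional[str],
    interacts_with_people: bool,
    generates_synthetic_content: bool,
    unsure_if_high_risk: bool = False,
) -> RiskTier:
    """First-pass risk tier; escalates to HIGH when the assessor is unsure."""
    if prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if annex_iii_area is not None and annex_iii_area not in ANNEX_III_AREAS:
        raise ValueError(f"unknown Annex III area: {annex_iii_area}")
    if annex_iii_area or unsure_if_high_risk:
        return RiskTier.HIGH
    if interacts_with_people or generates_synthetic_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    print(triage(False, "employment", False, False).name)  # HIGH
    print(triage(False, None, True, False).name)  # LIMITED
```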

Step 3: Build Your Documentation Framework

For high-risk AI systems, begin building the technical documentation required by the EU AI Act. For limited-risk systems, implement the required transparency measures. This includes reviewing your public-facing communications: your website design and privacy notices should clearly reflect how AI is used in any customer interactions, which is itself a transparency obligation under the EU AI Act. Every business should establish a policy that governs how AI tools are approved for use, who is responsible for reviewing AI-related risks, and how concerns are escalated.

Step 4: Invest in Training and Awareness

The gap between policy and practice is most often a training problem. Employees who use AI tools daily, from marketing teams using generative AI for content production to HR teams using screening software, need to understand what responsible AI use looks like within the EU AI Act’s framework. Role-specific digital training programmes produce better outcomes than generic awareness sessions and give employees the confidence to raise concerns early. ProfileTree’s AI training programmes for SMEs, delivered through Future Business Academy, cover both the practical use of AI tools and the governance principles that underpin responsible deployment.

Step 5: Engage Your Digital Partners Early

If you are working with a digital agency or technology supplier on AI-powered projects, raise the EU AI Act in the initial scoping conversation. A responsible digital partner should be able to advise on how the systems they are building align with the EU AI Act’s requirements. This extends to ongoing obligations: website management and hosting arrangements should include provisions for monitoring AI-enabled features and keeping technical documentation current. Retrofitting compliance into a deployed AI system is significantly more expensive and disruptive than building it in from the outset.

Taking the Next Step

The EU AI Act is already in force, and its obligations are phasing in year by year. For international businesses, the question is not whether to engage with compliance but how to do so efficiently and in a way that builds genuine organisational capability rather than just satisfying a checklist.

The businesses that are best positioned are those that have treated the EU AI Act as an opportunity to build better governance, clearer AI policies, and more trustworthy products. ProfileTree supports businesses across Northern Ireland, Ireland, and the UK in developing AI marketing and automation strategies and AI transformation programmes grounded in responsible practice. Whether you are at the beginning of your AI governance journey or looking to strengthen an existing compliance programme, the most important step is to start the conversation.

To discuss how the EU AI Act affects your specific operations, or to explore our AI training and digital strategy services, get in touch with the ProfileTree team.

FAQs

What does the EU AI Act mean for non-European companies?

Any non-European company whose AI system is used within the EU, or whose AI outputs affect EU residents, must comply with the EU AI Act. The regulation’s extraterritorial scope makes compliance a condition of market access, not an optional consideration.

Which sectors are most affected by the high-risk classifications?

Healthcare, financial services, HR technology, law enforcement, and education face the most direct obligations. Any business using AI to make or inform decisions about individuals in these areas should treat high-risk compliance as an immediate priority.

How does the EU AI Act relate to the GDPR?

The EU AI Act and GDPR work alongside each other rather than replacing one another. The GDPR governs data handling; the EU AI Act adds obligations around how AI systems are designed, tested, and monitored. A solid GDPR compliance programme provides a useful foundation but does not substitute for EU AI Act compliance.

Does the EU AI Act apply to UK businesses after Brexit?

The EU AI Act does not apply to UK-only operations. However, any UK business deploying AI that affects EU residents, or selling AI-enabled products and services into the EU market, falls within its extraterritorial scope and must comply accordingly.

How do penalties for non-compliance work?

Fines scale with the severity of the breach. Prohibited AI applications carry penalties of up to 35 million euros or 7% of global annual turnover, whichever is higher. High-risk violations carry penalties of up to 15 million euros or 3% of global turnover. Supplying incorrect or misleading information to authorities carries a fine of up to 7.5 million euros or 1% of global turnover.
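Because each ceiling is the higher of a fixed amount and a share of turnover, the percentage becomes the binding figure for larger businesses. The Python sketch below computes the maximum possible fine per tier, assuming whole-euro turnover figures; the tier keys and `maximum_fine` are hypothetical names, and the incorrect-information tier uses the Act's 1% turnover alternative. It models ceilings only, not the amount a regulator would actually impose, and it does not model the Act's different treatment of SMEs and start-ups.

```python
# Ceilings per tier: (fixed cap in euros, percentage of global annual turnover).
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligations": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def maximum_fine(tier: str, global_turnover_eur: int) -> int:
    """Return the higher of the fixed cap and the turnover percentage for a tier."""
    fixed_cap, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large turnover figures.
    return max(fixed_cap, global_turnover_eur * percent // 100)


if __name__ == "__main__":
    print(maximum_fine("prohibited_practice", 200_000_000))    # 35000000
    print(maximum_fine("prohibited_practice", 1_000_000_000))  # 70000000
```

For the first two tiers, the percentage becomes the higher figure once global turnover passes 500 million euros; for the incorrect-information tier, the crossover is 750 million euros.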

What is the Brussels Effect?

The Brussels Effect describes the tendency for EU regulations to become de facto global standards, because international businesses find it more practical to apply the strictest standard uniformly. The EU AI Act is expected to follow this pattern, as the GDPR did with data protection.